Running a local OpenShift cluster used to mean wrestling with Minishift and oc cluster up. Those methods are long gone. Today, CodeReady Containers (CRC), since renamed Red Hat OpenShift Local, is the official tool for running a single-node OpenShift cluster on your local machine; the command-line tool is still called crc. It gives you a fully functional OpenShift environment for development and testing without needing a multi-node cluster or cloud subscription. This guide covers setting up CRC on RHEL 10 and Fedora, from download to deploying your first application.

What Are OKD and CRC

OKD (formerly OpenShift Origin) is the upstream community distribution of Kubernetes that powers Red Hat OpenShift. It adds a web console, integrated CI/CD, developer workflows, and an opinionated security model on top of standard Kubernetes.

CRC (CodeReady Containers) packages a minimal OpenShift cluster into a single virtual machine that runs on your workstation. It is designed for developers who need a local OpenShift environment without provisioning real infrastructure. CRC manages the VM lifecycle, networking, and DNS so you can focus on building applications.

System Requirements

CRC is resource-intensive because it runs a full OpenShift control plane. Your machine needs:

- RAM: 9 GB free (14 GB+ total recommended so your host OS remains responsive)

- CPU: 4 physical CPU cores

- Disk: 35 GB free space

- OS: RHEL 10, Fedora (latest two releases), or other supported Linux distributions

- Virtualization: Hardware virtualization (Intel VT-x / AMD-V) enabled in BIOS

- User: Non-root user with sudo access

Verify hardware virtualization is enabled:

grep -E 'vmx|svm' /proc/cpuinfo | head -1

If this returns output containing “vmx” (Intel) or “svm” (AMD), virtualization is supported and enabled.
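The same check can be wrapped in a small preflight script together with a memory check against the 9 GB figure from the requirements list. This is only a rough sketch: the function names has_hw_virt, mem_available_gib, and preflight are invented for this example, and the optional file arguments exist so the helpers can be pointed at a copy of /proc/cpuinfo or /proc/meminfo. The CPU flag only shows what the processor advertises, so treat a pass as a hint rather than a guarantee.

```shell
# Hypothetical preflight helpers; names and the 9 GiB threshold are illustrative.

# Succeeds if the cpuinfo file lists the vmx (Intel) or svm (AMD) flag as a whole word.
has_hw_virt() {
    local cpuinfo="${1:-/proc/cpuinfo}"
    grep -Eqw 'vmx|svm' "$cpuinfo"
}

# Prints available memory in whole GiB (rounded down) from a meminfo-style file.
mem_available_gib() {
    local meminfo="${1:-/proc/meminfo}"
    awk '/^MemAvailable:/ { print int($2 / 1024 / 1024) }' "$meminfo"
}

# Prints one verdict line per check. Both arguments are optional file paths.
preflight() {
    local gib
    if has_hw_virt "$1"; then
        echo "virtualization: ok"
    else
        echo "virtualization: missing"
    fi
    gib=$(mem_available_gib "$2")
    if [ "${gib:-0}" -ge 9 ]; then
        echo "memory: ok"
    else
        echo "memory: low"
    fi
}
```

Running preflight with no arguments reads the live /proc files on the current host.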

Download CRC

Download CRC from the Red Hat console. You need a free Red Hat account (the same one used for Red Hat Developer subscriptions).

Visit https://console.redhat.com/openshift/create/local and download the CRC archive for Linux. You will also see a “Pull Secret” on the same page – copy it and save it to a file, as you will need it during setup.

Extract the archive and move the binary to your PATH:

tar xvf crc-linux-amd64.tar.xz

sudo cp crc-linux-*-amd64/crc /usr/local/bin/

sudo chmod +x /usr/local/bin/crc

Verify the binary works:

crc version

Run crc setup

The setup command prepares your host by configuring the hypervisor (libvirt), networking, and DNS. Run it as your regular user (not root):

crc setup

This process takes several minutes. It installs the CRC libvirt driver, configures NetworkManager for the CRC DNS domain, and downloads the OpenShift VM image. You may be prompted for your sudo password during the process.

On Fedora, if libvirt is not installed, install it first:

sudo dnf install -y libvirt libvirt-daemon-kvm qemu-kvm

sudo systemctl enable --now libvirtd

Verify setup completed without errors. The last line should indicate success.

Start the OpenShift Cluster

Optionally configure resource allocation before starting. The defaults are 9 GB RAM and 4 CPUs, but you can increase them:

crc config set memory 12288

crc config set cpus 6

Start the cluster:

crc start

On first start, CRC prompts for the pull secret you downloaded earlier. Paste it when asked. The VM boots, the OpenShift cluster initializes, and CRC configures the local kubeconfig. This takes 5-10 minutes depending on your hardware.
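For unattended starts, the pull secret can be supplied from a file instead of pasted at the prompt. The sketch below assumes the secret was saved as the JSON document offered by the Red Hat console, which carries a top-level "auths" key, and does a cheap sanity check before handing the file to crc start. The helper names and the path ~/pull-secret.txt are placeholders chosen for this example.

```shell
# Hypothetical helpers; the check is a quick sanity test, not full JSON validation.

# Succeeds if the file is non-empty and mentions the "auths" key.
pull_secret_looks_valid() {
    local file="$1"
    [ -s "$file" ] && grep -q '"auths"' "$file"
}

# Starts the cluster with the given pull secret file, or refuses if it looks wrong.
start_with_pull_secret() {
    local file="$1"
    if ! pull_secret_looks_valid "$file"; then
        echo "pull secret at $file is missing or malformed" >&2
        return 1
    fi
    crc start --pull-secret-file "$file"
}
```

Calling start_with_pull_secret ~/pull-secret.txt runs crc start only when the check passes, so a wrong path fails before the VM boots.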

When complete, CRC prints the web console URL and login credentials. Save these. The output looks similar to:

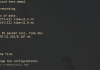

Started the OpenShift cluster.

The server is accessible via web console at:

https://console-openshift-console.apps-crc.testing

Log in as administrator:

Username: kubeadmin

Password: xxxxx-xxxxx-xxxxx-xxxxx

Log in as user:

Username: developer

Password: developer

Access the Web Console

Open the web console URL in your browser. You may see a certificate warning since CRC uses a self-signed certificate – accept it to proceed.

Log in as kubeadmin for administrative access or developer for a regular user perspective. The kubeadmin account has cluster-admin privileges and can manage nodes, operators, and cluster settings. The developer account is scoped to project-level access.

Verify the console loads and shows the cluster dashboard with node status, resource usage, and running pods.

Login with the oc CLI

CRC includes the oc CLI tool. Set up your shell environment:

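eval $(crc oc-env)

Log in as the developer user:

oc login -u developer -p developer https://api.crc.testing:6443

Verify access by listing projects:

oc get projects

Check the cluster nodes. The developer account cannot list nodes, so run this command while logged in as kubeadmin (use the password that crc start printed):

oc get nodes

You should see a single node with “Ready” status.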
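The Ready check can also be scripted rather than read by eye. A minimal sketch, assuming the usual column layout of oc get nodes where the second column is the node status; the function name all_nodes_ready is made up for this example.

```shell
# Hypothetical helper: reads `oc get nodes --no-headers` output on stdin.
# Succeeds only if there is at least one node and every node's STATUS is exactly "Ready".
all_nodes_ready() {
    awk '$2 != "Ready" { bad = 1 } END { exit ((NR == 0 || bad) ? 1 : 0) }'
}
```

A typical call would be oc get nodes --no-headers | all_nodes_ready && echo "cluster is ready". A status such as NotReady or Ready,SchedulingDisabled counts as a failure here, which is a deliberately strict choice.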

Deploy Your First Application

Create a new project and deploy a sample application to verify the cluster works end to end:

oc new-project demo

oc new-app --name=hello httpd~https://github.com/sclorg/httpd-ex.git

This triggers an S2I (source-to-image) build that clones the repository, builds a container image, and deploys it. Watch the build progress:

oc logs -f buildconfig/hello

Once the build completes, check the pod is running:

oc get pods

Expose the application with a route:

oc expose service/hello

Get the route URL:

oc get route hello

Open the URL in your browser. You should see the sample application's welcome page, confirming the full pipeline works – source code to running application reachable through a route. The route resolves only on your workstation, since CRC manages DNS for the cluster locally.
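The browser check can be approximated from the terminal. This is a minimal sketch, assuming the route created by oc expose is a plain HTTP route (the default for oc expose service) and that curl is installed; route_url and check_route are names invented for this example.

```shell
# Hypothetical helpers for checking a route from the command line.

# Prints the plain-HTTP URL for a route host name.
route_url() {
    printf 'http://%s\n' "$1"
}

# Looks up the host of the named route and prints the HTTP status code it returns.
check_route() {
    local name="$1" host
    host=$(oc get route "$name" -o jsonpath='{.spec.host}') || return 1
    curl -sS -o /dev/null -w '%{http_code}\n' "$(route_url "$host")"
}
```

With the project from this section, check_route hello would print the status code returned by the application; a 200 would indicate that it is responding.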

Managing the CRC Cluster

Common lifecycle commands:

# Check cluster status

crc status

# Stop the cluster (preserves state)

crc stop

# Start it again

crc start

# Delete the cluster entirely (destroys all data)

crc delete

# View console URL and credentials

crc console --credentials

Wrapping Up

CRC replaces the old Minishift and oc-cluster-up workflows with a supported, maintained tool for running OpenShift locally. It demands significant resources – 9 GB RAM minimum is not negotiable – but delivers a real OpenShift environment with the web console, S2I builds, routes, and operators. For development and learning, it is the fastest path to a working OpenShift cluster without cloud infrastructure.
