Zimbra is an email and collaboration platform used by organizations worldwide. Running it in production without proper monitoring is asking for trouble – mail queues can silently back up, disk space fills without warning, and services crash at the worst possible time. A monitoring stack built on Grafana, InfluxDB, and Telegraf gives you real-time visibility into every critical Zimbra metric.

This guide walks through setting up a complete monitoring solution for your Zimbra server. We cover installing InfluxDB 2.8 as the time-series database, Telegraf 1.38 as the metrics collector with custom Zimbra inputs, and Grafana 12.4 for dashboards and alerting. The setup works on Ubuntu 24.04 / 22.04 and Debian 12 systems running Zimbra.

Prerequisites

- A running Zimbra mail server (version 8.8.15 or 10.x)

- A separate monitoring server running Ubuntu 24.04 / 22.04 or Debian 12 (recommended – keeps monitoring independent from the mail server)

- Root or sudo access on both servers

- Minimum 2 GB RAM and 20 GB free disk on the monitoring server

- Network access to the monitoring server: port 8086 (InfluxDB) reachable from the Zimbra server, and port 3000 (Grafana) reachable from the machines you will view dashboards on

Step 1: Install InfluxDB on the Monitoring Server

InfluxDB is the time-series database that stores all the metrics Telegraf collects from your Zimbra server. We use InfluxDB 2.x which includes a built-in web UI and token-based authentication.

Add the InfluxData repository and install InfluxDB:

curl -fsSL https://repos.influxdata.com/influxdata-archive_compat.key | sudo gpg --dearmor -o /usr/share/keyrings/influxdata-archive-keyring.gpg

echo "deb [signed-by=/usr/share/keyrings/influxdata-archive-keyring.gpg] https://repos.influxdata.com/debian stable main" | sudo tee /etc/apt/sources.list.d/influxdata.list

sudo apt update && sudo apt install -y influxdb2

Enable and start the InfluxDB service:

sudo systemctl enable --now influxdb

sudo systemctl status influxdb

The service should show active (running):

● influxdb.service - InfluxDB is an open-source, distributed, time series database

Loaded: loaded (/lib/systemd/system/influxdb.service; enabled; preset: enabled)

Active: active (running) since Sat 2026-03-22 10:15:32 UTC; 5s ago

Main PID: 12345 (influxd)

Memory: 64.0M

CPU: 1.200s

CGroup: /system.slice/influxdb.service

└─12345 /usr/bin/influxd

Run the initial setup to create an organization, bucket, and admin token. Replace the password with something strong:

influx setup --username admin --password StrongPassword123 --org myorg --bucket zimbra --retention 30d --force

The setup creates a bucket named zimbra with 30-day retention. Save the API token from the output – you need it for Telegraf configuration.

Retrieve the token if you missed it:

influx auth list

Copy the token value from the Token column. You will use this in the Telegraf configuration.
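Before moving on, it can help to confirm that InfluxDB is answering requests. The sketch below is one way to do that, assuming `curl` is available on the monitoring server. `health_status` is a hypothetical helper name (not part of InfluxDB); it pulls the `status` value out of the JSON returned by the InfluxDB 2.x `/health` endpoint, which reports `pass` when the instance is healthy. The sample body is illustrative, not captured output.

```shell
# Hypothetical helper: extract the "status" value from an InfluxDB /health
# JSON response read on stdin.
health_status() {
  sed -n 's/.*"status"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' | head -n 1
}

# Demonstration with an illustrative response body.
sample='{"name":"influxdb","message":"ready for queries and writes","status":"pass"}'
printf '%s\n' "$sample" | health_status
```

On the monitoring server, the same helper can be fed from the live endpoint with `curl -fsS http://localhost:8086/health | health_status`.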

Step 2: Install Telegraf on the Zimbra Server

Telegraf runs on the Zimbra server itself, collecting metrics locally and shipping them to InfluxDB. If you have already installed Telegraf on Ubuntu / Debian before, the process is the same.

Add the InfluxData repository on the Zimbra server and install Telegraf:

curl -fsSL https://repos.influxdata.com/influxdata-archive_compat.key | sudo gpg --dearmor -o /usr/share/keyrings/influxdata-archive-keyring.gpg

echo "deb [signed-by=/usr/share/keyrings/influxdata-archive-keyring.gpg] https://repos.influxdata.com/debian stable main" | sudo tee /etc/apt/sources.list.d/influxdata.list

sudo apt update && sudo apt install -y telegraf

Verify the installation:

telegraf --version

You should see the installed version confirmed:

Telegraf 1.38.1 (git: HEAD@abcdef12)

Step 3: Configure Telegraf Inputs for Zimbra Monitoring

The power of this setup is in the custom Telegraf inputs that pull Zimbra-specific metrics. We use the exec input plugin to run Zimbra commands and parse their output into time-series data.
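With `data_format = "influx"`, the exec plugin expects each script to print InfluxDB line protocol: a measurement name, optional comma-separated tags, a space, then one or more fields. As a rough sketch of that format, the hypothetical helpers below (`lp_escape_tag` and `lp_point` are illustrative names, not part of Telegraf) build one integer-valued point and escape the characters that are special inside tag values.

```shell
# Hypothetical helper: escape commas, equals signs, and spaces, which must be
# backslash-escaped inside line-protocol tag values.
lp_escape_tag() {
  printf '%s' "$1" | sed -e 's/[,= ]/\\&/g'
}

# Hypothetical helper: print one point of the form
#   measurement,tag_key=tag_value field=<n>i
# The trailing "i" marks the field value as an integer.
lp_point() {
  printf '%s,%s=%s %s=%si\n' "$1" "$2" "$(lp_escape_tag "$3")" "$4" "$5"
}

lp_point zimbra_services service "zimbra webapp" status 1
```

Escaping matters for Zimbra because some service names reported by zmcontrol contain a space; an unescaped space would end the tag set early and make the line invalid.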

Create a dedicated Telegraf configuration file for Zimbra inputs:

sudo vi /etc/telegraf/telegraf.d/zimbra.conf

Add the following configuration to collect Zimbra service status, mail queue depth, and mailbox store sizes:

# Zimbra service status - tracks running/stopped state of each service

[[inputs.exec]]

commands = ["/usr/local/bin/zimbra_services.sh"]

timeout = "30s"

data_format = "influx"

name_override = "zimbra_services"

interval = "60s"

# Zimbra mail queue - monitors messages waiting for delivery

[[inputs.exec]]

commands = ["/usr/local/bin/zimbra_mailq.sh"]

timeout = "30s"

data_format = "influx"

name_override = "zimbra_mailq"

interval = "60s"

# Zimbra mailbox store sizes - tracks per-account disk usage

[[inputs.exec]]

commands = ["/usr/local/bin/zimbra_mailbox_sizes.sh"]

timeout = "60s"

data_format = "influx"

name_override = "zimbra_mailbox"

interval = "300s"

Now create the helper scripts that Telegraf calls. First, the service status script:

sudo vi /usr/local/bin/zimbra_services.sh

Add the following script that parses zmcontrol status output into InfluxDB line protocol:

#!/bin/bash

# Collect Zimbra service status as InfluxDB line protocol

su - zimbra -c "zmcontrol status" 2>/dev/null | grep -v "^Host" | while read -r line; do

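# The status is the last field; everything before it is the service name.

# Multi-word names such as "zimbra webapp" are joined with underscores.

status=$(echo "$line" | awk '{print $NF}')

service=$(echo "$line" | awk '{for (i = 1; i < NF; i++) printf "%s%s", (i > 1 ? "_" : ""), $i}')

# Skip detail lines that are not a service status (e.g. "postfix is not running")

case "$status" in

Running) value=1 ;;

Stopped) value=0 ;;

*) continue ;;

esac

if [ -n "$service" ]; then

echo "zimbra_services,service=$service status=${value}i"

fi

done

Next, create the mail queue monitoring script:

sudo vi /usr/local/bin/zimbra_mailq.sh

Add the following to count messages in the Postfix mail queue: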

#!/bin/bash

# Count messages in Zimbra/Postfix mail queue

# Postfix prints "Request" (singular) when exactly one message is queued, so match both forms

queue_count=$(su - zimbra -c "mailq" 2>/dev/null | tail -1 | grep -oP '\d+(?= Requests?)')

if [ -z "$queue_count" ]; then

queue_count=0

fi

echo "zimbra_mailq queue_size=${queue_count}i"

Create the mailbox size monitoring script:

sudo vi /usr/local/bin/zimbra_mailbox_sizes.sh

Add the script that collects per-account mailbox sizes:

#!/bin/bash

# Collect Zimbra mailbox sizes in bytes

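# zmprov gqu prints one line per account: <account> <quota limit> <bytes used>

su - zimbra -c "zmprov gqu $(hostname -f)" 2>/dev/null | while read -r account limit used; do

# Emit only well-formed rows with numeric values

if [ -n "$account" ] && [[ "$limit" =~ ^[0-9]+$ ]] && [[ "$used" =~ ^[0-9]+$ ]]; then

echo "zimbra_mailbox,account=$account used=${used}i,limit=${limit}i"

fi

done

Make all scripts executable: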

sudo chmod +x /usr/local/bin/zimbra_services.sh

sudo chmod +x /usr/local/bin/zimbra_mailq.sh

sudo chmod +x /usr/local/bin/zimbra_mailbox_sizes.sh

The Telegraf user needs permission to run commands as the zimbra user. Add a sudoers entry:

echo "telegraf ALL=(zimbra) NOPASSWD: ALL" | sudo tee /etc/sudoers.d/telegraf

sudo chmod 440 /etc/sudoers.d/telegraf

Telegraf runs as its own unprivileged user, which cannot use su, so update all three scripts to call sudo -u zimbra instead of su - zimbra -c. Because sudo does not start a login shell for the zimbra user, give the full path to each Zimbra binary. For example, in zimbra_services.sh:

sudo -u zimbra /opt/zimbra/bin/zmcontrol status 2>/dev/null | grep -v "^Host"

Step 4: Configure Telegraf System Metrics and Output

Besides Zimbra-specific metrics, Telegraf should collect standard system metrics – CPU, memory, disk, and network. These are essential for correlating Zimbra performance with server resource usage. If you want a deeper dive, check the guide on monitoring Linux systems with Grafana and Telegraf.

Edit the main Telegraf configuration:

sudo vi /etc/telegraf/telegraf.conf

Configure the InfluxDB v2 output to send metrics to your monitoring server. Replace MONITORING_SERVER_IP with the actual IP address and YOUR_TOKEN with the InfluxDB API token from Step 1:

[agent]

interval = "30s"

round_interval = true

metric_batch_size = 1000

metric_buffer_limit = 10000

flush_interval = "10s"

hostname = ""

omit_hostname = false

[[outputs.influxdb_v2]]

urls = ["http://MONITORING_SERVER_IP:8086"]

token = "YOUR_TOKEN"

organization = "myorg"

bucket = "zimbra"

# Standard system metrics

[[inputs.cpu]]

percpu = true

totalcpu = true

collect_cpu_time = false

[[inputs.mem]]

[[inputs.disk]]

ignore_fs = ["tmpfs", "devtmpfs", "devfs", "iso9660", "overlay", "aufs", "squashfs"]

[[inputs.diskio]]

[[inputs.net]]

[[inputs.system]]

[[inputs.processes]]

# Monitor Zimbra-specific ports

[[inputs.net_response]]

protocol = "tcp"

address = "localhost:25"

timeout = "5s"

[inputs.net_response.tags]

service = "smtp"

[[inputs.net_response]]

protocol = "tcp"

address = "localhost:143"

timeout = "5s"

[inputs.net_response.tags]

service = "imap"

[[inputs.net_response]]

protocol = "tcp"

address = "localhost:443"

timeout = "5s"

[inputs.net_response.tags]

service = "https"

[[inputs.net_response]]

protocol = "tcp"

address = "localhost:7071"

timeout = "5s"

[inputs.net_response.tags]

service = "admin_console"

The net_response inputs check that Zimbra’s critical ports (SMTP, IMAP, HTTPS, admin console) are responding. A failure here triggers immediate visibility in Grafana.
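To see roughly what these checks do, you can probe the same ports by hand on the Zimbra server. The sketch below is not how Telegraf implements net_response; it is a manual equivalent that assumes bash and the coreutils `timeout` command are installed, and `port_open` is a hypothetical helper name.

```shell
# Hypothetical helper: succeed if a TCP connection to host:port opens within
# 5 seconds. Relies on bash's built-in /dev/tcp redirection.
port_open() {
  timeout 5 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# Probe the same ports as the net_response inputs.
for entry in smtp:25 imap:143 https:443 admin_console:7071; do
  name=${entry%%:*}
  port=${entry##*:}
  if port_open localhost "$port"; then
    echo "$name ($port): up"
  else
    echo "$name ($port): down"
  fi
done
```

A port reported as down here should also show a non-zero result_code in the net_response measurement once Telegraf is running.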

Restart Telegraf and verify it is running:

sudo systemctl restart telegraf

sudo systemctl status telegraf

Check the Telegraf logs for any connection errors:

sudo journalctl -u telegraf --no-pager -n 20

Look for lines confirming successful output to InfluxDB. If you see connection refused errors, verify that port 8086 is open on the monitoring server firewall.
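Telegraf tags each log line with a level marker: `I!` for information, `W!` for warnings, and `E!` for errors. When the log is noisy, a small filter can surface only the problems. The sketch below uses a hypothetical helper name, `telegraf_problems`, and placeholder log lines (the messages are stand-ins, not real Telegraf output).

```shell
# Hypothetical helper: keep only Telegraf warning (W!) and error (E!) lines
# from stdin. "|| true" avoids a failing exit status when nothing matches.
telegraf_problems() {
  grep -E ' [EW]! ' || true
}

# Placeholder lines that only illustrate the level markers.
cat <<'EOF' | telegraf_problems
2026-03-22T10:20:00Z I! <informational message>
2026-03-22T10:20:10Z E! [outputs.influxdb_v2] <error message>
2026-03-22T10:20:30Z W! [inputs.exec] <warning message>
EOF
```

On the Zimbra server you could pipe the journal through it, for example `sudo journalctl -u telegraf --no-pager -n 200 | telegraf_problems`.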

Step 5: Install Grafana on the Monitoring Server

Grafana provides the visualization layer. Install it on the same server running InfluxDB.

Add the Grafana APT repository:

sudo apt install -y apt-transport-https software-properties-common

curl -fsSL https://apt.grafana.com/gpg.key | sudo gpg --dearmor -o /usr/share/keyrings/grafana-archive-keyring.gpg

echo "deb [signed-by=/usr/share/keyrings/grafana-archive-keyring.gpg] https://apt.grafana.com stable main" | sudo tee /etc/apt/sources.list.d/grafana.list

sudo apt update && sudo apt install -y grafana

Enable and start Grafana:

sudo systemctl enable --now grafana-server

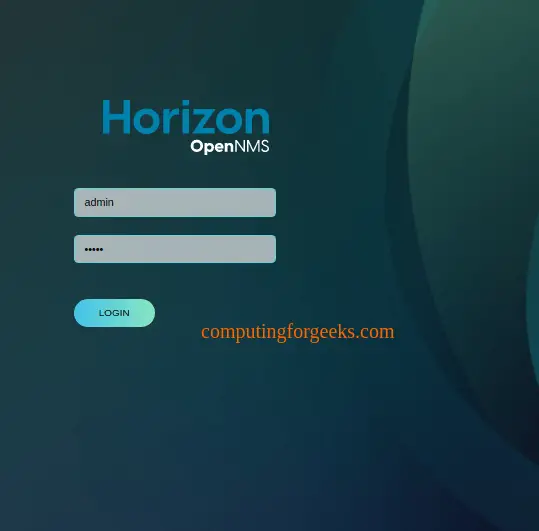

sudo systemctl status grafana-server

Grafana should now be accessible at http://MONITORING_SERVER_IP:3000. The default login is admin / admin – change this immediately on first login.

Step 6: Add InfluxDB Data Source in Grafana

Connect Grafana to InfluxDB so it can query your Zimbra metrics.

- Open Grafana at http://MONITORING_SERVER_IP:3000

- Navigate to Connections > Data sources > Add data source

- Select InfluxDB

- Configure the following settings:

| Setting | Value |
|---|---|
| Query Language | Flux |
| URL | http://localhost:8086 |
| Organization | myorg |
| Token | Your InfluxDB API token from Step 1 |
| Default Bucket | zimbra |

Click Save & test. You should see a green confirmation message that the data source is working. If it fails, verify InfluxDB is running and the token is correct.

Step 7: Create a Zimbra Monitoring Dashboard

Build a dashboard that gives you a single-pane view of your Zimbra server health. Go to Dashboards > New dashboard > Add visualization.

Zimbra Service Status Panel

Create a stat panel showing whether each Zimbra service is running. Use this Flux query:

from(bucket: "zimbra")

|> range(start: v.timeRangeStart, stop: v.timeRangeStop)

|> filter(fn: (r) => r._measurement == "zimbra_services")

|> filter(fn: (r) => r._field == "status")

|> last()

|> group(columns: ["service"])

Set the panel type to Stat. Under value mappings, map 1 to “Running” (green) and 0 to “Stopped” (red). This gives you instant visibility into service health.

Mail Queue Depth Panel

A time-series graph of mail queue size helps you spot delivery problems before they escalate:

from(bucket: "zimbra")

|> range(start: v.timeRangeStart, stop: v.timeRangeStop)

|> filter(fn: (r) => r._measurement == "zimbra_mailq")

|> filter(fn: (r) => r._field == "queue_size")

|> aggregateWindow(every: v.windowPeriod, fn: mean)

Set panel type to Time series. Add a threshold at 50 messages (yellow) and 200 messages (red) to highlight abnormal queue buildup.

Disk Usage Panel

Zimbra stores email data in /opt/zimbra. Monitor disk usage to prevent full-disk outages:

from(bucket: "zimbra")

|> range(start: v.timeRangeStart, stop: v.timeRangeStop)

|> filter(fn: (r) => r._measurement == "disk")

|> filter(fn: (r) => r._field == "used_percent")

|> filter(fn: (r) => r.path == "/" or r.path == "/opt/zimbra")

|> aggregateWindow(every: v.windowPeriod, fn: mean)

Use a gauge panel with thresholds at 80% (yellow) and 90% (red).
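To sanity-check what the gauge should show, you can apply the same thresholds to the output of `df` on the server. This is only a sketch: `disk_level` is a hypothetical helper, and the example reads `/` so that it runs on any machine; on the Zimbra server you would pass /opt/zimbra instead.

```shell
# Hypothetical helper: map a whole-number usage percentage to the gauge
# colour, using the 80% and 90% thresholds from the panel.
disk_level() {
  if [ "$1" -ge 90 ]; then
    echo red
  elif [ "$1" -ge 80 ]; then
    echo yellow
  else
    echo green
  fi
}

# Read the usage percentage of a mount point from POSIX-format df output.
pct=$(df -P / | awk 'NR == 2 { sub(/%/, "", $5); print $5 }')
echo "/ is ${pct:-0}% full: $(disk_level "${pct:-0}")"
```

If the colour printed here disagrees with the Grafana gauge, check that the panel query is filtering on the mount point you expect.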

Port Response Panel

Track whether SMTP, IMAP, HTTPS, and admin console ports are responding:

from(bucket: "zimbra")

|> range(start: v.timeRangeStart, stop: v.timeRangeStop)

|> filter(fn: (r) => r._measurement == "net_response")

|> filter(fn: (r) => r._field == "result_code")

|> last()

|> group(columns: ["service"])

Use a stat panel with value mappings: 0 = “Up” (green), anything else = “Down” (red). This catches network-level failures even when the Zimbra process appears running.

System Resource Panels

Add standard panels for CPU usage, memory usage, and network throughput using the built-in cpu, mem, and net measurements. These help correlate Zimbra issues with resource constraints – for example, high CPU during spam storms or memory pressure from too many concurrent IMAP connections.
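The queries for these panels follow the same shape as the ones in the earlier panels. As a sketch, the hypothetical shell function below (`flux_panel_query` is an illustrative name) prints a Flux query for a given measurement and field that you can paste into a panel. The field names in the examples, `used_percent` for mem and `usage_user` for cpu, are standard Telegraf fields, but it is worth confirming what your own bucket contains.

```shell
# Hypothetical helper: print a Flux time-series query for one measurement
# and field, following the pattern of the other panels in this guide.
flux_panel_query() {
  cat <<EOF
from(bucket: "zimbra")
  |> range(start: v.timeRangeStart, stop: v.timeRangeStop)
  |> filter(fn: (r) => r._measurement == "$1")
  |> filter(fn: (r) => r._field == "$2")
  |> aggregateWindow(every: v.windowPeriod, fn: mean)
EOF
}

flux_panel_query mem used_percent
flux_panel_query cpu usage_user
```

Network counters such as bytes_recv in the net measurement are cumulative, so a throughput panel would likely need a rate calculation (for example Flux's derivative function) on top of this basic query.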

Step 8: Configure Alerts for Zimbra

Dashboards are useless if nobody is watching them. Set up alerts so you get notified the moment something goes wrong.

Configure Email Notifications

Go to Alerting > Contact points > Add contact point. Select Email and add the addresses that should receive alerts. Grafana uses the SMTP settings from its configuration file:

sudo vi /etc/grafana/grafana.ini

Find the [smtp] section and configure it with your mail relay:

[smtp]

enabled = true

host = localhost:25

user =

password =

from_address = [email protected]

from_name = Grafana Alerts

Restart Grafana after changing SMTP settings:

sudo systemctl restart grafana-server

Configure Slack Notifications

For Slack alerts, create an incoming webhook in your Slack workspace. Then add a Slack contact point in Grafana under Alerting > Contact points > Add contact point. Select Slack and paste your webhook URL.
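It is worth testing the webhook from the command line before relying on it for alerts. Slack incoming webhooks accept a JSON body with a `text` field. The sketch below builds such a body; `slack_payload` is a hypothetical helper, and it only escapes backslashes and double quotes, so it assumes a single-line message.

```shell
# Hypothetical helper: build a Slack incoming-webhook JSON body with a "text"
# field, escaping backslashes and double quotes in the message.
slack_payload() {
  escaped=$(printf '%s' "$1" | sed -e 's/\\/\\\\/g' -e 's/"/\\"/g')
  printf '{"text":"%s"}\n' "$escaped"
}

slack_payload 'Test alert: Zimbra mail queue above "100" messages'
```

To send it, post the payload with curl, for example `curl -X POST -H 'Content-Type: application/json' --data "$(slack_payload 'Test alert from Grafana setup')" "$SLACK_WEBHOOK_URL"`, where SLACK_WEBHOOK_URL is an assumed variable holding your webhook URL.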

Create Alert Rules

Navigate to Alerting > Alert rules > New alert rule. Create rules for the critical Zimbra conditions:

| Alert Name | Condition | Severity |
|---|---|---|
| Zimbra Service Down | zimbra_services status = 0 for 2 minutes | Critical |
| Mail Queue High | zimbra_mailq queue_size > 100 for 5 minutes | Warning |
| Disk Usage Critical | disk used_percent > 90% for 5 minutes | Critical |
| Port Unresponsive | net_response result_code != 0 for 2 minutes | Critical |
| High Memory Usage | mem used_percent > 90% for 5 minutes | Warning |

For each rule, set the evaluation interval to 1 minute and the pending period to match the “for” duration in the table. Assign the appropriate contact point (email, Slack, or both).
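The pending period is what keeps a single spike from paging anyone: the condition has to hold across consecutive evaluations before the alert fires. The sketch below is a simplified model of that idea, not Grafana's actual implementation. `pending_check` is a hypothetical helper that reads one sample per evaluation from stdin and reports whether the most recent N samples all exceeded the threshold.

```shell
# Hypothetical helper: print "firing" if the last N samples on stdin all
# exceed the threshold, otherwise "normal".
#   $1 = threshold, $2 = number of consecutive evaluations required
pending_check() {
  awk -v t="$1" -v n="$2" '
    { if ($1 > t) streak++; else streak = 0 }
    END { print (streak >= n ? "firing" : "normal") }'
}

# Queue sizes sampled once per minute; the dip to 90 resets the streak, and
# the five samples after it are all above 100.
printf '%s\n' 120 130 90 110 140 150 160 170 | pending_check 100 5
```

With a 1-minute evaluation interval, N = 5 corresponds to the 5-minute "for" duration of the Mail Queue High rule.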

Step 9: Configure Firewall Rules

Open the required ports on both servers. For detailed Zimbra firewall configuration, see the guide on Zimbra firewall configuration with UFW and Firewalld.

On the monitoring server, open ports for InfluxDB and Grafana:

sudo ufw allow 8086/tcp comment "InfluxDB"

sudo ufw allow 3000/tcp comment "Grafana"

sudo ufw reload

For better security, skip the open 8086 rule above and allow InfluxDB traffic only from the Zimbra server IP instead:

sudo ufw allow from ZIMBRA_SERVER_IP to any port 8086 proto tcp comment "InfluxDB from Zimbra"

If the monitoring server uses firewalld instead of UFW:

sudo firewall-cmd --permanent --add-port=8086/tcp

sudo firewall-cmd --permanent --add-port=3000/tcp

sudo firewall-cmd --reload

Verify the firewall rules are active:

sudo ufw status verbose

The output should list both ports as ALLOW:

To Action From

-- ------ ----

8086/tcp ALLOW Anywhere # InfluxDB

3000/tcp ALLOW Anywhere # Grafana

Conclusion

You now have a complete monitoring stack for your Zimbra mail server – InfluxDB stores time-series metrics, Telegraf collects both system and Zimbra-specific data, and Grafana gives you dashboards with real-time alerting. This setup catches problems like backed-up mail queues, failed services, and disk pressure before they impact users.

For production hardening, put Grafana behind a reverse proxy with TLS, restrict InfluxDB access to known IPs only, and set up backup retention policies in InfluxDB to prevent unbounded storage growth. Consider adding Grafana and InfluxDB monitoring for multiple servers if you run a Zimbra multi-server deployment.