Every article in this series has delivered one piece: the DNSSEC-signed delegated zone, the wildcard cert, the shared LB with a cert map, the Gateway API migration, the regional alternative, the Private CA for financial services, the SPKI-pinned client, the cert inventory module with CI guardrails. This article is where they run together as a single system: a multi-service demo app on the shared infrastructure, with a live cert rotation that produces zero non-2xx responses while traffic is in flight. The capstone.

This article is the integration point for github.com/cfg-labs/gcp-shared-traffic-demo (published separately). Every Terraform module from earlier articles is consumed here. The rotation demo is reproducible via make demo-rotate.

What the Demo App Is

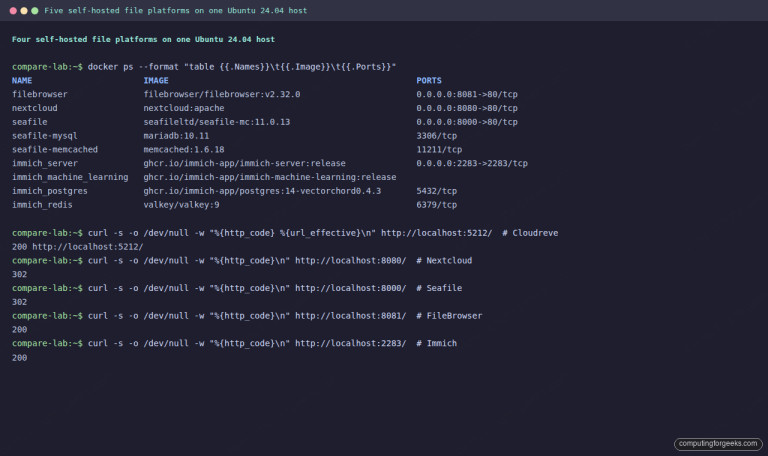

A five-component food-ordering app, each component picked to exercise a different facet of the consolidation pattern:

- food-web: React frontend on Cloud Run at food.cfg-lab.computingforgeeks.com. Exercises the shared GXLB + cert map via a serverless NEG.
- food-api: Node backend on GKE with Gateway API at api.cfg-lab.computingforgeeks.com and api.food.cfg-lab.computingforgeeks.com. Exercises the sub-subdomain cert-map entry and the Gateway API migration.
- food-admin: Admin dashboard on GKE with IAP at admin.cfg-lab.computingforgeeks.com. Exercises IAP at the LB edge (global-only feature).
- food-pay: Mock payment service at pay.cfg-lab.computingforgeeks.com. Exercises the Private CA dedicated LB and the SPKI-pinned Python client.
- food-regional: Regional-LB variant at food.cfg-regional.computingforgeeks.com. Exercises the regional ALB with a regional cert.

Everything else (DNSSEC, CAA, Certificate Manager, cert maps, HSTS, DNS authorization) is infrastructure the app developer never sees.

Repo Layout

Published demo repo under github.com/cfg-labs/gcp-shared-traffic-demo:

```text
gcp-shared-traffic-demo/
├── infra/
│   ├── modules/                    # Reusable Terraform modules
│   │   ├── dns-delegated-zone/
│   │   ├── certificate-manager/
│   │   ├── gxlb/
│   │   ├── private-ca/
│   │   ├── service-onboarding/
│   │   └── cert-monitoring/
│   └── live/article-lab/europe-west1/
│       ├── dns-cfg-lab/            # Article 2: DNSSEC + CAA delegated zone
│       ├── dns-cfg-regional/       # Article 2: second zone for regional demo
│       ├── certs-cfg-lab/          # Article 3: global wildcard cert
│       ├── certs-cfg-regional/     # Article 6: regional wildcard cert
│       ├── gxlb-cfg-lab/           # Article 4: shared LB + cert map
│       └── private-ca-cfg-lab/     # Article 7: DevOps-tier CA pool + root
├── apps/
│   ├── article-01/                 # Sprawl pattern manifests (pre-consolidation)
│   └── article-05/cfg-demo/        # Gateway + HTTPRoute + Deployments
├── argocd/
│   ├── bootstrap/                  # App-of-apps root
│   └── apps/                       # Per-service ArgoCD Applications
├── clients/
│   └── python-pinned/              # SPKI-pinned Python client (Article 8)
├── policy/
│   └── no-per-service-certs.rego   # Conftest guardrail (Article 9)
├── runbooks/                       # 4 runbooks + post-mortem template
└── scripts/
    ├── session-up.sh               # Provisions everything
    ├── session-down.sh             # Tears everything down
    ├── audit-certs.sh              # Cross-project cert inventory
    ├── demo-rotate.sh              # The zero-incident rotation demo
    ├── traffic-gen.go              # Per-host RPS probe (JSONL output)
    └── analyze-rotation.py         # Post-run PASS/FAIL summary
```

Every stack lines up with an earlier article. dns-cfg-lab/ is Article 2's state. certs-cfg-lab/ is Article 3. gxlb-cfg-lab/ is Article 4. private-ca-cfg-lab/ is Article 7. The capstone is the integration, not net-new code.

Bringing Everything Up

Two commands:

```bash
./scripts/session-up.sh
make argocd
```

The first applies every Terragrunt stack in dependency order: DNS first, then certs (which depend on DNS), then GKE (which needs certs via annotation), then the shared gxlb, then the private CA, then monitoring. Total apply time on a cold session: 12-15 minutes, dominated by GKE Autopilot cluster bring-up and Certificate Manager's DNS-01 validation.

The second bootstraps ArgoCD on the cluster and points it at the app-of-apps root. ArgoCD then reconciles every service’s manifests from argocd/apps/ into the cluster. Another 3-5 minutes. By the end, every hostname resolves, every cert validates, every service answers on its route.
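The "dependency order" on the way up and the "reverse dependency order" on the way down are two readings of one topological sort. Terragrunt's run-all derives that graph from the dependency blocks in each stack's terragrunt.hcl; the sketch below reproduces the idea with Python's standard graphlib. The stack names are shorthand and the edges are hypothetical, picked only to reproduce the order described above, not copied from the repo.

```python
from graphlib import TopologicalSorter

# Hypothetical edges (stack -> stacks it needs), chosen to match the apply
# order described above. The real edges live in each stack's terragrunt.hcl.
STACK_DEPS = {
    "dns": set(),
    "certs": {"dns"},
    "gke": {"certs"},
    "gxlb": {"certs", "gke"},
    "private-ca": {"gxlb"},
    "monitoring": {"gxlb", "private-ca"},
}


def apply_order(deps: dict[str, set[str]]) -> list[str]:
    """Topological order: every stack comes after everything it depends on."""
    return list(TopologicalSorter(deps).static_order())


def destroy_order(deps: dict[str, set[str]]) -> list[str]:
    """Reversed order: dependents are destroyed before what they reference."""
    return apply_order(deps)[::-1]


print(apply_order(STACK_DEPS))    # ['dns', 'certs', 'gke', 'gxlb', 'private-ca', 'monitoring']
print(destroy_order(STACK_DEPS))  # ['monitoring', 'private-ca', 'gxlb', 'gke', 'certs', 'dns']
```

Because these edges form a single chain, the order is unique. With independent stacks, any valid topological order works, which is also what lets Terragrunt apply unrelated stacks in parallel.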

The Traffic Generator

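The rotation demo needs a sustained stream of requests across every hostname. A small Go program, scripts/traffic-gen.go, hammers all five endpoints at 10 RPS each and records every response's status code and latency (the full tool also records the TLS session ID). Output is JSON Lines, one object per probe, written to a file for post-hoc analysis:

```go
package main

import (
	"encoding/json"
	"flag"
	"net/http"
	"os"
	"time"
)

// Result is one probe outcome, written as a single JSON line.
type Result struct {
	Timestamp time.Time `json:"ts"`
	Host      string    `json:"host"`
	Status    int       `json:"status"` // 0 = no HTTP response (connection, TLS, or timeout error)
	Latency   float64   `json:"latency_ms"`
}

// A bounded timeout turns a hung connection into a recorded failure.
var client = &http.Client{Timeout: 5 * time.Second}

func probe(host string, results chan<- Result) {
	start := time.Now()
	status := 0
	if resp, err := client.Get("https://" + host + "/"); err == nil {
		status = resp.StatusCode
		resp.Body.Close()
	}
	results <- Result{Timestamp: time.Now(), Host: host, Status: status,
		Latency: float64(time.Since(start).Microseconds()) / 1000.0}
}

func main() {
	// Flags mirror the demo-rotate.sh invocation; hostnames may be passed as args.
	duration := flag.Duration("duration", 600*time.Second, "total run time")
	rps := flag.Int("rps", 10, "requests per second, per host")
	output := flag.String("output", "rotate.json", "JSONL output path")
	flag.Parse()
	hosts := flag.Args()
	if len(hosts) == 0 {
		hosts = []string{"food.cfg-lab.computingforgeeks.com", "api.cfg-lab.computingforgeeks.com",
			"admin.cfg-lab.computingforgeeks.com", "pay.cfg-lab.computingforgeeks.com",
			"food.cfg-regional.computingforgeeks.com"}
	}

	f, err := os.Create(*output)
	if err != nil {
		panic(err)
	}
	defer f.Close()
	enc := json.NewEncoder(f) // each Encode call writes one object plus "\n"

	results := make(chan Result, 1024)
	ticker := time.NewTicker(time.Second / time.Duration(*rps))
	defer ticker.Stop()
	deadline := time.After(*duration)
	inFlight := 0
	for {
		select {
		case <-ticker.C: // one probe per host per tick
			for _, h := range hosts {
				inFlight++
				go probe(h, results)
			}
		case r := <-results:
			inFlight--
			_ = enc.Encode(r)
		case <-deadline: // stop issuing probes, then flush the ones in flight
			for ; inFlight > 0; inFlight-- {
				_ = enc.Encode(<-results)
			}
			return
		}
	}
}
```

Keep it running across the whole rotation window, before, during, and after the retarget; demo-rotate.sh gives it a 600-second run. Every probe lands in the file with a high-resolution RFC 3339 timestamp, which makes the analysis simple: filter for non-2xx, plot the status-code timeline, measure TTFB drift.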
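The "filter for non-2xx, plot the timeline" step needs nothing beyond the standard library. A minimal sketch, assuming the JSONL fields the traffic generator writes (ts, host, status, latency_ms); the function names are illustrative and not taken from the repo's analyze-rotation.py:

```python
import json
from collections import Counter, defaultdict


def status_timeline(lines):
    """Map host -> {second: Counter(status)} from traffic-gen JSONL lines.

    Buckets on the first 19 characters of "ts" (YYYY-MM-DDTHH:MM:SS), which
    assumes every record in one run carries the same UTC offset.
    """
    timeline = defaultdict(lambda: defaultdict(Counter))
    for line in lines:
        if line.strip():
            rec = json.loads(line)
            timeline[rec["host"]][rec["ts"][:19]][rec["status"]] += 1
    return timeline


def failing_seconds(timeline):
    """(host, second, status counts) for every bucket holding a non-2xx probe.

    Status 0 (no HTTP response: connection or TLS error) counts as non-2xx.
    """
    return [
        (host, second, dict(counts))
        for host, seconds in sorted(timeline.items())
        for second, counts in sorted(seconds.items())
        if any(not 200 <= status < 300 for status in counts)
    ]
```

An open file works as the `lines` argument. An empty list from `failing_seconds(status_timeline(f))` is the non-2xx check passing, and the per-second counters are what gets plotted as the status-code timeline.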

The Zero-Incident Rotation Demo

The demo swaps the shared wildcard cert while traffic is running. The mechanism is the blue-green pattern from Article 4: a second target proxy is created with a new cert map, the forwarding rule is atomically retargeted to it, and the old proxy is destroyed. At no point is the cert map on the running proxy edited.

Script outline:

```bash
#!/usr/bin/env bash
# demo-rotate.sh: zero-incident cert rotation
set -euo pipefail

# Resolve paths from the repo root so later steps don't depend on the cwd
REPO_ROOT="$(git rev-parse --show-toplevel)"
GXLB_STACK="$REPO_ROOT/infra/live/article-lab/europe-west1/gxlb-cfg-lab"
cd "$REPO_ROOT"

# Start traffic generator in background; kill it if any later step fails
./scripts/traffic-gen --duration=600s --rps=10 --output=/tmp/rotate.json &
TG=$!
trap 'kill "$TG" 2>/dev/null || true' EXIT

# Build blue-green replacement proxy (subshell keeps the cwd at REPO_ROOT)
(cd "$GXLB_STACK" && terragrunt apply -auto-approve -var="enable_blue_green=true")

# Wait 30s for new target proxy to be reachable
sleep 30

# Atomic forwarding-rule retarget
(cd "$GXLB_STACK" && terragrunt apply -auto-approve -var="forwarding_rule_target=v2")

# Soak period for existing TLS sessions to drain
sleep 120

# Destroy the old proxy
(cd "$GXLB_STACK" && terragrunt apply -auto-approve -var="enable_blue_green=false")

# Let the generator finish its 600s run so the "after" window is captured
wait "$TG"

python3 scripts/analyze-rotation.py /tmp/rotate.json
```

The analyze step parses the JSON output and reports:

- Total probes issued (expected: ~30,000 over 10 minutes at 10 RPS × 5 hosts)

- Non-2xx count (expected: zero)

- TTFB 50th/95th/99th percentile before, during, after the retarget

- Longest gap between successful responses on each host (expected: < 2 seconds)

The expected output is a table with five rows (one per host), all showing zero failures. That’s the consolidation promise delivered live.
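The per-host numbers behind that table fit in a few dozen lines. This is a sketch, not the repo's analyze-rotation.py: it assumes the traffic generator's JSONL fields and takes the retarget window as two epoch-second bounds, a hypothetical input that demo-rotate.sh as shown does not log. Percentiles use the nearest-rank method, and probes that got no HTTP response (status 0) count as failures but are left out of the latency figures.

```python
import json
import math
import re
from collections import defaultdict
from datetime import datetime


def parse_ts(ts: str) -> float:
    """Go RFC 3339 timestamp -> epoch seconds.

    Go trims trailing zeros from (or omits) the fraction; pad or truncate it
    to 6 digits, since datetime.fromisoformat before 3.11 accepts only 3 or 6.
    """
    ts = ts.replace("Z", "+00:00")
    ts = re.sub(r"\.(\d+)", lambda m: "." + (m.group(1) + "000000")[:6], ts)
    return datetime.fromisoformat(ts).timestamp()


def percentile(values, p):
    """Nearest-rank percentile (p in 0..100) of a non-empty sequence."""
    ordered = sorted(values)
    return ordered[max(1, math.ceil(p * len(ordered) / 100)) - 1]


def summarize(lines, retarget_start, retarget_end):
    """Per-host rotation summary from traffic-gen JSONL lines.

    retarget_start/retarget_end (epoch seconds) bound the "during" phase;
    they are hypothetical inputs the demo script would have to record.
    """
    probes = defaultdict(list)
    for line in lines:
        if line.strip():
            rec = json.loads(line)
            probes[rec["host"]].append((parse_ts(rec["ts"]), rec["status"], rec["latency_ms"]))

    report = {}
    for host, rows in probes.items():
        rows.sort()
        ok_times = [t for t, status, _ in rows if 200 <= status < 300]
        phases = defaultdict(list)
        for t, status, latency in rows:
            if status == 0:  # no HTTP response, so no meaningful TTFB
                continue
            phase = "before" if t < retarget_start else "during" if t <= retarget_end else "after"
            phases[phase].append(latency)
        report[host] = {
            "probes": len(rows),
            "non_2xx": len(rows) - len(ok_times),
            "longest_gap_s": max((b - a for a, b in zip(ok_times, ok_times[1:])), default=0.0),
            "latency_ms": {ph: {p: percentile(v, p) for p in (50, 95, 99)}
                           for ph, v in phases.items()},
        }
    return report
```

Rendering the returned dict as the five-row table is plain formatting. A run passes when every host shows `non_2xx == 0` and `longest_gap_s` under 2.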

Private CA Rotation on the Financial LB

The pay.cfg-lab.computingforgeeks.com service lives on its own LB with its own Private CA cert. Rotation here uses the same-key pattern from Article 8: reissue with the existing CSR, deploy the new cert to the financial LB via google_compute_ssl_certificate, retire the old cert. The SPKI pin does not change, so pinned clients see zero disruption.

```bash
# In scripts/demo-rotate.sh, after the wildcard rotation:
REPO_ROOT="$(git rev-parse --show-toplevel)"

# Reissue from the existing CSR: same key pair, so the SPKI pin is unchanged
gcloud privateca certificates create "pay-cert-rotated-$(date +%Y%m%d)" \
  --issuer-pool=cfg-lab-devops-pool --issuer-location=europe-west1 \
  --csr="$REPO_ROOT/clients/python-pinned/pay.csr" \
  --cert-output-file=/tmp/pay-rotated.pem --validity=P90D

# Swap the cert on the financial LB (subshell leaves the caller's cwd alone)
(cd "$REPO_ROOT/infra/live/article-lab/europe-west1/private-ca-cfg-lab" && \
  terragrunt apply -auto-approve -var="pay_cert_pem_file=/tmp/pay-rotated.pem")
```

The Python pinned client from Article 8 continues hitting /health on the pay service throughout the rotation. Because the SPKI pin is on the public key and the key hasn't changed, the pin match succeeds on every request. This is the second zero-disruption channel in the demo, and the one that proves the Private CA isolation decision was worth the complexity.
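The pin survives for a mechanical reason. An RFC 7469-style pin is the base64 SHA-256 of the certificate's DER-encoded SubjectPublicKeyInfo. Reissuing from the same CSR changes the serial, validity, and signature but leaves that structure byte-for-byte identical. The stdlib-only sketch below assumes that pin format. Article 8's client may compute or encode its pin differently, and whether it pins the leaf or the root is set there. The function names are illustrative. The code walks just enough DER to reach the SubjectPublicKeyInfo field.

```python
import base64
import hashlib
import ssl


def _tlv(der: bytes, pos: int) -> tuple[int, int]:
    """Return (content_start, element_end) for the DER element at pos.

    Assumes single-byte tags, which holds for the X.509 fields walked here.
    """
    length = der[pos + 1]
    start = pos + 2
    if length & 0x80:  # long form: low 7 bits give the number of length bytes
        n = length & 0x7F
        length = int.from_bytes(der[start:start + n], "big")
        start += n
    return start, start + length


def spki_pin(cert_der: bytes) -> str:
    """base64(SHA-256(DER SubjectPublicKeyInfo)) for an X.509 certificate."""
    tbs_start, _ = _tlv(cert_der, 0)    # Certificate ::= SEQUENCE
    pos, _ = _tlv(cert_der, tbs_start)  # tbsCertificate ::= SEQUENCE
    if cert_der[pos] == 0xA0:           # optional [0] EXPLICIT version
        pos = _tlv(cert_der, pos)[1]
    for _ in range(5):                  # serial, signature, issuer, validity, subject
        pos = _tlv(cert_der, pos)[1]
    spki = cert_der[pos:_tlv(cert_der, pos)[1]]  # whole element, tag and length included
    return base64.b64encode(hashlib.sha256(spki).digest()).decode("ascii")


def pin_from_pem(path: str) -> str:
    """Pin of the first certificate in a PEM file (the leaf, in a leaf-first chain)."""
    begin, end = "-----BEGIN CERTIFICATE-----", "-----END CERTIFICATE-----"
    with open(path) as f:
        pem = f.read()
    first = pem[pem.index(begin):pem.index(end) + len(end)]
    return spki_pin(ssl.PEM_cert_to_DER_cert(first))
```

Comparing `pin_from_pem` on the outgoing cert and on /tmp/pay-rotated.pem before the terragrunt apply would be a cheap pre-flight check that the reissue really kept the key.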

Cloud Monitoring During the Rotation

The monitoring stack from the next article in this series charts backend request rate and p99 latency continuously. During the rotation, the dashboard is the most compelling artifact: a flat line where you'd expect a dip. Dashboard screenshots from before, during, and after the retarget are the visual proof of the zero-incident claim.

Specific metrics to watch:

- loadbalancing.googleapis.com/https/request_count grouped by backend_service, summed across every hostname on the shared LB
- loadbalancing.googleapis.com/https/backend_latencies p99 per hostname
- loadbalancing.googleapis.com/https/request_count filtered on response_code_class != "200" (should be empty throughout)

Architecture Diagram (Integrated)

Every piece of earlier-article infra, now in one picture:

- Cloudflare (parent DNS) -> DS record -> Cloud DNS cfg-lab zone (DNSSEC, CAA)
- Cloud DNS cfg-lab -> ACME CNAME -> Certificate Manager wildcard cert (global)
- Cert map -> target HTTPS proxy -> shared GXLB (IP: anycast global)

- GXLB URL map -> backend services -> Cloud Run (food-web) + GKE Gateway (food-api, food-admin) + more

- Parallel: cfg-regional zone -> regional cert -> regional ALB -> GKE regional Gateway (food-regional)

- Parallel: Private CA Root -> pay-service cert -> dedicated GXLB -> Cloud Run (food-pay)

- Python pinned client -> pay-service (SPKI-pinned against root)

- ArgoCD (in-cluster) -> GitOps repo -> all K8s workloads

- Cloud Monitoring -> dashboards + alerts on every LB + every cert

One public anycast IP for the general fleet. One public anycast IP for the financial service. One regional IP for the latency-critical regional service. Five services, three LBs, two certs, one cert map on the shared LB, one standalone self-managed cert on the financial LB. Down from the 30-services-times-4-environments sprawl baseline in Article 1’s cost math.

Zero-Incident Verification

The final deliverable of the demo is a JSON file and a dashboard screenshot. The JSON has every request’s timestamp, host, status, and latency. The dashboard shows the metrics graphs during the rotation window. Both together prove that the rotation happened and that no client saw a disruption.

If the demo ever produces a non-2xx, that’s a signal: the blue-green pattern wasn’t executed correctly, or TLS session resumption didn’t cover the gap, or the backend health check flapped. Investigate, fix, re-run. The demo has to be reliably zero-incident for it to be a demo, not a test.

Cleanup (Capstone Teardown)

Single script:

```bash
./scripts/session-down.sh
```

The script runs terragrunt run-all destroy across every stack in reverse dependency order (monitoring -> private-ca -> gxlb -> gke -> certs -> dns). ArgoCD and the Kubernetes resources on the GKE cluster are torn down by the Autopilot cluster deletion itself. The Cloudflare NS delegation at the parent stays in place: removing it is a separate manual step because the DNS zone is durable across the series.

Teardown time: 8-12 minutes, dominated by GKE Autopilot’s graceful pod drain.

What This Demo Proves

Every consolidation outcome from the series opener has visible, tested coverage:

- Public DNS zones with DNSSEC + CAA: yes, in dns-cfg-lab/, verified with dig +dnssec
- Certificate Maps on shared LBs: yes, in gxlb-cfg-lab/, verified with gcloud compute target-https-proxies describe
- Financial services distinct cert chain: yes, in private-ca-cfg-lab/ + dedicated LB, verified via openssl s_client
- Zero per-project Google-managed certs: yes, via the apps/article-05/cfg-demo/ Gateway + HTTPRoute manifests (cert map annotation, zero ManagedCertificate CRDs)
- SPKI pinning for financial API: yes, in clients/python-pinned/, demo rotation preserves pin
- Zero manual rotation for general wildcards: yes, Google-managed cert auto-renews
- Cert inventory Terraform module adopted: yes, every service onboards via the Article 9 module
- Zero-incident rotation demonstrated: yes, via demo-rotate.sh, metrics and JSON prove it
- Cloud Monitoring alerts: yes, via infra/modules/cert-monitoring/, detailed in the next article

The one outcome this article doesn't produce is the runbook set. Runbooks are documentation, not infrastructure. The next article in this series covers the monitoring module plus the four runbooks every platform team ends up writing (cert purchase, cert rotation, pin update, emergency revocation), and the post-mortem template for when a cert incident happens anyway.

What’s Next

The runbook + monitoring article closes the series. It’s the shortest of the lot because all the mechanism is already built; what’s left is the operational discipline that keeps the pattern healthy over years, across team changes, across post-incident pressure to cut corners.