I’ve been planning to put together an article covering the installation of a Ceph 18 (Reef) Storage Cluster on Ubuntu 22.04|20.04 Linux servers, and this is it. Ceph is a software-defined storage solution designed for building distributed storage clusters on commodity hardware. The requirements for building a Ceph 18 (Reef) Storage Cluster on Ubuntu 22.04|20.04 depend largely on the desired use case.

This setup is not intended for mission-critical, write-intensive applications. For such workloads, consult the official project documentation, especially on networking and storage hardware requirements. Below are the standard Ceph components that will be configured in this installation guide:

- Ceph MON – Monitor Server

- Ceph MDS – Metadata Servers

- Ceph MGR – Ceph Manager daemon

- Ceph OSDs – The object storage daemons

The following are required when setting up the Ceph 18 (Reef) Storage Cluster:

- Python 3

- Systemd

- Podman or Docker for running containers

- Time synchronization (such as chrony or NTP)

- LVM2 for provisioning storage devices
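As a rough pre-flight sketch, the requirements above can be checked from a shell before going further. The command names used here (`python3`, `systemctl`, `lvcreate`, `chronyc`) are my assumptions about how each requirement shows up on PATH, and the helper names `missing_commands` and `has_container_runtime` are hypothetical.

```shell
# Print every command from the argument list that is NOT found on PATH.
missing_commands() {
  for cmd in "$@"; do
    command -v "$cmd" >/dev/null 2>&1 || printf '%s\n' "$cmd"
  done
}

# Succeed if at least one container runtime (Podman or Docker) is installed.
has_container_runtime() {
  command -v podman >/dev/null 2>&1 || command -v docker >/dev/null 2>&1
}

# Assumed command names for Python 3, Systemd, LVM2 and chrony.
missing_commands python3 systemctl lvcreate chronyc

if has_container_runtime; then
  echo "container runtime: found"
else
  echo "container runtime: missing (install podman or docker)"
fi
```

Any name printed by `missing_commands` points at a package that still has to be installed on that node. The playbook in Step 2 installs most of them.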

Install Ceph 18 (Reef) Storage Cluster on Ubuntu 22.04|20.04

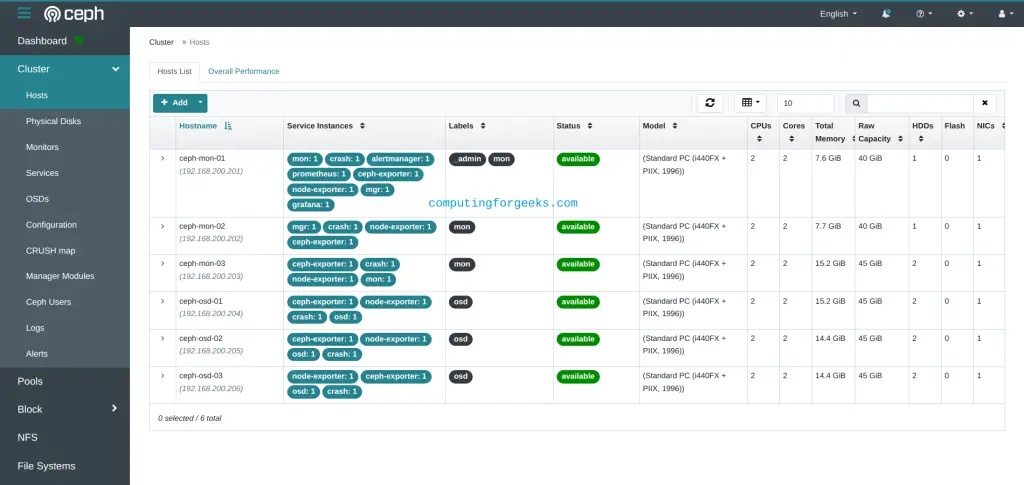

Before you begin the deployment of the Ceph 18 (Reef) Storage Cluster on Ubuntu 22.04|20.04 Linux servers, you need to prepare the servers. The picture shows my servers ready for setup.

As seen in the picture, my lab has the following server names and IP addresses.

| Server Hostname | Server IP Address | Ceph components | Server Specs |
|---|---|---|---|
| ceph-mon-01 | 172.16.20.10 | Ceph MON, MGR, MDS | 8 GB RAM, 4 vCPUs |
| ceph-mon-02 | 172.16.20.11 | Ceph MON, MGR, MDS | 8 GB RAM, 4 vCPUs |
| ceph-mon-03 | 172.16.20.12 | Ceph MON, MGR, MDS | 8 GB RAM, 4 vCPUs |
| ceph-osd-01 | 172.16.20.13 | Ceph OSD | 16 GB RAM, 8 vCPUs |
| ceph-osd-02 | 172.16.20.14 | Ceph OSD | 16 GB RAM, 8 vCPUs |
| ceph-osd-03 | 172.16.20.15 | Ceph OSD | 16 GB RAM, 8 vCPUs |
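The hostname and IP columns of this table are what later ends up in /etc/hosts. A minimal sketch of that mapping, assuming the pairs are kept as "hostname IP" lines (the helper name `hosts_entries` is hypothetical):

```shell
# Read "hostname IP" pairs on stdin and print them in /etc/hosts
# order ("IP hostname"). Blank lines and # comments are skipped.
hosts_entries() {
  while read -r name ip; do
    case "$name" in
      ''|'#'*) continue ;;
    esac
    printf '%s %s\n' "$ip" "$name"
  done
}

hosts_entries <<'EOF'
# lab nodes from the table
ceph-mon-01 172.16.20.10
ceph-mon-02 172.16.20.11
ceph-mon-03 172.16.20.12
ceph-osd-01 172.16.20.13
ceph-osd-02 172.16.20.14
ceph-osd-03 172.16.20.15
EOF
```

The output can be reviewed first and then appended to /etc/hosts by hand. Keeping the node list in one place makes it harder for the table, the inventory, and the hosts file to drift apart.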

Step 1: Prepare first Monitor node

The Ceph component used for deployment is Cephadm. Cephadm deploys and manages a Ceph cluster by connecting to hosts from the manager daemon via SSH to add, remove, or update Ceph daemon containers.

Log in to your first Monitor node:

$ ssh root@ceph-mon-01

Enter passphrase for key '/var/home/jkmutai/.ssh/id_rsa':

Welcome to Ubuntu 20.04 LTS (GNU/Linux 5.4.0-33-generic x86_64)

* Documentation: https://help.ubuntu.com

* Management: https://landscape.canonical.com

* Support: https://ubuntu.com/advantage

Last login: Tue Jun 2 20:36:36 2020 from 172.16.20.10

root@ceph-mon-01:~#

Update the /etc/hosts file with entries for the IP addresses and hostnames of all nodes.

# vim /etc/hosts

127.0.0.1 localhost

# Ceph nodes

172.16.20.10 ceph-mon-01

172.16.20.11 ceph-mon-02

172.16.20.12 ceph-mon-03

172.16.20.13 ceph-osd-01

172.16.20.14 ceph-osd-02

172.16.20.15 ceph-osd-03

Update and upgrade the OS:

sudo apt update && sudo apt -y upgrade

sudo systemctl reboot

Install Ansible and other basic utilities:

sudo apt update

sudo apt -y install software-properties-common git curl vim bash-completion ansible

Confirm Ansible has been installed.

$ ansible --version

ansible 2.9.6

config file = /etc/ansible/ansible.cfg

configured module search path = ['/root/.ansible/plugins/modules', '/usr/share/ansible/plugins/modules']

ansible python module location = /usr/lib/python3/dist-packages/ansible

executable location = /usr/bin/ansible

python version = 3.8.2 (default, Apr 27 2020, 15:53:34) [GCC 9.3.0]

Ensure the /usr/local/bin path is added to PATH.

echo "PATH=\$PATH:/usr/local/bin" >>~/.bashrc

source ~/.bashrc

Check your current PATH:

$ echo $PATH

/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/games:/usr/local/games:/usr/local/bin

Generate SSH keys:

$ ssh-keygen -t rsa -b 4096 -N '' -f ~/.ssh/id_rsa
Generating public/private rsa key pair.
Your identification has been saved in /root/.ssh/id_rsa
Your public key has been saved in /root/.ssh/id_rsa.pub
The key fingerprint is:
SHA256:3gGoZCVsA6jbnBuMIpnJilCiblaM9qc5Xk38V7lfJ6U root@ceph-admin
The key's randomart image is:
+---[RSA 4096]----+
| ..o. . |
|. +o . |
|. .o.. . |
|o .o .. . . |
|o%o.. oS . o .|
|@+*o o... .. .o |
|O oo . ..... .E o|
|o+.oo. . ..o|
|o .++ . |
+----[SHA256]-----+

There are two ways of installing Cephadm. In this guide, we will use the curl-based installation. First, obtain the latest release version from the releases page.
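The download location used in the next commands depends only on the release number. As a small sketch, the URL can be built and inspected before running curl. The helper name `cephadm_url` is hypothetical, and the URL pattern is the one this guide uses for 18.2.0.

```shell
# Build the cephadm download URL for a given Ceph release number,
# following the pattern used by the curl command in this guide.
cephadm_url() {
  printf 'https://download.ceph.com/rpm-%s/el9/noarch/cephadm\n' "$1"
}

# Print the URL for the release used in this article.
cephadm_url 18.2.0
```

Printing the URL first makes it easy to confirm in a browser that the chosen release exists on the download server. Note that curl with --silent will happily save an error page as `cephadm` if the release number is wrong.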

Export the obtained version:

CEPH_RELEASE=18.2.0

Now pull the binary using the command:

curl --silent --remote-name --location https://download.ceph.com/rpm-${CEPH_RELEASE}/el9/noarch/cephadm

chmod +x cephadm

sudo mv cephadm /usr/local/bin/

Confirm cephadm is available for use locally:

cephadm --help

Step 2: Use Ansible to Prepare Other Nodes

With the first Mon node configured, create an Ansible playbook to update all nodes, push the SSH public key, and update the /etc/hosts file on all nodes.

cd ~/

vim prepare-ceph-nodes.yml

Modify the contents below to set the correct timezone, then add them to the file.

---
- name: Prepare ceph nodes
  hosts: ceph_nodes
  become: yes
  become_method: sudo
  vars:
    ceph_admin_user: cephadmin
  tasks:
    - name: Set timezone
      timezone:
        name: Africa/Nairobi

    - name: Update system
      apt:
        name: "*"
        state: latest
        update_cache: yes

    - name: Install common packages
      apt:
        name: [vim,git,bash-completion,lsb-release,wget,curl,chrony,lvm2]
        state: present
        update_cache: yes

    - name: Create user if absent
      user:
        name: "{{ ceph_admin_user }}"
        state: present
        shell: /bin/bash
        home: /home/{{ ceph_admin_user }}

    - name: Add sudo access for the user
      lineinfile:
        path: /etc/sudoers
        line: "{{ ceph_admin_user }} ALL=(ALL:ALL) NOPASSWD:ALL"
        state: present
        validate: visudo -cf %s

    - name: Set authorized key taken from file to Cephadmin user
      authorized_key:
        user: "{{ ceph_admin_user }}"
        state: present
        key: "{{ lookup('file', '~/.ssh/id_rsa.pub') }}"

    - name: Install Docker
      shell: |
        curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo apt-key add -
        echo "deb [arch=amd64] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable" > /etc/apt/sources.list.d/docker-ce.list
        apt update
        apt install -qq -y docker-ce docker-ce-cli containerd.io

    - name: Reboot server after update and configs
      reboot:

Create an inventory file.

$ vim hosts

[ceph_nodes]

ceph-mon-01

ceph-mon-02

ceph-mon-03

ceph-osd-01

ceph-osd-02

ceph-osd-03

If your key has a passphrase, load it into ssh-agent:

$ eval `ssh-agent -s` && ssh-add ~/.ssh/id_rsa_jmutai

Agent pid 3275

Enter passphrase for /root/.ssh/id_rsa_jmutai:

Identity added: /root/.ssh/id_rsa_jkmutai (/root/.ssh/id_rsa_jmutai)

Alternatively, you can copy the SSH key to all the hosts. First, ensure root login is enabled on all hosts:

$ sudo vim /etc/ssh/sshd_config

...

PermitRootLogin yes

....

Save the file and restart SSH:

sudo systemctl restart sshd

Now copy the SSH key generated on this node to all other hosts with the command:

for host in ceph-mon-01 ceph-mon-02 ceph-mon-03 ceph-osd-01 ceph-osd-02 ceph-osd-03; do
  ssh-copy-id root@$host
done

Configure ssh:

tee -a ~/.ssh/config<<EOF
Host *
    UserKnownHostsFile /dev/null
    StrictHostKeyChecking no
    IdentitiesOnly yes
    ConnectTimeout 0
    ServerAliveInterval 300
EOF

Execute Playbook:

# As root with SSH keys:

$ ansible-playbook -i hosts prepare-ceph-nodes.yml

# As root user with default ssh key:

$ ansible-playbook -i hosts prepare-ceph-nodes.yml --user root

# As root user with password:

$ ansible-playbook -i hosts prepare-ceph-nodes.yml --user root --ask-pass

# As sudo user with password - replace ubuntu with correct username

$ ansible-playbook -i hosts prepare-ceph-nodes.yml --user ubuntu --ask-pass --ask-become-pass

# As sudo user with ssh key and sudo password - replace ubuntu with correct username

$ ansible-playbook -i hosts prepare-ceph-nodes.yml --user ubuntu --ask-become-pass

# As sudo user with ssh key and passwordless sudo - replace ubuntu with correct username

$ ansible-playbook -i hosts prepare-ceph-nodes.yml --user ubuntu

# As sudo or root user with custom key

$ ansible-playbook -i hosts prepare-ceph-nodes.yml --private-key /path/to/private/key <options>

In my case I’ll run:

ansible-playbook -i hosts prepare-ceph-nodes.yml --private-key ~/.ssh/id_rsa_jkmutai

Execution output:

PLAY [Prepare ceph nodes] ******************************************************************************************************************************

TASK [Gathering Facts] *********************************************************************************************************************************

ok: [ceph-mon-03]

ok: [ceph-mon-02]

ok: [ceph-mon-01]

ok: [ceph-osd-01]

ok: [ceph-osd-02]

ok: [ceph-osd-03]

TASK [Update system] ***********************************************************************************************************************************

changed: [ceph-mon-01]

changed: [ceph-mon-02]

changed: [ceph-mon-03]

changed: [ceph-osd-02]

changed: [ceph-osd-01]

changed: [ceph-osd-03]

TASK [Install common packages] *************************************************************************************************************************

changed: [ceph-mon-02]

changed: [ceph-mon-01]

changed: [ceph-osd-02]

changed: [ceph-osd-01]

changed: [ceph-mon-03]

changed: [ceph-osd-03]

TASK [Add ceph admin user] *****************************************************************************************************************************

changed: [ceph-osd-02]

changed: [ceph-mon-02]

changed: [ceph-mon-01]

changed: [ceph-mon-03]

changed: [ceph-osd-01]

changed: [ceph-osd-03]

TASK [Create sudo file] ********************************************************************************************************************************

changed: [ceph-mon-02]

changed: [ceph-osd-02]

changed: [ceph-mon-01]

changed: [ceph-osd-01]

changed: [ceph-mon-03]

changed: [ceph-osd-03]

TASK [Give ceph admin user passwordless sudo] **********************************************************************************************************

changed: [ceph-mon-02]

changed: [ceph-mon-01]

changed: [ceph-osd-02]

changed: [ceph-osd-01]

changed: [ceph-mon-03]

changed: [ceph-osd-03]

TASK [Set authorized key taken from file to ceph admin] ************************************************************************************************

changed: [ceph-mon-01]

changed: [ceph-osd-01]

changed: [ceph-mon-03]

changed: [ceph-osd-02]

changed: [ceph-mon-02]

changed: [ceph-osd-03]

TASK [Set authorized key taken from file to root user] *************************************************************************************************

changed: [ceph-mon-01]

changed: [ceph-mon-02]

changed: [ceph-mon-03]

changed: [ceph-osd-01]

changed: [ceph-osd-02]

changed: [ceph-osd-03]

TASK [Install Docker] **********************************************************************************************************************************

changed: [ceph-mon-01]

changed: [ceph-mon-02]

changed: [ceph-osd-02]

changed: [ceph-osd-01]

changed: [ceph-mon-03]

changed: [ceph-osd-03]

TASK [Reboot server after update and configs] **********************************************************************************************************

changed: [ceph-osd-01]

changed: [ceph-mon-02]

changed: [ceph-osd-02]

changed: [ceph-mon-01]

changed: [ceph-mon-03]

changed: [ceph-osd-03]

PLAY RECAP *********************************************************************************************************************************************

ceph-mon-01 : ok=9 changed=8 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

ceph-mon-02 : ok=9 changed=8 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

ceph-mon-03 : ok=9 changed=8 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

ceph-osd-01 : ok=9 changed=8 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

ceph-osd-02 : ok=9 changed=8 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

ceph-osd-03 : ok=9 changed=8 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

Test ssh as Ceph admin user created on the nodes:

$ ssh cephadmin@ceph-mon-01
Welcome to Ubuntu 20.04 LTS (GNU/Linux 5.4.0-28-generic x86_64)

 * Documentation: https://help.ubuntu.com
 * Management: https://landscape.canonical.com
 * Support: https://ubuntu.com/advantage

The programs included with the Ubuntu system are free software;
the exact distribution terms for each program are described in the
individual files in /usr/share/doc/*/copyright.

Ubuntu comes with ABSOLUTELY NO WARRANTY, to the extent permitted by
applicable law.

To run a command as administrator (user "root"), use "sudo <command>".
See "man sudo_root" for details.

cephadmin@ceph-mon-01:~$ sudo su -
root@ceph-mon-01:~# logout
cephadmin@ceph-mon-01:~$ exit
logout
Connection to ceph-mon-01 closed.

Configure /etc/hosts

Update /etc/hosts on all nodes if you don’t have active DNS configured for hostnames on all cluster servers.

Here is the playbook to modify:

$ vim update-hosts.yml

---
- name: Prepare ceph nodes
  hosts: ceph_nodes
  become: yes
  become_method: sudo
  tasks:
    - name: Clean /etc/hosts file
      copy:
        content: ""
        dest: /etc/hosts

    - name: Update /etc/hosts file
      blockinfile:
        path: /etc/hosts
        block: |
          127.0.0.1 localhost
          172.16.20.10 ceph-mon-01
          172.16.20.11 ceph-mon-02
          172.16.20.12 ceph-mon-03
          172.16.20.13 ceph-osd-01
          172.16.20.14 ceph-osd-02
          172.16.20.15 ceph-osd-03

Running playbook:

$ ansible-playbook -i hosts update-hosts.yml --private-key ~/.ssh/id_rsa_jmutai

PLAY [Prepare ceph nodes] ******************************************************************************************************************************

TASK [Gathering Facts] *********************************************************************************************************************************

ok: [ceph-mon-01]

ok: [ceph-osd-02]

ok: [ceph-mon-03]

ok: [ceph-mon-02]

ok: [ceph-osd-01]

ok: [ceph-osd-03]

TASK [Clean /etc/hosts file] ***************************************************************************************************************************

changed: [ceph-mon-02]

changed: [ceph-mon-01]

changed: [ceph-osd-01]

changed: [ceph-osd-02]

changed: [ceph-mon-03]

changed: [ceph-osd-03]

TASK [Update /etc/hosts file] **************************************************************************************************************************

changed: [ceph-mon-02]

changed: [ceph-mon-01]

changed: [ceph-osd-01]

changed: [ceph-osd-02]

changed: [ceph-mon-03]

changed: [ceph-osd-03]

PLAY RECAP *********************************************************************************************************************************************

ceph-mon-01 : ok=3 changed=2 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

ceph-mon-02 : ok=3 changed=2 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

ceph-mon-03 : ok=3 changed=2 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

ceph-osd-01 : ok=3 changed=2 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

ceph-osd-02 : ok=3 changed=2 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

ceph-osd-03 : ok=3 changed=2 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

Confirm by connecting to any node:

ssh cephadmin@ceph-mon-01

Once connected, view the hosts file as shown:

$ cat /etc/hosts

# BEGIN ANSIBLE MANAGED BLOCK

127.0.0.1 localhost

172.16.20.10 ceph-mon-01

172.16.20.11 ceph-mon-02

172.16.20.12 ceph-mon-03

172.16.20.13 ceph-osd-01

172.16.20.14 ceph-osd-02

172.16.20.15 ceph-osd-03

# END ANSIBLE MANAGED BLOCK

Step 3: Deploy Ceph Storage Cluster on Ubuntu 22.04|20.04

To bootstrap a new Ceph Cluster on Ubuntu 22.04|20.04, you need the first monitor address – IP or hostname.

sudo mkdir -p /etc/ceph
cephadm bootstrap \
  --mon-ip 172.16.20.10 \
  --initial-dashboard-user admin \
  --initial-dashboard-password Str0ngAdminP@ssw0rd

Execution output:

INFO:cephadm:Verifying podman|docker is present...

INFO:cephadm:Verifying lvm2 is present...

INFO:cephadm:Verifying time synchronization is in place...

INFO:cephadm:Unit chrony.service is enabled and running

INFO:cephadm:Repeating the final host check...

INFO:cephadm:podman|docker (/usr/bin/docker) is present

INFO:cephadm:systemctl is present

INFO:cephadm:lvcreate is present

INFO:cephadm:Unit chrony.service is enabled and running

INFO:cephadm:Host looks OK

INFO:root:Cluster fsid: 8dbf2eda-a513-11ea-a3c1-a534e03850ee

INFO:cephadm:Verifying IP 172.16.20.10 port 3300 ...

INFO:cephadm:Verifying IP 172.16.20.10 port 6789 ...

INFO:cephadm:Mon IP 172.16.20.10 is in CIDR network 172.31.1.1

INFO:cephadm:Pulling latest docker.io/ceph/ceph:v15 container...

INFO:cephadm:Extracting ceph user uid/gid from container image...

INFO:cephadm:Creating initial keys...

INFO:cephadm:Creating initial monmap...

INFO:cephadm:Creating mon...

INFO:cephadm:Waiting for mon to start...

INFO:cephadm:Waiting for mon...

INFO:cephadm:mon is available

INFO:cephadm:Assimilating anything we can from ceph.conf...

INFO:cephadm:Generating new minimal ceph.conf...

INFO:cephadm:Restarting the monitor...

INFO:cephadm:Setting mon public_network...

INFO:cephadm:Creating mgr...

INFO:cephadm:Wrote keyring to /etc/ceph/ceph.client.admin.keyring

INFO:cephadm:Wrote config to /etc/ceph/ceph.conf

INFO:cephadm:Waiting for mgr to start...

INFO:cephadm:Waiting for mgr...

INFO:cephadm:mgr not available, waiting (1/10)...

INFO:cephadm:mgr not available, waiting (2/10)...

INFO:cephadm:mgr not available, waiting (3/10)...

INFO:cephadm:mgr not available, waiting (4/10)...

.....

INFO:cephadm:Waiting for Mgr epoch 13...

INFO:cephadm:Mgr epoch 13 is available

INFO:cephadm:Generating a dashboard self-signed certificate...

INFO:cephadm:Creating initial admin user...

INFO:cephadm:Fetching dashboard port number...

INFO:cephadm:Ceph Dashboard is now available at:

URL: https://ceph-mon-01:8443/

User: admin

Password: Str0ngAdminP@ssw0rd

INFO:cephadm:You can access the Ceph CLI with:

sudo /usr/local/bin/cephadm shell --fsid 8dbf2eda-a513-11ea-a3c1-a534e03850ee -c /etc/ceph/ceph.conf -k /etc/ceph/ceph.client.admin.keyring

Or, if you are only running a single cluster on this host:

sudo /usr/local/bin/cephadm shell

INFO:cephadm:Please consider enabling telemetry to help improve Ceph:

ceph telemetry on

For more information see:

https://docs.ceph.com/docs/master/mgr/telemetry/

INFO:cephadm:Bootstrap complete.

Install Ceph tools. First, add the repo for the desired Ceph release.

For release 18.*, use:

cephadm add-repo --release reef

Once added, install the required tools:

cephadm install ceph-common

Verify the installation:

$ ceph -v

ceph version 18.2.0 (5dd24139a1eada541a3bc16b6941c5dde975e26d) reef (stable)

Add extra monitors if you have them by running the commands below:

##Copy Ceph SSH key##

ssh-copy-id -f -i /etc/ceph/ceph.pub root@ceph-mon-02

ssh-copy-id -f -i /etc/ceph/ceph.pub root@ceph-mon-03

## Add nodes to the cluster ##

ceph orch host add ceph-mon-02

ceph orch host add ceph-mon-03

##Label the nodes with mon ##

ceph orch host label add ceph-mon-01 mon

ceph orch host label add ceph-mon-02 mon

ceph orch host label add ceph-mon-03 mon

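## Apply configs ##

# Each "ceph orch apply mon" call replaces the previous placement,
# so place monitors on all hosts labelled mon with a single command.
ceph orch apply mon --placement="label:mon"

View a list of hosts and labels.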

# ceph orch host ls

HOST ADDR LABELS STATUS

ceph-mon-01 172.16.20.10 _admin,mon

ceph-mon-02 172.16.20.11 mon

ceph-mon-03 172.16.20.12 mon

3 hosts in cluster

Containers running:

# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

7d666ae63232 prom/alertmanager "/bin/alertmanager -…" 3 minutes ago Up 3 minutes ceph-8dbf2eda-a513-11ea-a3c1-a534e03850ee-alertmanager.ceph-mon-01

4e7ccde697c7 prom/prometheus:latest "/bin/prometheus --c…" 3 minutes ago Up 3 minutes ceph-8dbf2eda-a513-11ea-a3c1-a534e03850ee-prometheus.ceph-mon-01

9fe169a3f2dc ceph/ceph-grafana:latest "/bin/sh -c 'grafana…" 8 minutes ago Up 8 minutes ceph-8dbf2eda-a513-11ea-a3c1-a534e03850ee-grafana.ceph-mon-01

c8e99deb55a4 prom/node-exporter "/bin/node_exporter …" 8 minutes ago Up 8 minutes ceph-8dbf2eda-a513-11ea-a3c1-a534e03850ee-node-exporter.ceph-mon-01

277f0ef7dd9d ceph/ceph:v15 "/usr/bin/ceph-crash…" 9 minutes ago Up 9 minutes ceph-8dbf2eda-a513-11ea-a3c1-a534e03850ee-crash.ceph-mon-01

9de7a86857aa ceph/ceph:v15 "/usr/bin/ceph-mgr -…" 10 minutes ago Up 10 minutes ceph-8dbf2eda-a513-11ea-a3c1-a534e03850ee-mgr.ceph-mon-01.qhokxo

d116bc14109c ceph/ceph:v15 "/usr/bin/ceph-mon -…" 10 minutes ago Up 10 minutes ceph-8dbf2eda-a513-11ea-a3c1-a534e03850ee-mon.ceph-mon-01

Step 4: Deploy Ceph OSDs

Install the cluster’s public SSH key in the new OSD node root user’s authorized_keys file:

ssh-copy-id -f -i /etc/ceph/ceph.pub root@ceph-osd-01

ssh-copy-id -f -i /etc/ceph/ceph.pub root@ceph-osd-02

ssh-copy-id -f -i /etc/ceph/ceph.pub root@ceph-osd-03

Tell Ceph that the new nodes are part of the cluster:

## Add hosts to cluster ##

ceph orch host add ceph-osd-01

ceph orch host add ceph-osd-02

ceph orch host add ceph-osd-03

##Give new nodes labels ##

ceph orch host label add ceph-osd-01 osd

ceph orch host label add ceph-osd-02 osd

ceph orch host label add ceph-osd-03 osd

View all devices on storage nodes:

# ceph orch device ls

HOST PATH TYPE DEVICE ID SIZE AVAILABLE REFRESHED REJECT REASONS

ceph-osd-01 /dev/sdb hdd QEMU_HARDDISK_drive-scsi1 5120M Yes 79s ago

ceph-osd-02 /dev/sdb hdd QEMU_HARDDISK_drive-scsi1 5120M Yes 38s ago

ceph-osd-03 /dev/sdb hdd QEMU_HARDDISK_drive-scsi1 5120M Yes 38s ago

A storage device is considered available if all of the following conditions are met:

- The device must have no partitions.

- The device must not have any LVM state.

- The device must not be mounted.

- The device must not contain a file system.

- The device must not contain a Ceph BlueStore OSD.

- The device must be larger than 5 GB.
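The size condition is the easiest one to check by hand before adding a disk. A minimal sketch, assuming "5 GB" means 5 × 10^9 bytes (an assumption on my part, consistent with the 5120M disks being listed as available above). The helper name `device_large_enough` is hypothetical, and the other conditions are not checked here.

```shell
# Assumption: "larger than 5 GB" is read as more than 5 * 10^9 bytes.
MIN_OSD_BYTES=5000000000

# Succeed if the given size in bytes is above the minimum.
device_large_enough() {
  [ "$1" -gt "$MIN_OSD_BYTES" ]
}

# Example with the 5120M (5120 * 1024 * 1024 bytes) lab disk.
if device_large_enough 5368709120; then
  echo "size ok"
else
  echo "too small"
fi
```

On a storage node the byte count can be read with `lsblk -bdno SIZE /dev/sdb` and passed to the function. Treat `ceph orch device ls` as the authority, since it evaluates all the conditions and reports reject reasons.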

Tell Ceph to consume the available and unused storage device (/dev/sdb in my case):

# ceph orch daemon add osd ceph-osd-01:/dev/sdb
Created osd(s) 0 on host 'ceph-osd-01'
# ceph orch daemon add osd ceph-osd-02:/dev/sdb
Created osd(s) 1 on host 'ceph-osd-02'
# ceph orch daemon add osd ceph-osd-03:/dev/sdb
Created osd(s) 1 on host 'ceph-osd-03'
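With more hosts, typing one command per node gets tedious. The sketch below only prints the commands so they can be reviewed first. It assumes every host uses the same device path, and the helper name `osd_add_commands` is hypothetical.

```shell
# Print one "ceph orch daemon add osd" command per host.
# Usage: osd_add_commands DEVICE HOST [HOST...]
# Nothing is executed; the commands are only written to stdout.
osd_add_commands() {
  device=$1
  shift
  for host in "$@"; do
    printf 'ceph orch daemon add osd %s:%s\n' "$host" "$device"
  done
}

osd_add_commands /dev/sdb ceph-osd-01 ceph-osd-02 ceph-osd-03
```

Once the printed list looks right, it can be piped to `sh` on the admin node to actually create the OSDs.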

Check Ceph cluster status:

# ceph -s

cluster:

id: a0438f44-471b-11ee-8b36-25e1769b22fc

health: HEALTH_OK

services:

mon: 2 daemons, quorum ceph-mon-01,ceph-mon-03 (age 10m)

mgr: ceph-mon-01.ixlvxj(active, since 12m), standbys: ceph-mon-02.ltlamn

osd: 3 osds: 3 up (since 40s), 3 in (since 59s)

data:

pools: 1 pools, 1 pgs

objects: 2 objects, 577 KiB

usage: 83 MiB used, 15 GiB / 15 GiB avail

pgs: 1 active+clean

Step 5: Access Ceph Dashboard

The Ceph Dashboard is now available at the address of the active MGR server identified in the ceph -s output above.

For this case, it will be https://ceph-mon-01:8443/
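If the active manager moves to another node, the dashboard moves with it. A small sketch for pulling the active MGR host out of `ceph -s` text follows. It assumes short hostnames without dots, as in this lab, and the helper name `active_mgr_host` is hypothetical.

```shell
# Read `ceph -s` output on stdin and print the host part of the
# active mgr daemon name (everything before the first dot).
# Assumption: hostnames are short names that contain no dots.
active_mgr_host() {
  sed -n 's/^[[:space:]]*mgr:[[:space:]]*\([^.(]*\).*(active.*/\1/p'
}

# Example using the mgr line from the status output shown earlier.
active_mgr_host <<'EOF'
  services:
    mon: 2 daemons, quorum ceph-mon-01,ceph-mon-03 (age 10m)
    mgr: ceph-mon-01.ixlvxj(active, since 12m), standbys: ceph-mon-02.ltlamn
EOF
```

On a live cluster this would be used as `ceph -s | active_mgr_host`. The `ceph mgr services` command is another option, as it reports the dashboard URL directly.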

If you are unable to access the service, ensure the port is allowed through the firewall on the MGR host:

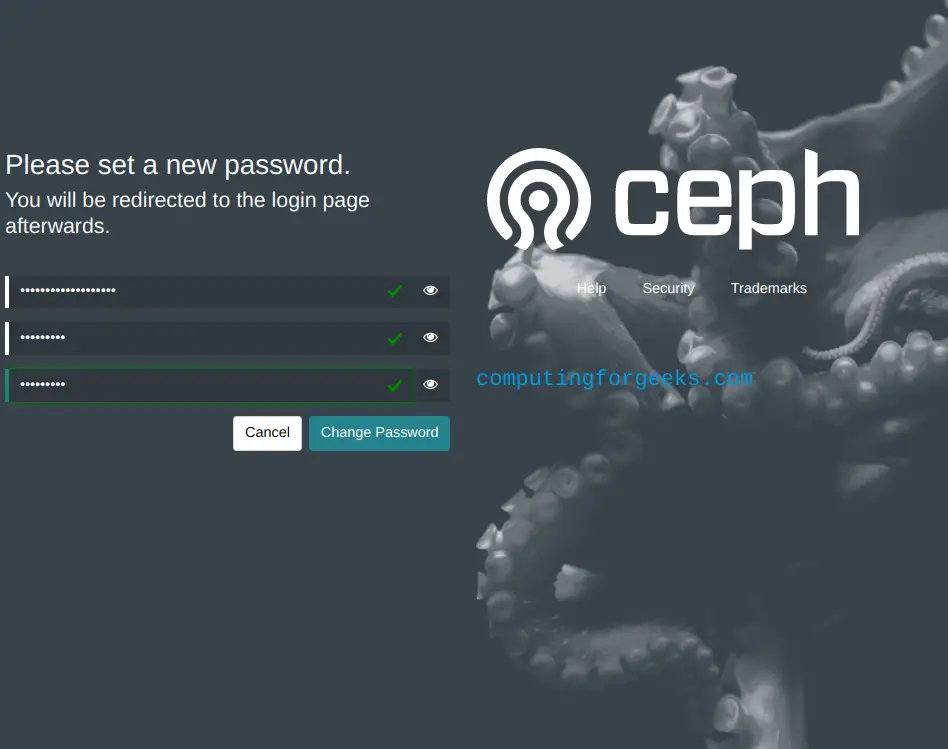

sudo ufw allow 8443

Log in with the credentials to access the Ceph management dashboard.

User: admin

Password: Str0ngAdminP@ssw0rd

Once connected, set the new password:

Log in with the new password and enjoy managing your Ceph Storage Cluster on Ubuntu 22.04|20.04 using Cephadm and containers.

Our next articles will cover adding additional OSDs, removing them, configuring RGW etc. Stay connected for updates.
