This is the third article in our series on setting up a private OpenNebula cloud to manage your infrastructure resources efficiently. The first article covered installing the OpenNebula frontend on Debian. The second covered installing and configuring an OpenNebula KVM node on a Debian system.

In this guide we assume you have configured bridged networks on your KVM nodes. You can refer to the guides below for how-to steps.

Once you have created a Linux bridge on each host that will run virtual machines in the OpenNebula infrastructure, you can define the required virtual networks.
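One quick way to check this prerequisite on a node is to test whether the interface has a `bridge` directory under `/sys/class/net`, which the Linux kernel exposes for bridge devices. The sketch below assumes a Linux host. The helper name `is_bridge` is illustrative, and the bridge names `br0` and `br1` are the ones from the setup shown later in this guide, so substitute your own.

```shell
# Return success if the named interface exists and is a Linux bridge.
is_bridge() {
  [ -d "/sys/class/net/$1/bridge" ]
}

# Bridge names are assumptions taken from this guide's example setup.
for br in br0 br1; do
  if is_bridge "$br"; then
    echo "$br: bridge found"
  else
    echo "$br: not a bridge on this host"
  fi
done
```

Run it on every KVM node, since the virtual network definition expects the same bridge name on each host.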

Bridged networks can operate in four different modes, depending on the additional traffic filtering performed by OpenNebula:

- Dummy Bridged: no filtering and no bridge setup (legacy no-op driver).

- Bridged: no filtering, but the bridge is managed by OpenNebula.

- Bridged with Security Groups: iptables rules are installed to implement security group rules.

- Bridged with ebtables VLAN: same as above, plus additional ebtables rules to isolate each Virtual Network at layer 2.

The focus of this article is the managed bridge without filtering.

Step 1: Log in to the Sunstone web interface

Open your OpenNebula Frontend web console and authenticate.

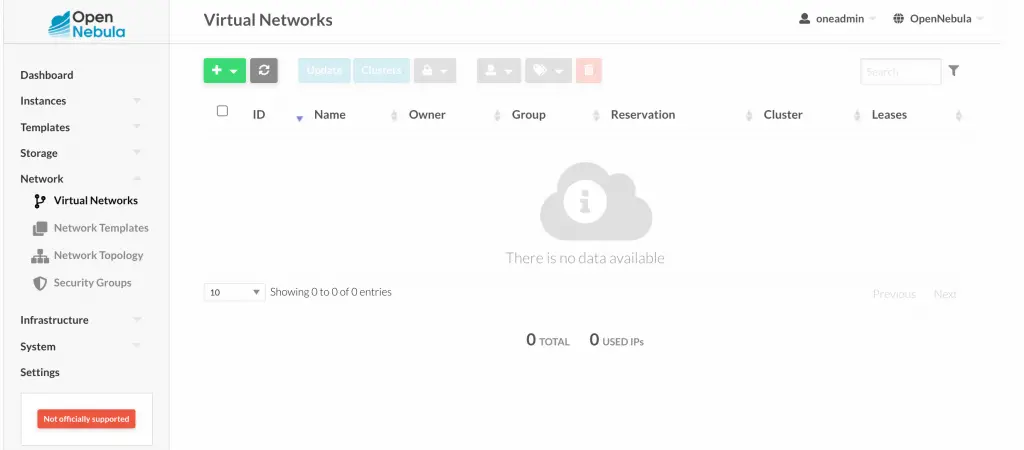

Step 2: Configure Bridged Networks in OpenNebula

Once logged in, navigate to “Network” > “Virtual Networks”.

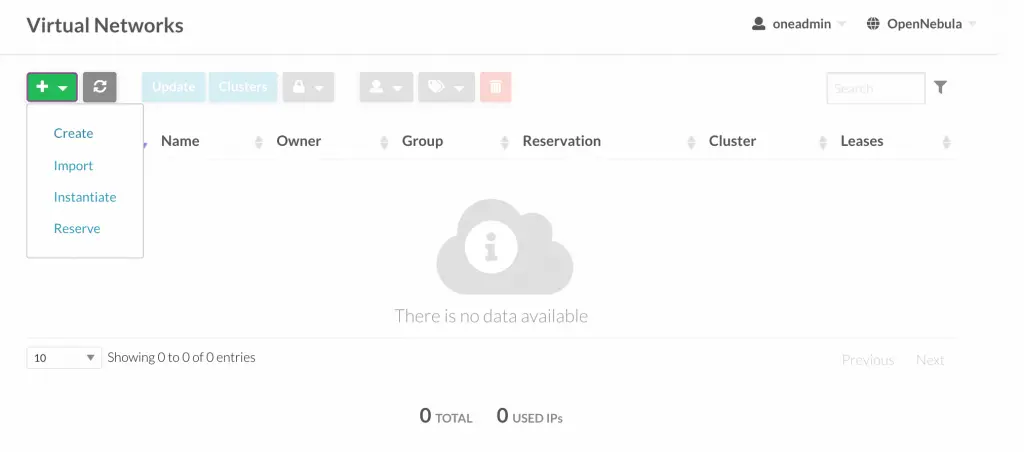

Choose the “Create” option.

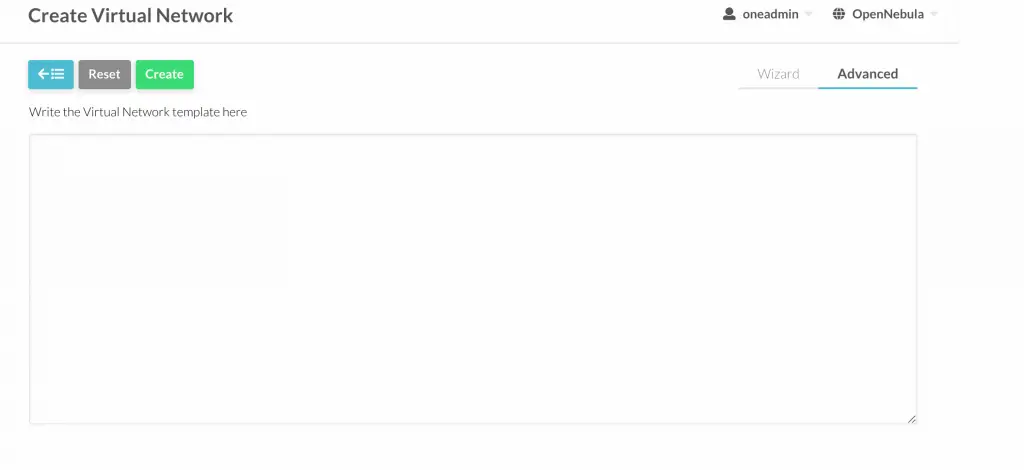

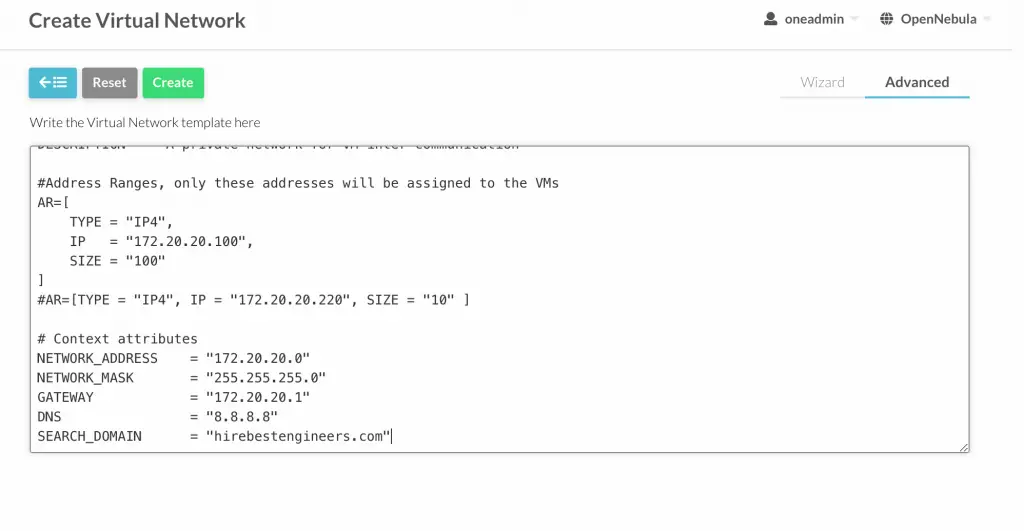

Then select “Advanced” in the right-hand corner.

Before you add the network configuration, confirm the network setup on your KVM virtualization nodes:

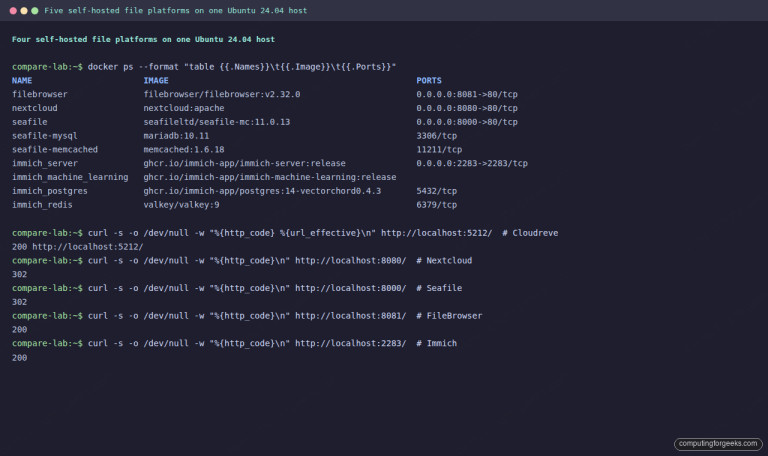

    # My setup
    $ ip -f inet a s
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000
        inet 127.0.0.1/8 scope host lo
           valid_lft forever preferred_lft forever
    8: br0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000
        inet 172.21.200.2/29 brd 172.21.200.255 scope global br0
           valid_lft forever preferred_lft forever
    9: br1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000
        inet 172.20.20.10/24 brd 172.20.20.255 scope global br1
           valid_lft forever preferred_lft forever

Edit and paste network configurations for your bridge.

    # Configuration attributes
    NAME = "Private"
    VN_MAD = "bridge"
    BRIDGE = br1
    DESCRIPTION = "A private network for VM inter-communication"

    # Address Ranges, only these addresses will be assigned to the VMs
    AR=[
        TYPE = "IP4",
        IP = "172.20.20.100",
        SIZE = "100"
    ]
    #AR=[TYPE = "IP4", IP = "172.20.20.220", SIZE = "10" ]

    # Context attributes
    NETWORK_ADDRESS = "172.20.20.0"
    NETWORK_MASK = "255.255.255.0"
    GATEWAY = "172.20.20.1"
    DNS = "8.8.8.8"
    SEARCH_DOMAIN = "example.com"

A Virtual Network definition consists of three different parts:

- The underlying physical network infrastructure that will support it, including the network driver.

- The logical address space available. Addresses associated with a Virtual Network can be IPv4, IPv6, dual stack IPv4-IPv6 or Ethernet.

- The guest configuration attributes used to set up the Virtual Machine network, which may include, for example, network masks, DNS servers or gateways.

The attributes used in the network configuration above are defined as follows.

Configuration attributes

- NAME: Name of the Virtual Network

- VN_MAD: The network driver to implement the network (802.1Q, ebtables, fw, ovswitch, vxlan, vcenter, bridge, dummy)

- BRIDGE: Name of the Linux bridge on the nodes

Address Range (AR)

- TYPE: IP4 – IPv4 Address Range

- IP: First IP in the range in dot notation

- SIZE: Number of addresses in this range (in my case, 100 addresses starting at 172.20.20.100, which gives 172.20.20.100 to 172.20.20.199)
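Because SIZE counts the first address too, the last address of a range is the first address plus SIZE minus one. The sketch below works this out in plain POSIX shell arithmetic. The function names `ip_to_int`, `int_to_ip` and `ar_last_ip` are illustrative helpers, not part of OpenNebula, and the code assumes well-formed IPv4 addresses without leading zeros.

```shell
# Convert a dotted IPv4 address to an integer.
# The function body runs in a subshell so the IFS change does not leak.
ip_to_int() (
  IFS=.
  set -- $1
  echo $(( $1 * 16777216 + $2 * 65536 + $3 * 256 + $4 ))
)

# Convert an integer back to dotted IPv4 notation.
int_to_ip() {
  echo "$(( $1 / 16777216 % 256 )).$(( $1 / 65536 % 256 )).$(( $1 / 256 % 256 )).$(( $1 % 256 ))"
}

# Last address of an address range: first address + SIZE - 1.
ar_last_ip() {
  first_int=$(ip_to_int "$1")
  int_to_ip $(( first_int + $2 - 1 ))
}

# The range defined in this guide: IP = 172.20.20.100, SIZE = 100.
ar_last_ip 172.20.20.100 100   # prints 172.20.20.199
```

The same helper shows that the commented-out second range (IP 172.20.20.220, SIZE 10) would end at 172.20.20.229, so the two ranges would not overlap.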

Contextualization Attributes

- NETWORK_ADDRESS: Base network address

- NETWORK_MASK: Network mask

- GATEWAY: Default gateway for the network

- DNS: DNS servers, a space-separated list of servers

- SEARCH_DOMAIN: Default search domains for DNS resolution

Confirm the configurations and hit the “Create” button.

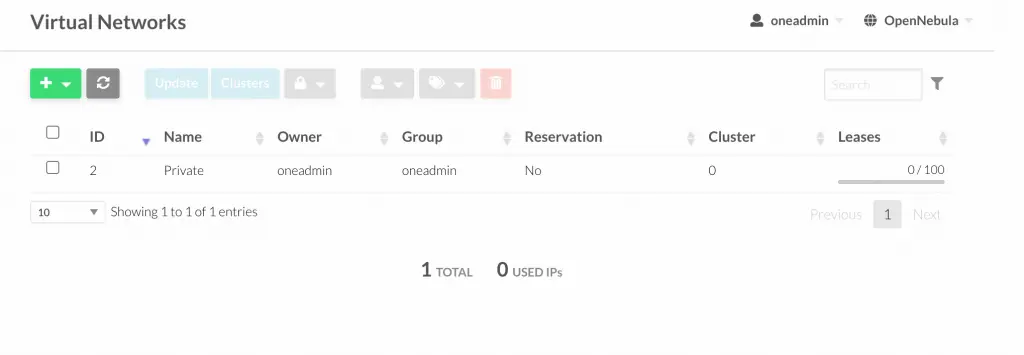

Once created, it will be visible under the “Virtual Networks” section.
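If you prefer the command line to Sunstone, the same template can be registered with the `onevnet` CLI. The following is a minimal sketch, assuming it runs on the OpenNebula frontend as a user allowed to create networks (typically `oneadmin`). The file name `private.net` and its location are arbitrary choices for illustration.

```shell
# Write the template used in this guide to a file (path is an arbitrary choice).
template="${TMPDIR:-/tmp}/private.net"
cat > "$template" <<'EOF'
NAME = "Private"
VN_MAD = "bridge"
BRIDGE = "br1"
DESCRIPTION = "A private network for VM inter-communication"
AR = [ TYPE = "IP4", IP = "172.20.20.100", SIZE = "100" ]
NETWORK_ADDRESS = "172.20.20.0"
NETWORK_MASK = "255.255.255.0"
GATEWAY = "172.20.20.1"
DNS = "8.8.8.8"
SEARCH_DOMAIN = "example.com"
EOF

# Register and list the network only where the OpenNebula CLI is installed.
if command -v onevnet >/dev/null 2>&1; then
  onevnet create "$template"
  onevnet list
else
  echo "onevnet not found; run this on the OpenNebula frontend" >&2
fi
```

Keeping the template in a file also makes it easy to version the network definition alongside the rest of your infrastructure configuration.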

The next task will be configuring storage and adding VM templates. Thereafter we should be ready to create Virtual Machines in our OpenNebula environment.

Other guides to read:

- How To Configure NFS Filesystem as OpenNebula Datastores

- Create CentOS|Ubuntu|Debian VM Templates on OpenNebula
