Amazon EKS supports multiple autoscaling mechanisms that adjust pod count, pod resources, and cluster node capacity based on real-time metrics. The four main tools – Horizontal Pod Autoscaler (HPA), Vertical Pod Autoscaler (VPA), Cluster Autoscaler, and Karpenter – work at different layers to keep workloads right-sized and infrastructure costs under control.

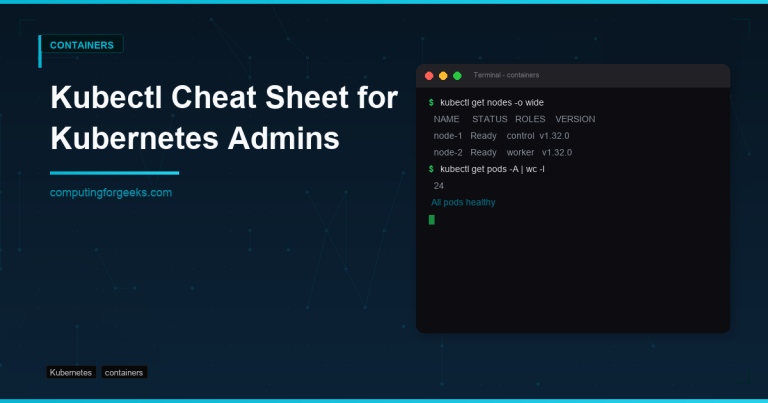

This guide covers installing and configuring each autoscaling component on an EKS cluster. We walk through Metrics Server setup, HPA and VPA configuration, Cluster Autoscaler deployment, Karpenter as a modern node provisioner, and custom metrics with Prometheus Adapter. Every step includes working kubectl commands and YAML manifests tested against EKS.

Prerequisites

Before starting, confirm you have the following in place:

- A running Amazon EKS cluster (version 1.28 or later recommended)

- kubectl configured and authenticated against your EKS cluster

- AWS CLI v2 installed and configured with IAM permissions for EKS, EC2 Auto Scaling, and IAM role management

- eksctl installed (used below for the OIDC provider and IRSA service accounts)

- Helm 3 installed for chart-based deployments

- An EKS node group with at least 2 nodes (t3.medium or larger) for testing autoscaling behavior

- IAM OIDC provider enabled on the cluster (required for IRSA-based service accounts)

Enable the OIDC provider if not already configured:

```bash
eksctl utils associate-iam-oidc-provider --cluster my-cluster --approve
```

Step 1: Install Metrics Server

The Metrics Server collects CPU and memory usage data from kubelets and exposes it through the Kubernetes metrics API. Both HPA and VPA depend on this data to make scaling decisions. Without Metrics Server, autoscaling based on resource utilization will not function.

Deploy Metrics Server using the official manifest:

```bash
kubectl apply -f https://github.com/kubernetes-sigs/metrics-server/releases/latest/download/components.yaml
```

Wait for the deployment to become ready:

```bash
kubectl wait --for=condition=available deployment/metrics-server -n kube-system --timeout=120s
```

The command returns when the Metrics Server pod is running and ready to serve requests:

```text
deployment.apps/metrics-server condition met
```

Verify that node metrics are being collected:

```bash
kubectl top nodes
```

You should see CPU and memory usage for each node in the cluster:

```text
NAME                                      CPU(cores)   CPU%   MEMORY(bytes)   MEMORY%
ip-10-0-1-45.eu-west-1.compute.internal   128m         6%     1024Mi          28%
ip-10-0-2-67.eu-west-1.compute.internal   96m          4%     890Mi           24%
```

If kubectl top nodes returns an error saying metrics are not available yet, wait about 60 seconds and try again. Metrics Server needs time to collect the first round of data from the kubelets.
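kubectl top nodes reports usage against each node's allocatable capacity, while the HPA Utilization targets used later compare pod usage with the pod's resource request. A minimal sketch of that arithmetic, assuming a single-container pod and millicore values such as those from kubectl top pods (cpu_utilization_pct is a hypothetical helper, not part of any tool):

```bash
#!/usr/bin/env bash
# HPA-style CPU utilization: pod usage as a percentage of the pod's CPU request.
# Hypothetical helper; both arguments are millicores.
cpu_utilization_pct() {
  local usage_m=$1 request_m=$2
  echo $(( usage_m * 100 / request_m ))
}

cpu_utilization_pct 100 200   # prints 50
cpu_utilization_pct 296 200   # prints 148
```

With the 200m request used for php-apache in Step 2, 296m of usage reads as 148% utilization even though it is a small fraction of a node.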

Step 2: Configure Horizontal Pod Autoscaler (HPA)

HPA automatically adjusts the number of pod replicas in a Deployment, ReplicaSet, or StatefulSet based on observed CPU utilization, memory usage, or custom metrics. It checks metrics every 15 seconds by default and scales up or down to maintain target utilization.
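Each check boils down to the ratio described in the Kubernetes HPA documentation: desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), skipped when the ratio is within a tolerance (0.1 by default). The sketch below models that for one utilization metric, assuming every pod is ready and reporting; the real controller also handles missing metrics, unready pods, and multiple metrics. hpa_desired_replicas is a hypothetical name.

```bash
#!/usr/bin/env bash
# Simplified HPA calculation for one utilization metric (hypothetical helper).
# Assumes all pods are ready and reporting metrics.
hpa_desired_replicas() {
  local current=$1 usage_pct=$2 target_pct=$3 min=$4 max=$5
  local diff=$(( usage_pct - target_pct ))
  (( diff < 0 )) && diff=$(( -diff ))
  local desired
  if (( diff * 10 <= target_pct )); then
    desired=$current   # |usage/target - 1| <= 0.1: inside the default tolerance
  else
    desired=$(( (current * usage_pct + target_pct - 1) / target_pct ))   # ceil
  fi
  (( desired < min )) && desired=$min   # clamp to minReplicas
  (( desired > max )) && desired=$max   # clamp to maxReplicas
  echo "$desired"
}

hpa_desired_replicas 2 150 50 1 10   # prints 6
hpa_desired_replicas 7 62 50 1 10    # prints 9
hpa_desired_replicas 4 52 50 1 10    # prints 4 (inside the tolerance band)
```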

First, create a sample deployment to test autoscaling:

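```bash
kubectl create deployment php-apache \
  --image=registry.k8s.io/hpa-example \
  --port=80

# kubectl create deployment has no --requests/--limits flags, so set them afterwards
kubectl set resources deployment php-apache \
  --requests=cpu=200m \
  --limits=cpu=500m
```

Expose the deployment as a service: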

```bash
kubectl expose deployment php-apache --port=80 --type=ClusterIP
```

Create an HPA that targets 50% CPU utilization and scales between 1 and 10 replicas:

```bash
kubectl autoscale deployment php-apache --cpu-percent=50 --min=1 --max=10
```

Check the HPA status to confirm it is active and reading metrics:

```bash
kubectl get hpa php-apache
```

The output shows the current CPU utilization, target, and replica count:

```text
NAME         REFERENCE               TARGETS   MINPODS   MAXPODS   REPLICAS   AGE
php-apache   Deployment/php-apache   0%/50%    1         10        1          30s
```

For more control, define the HPA in a YAML manifest with both CPU and memory targets. Create the file:

```bash
vi hpa-advanced.yaml
```

Add the following HPA specification with scaling behavior policies:

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: php-apache-advanced
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: php-apache
  minReplicas: 2
  maxReplicas: 20
  metrics:
  - type: Resource
    resource:
      name: cpu
      target:
        type: Utilization
        averageUtilization: 60
  - type: Resource
    resource:
      name: memory
      target:
        type: Utilization
        averageUtilization: 75
  behavior:
    scaleUp:
      stabilizationWindowSeconds: 30
      policies:
      - type: Percent
        value: 100
        periodSeconds: 60
    scaleDown:
      stabilizationWindowSeconds: 300
      policies:
      - type: Percent
        value: 10
        periodSeconds: 60
```

The behavior section controls scaling speed. Scale-up uses a 30-second stabilization window and may double the pod count every 60 seconds, so the deployment responds quickly to traffic spikes. Scale-down is conservative: at most a 10% reduction per minute, after a 5-minute stabilization window that prevents flapping.
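To see what those policies allow, here is a rough model of how a single Percent policy caps one 60-second period. It is a sketch, not the controller's code: the integer rounding approximates upstream behavior, and the real HPA applies these caps to whatever replica count the metrics recommend. The function names are hypothetical.

```bash
#!/usr/bin/env bash
# Rough model of one Percent policy period; rounding approximates the controller.

# Highest replica count a scale-up Percent policy allows at the end of a period
scale_up_cap() {
  local start=$1 pct=$2
  echo $(( (start * (100 + pct) + 99) / 100 ))   # ceil(start * (1 + pct/100))
}

# Lowest replica count a scale-down Percent policy allows at the end of a period
scale_down_floor() {
  local start=$1 pct=$2
  echo $(( start * (100 - pct) / 100 ))          # truncates toward zero
}

# scaleUp policy above: Percent 100 per 60 s
replicas=2
for minute in 1 2 3; do
  replicas=$(scale_up_cap "$replicas" 100)
  echo "scale-up, minute $minute: at most $replicas replicas"
done

# scaleDown policy above: Percent 10 per 60 s
replicas=20
for minute in 1 2 3; do
  replicas=$(scale_down_floor "$replicas" 10)
  echo "scale-down, minute $minute: at least $replicas replicas"
done
```

With these settings the scale-up ceiling rises from 2 to 4, 8, and 16 over three minutes, while a scale-down from 20 replicas can only reach 18, 16, and 14.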

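The memory target only works if the container declares a memory request, and the php-apache deployment above requests CPU only. Add a memory request (128Mi here is an example value), then apply the manifest:

```bash
kubectl set resources deployment php-apache --requests=cpu=200m,memory=128Mi
kubectl apply -f hpa-advanced.yaml
```

This HPA targets the same php-apache deployment as the HPA created with kubectl autoscale, and two HPAs scaling one workload conflict. Step 3 watches the php-apache HPA, so remove the advanced one before load testing with kubectl delete -f hpa-advanced.yaml.

Step 3: Test HPA with Load Generation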

Generate artificial CPU load against the php-apache service to trigger HPA scaling. Run a load generator pod in a separate terminal:

```bash
kubectl run load-generator --image=busybox:1.36 --restart=Never -- \
  /bin/sh -c "while true; do wget -q -O- http://php-apache; done"
```

Watch the HPA respond to the increased CPU utilization:

```bash
kubectl get hpa php-apache --watch
```

Within 1-2 minutes, CPU utilization rises above the 50% target and HPA begins adding replicas:

```text
NAME         REFERENCE               TARGETS    MINPODS   MAXPODS   REPLICAS   AGE
php-apache   Deployment/php-apache   0%/50%     1         10        1          2m
php-apache   Deployment/php-apache   148%/50%   1         10        1          2m30s
php-apache   Deployment/php-apache   148%/50%   1         10        4          3m
php-apache   Deployment/php-apache   62%/50%    1         10        7          4m
```

Stop the load generator when you are done testing:

```bash
kubectl delete pod load-generator
```

After the load stops, HPA waits out the scale-down stabilization window (5 minutes by default) and then reduces the replicas back to the minimum.
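The delay comes from the scale-down stabilization window: when scaling down, the HPA acts on the highest replica recommendation it computed during the window (300 seconds by default). A simplified model of that rule, with hypothetical recommendation history written as seconds_ago:replicas:

```bash
#!/usr/bin/env bash
# Simplified scale-down stabilization (hypothetical helper): keep the highest
# recommendation seen within the last $window seconds.
# Each argument after the window is "<seconds_ago>:<recommended_replicas>".
stabilized_scale_down() {
  local window=$1; shift
  local best=0 entry age reps
  for entry in "$@"; do
    age=${entry%%:*}
    reps=${entry##*:}
    if (( age <= window && reps > best )); then
      best=$reps
    fi
  done
  echo "$best"
}

# 7 was recommended 290 s ago, still inside the window, so HPA stays at 7
stabilized_scale_down 300 "290:7" "200:4" "60:1" "10:1"   # prints 7
# Once that entry ages out, the next-highest recommendation (4) applies
stabilized_scale_down 300 "400:7" "200:4" "60:1" "10:1"   # prints 4
```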

Step 4: Configure Vertical Pod Autoscaler (VPA)

VPA adjusts CPU and memory requests for individual pods based on historical usage patterns. Instead of adding more replicas (HPA), VPA right-sizes each pod. This is useful for workloads with variable resource needs – databases, batch jobs, and applications that are hard to scale horizontally.

Install VPA using the official repository:

```bash
git clone https://github.com/kubernetes/autoscaler.git
cd autoscaler/vertical-pod-autoscaler
./hack/vpa-up.sh
```

Verify that all three VPA components are running:

```bash
kubectl get pods -n kube-system | grep vpa
```

You should see the admission controller, recommender, and updater pods in Running state:

```text
vpa-admission-controller-6b9d45c5f7-x2k4m   1/1   Running   0   45s
vpa-recommender-7c8b5d6f99-n8j2l            1/1   Running   0   45s
vpa-updater-5d4c8b7f68-p3m9r                1/1   Running   0   45s
```

Create a VPA policy for a deployment. Open the file:

```bash
vi vpa-policy.yaml
```

Add the following VPA configuration that sets resource boundaries and update mode:

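```yaml
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: php-apache-vpa
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: php-apache
  updatePolicy:
    # Off: recommendations only, because php-apache already has a CPU-based HPA
    updateMode: "Off"
  resourcePolicy:
    containerPolicies:
    - containerName: hpa-example
      minAllowed:
        cpu: 50m
        memory: 64Mi
      maxAllowed:
        cpu: 2000m
        memory: 2Gi
      controlledResources: ["cpu", "memory"]
```

The update mode is "Off" here because php-apache is already scaled by an HPA on CPU, and automated VPA changes to the same deployment would conflict with it. The updateMode has three main options: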

- Off – VPA only provides recommendations and does not apply changes (good for initial observation)

- Auto – VPA evicts and recreates pods with updated resource requests

- Initial – VPA sets resources only at pod creation, never updates running pods

Apply the VPA policy:

```bash
kubectl apply -f vpa-policy.yaml
```

Check VPA recommendations after a few minutes of pod activity:

```bash
kubectl describe vpa php-apache-vpa
```

The recommendation section shows suggested CPU and memory values based on observed usage:

```text
Recommendation:
  Container Recommendations:
    Container Name:  hpa-example
    Lower Bound:
      Cpu:     25m
      Memory:  52428800
    Target:
      Cpu:     100m
      Memory:  104857600
    Upper Bound:
      Cpu:     400m
      Memory:  209715200
```

Do not run HPA and VPA on the same metric (CPU or memory) for the same deployment, because they will conflict. Use HPA to scale the replica count on CPU and VPA to right-size memory requests, or run VPA in “Off” mode alongside HPA to get recommendations without automated changes.
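kubectl describe vpa prints memory recommendations in bytes. To turn a recommendation into a value for a requests block, divide by 1,048,576 bytes per MiB; a small hypothetical helper that rounds up:

```bash
#!/usr/bin/env bash
# Convert a VPA memory recommendation from bytes to MiB, rounding up
# (hypothetical helper; 1 MiB = 1048576 bytes).
bytes_to_mi() {
  local bytes=$1
  echo "$(( (bytes + 1048575) / 1048576 ))Mi"
}

bytes_to_mi 52428800    # prints 50Mi  (Lower Bound)
bytes_to_mi 104857600   # prints 100Mi (Target)
bytes_to_mi 209715200   # prints 200Mi (Upper Bound)
```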

Step 5: Install Cluster Autoscaler

Cluster Autoscaler adds or removes EC2 nodes from your EKS node group when pods cannot be scheduled due to insufficient resources, or when nodes are underutilized. It works with EC2 Auto Scaling Groups that back your managed or self-managed node groups.
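Scale-down hinges on node utilization: Cluster Autoscaler treats a node as unneeded when the resources requested by its pods fall below a utilization threshold (0.5 by default, set with --scale-down-utilization-threshold) and its pods can run elsewhere. A CPU-only sketch of the threshold check, with a hypothetical allocatable value; the real check also considers memory and whether the pods can be rescheduled:

```bash
#!/usr/bin/env bash
# CPU-only model of Cluster Autoscaler's utilization check (hypothetical helper).
# Succeeds when requested CPU is below threshold_pct of allocatable CPU.
is_scale_down_candidate() {
  local requested_m=$1 allocatable_m=$2 threshold_pct=${3:-50}
  (( requested_m * 100 < allocatable_m * threshold_pct ))
}

# Hypothetical node with 2000m allocatable CPU
for requested in 600 1400; do
  if is_scale_down_candidate "$requested" 2000; then
    echo "${requested}m requested: scale-down candidate"
  else
    echo "${requested}m requested: stays"
  fi
done
```

A candidate node still has to stay below the threshold for the scale-down-unneeded-time period (5 minutes in the Helm values used in Step 6) before it is removed.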

Create an IAM policy that grants Cluster Autoscaler the permissions it needs. Save the policy document:

```bash
vi cluster-autoscaler-policy.json
```

Add the following IAM policy that allows describing and modifying Auto Scaling Groups and EC2 instances:

```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "autoscaling:DescribeAutoScalingGroups",
        "autoscaling:DescribeAutoScalingInstances",
        "autoscaling:DescribeLaunchConfigurations",
        "autoscaling:DescribeScalingActivities",
        "autoscaling:DescribeTags",
        "autoscaling:SetDesiredCapacity",
        "autoscaling:TerminateInstanceInAutoScalingGroup",
        "ec2:DescribeLaunchTemplateVersions",
        "ec2:DescribeInstanceTypes",
        "ec2:DescribeImages",
        "ec2:GetInstanceTypesFromInstanceRequirements",
        "eks:DescribeNodegroup"
      ],
      "Resource": "*"
    }
  ]
}
```

Create the IAM policy and note the ARN returned:

```bash
aws iam create-policy \
  --policy-name AmazonEKSClusterAutoscalerPolicy \
  --policy-document file://cluster-autoscaler-policy.json
```

Create a Kubernetes service account with the IAM role attached using IRSA (IAM Roles for Service Accounts). Replace the account ID and cluster name with your values:

```bash
eksctl create iamserviceaccount \
  --cluster=my-cluster \
  --namespace=kube-system \
  --name=cluster-autoscaler \
  --attach-policy-arn=arn:aws:iam::111122223333:policy/AmazonEKSClusterAutoscalerPolicy \
  --override-existing-serviceaccounts \
  --approve
```

Step 6: Configure and Deploy Cluster Autoscaler

Deploy Cluster Autoscaler using the official Helm chart. Add the autoscaler Helm repository:

```bash
helm repo add autoscaler https://kubernetes.github.io/autoscaler
helm repo update
```

Install Cluster Autoscaler with EKS-specific configuration. Replace my-cluster and eu-west-1 with your cluster name and region:

```bash
helm install cluster-autoscaler autoscaler/cluster-autoscaler \
  --namespace kube-system \
  --set autoDiscovery.clusterName=my-cluster \
  --set awsRegion=eu-west-1 \
  --set rbac.serviceAccount.create=false \
  --set rbac.serviceAccount.name=cluster-autoscaler \
  --set extraArgs.balance-similar-node-groups=true \
  --set extraArgs.skip-nodes-with-system-pods=false \
  --set extraArgs.expander=least-waste \
  --set extraArgs.scale-down-delay-after-add=5m \
  --set extraArgs.scale-down-unneeded-time=5m
```

Key configuration flags explained:

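- balance-similar-node-groups – distributes nodes evenly across node groups with similar instance types for better AZ balance

- skip-nodes-with-system-pods=false – lets Cluster Autoscaler remove nodes that run kube-system pods (other than DaemonSet pods), which it otherwise never scales down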

- expander=least-waste – selects the node group that will have the least idle resources after scale-up

- scale-down-delay-after-add – waits 5 minutes after a scale-up before considering scale-down, preventing rapid oscillation

- scale-down-unneeded-time – a node must be underutilized for 5 continuous minutes before removal
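The least-waste expander can be pictured with a CPU-only model: for each candidate node group, count the nodes needed for the pending requests and keep the group that leaves the least idle CPU. This is a simplification: the real expander also weighs memory and checks that each pod fits on a node, and the node group names and sizes below are hypothetical.

```bash
#!/usr/bin/env bash
# CPU-only model of the least-waste expander (hypothetical helper).
# Candidates are "<node_group>:<allocatable_millicores_per_node>"; pending
# requests are treated as divisible, so per-pod fit is not checked.
least_waste() {
  local pending_m=$1; shift
  local best="" best_waste=-1 cand name alloc nodes waste
  for cand in "$@"; do
    name=${cand%%:*}
    alloc=${cand##*:}
    nodes=$(( (pending_m + alloc - 1) / alloc ))   # nodes needed for the pending pods
    waste=$(( nodes * alloc - pending_m ))         # CPU left idle after scale-up
    if (( best_waste < 0 || waste < best_waste )); then
      best=$name
      best_waste=$waste
    fi
  done
  echo "$best"
}

# 3000m of pending requests; node group names and sizes are hypothetical
least_waste 3000 "ng-large:2000" "ng-xlarge:4000" "ng-small:1500"   # prints ng-small
```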

Verify the Cluster Autoscaler pod is running:

```bash
kubectl get pods -n kube-system -l app.kubernetes.io/name=aws-cluster-autoscaler
```

Check the autoscaler logs to confirm it discovered your node groups:

```bash
kubectl logs -n kube-system -l app.kubernetes.io/name=aws-cluster-autoscaler --tail=20
```

The logs should show the autoscaler discovering your Auto Scaling Groups and monitoring them for pending pods. Discovery only finds groups that carry the tags described next, so if no groups appear, check those tags first.

Tag your EKS node group Auto Scaling Groups so Cluster Autoscaler can discover them automatically. These tags are required:

```bash
aws autoscaling create-or-update-tags --tags \
  ResourceId=eks-nodegroup-xxxxxxxx,ResourceType=auto-scaling-group,Key=k8s.io/cluster-autoscaler/my-cluster,Value=owned,PropagateAtLaunch=true \
  ResourceId=eks-nodegroup-xxxxxxxx,ResourceType=auto-scaling-group,Key=k8s.io/cluster-autoscaler/enabled,Value=true,PropagateAtLaunch=true
```

If the node group is an EKS managed node group (the eksctl default), EKS applies these tags automatically. You can verify which Auto Scaling Groups carry them with:

```bash
aws autoscaling describe-auto-scaling-groups \
  --query "AutoScalingGroups[?Tags[?Key=='k8s.io/cluster-autoscaler/enabled']].AutoScalingGroupName" \
  --output text
```

Step 7: Install Karpenter (Modern Alternative)

Karpenter is a newer node provisioning tool built by AWS that offers a faster, more flexible alternative to Cluster Autoscaler. Instead of relying on pre-defined Auto Scaling Groups, Karpenter provisions EC2 instances directly, choosing an instance type and size that fits the pending pods. It typically reacts to unschedulable pods within seconds rather than minutes.

Set up environment variables for the Karpenter installation. Replace these with your actual values:

```bash
export KARPENTER_NAMESPACE="kube-system"
export KARPENTER_VERSION="1.1.1"
export CLUSTER_NAME="my-cluster"
export AWS_DEFAULT_REGION="eu-west-1"
export AWS_ACCOUNT_ID="$(aws sts get-caller-identity --query Account --output text)"
export TEMPOUT="$(mktemp)"
```

Create the Karpenter IAM resources (node role and controller policy) and the interruption queue using the CloudFormation template provided by the project:

```bash
curl -fsSL "https://raw.githubusercontent.com/aws/karpenter-provider-aws/v${KARPENTER_VERSION}/website/content/en/preview/getting-started/getting-started-with-karpenter/cloudformation.yaml" > "${TEMPOUT}" \
  && aws cloudformation deploy \
  --stack-name "Karpenter-${CLUSTER_NAME}" \
  --template-file "${TEMPOUT}" \
  --capabilities CAPABILITY_NAMED_IAM \
  --parameter-overrides "ClusterName=${CLUSTER_NAME}"
```

Create the IRSA service account for Karpenter:

```bash
eksctl create iamserviceaccount \
  --cluster="${CLUSTER_NAME}" \
  --name=karpenter \
  --namespace="${KARPENTER_NAMESPACE}" \
  --role-name="${CLUSTER_NAME}-karpenter" \
  --attach-policy-arn="arn:aws:iam::${AWS_ACCOUNT_ID}:policy/KarpenterControllerPolicy-${CLUSTER_NAME}" \
  --override-existing-serviceaccounts \
  --approve
```

Install Karpenter via Helm:

```bash
# Reuse the karpenter service account created by eksctl instead of letting the chart create one
helm install karpenter oci://public.ecr.aws/karpenter/karpenter \
  --version "${KARPENTER_VERSION}" \
  --namespace "${KARPENTER_NAMESPACE}" \
  --set serviceAccount.create=false \
  --set serviceAccount.name=karpenter \
  --set "settings.clusterName=${CLUSTER_NAME}" \
  --set "settings.interruptionQueue=${CLUSTER_NAME}" \
  --set controller.resources.requests.cpu=1 \
  --set controller.resources.requests.memory=1Gi \
  --set controller.resources.limits.cpu=1 \
  --set controller.resources.limits.memory=1Gi \
  --wait
```

Verify Karpenter is running:

```bash
kubectl get pods -n kube-system -l app.kubernetes.io/name=karpenter
```

Each Karpenter controller pod should show Running status:

```text
NAME                         READY   STATUS    RESTARTS   AGE
karpenter-6f4b8d9c7f-x8k2n   1/1     Running   0          60s
```

Create a NodePool that defines which instance types Karpenter can provision, along with the EC2NodeClass it references. Open the file:

```bash
vi karpenter-nodepool.yaml
```

Add the following NodePool and EC2NodeClass configuration:

```yaml
apiVersion: karpenter.sh/v1
kind: NodePool
metadata:
  name: default
spec:
  template:
    spec:
      requirements:
        - key: kubernetes.io/arch
          operator: In
          values: ["amd64"]
        - key: karpenter.sh/capacity-type
          operator: In
          values: ["on-demand", "spot"]
        - key: karpenter.k8s.aws/instance-category
          operator: In
          values: ["c", "m", "r"]
        - key: karpenter.k8s.aws/instance-generation
          operator: Gt
          values: ["5"]
      nodeClassRef:
        group: karpenter.k8s.aws
        kind: EC2NodeClass
        name: default
  limits:
    cpu: "100"
    memory: 400Gi
  disruption:
    consolidationPolicy: WhenEmptyOrUnderutilized
    consolidateAfter: 1m
---
apiVersion: karpenter.k8s.aws/v1
kind: EC2NodeClass
metadata:
  name: default
spec:
  amiSelectorTerms:
    - alias: al2023@latest
  subnetSelectorTerms:
    - tags:
        karpenter.sh/discovery: my-cluster
  securityGroupSelectorTerms:
    - tags:
        karpenter.sh/discovery: my-cluster
  role: "KarpenterNodeRole-my-cluster"
```

This configuration lets Karpenter choose from compute-optimized (c), general-purpose (m), and memory-optimized (r) instance families of generation 6 and newer, on both On-Demand and Spot capacity, with a limit of 100 vCPUs and 400 GiB of memory across all nodes in this NodePool. The WhenEmptyOrUnderutilized policy removes empty nodes and deletes or replaces underutilized nodes with cheaper ones, after a one-minute consolidateAfter delay. The EC2NodeClass finds subnets and security groups through the karpenter.sh/discovery: my-cluster tag, so those resources must carry that tag, and the KarpenterNodeRole-my-cluster role must be allowed to join the cluster (for example through an EKS access entry) before Karpenter can launch nodes.
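As a sanity check on the requirements, the sketch below models only the instance-category and instance-generation rules against EC2 instance type names. It parses names by convention (family letters, then generation digits), which is an assumption; Karpenter itself reads these values from its instance type data, and the architecture and capacity-type requirements are not modeled. nodepool_allows is a hypothetical name.

```bash
#!/usr/bin/env bash
# Models instance-category In [c, m, r] and instance-generation Gt 5 only
# (hypothetical helper; parses the instance type name by convention).
nodepool_allows() {
  local family=${1%%.*}                 # "m6i.large" -> "m6i"
  local category=${family%%[0-9]*}      # leading letters -> "m"
  local rest=${family#"$category"}      # "6i"
  local generation=${rest%%[!0-9]*}     # leading digits -> "6"
  [[ -n $generation ]] || return 1
  case $category in
    c|m|r) ;;
    *) return 1 ;;
  esac
  (( generation > 5 ))
}

for type in m6i.large c7i.xlarge r5.large t3.medium; do
  if nodepool_allows "$type"; then
    echo "$type: allowed"
  else
    echo "$type: rejected"
  fi
done
```

Under these two rules m6i.large and c7i.xlarge qualify, while r5.large (generation 5) and t3.medium (category t) are rejected.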

Apply the NodePool:

```bash
kubectl apply -f karpenter-nodepool.yaml
```

Step 8: Custom Metrics with Prometheus Adapter

HPA supports custom metrics beyond CPU and memory. Using Prometheus with the Prometheus Adapter, you can autoscale based on application-specific metrics like HTTP requests per second, queue depth, or active connections.

This step assumes you already have Prometheus running in your cluster. If not, deploy it first using the kube-prometheus-stack Helm chart.

Install the Prometheus Adapter:

```bash
helm repo add prometheus-community https://prometheus-community.github.io/helm-charts
helm repo update
```

Create a values file for the adapter. Open it:

```bash
vi prometheus-adapter-values.yaml
```

Add the following configuration that maps Prometheus metrics to the Kubernetes custom metrics API:

```yaml
prometheus:
  url: http://kube-prometheus-stack-prometheus.monitoring.svc
  port: 9090
rules:
  default: false
  custom:
  - seriesQuery: 'http_requests_total{namespace!="",pod!=""}'
    resources:
      overrides:
        namespace: {resource: "namespace"}
        pod: {resource: "pod"}
    name:
      matches: "^(.*)_total$"
      as: "${1}_per_second"
    # Aggregate per pod so each pod maps to exactly one series
    metricsQuery: 'sum(rate(<<.Series>>{<<.LabelMatchers>>}[2m])) by (<<.GroupBy>>)'
  - seriesQuery: 'nginx_connections_active{namespace!="",pod!=""}'
    resources:
      overrides:
        namespace: {resource: "namespace"}
        pod: {resource: "pod"}
    name:
      as: "nginx_active_connections"
    metricsQuery: 'avg(<<.Series>>{<<.LabelMatchers>>}) by (<<.GroupBy>>)'
```

The first rule converts the http_requests_total counter into an http_requests_per_second rate metric, computed per pod over a 2-minute window. The second rule exposes active Nginx connections as the nginx_active_connections custom metric. Both become available through the Kubernetes custom metrics API.
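The first rule's name mapping and the rate it computes can be approximated in a few lines. This is a rough sketch: approx_rate ignores the counter-reset handling and extrapolation that PromQL rate() performs, and both function names are hypothetical.

```bash
#!/usr/bin/env bash
# Mimics the rule's name mapping: "^(.*)_total$" -> "${1}_per_second".
adapter_metric_name() {
  local re='^(.*)_total$'
  if [[ $1 =~ $re ]]; then
    echo "${BASH_REMATCH[1]}_per_second"
  else
    echo "$1"
  fi
}

# Per-second increase of a counter between two samples window_s seconds apart.
approx_rate() {
  local start=$1 end=$2 window_s=$3
  echo $(( (end - start) / window_s ))
}

adapter_metric_name http_requests_total   # prints http_requests_per_second
approx_rate 10000 34000 120               # prints 200 (24000 requests over 2 minutes)
```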

Install the adapter with the custom values:

```bash
helm install prometheus-adapter prometheus-community/prometheus-adapter \
  --namespace monitoring \
  --values prometheus-adapter-values.yaml
```

Verify the custom metrics are registered:

```bash
kubectl get --raw "/apis/custom.metrics.k8s.io/v1beta1" | python3 -m json.tool | head -30
```

Create an HPA that uses the custom requests-per-second metric. Open the file:

```bash
vi hpa-custom-metrics.yaml
```

Add the following HPA specification targeting 100 requests per second per pod:

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: webapp-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: webapp
  minReplicas: 2
  maxReplicas: 30
  metrics:
  - type: Pods
    pods:
      metric:
        name: http_requests_per_second
      target:
        type: AverageValue
        averageValue: "100"
  - type: Resource
    resource:
      name: cpu
      target:
        type: Utilization
        averageUtilization: 70
```

This HPA scales on both custom metrics (HTTP request rate) and built-in CPU metrics. The highest scaling recommendation from either metric wins – if request rate demands 8 replicas but CPU only needs 4, HPA scales to 8.
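The 8-versus-4 example above can be reproduced with the same ceil(current × observed / target) ratio the HPA applies per metric. A simplified sketch, assuming 4 current replicas averaging 200 requests per second and 70% CPU (hypothetical figures), with the default tolerance ignored:

```bash
#!/usr/bin/env bash
# Per-metric replica count: ceil(current * observed / target).
# The same ratio covers a Pods metric (AverageValue) and a Resource metric (Utilization).
metric_replicas() {
  local current=$1 observed=$2 target=$3
  echo $(( (current * observed + target - 1) / target ))
}

current=4
by_rps=$(metric_replicas "$current" 200 100)   # 200 req/s per pod vs 100 target
by_cpu=$(metric_replicas "$current" 70 70)     # 70% CPU vs 70% target
# With several metrics, the HPA uses the largest proposal
desired=$(( by_rps > by_cpu ? by_rps : by_cpu ))
echo "requests/s proposes $by_rps, CPU proposes $by_cpu, HPA scales to $desired"
```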

Apply the custom metrics HPA:

```bash
kubectl apply -f hpa-custom-metrics.yaml
```

Step 9: EKS Autoscaling Best Practices

After setting up the autoscaling components, follow these operational practices to keep scaling reliable and cost-effective.

Set resource requests on every pod

HPA, VPA, and node autoscalers all depend on resource requests to make decisions. The scheduler treats a pod without CPU and memory requests as requesting nothing, so it can overpack nodes, and HPA cannot compute utilization for it. Always define both requests and limits:

```yaml
resources:
  requests:
    cpu: 100m
    memory: 128Mi
  limits:
    cpu: 500m
    memory: 512Mi
```

Use Pod Disruption Budgets

When Cluster Autoscaler or Karpenter removes nodes, Pod Disruption Budgets (PDBs) prevent too many pods from going down simultaneously. Create a PDB for every production deployment:

```yaml
apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: webapp-pdb
spec:
  minAvailable: "50%"
  selector:
    matchLabels:
      app: webapp
```

Separate Karpenter and Cluster Autoscaler

Do not run Karpenter and Cluster Autoscaler on the same node groups. If you are migrating from Cluster Autoscaler to Karpenter, keep a small managed node group, managed by Cluster Autoscaler, for system components such as CoreDNS and Karpenter itself, and let Karpenter handle the nodes for application workloads.

Monitor autoscaling events

Set up CloudWatch logging for your EKS cluster and monitor autoscaling events. Watch for common issues like:

- FailedScaleUp – Cluster Autoscaler could not add nodes, usually due to EC2 capacity limits or IAM permissions

- ScaleDownDisabled – annotations or PDBs are blocking node removal

- FailedGetResourceMetric – HPA cannot read metrics from Metrics Server, check the metrics-server pods

Use this command to check recent autoscaling events across the cluster:

```bash
kubectl get events --field-selector reason=ScalingReplicaSet --sort-by='.lastTimestamp' -A
```

Use Spot instances for non-critical workloads

Both Karpenter and Cluster Autoscaler support Spot instances. Use Spot for batch processing, development environments, and stateless web frontends. Keep databases, stateful workloads, and system components on On-Demand instances. With Karpenter, the NodePool configuration above already includes Spot in the capacity-type values.

Right-size before autoscaling

Run VPA in “Off” mode for a week on existing workloads before enabling HPA or auto-mode VPA. Review the recommendations to set accurate baseline resource requests. Autoscaling on top of poorly sized pods wastes resources and money.
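The cost of skipping this step is easy to estimate: every millicore requested above what a pod actually uses stays reserved on a node, and that excess is multiplied by every replica HPA adds. A back-of-the-envelope helper with hypothetical figures:

```bash
#!/usr/bin/env bash
# CPU reserved by requests but not used, given a VPA Target recommendation.
# Hypothetical helper; all values in millicores.
idle_reserved_m() {
  local request_m=$1 vpa_target_m=$2 replicas=$3
  local excess=$(( request_m - vpa_target_m ))
  (( excess < 0 )) && excess=0
  echo $(( excess * replicas ))
}

# 500m requested, VPA Target 100m, 10 replicas: 4000m (4 vCPU) reserved but idle
idle_reserved_m 500 100 10
```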

Conclusion

We covered the EKS autoscaling stack: Metrics Server for resource data collection, HPA for horizontal pod scaling, VPA for vertical pod right-sizing, Cluster Autoscaler for EC2 node group management, and Karpenter as a faster node provisioner. Custom metrics with Prometheus Adapter extend HPA beyond basic CPU and memory to application-specific metrics like request rates.

For production clusters, combine HPA with either Cluster Autoscaler or Karpenter (not both on the same node groups), set resource requests on every pod, and use Pod Disruption Budgets to maintain availability during scale-down events. Monitor autoscaling behavior through CloudWatch and Kubernetes events to catch misconfigurations early.