You deployed to EKS with kubectl apply, then shell scripts, then a CI job that ran helm upgrade from a Jenkins runner with hardcoded kubeconfig. The cluster drifted. Someone patched a Deployment live at 3am and nobody remembered why. Rollbacks meant git log detective work and hoping the CI runner still had the old artifacts. GitOps fixes this by flipping the direction of deployment. Instead of CI pushing into the cluster, the cluster pulls from Git and reconciles itself. Argo CD is the tool most EKS teams land on, and it pairs especially well with AWS because it runs inside the cluster and can authenticate to everything else using IRSA or Pod Identity instead of long-lived IAM keys.

This guide walks through a tested Argo CD install on Amazon EKS 1.33 using the official Helm chart, the AWS Load Balancer Controller ingress pattern (including the --insecure gotcha most tutorials miss), your first raw-manifest Application, a Helm chart Application, ApplicationSet with a list generator, real sync waves with PreSync and PostSync hooks, multi-cluster GitOps to a second EKS cluster, RBAC with AppProjects, Slack notifications, and the full troubleshooting playbook with exact error strings we hit during testing. Everything here was run on a live cluster, not copied from docs.

Tested April 2026 on Amazon EKS 1.33 with Argo CD v3.3.6 (argo-cd Helm chart 9.5.0) and the AWS Load Balancer Controller.

Argo CD vs Flux vs Raw Helm: Decision Matrix

Before the install, a quick sanity check on whether Argo CD is actually what you want. Three real options cover 95% of EKS teams.

| Dimension | Argo CD | Flux CD | Raw Helm + CI |
|---|---|---|---|
| Delivery model | Pull (agent in cluster) | Pull (agent in cluster) | Push (CI runner into cluster) |
| Web UI | First-class, included | None (Weave GitOps separate) | None |
| Learning curve | Moderate, UI softens it | Steep, CLI and CRDs only | Low for devs, high ops cost |
| Multi-cluster | Native, single control plane | Per-cluster agents | Per-cluster pipelines |
| Helm support | Rendered by repo-server | HelmRelease CRD | Native |
| Drift detection | Continuous, visual diff | Continuous, log-based | None, drifts silently |
| RBAC | AppProject + Casbin CSV | Kubernetes RBAC only | CI runner permissions |
| Best for | Platform teams, many apps, many clusters | GitOps purists, Kustomize shops | Single small cluster, one team |

Argo CD wins when you have a platform team supporting many application teams on one or more EKS clusters. Flux wins when you want a lighter footprint and live entirely in YAML and CLI. Raw Helm from a pipeline is fine until it isn’t, which is usually the day the first drift incident happens in production. This guide is an Argo CD guide because that’s what the majority of EKS teams end up picking.

Quick Start TL;DR

Returning visitors who already read the explanation can copy these five commands and have Argo CD running in under three minutes on any EKS cluster.

```bash
helm repo add argo https://argoproj.github.io/argo-helm
helm repo update argo
helm install argocd argo/argo-cd --namespace argocd --create-namespace --version 9.5.0 --wait
kubectl -n argocd port-forward svc/argocd-server 8080:80 &
kubectl -n argocd get secret argocd-initial-admin-secret -o jsonpath="{.data.password}" | base64 -d
```

Browse to http://localhost:8080, log in as admin with the printed password, and you’re in. The server redirects to HTTPS with a self-signed certificate until you enable --insecure (covered in the Ingress section), so expect a browser warning. The rest of this article covers everything you’d want to do next: production ingress, real applications, ApplicationSets, multi-cluster, sync waves, RBAC, notifications, troubleshooting, and security hardening.

How Argo CD Works: The Architecture

Argo CD is not a single binary. The chart installs eight components in the argocd namespace, each with a specific job. Understanding which pod does what saves hours when something breaks.

- argocd-server exposes the gRPC API, REST API, and Web UI on port 8080 (metrics on 8083). This is what the CLI and browser talk to.

- argocd-repo-server clones Git repositories and renders Helm, Kustomize, and Jsonnet manifests. Port 8081 internally, metrics on 8084. Stateless, so you can scale it horizontally when repos get huge.

- argocd-application-controller runs the reconciliation loop. It compares live cluster state with the manifests rendered by repo-server and applies the difference. It is deployed as a StatefulSet because shard assignment relies on stable pod identity. Metrics on 8082.

- argocd-redis caches rendered manifests and short-lived session data. Stateless from a data durability perspective, so losing it just means a brief performance hit.

- argocd-applicationset-controller watches ApplicationSet resources and generates Applications from the templates. Metrics on 8080 inside the container.

- argocd-notifications-controller emits events to Slack, webhooks, and email. Separate process from argocd-server.

- argocd-dex-server handles SSO federation when you plug in SAML or non-OIDC identity providers. Ports 5556, 5557, and 5558.

- argocd-redis-secret-init is a one-shot Job that sets up the Redis auth secret on first install. If it’s still listed, it shows as Completed, which is normal. It’s the one component that doesn’t stay Running.

Three CRDs carry the data model. Application is a single deployable unit (one Git path, one destination, one sync policy). ApplicationSet is a template factory that generates Applications from generators like a static list, a Git directory, or a cluster registry. AppProject is the tenant boundary, defining which repos a team can pull from and which clusters or namespaces they can deploy to. Every Application belongs to exactly one AppProject, and default is the fallback when you don’t specify one.

Prerequisites

The guide assumes the usual EKS working environment. Nothing exotic.

- An EKS cluster running Kubernetes 1.29 or newer (tested on 1.33.8-eks)

- kubectl v1.29+ configured for your cluster (tested on v1.35.0)

- Helm 3.12+ installed locally

- AWS Load Balancer Controller installed in the cluster (needed for Ingress, optional if you stick to port-forward)

- A public Git repository for the demo Applications (the Argo example repo works fine)

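- Outbound internet from your worker nodes to pull the Argo CD images from quay.io. The chart pulls Dex and Redis from other registries, so check the chart's values.yaml if you mirror images.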

If you haven’t set up IRSA yet, the IRSA on EKS guide walks through the trust policy, OIDC provider, and annotations. You don’t need IRSA to install Argo CD itself, but you’ll want it later when Argo CD needs to pull from ECR or add a second EKS cluster using AWS auth. Keep a tab open on the kubectl cheat sheet if you work across multiple clusters because you’ll be switching contexts a lot in the multi-cluster section.

Install Argo CD via Helm

The Helm chart is the only install path worth using in 2026. The raw manifest install is still documented upstream, but it gives you none of the chart’s values-driven configuration and no clean helm upgrade story. Add the official repo:

```bash
helm repo add argo https://argoproj.github.io/argo-helm
helm repo update argo
```

Install chart version 9.5.0, which ships Argo CD v3.3.6 at the time of writing. Pin the chart version so your install is reproducible instead of silently jumping to whatever is current the next time you run the command:

```bash
helm install argocd argo/argo-cd \
  --namespace argocd \
  --create-namespace \
  --version 9.5.0 \
  --wait
```

The --wait flag blocks until every pod is Ready, which takes roughly 45 seconds on a warm cluster. Check the pods afterward:

```bash
kubectl -n argocd get pods
```

You should see seven Running pods, one per long-running component described earlier. The redis-secret-init Job is the eighth component, and it doesn’t stay Running:

```text
NAME                                                READY   STATUS    RESTARTS   AGE
argocd-application-controller-0                     1/1     Running   0          47s
argocd-applicationset-controller-6f964f646f-2jskt   1/1     Running   0          47s
argocd-dex-server-6d9ddd9bd4-9sn7g                  1/1     Running   0          47s
argocd-notifications-controller-7d7fb77bb7-xfmg4    1/1     Running   0          47s
argocd-redis-b86c85959-l5tn4                        1/1     Running   0          47s
argocd-repo-server-cf9fd566f-ghw7h                  1/1     Running   0          47s
argocd-server-76fd7655dc-fb8fl                      1/1     Running   0          47s
```

That’s the non-HA profile. For production you’ll want the HA profile. It runs multiple argocd-server and repo-server replicas, replaces the single Redis with redis-ha, and lets you shard the application-controller. The chart README’s High Availability section lists the values involved, so switching is a values-file change, not a different install method.
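Below is a minimal sketch of a production values file. It assumes the HA example from the argo-cd chart README’s High Availability section, and values-prod.yaml is a hypothetical name. It also sets server.insecure under configs.params, which is the chart-managed form of the --insecure flag the Ingress section relies on. Treat the replica counts as a starting point, not a tested sizing.

```yaml
# values-prod.yaml (hypothetical file name)
# HA settings modelled on the chart README's High Availability example
redis-ha:
  enabled: true        # replaces the single argocd-redis pod with redis-ha

controller:
  replicas: 1          # raise this to shard the application-controller

server:
  replicas: 2

repoServer:
  replicas: 2

applicationSet:
  replicas: 2

configs:
  params:
    # Written to argocd-cmd-params-cm; argocd-server then serves plain HTTP
    # so a load balancer can terminate TLS in front of it
    server.insecure: true
```

Roll it out with helm upgrade argocd argo/argo-cd --namespace argocd --version 9.5.0 -f values-prod.yaml. argocd-server reads argocd-cmd-params-cm at startup. If the pod doesn’t restart on its own, run kubectl -n argocd rollout restart deployment argocd-server. Before enabling redis-ha, check the README’s notes on node count, because its replicas are spread across nodes.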

Resource footprint on the default install is modest. On a fresh EKS 1.33 cluster, the seven pods combined sit under 400 MiB of memory and a fraction of a CPU core at idle. Expect repo-server and application-controller to climb as you add Applications: repo-server caches rendered manifests, and the controller holds watcher connections per resource kind per cluster. A rough planning rule is to budget 50 MiB of memory per 100 Applications on repo-server, plus an extra 100 MiB on the application-controller per 1000 managed resources. These are starting points, not hard limits, and HA changes the math because load spreads across shards.

Access the Argo CD UI

Three options cover every situation. Pick based on whether you want fast, simple, or production-grade.

Option 1: Port-Forward (Testing)

Fastest way to get the UI in a browser. Zero cluster configuration:

```bash
kubectl -n argocd port-forward svc/argocd-server 8080:80
```

Browse to http://localhost:8080. This is good for demos and for the five minutes after install when you just want to check the thing works. It’s bad for any shared team environment, because only you can reach it.

Option 2: LoadBalancer Service

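Flip the argocd-server Service type and AWS provisions a load balancer for it automatically. The in-tree cloud provider creates a Classic Load Balancer. If the AWS Load Balancer Controller’s Service webhook handles the Service instead (its default from v2.5 on), you get an NLB:

```bash
kubectl -n argocd patch svc argocd-server -p '{"spec":{"type":"LoadBalancer"}}'
```

It works, but it costs a dedicated load balancer per Service and gives you no path for TLS termination without extra work. Fine for a lab, wasteful in production where you’d rather share an ALB across multiple services.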
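The patch above is a live edit, so the next helm upgrade puts the Service back to ClusterIP. Here is a sketch of the same change as chart values, assuming the chart’s server.service keys. The scheme annotation matters when the AWS Load Balancer Controller provisions the load balancer, because it creates internal NLBs unless told otherwise.

```yaml
# Helm values sketch: expose argocd-server through a LoadBalancer Service
server:
  service:
    type: LoadBalancer
    annotations:
      # Read by the AWS Load Balancer Controller, which defaults to an internal NLB
      service.beta.kubernetes.io/aws-load-balancer-scheme: internet-facing
```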

Option 3: Ingress with AWS Load Balancer Controller (Production)

This is the production pattern. One shared ALB can front Argo CD plus anything else in the cluster, because Ingresses that carry the same alb.ingress.kubernetes.io/group.name annotation share a load balancer. It’s also where the gotcha lives. Argo CD’s server expects to terminate TLS itself and refuses to render the UI over plain HTTP unless you explicitly pass --insecure, and the chart doesn’t set this flag by default. If you terminate TLS at the ALB and forward HTTP to the pod without the flag, the browser shows a blank page and the server logs complain about TLS expectations. Patch the Deployment first:

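```bash
kubectl -n argocd patch deployment argocd-server --type='json' -p='[
  {"op":"add","path":"/spec/template/spec/containers/0/args/-","value":"--insecure"}
]'
```

A kubectl patch only lasts until the next helm upgrade re-renders the Deployment. To make it stick, set server.insecure under configs.params in your chart values instead. Open a file for the Ingress manifest: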

```bash
vim argocd-ingress.yaml
```

Paste the following. The annotations tell the AWS Load Balancer Controller to:

- provision an internet-facing ALB
- use the IP target type (required for Fargate and recommended everywhere else)
- listen on HTTP/80
- point the health check at Argo CD’s built-in endpoint

In production you’d also add an ACM certificate ARN and an HTTPS listener (shown further down).

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: argocd-ingress
  namespace: argocd
  annotations:
    alb.ingress.kubernetes.io/scheme: internet-facing
    alb.ingress.kubernetes.io/target-type: ip
    alb.ingress.kubernetes.io/listen-ports: '[{"HTTP":80}]'
    alb.ingress.kubernetes.io/healthcheck-path: /healthz
spec:
  ingressClassName: alb
  rules:
    - http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: argocd-server
                port:
                  number: 80
```

Apply it and wait for the ALB to provision (about 60 seconds):

```bash
kubectl apply -f argocd-ingress.yaml
kubectl -n argocd get ingress
```

The ADDRESS column fills in with the ALB DNS name, which is what your users will hit:

```text
NAME             CLASS   HOSTS   ADDRESS                                                                 PORTS   AGE
argocd-ingress   alb     *       k8s-argocd-argocdin-95808cd49b-1859203249.eu-west-1.elb.amazonaws.com   80      65s
```

Verify the endpoint responds:

```bash
curl -s -o /dev/null -w "HTTP %{http_code}\n" http://k8s-argocd-argocdin-95808cd49b-1859203249.eu-west-1.elb.amazonaws.com/
```

A healthy response returns HTTP 200:

```text
HTTP 200
```

The server also reports its own version on the API endpoint, which confirms that both the ALB target group and the pod are happy:

```bash
curl -s http://k8s-argocd-argocdin-95808cd49b-1859203249.eu-west-1.elb.amazonaws.com/api/version
```

The response shows the exact Argo CD version running inside the cluster:

```text
{"Version": "v3.3.6"}
```

For a real production deployment, add an ACM certificate ARN and an HTTPS listener so the UI and API are only served over TLS with a valid certificate. The pattern combines three annotations:

- alb.ingress.kubernetes.io/certificate-arn: arn:aws:acm:eu-west-1:123456789012:certificate/abc
- alb.ingress.kubernetes.io/listen-ports: '[{"HTTP":80},{"HTTPS":443}]'
- alb.ingress.kubernetes.io/ssl-redirect: '443'

Keep the HTTP listener in listen-ports, because ssl-redirect needs an HTTP listener to redirect from.
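Put together, an HTTPS version of the earlier Ingress might look like this sketch. The certificate ARN is the same placeholder as above, argocd.example.com is a hypothetical hostname, and the group.name value is an assumption. That annotation is what lets several Ingresses share one ALB. The sketch assumes argocd-server still runs with --insecure, since the ALB forwards plain HTTP to port 80 on the Service.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: argocd-ingress
  namespace: argocd
  annotations:
    alb.ingress.kubernetes.io/scheme: internet-facing
    alb.ingress.kubernetes.io/target-type: ip
    # Placeholder ARN: replace with your own ACM certificate
    alb.ingress.kubernetes.io/certificate-arn: arn:aws:acm:eu-west-1:123456789012:certificate/abc
    # HTTP stays so ssl-redirect has a listener to redirect from
    alb.ingress.kubernetes.io/listen-ports: '[{"HTTP":80},{"HTTPS":443}]'
    alb.ingress.kubernetes.io/ssl-redirect: '443'
    alb.ingress.kubernetes.io/healthcheck-path: /healthz
    # Hypothetical group name; Ingresses with the same value share one ALB
    alb.ingress.kubernetes.io/group.name: shared-alb
spec:
  ingressClassName: alb
  rules:
    - host: argocd.example.com        # hypothetical hostname
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: argocd-server
                port:
                  number: 80
```

Once the ALB address appears, point a DNS record for the hostname at it, for example a Route 53 alias.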

Get the Admin Password and Log in with the CLI

On first start, Argo CD generates a random initial admin password and stores it in a Secret named argocd-initial-admin-secret. Fetch it:

```bash
kubectl -n argocd get secret argocd-initial-admin-secret -o jsonpath="{.data.password}" | base64 -d
```

Copy the output. There’s no trailing newline, so it runs straight into your shell prompt. The username is admin. Log in with the UI first to confirm it works, then change the password from the user menu. Argo CD does not delete argocd-initial-admin-secret for you. Once the password is changed, remove it yourself with kubectl -n argocd delete secret argocd-initial-admin-secret, since it only holds the initial password in clear text.

Browse to the ALB address (or localhost:8080 if you’re using port-forward) and you’ll see the Argo CD login screen. It’s the same friendly octopus mascot whether you’re on v2 or v3, which tells you the team has better things to worry about than rebranding.

For the CLI, there’s an important gotcha. The argocd CLI speaks gRPC to argocd-server, and an ALB only forwards gRPC on an HTTPS listener. As a result, CLI login against the plain-HTTP ALB address fails with an RPC error. The pragmatic workaround is to keep the ALB for the web UI and use a short-lived port-forward for CLI operations. Run the port-forward in the background:

```bash
kubectl -n argocd port-forward svc/argocd-server 8080:80 &
```

Then authenticate. The --plaintext flag tells the CLI not to expect TLS on the other end, and --insecure skips certificate verification:

```bash
argocd login localhost:8080 \
  --username admin \
  --password 'your-retrieved-password' \
  --insecure \
  --plaintext
```

A successful login confirms the context is saved locally:

```text
'admin:login' logged in successfully
Context 'localhost:8080' updated
```

For a full production CLI experience, you can add HTTPS and HTTP/2 to the ALB (an ACM cert plus alb.ingress.kubernetes.io/backend-protocol-version: HTTP2) and log in against the public hostname. That avoids the port-forward entirely. If you’d rather leave the ALB alone, the CLI’s --grpc-web flag wraps gRPC in HTTP/1.1 requests that an ordinary HTTP listener can usually carry. Many teams skip that complexity for humans and keep the port-forward pattern for admins, while CI jobs run inside the cluster and talk to argocd-server directly.

Your First Application: Raw Manifests from Git

Time to deploy something. The canonical demo is the guestbook example from the Argo CD team’s own repo. It’s a Deployment plus a Service, no Helm, nothing fancy. Open a file for the Application manifest:

```bash
vim guestbook-app.yaml
```

Paste the Application. Note its shape:

- source points at a Git repo and a path.
- destination points at the in-cluster API server and a target namespace.
- syncPolicy.automated enables both prune (delete resources that vanish from Git) and selfHeal (revert drift from live edits).

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: guestbook
  namespace: argocd
spec:
  project: default
  source:
    repoURL: https://github.com/argoproj/argocd-example-apps.git
    targetRevision: HEAD
    path: guestbook
  destination:
    server: https://kubernetes.default.svc
    namespace: guestbook-demo
  syncPolicy:
    automated:
      prune: true
      selfHeal: true
    syncOptions:
      - CreateNamespace=true
```

Apply it to the cluster:

```bash
kubectl apply -f guestbook-app.yaml
```

Argo CD’s application-controller picks up the new Application within a few seconds. Repo-server clones the Git repo and renders the manifests, and the controller applies them. Check the Application status:

```bash
kubectl -n argocd get application guestbook
```

Both sync and health should flip to green within 30 seconds:

```text
NAME        SYNC STATUS   HEALTH STATUS
guestbook   Synced        Healthy
```

The actual workload runs in the target namespace. Verify the pod is up:

```bash
kubectl -n guestbook-demo get all
```

One Running guestbook-ui pod confirms the end-to-end loop works:

NAME READY STATUS RESTARTS AGE

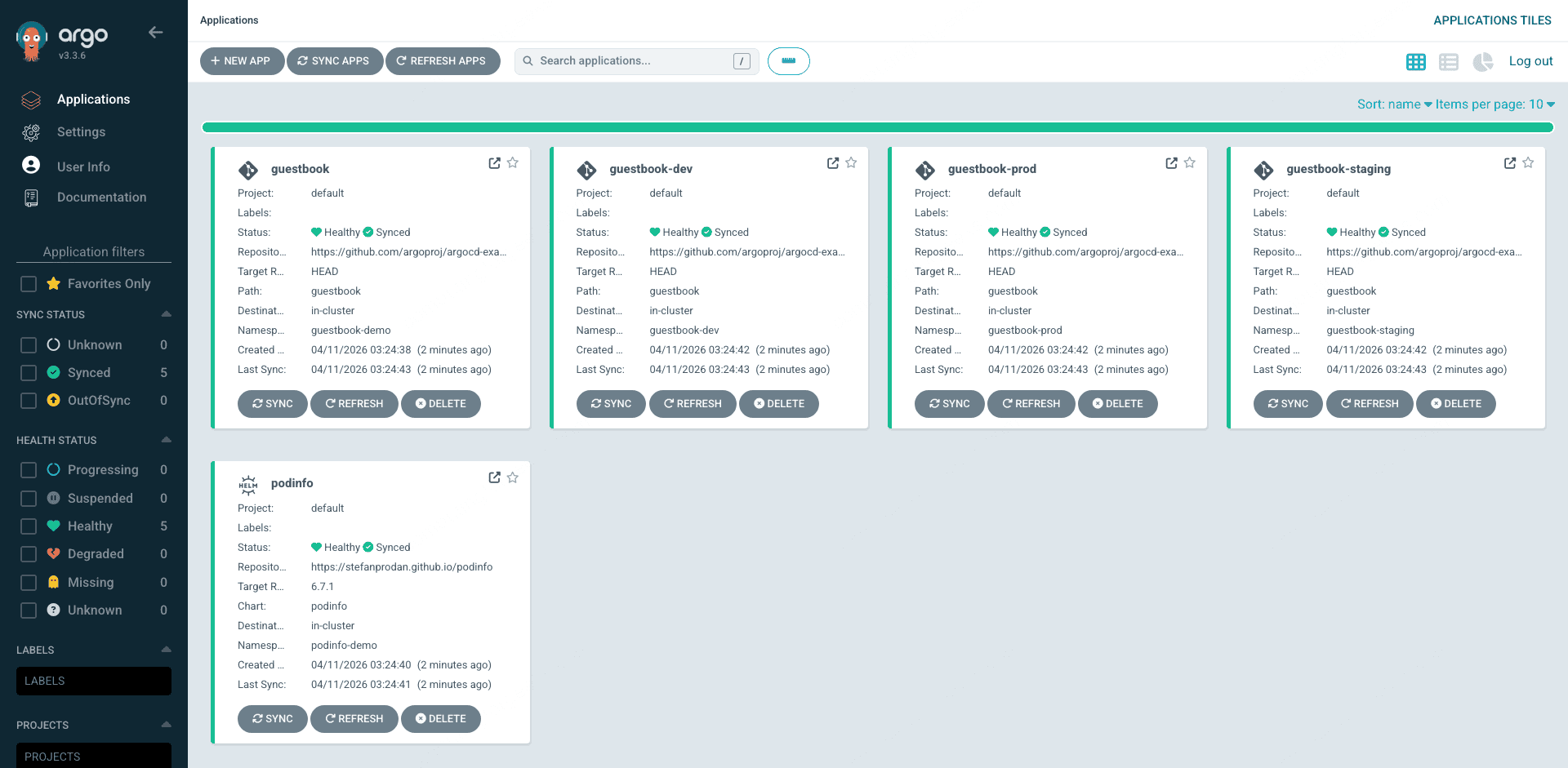

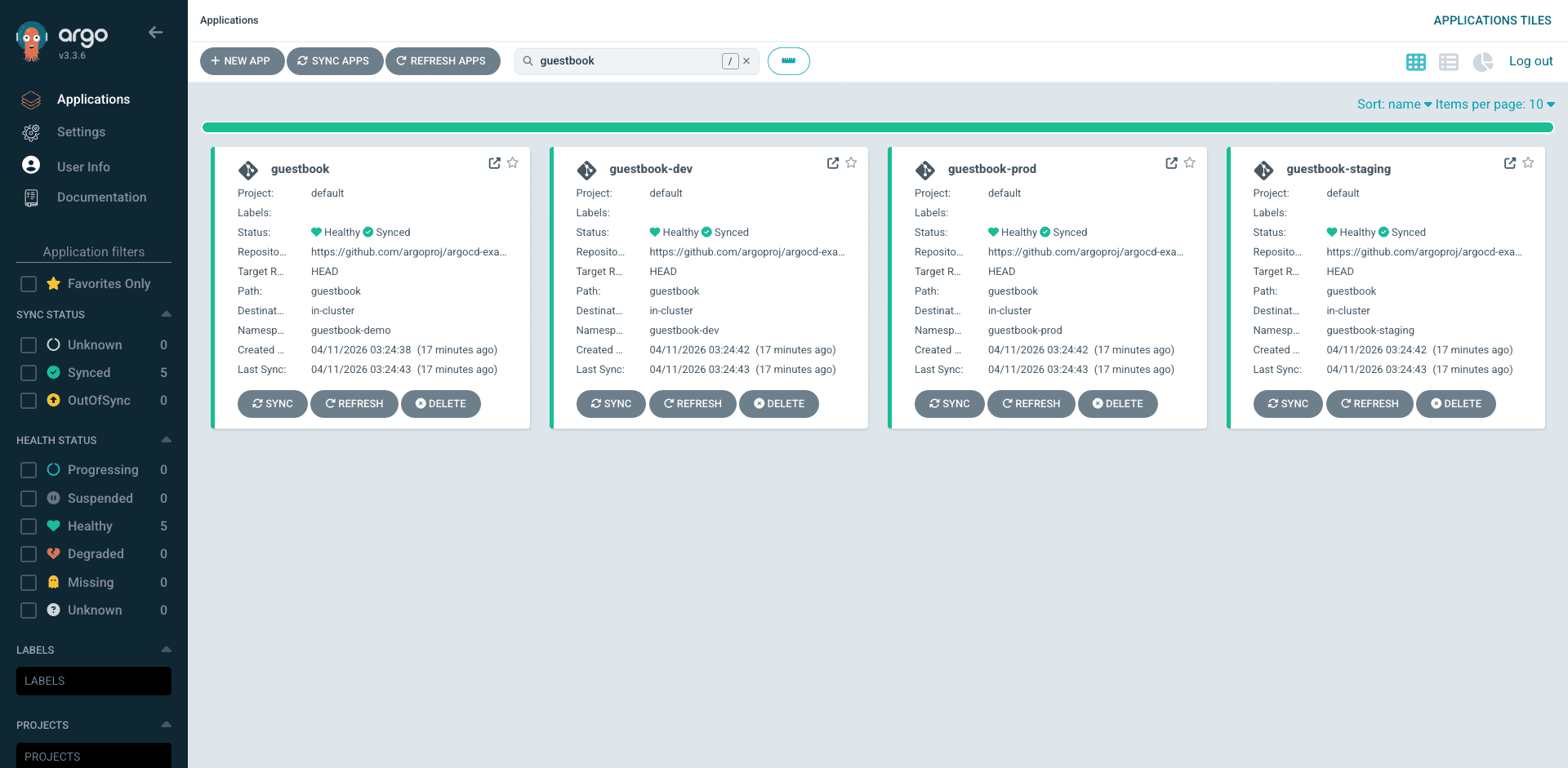

pod/guestbook-ui-85db984648-l5bc9 1/1 Running 0 28sOpen the Argo CD UI and you’ll see guestbook as a tile on the Applications page. Each tile shows the project, sync status, health status, source repo, target namespace, and last sync time at a glance. This is the landing page your team will spend most of their time on.

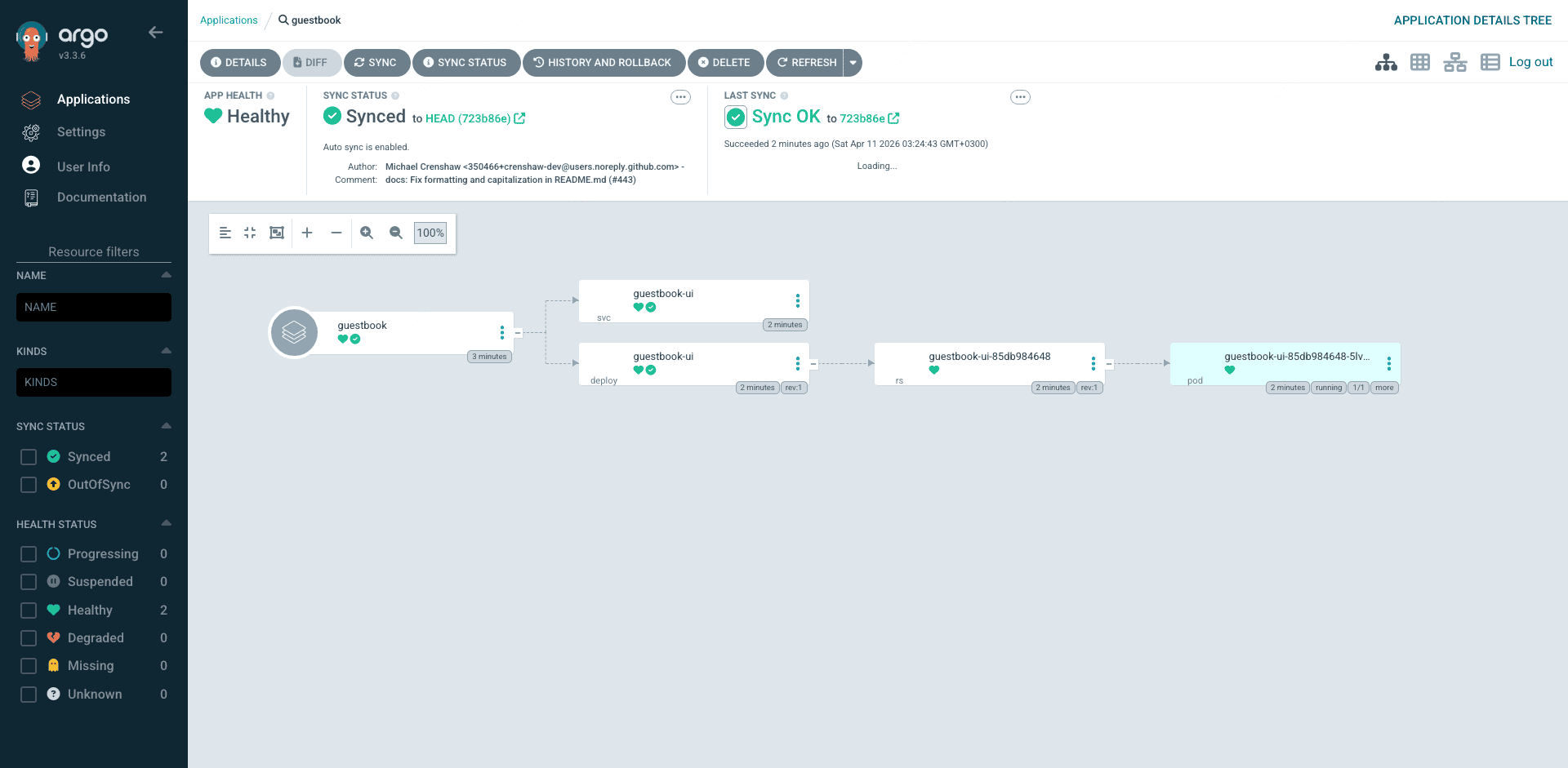

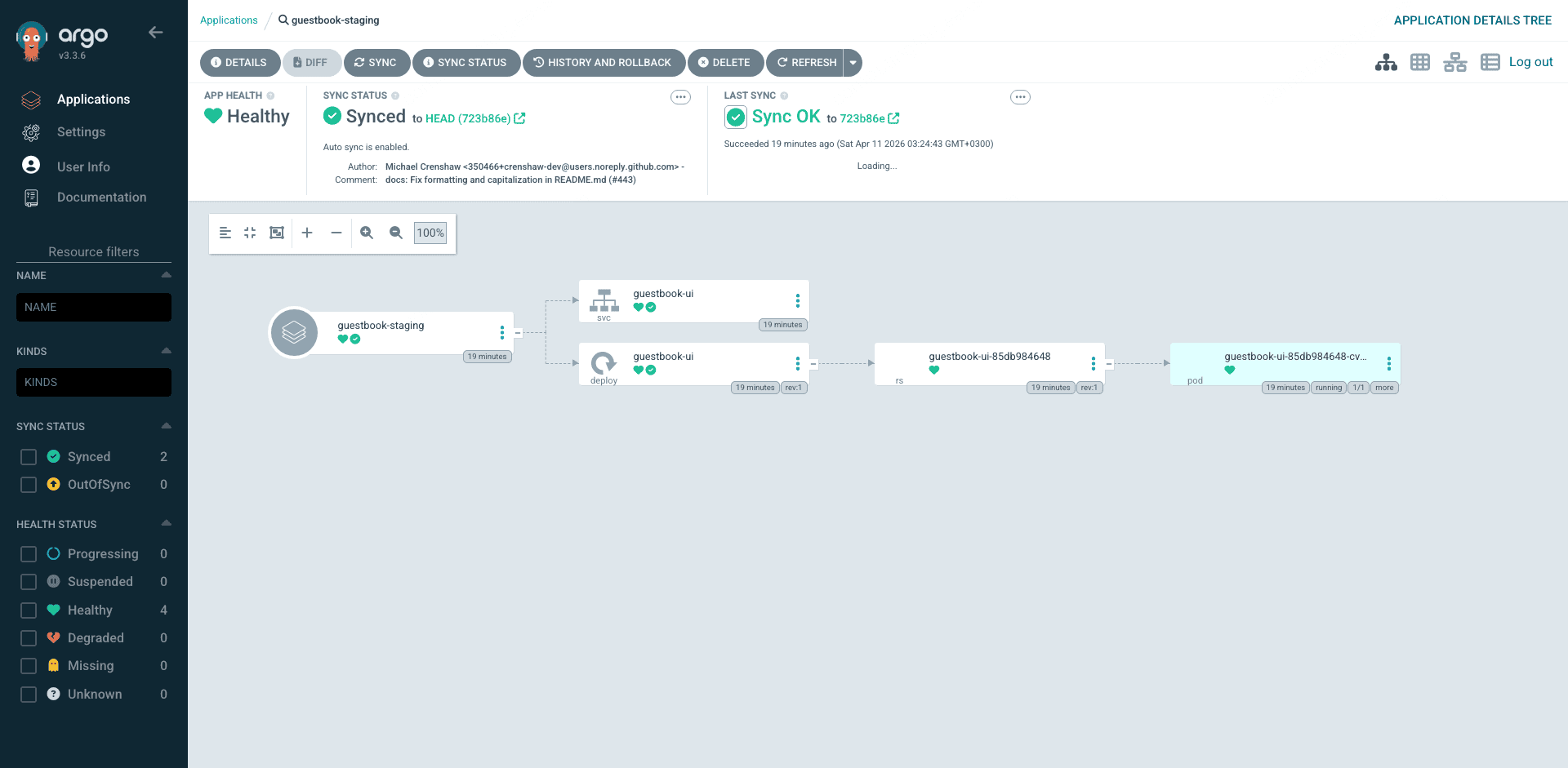

Click the guestbook tile and you drop into the resource tree view. Application, Service, Deployment, ReplicaSet, Pod, each with its own sync and health icon, connected by lines that show the parent/child relationships. The status bar at the top shows healthy/synced state, last sync revision, and the commit hash. Click any node and you get the full YAML diff between Git and the live cluster in a side panel. This visual diff is the single biggest productivity win over CI-based Helm deployments because you can see drift at a glance.

Deploy a Helm Chart Application

Most real workloads ship as Helm charts. Argo CD’s repo-server renders them server-side, so you can deploy a remote chart (any HTTPS Helm repo, any OCI registry, any chart embedded in a Git repo) and override values inline. Stefan Prodan’s podinfo is a good test subject because it’s tiny and deterministic. Open the Application file:

```bash
vim podinfo-app.yaml
```

The key difference from the guestbook example is the source.chart field and the source.helm.values block, which feeds inline values into the chart render. This keeps value overrides declarative without needing a separate values file in Git:

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: podinfo
  namespace: argocd
spec:
  project: default
  source:
    repoURL: https://stefanprodan.github.io/podinfo
    chart: podinfo
    targetRevision: 6.7.1
    helm:
      values: |
        replicaCount: 2
        resources:
          requests:
            cpu: 50m
            memory: 64Mi
  destination:
    server: https://kubernetes.default.svc
    namespace: podinfo-demo
  syncPolicy:
    automated:
      prune: true
      selfHeal: true
    syncOptions:
      - CreateNamespace=true
```

Apply and check:

```bash
kubectl apply -f podinfo-app.yaml
kubectl -n argocd get application podinfo
```

The Application reports Synced and Healthy once repo-server renders the chart and the controller applies the two replicas:

```text
NAME      SYNC STATUS   HEALTH STATUS
podinfo   Synced        Healthy
```

The workload namespace shows both replicas running:

```bash
kubectl -n podinfo-demo get pods
```

Both podinfo pods confirm the Helm render and the replicaCount override took effect:

NAME READY STATUS RESTARTS AGE

podinfo-68955d6868-4wpt4 1/1 Running 0 47s

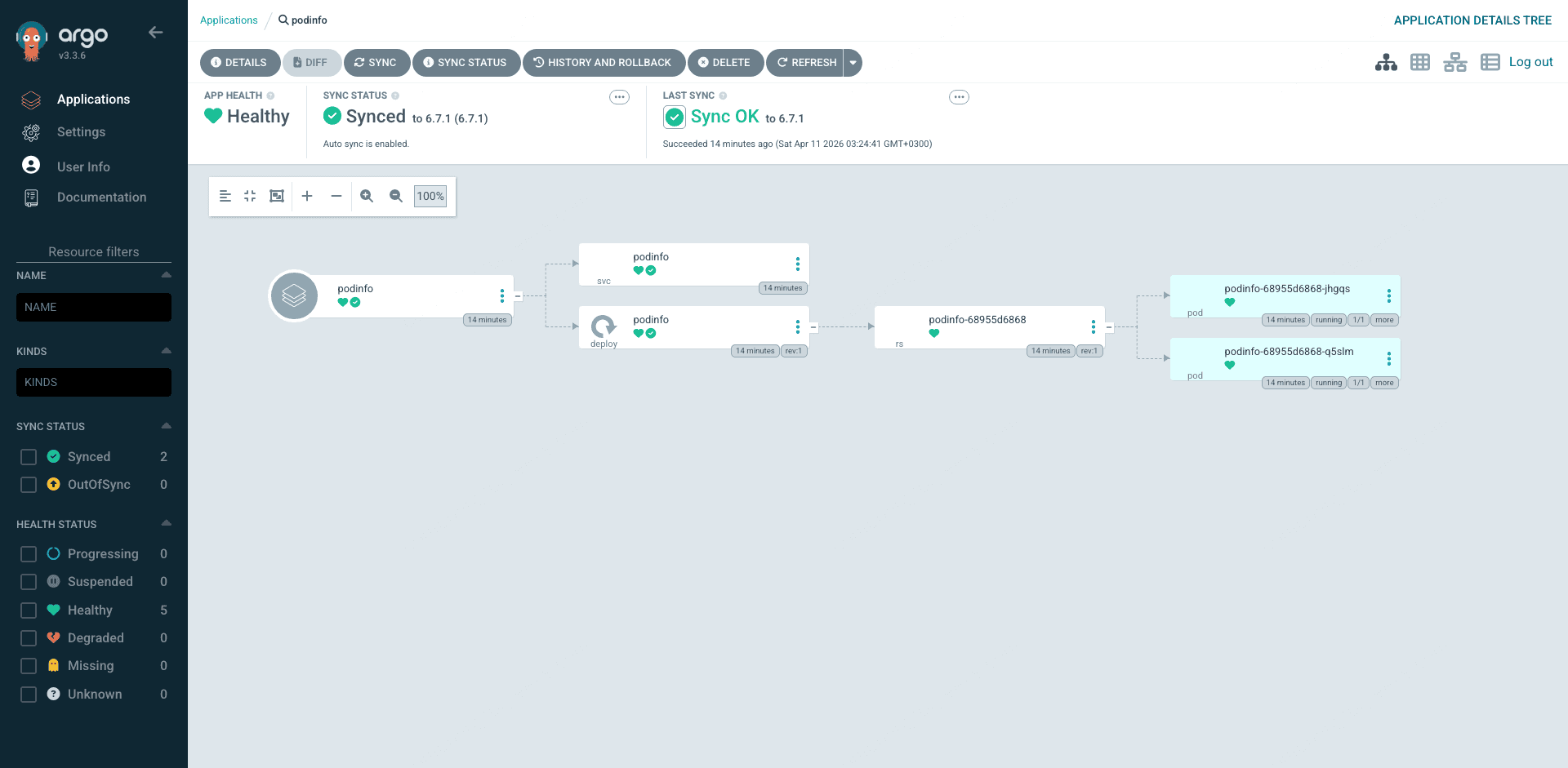

podinfo-68955d6868-mklkr 1/1 Running 0 47sBack in the UI, the podinfo application shows the same resource tree structure but now with two pods (the replicaCount override), a HorizontalPodAutoscaler the chart ships by default, and the additional Service and ServiceAccount resources podinfo creates. This is what a Helm-rendered Application looks like to Argo CD: identical to the raw-manifest guestbook from Argo CD’s point of view because repo-server flattens the chart into plain manifests before the application-controller sees it.

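Inline values are convenient for small overrides. For anything substantial, keep the values file in Git and reference it with helm.valueFiles. With a chart pulled from a Helm repository, like podinfo here, that file lives in a different repo from the chart. You then need a multi-source Application (spec.sources) with a $values reference. Either way, value changes stay under version control and go through review.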
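Here is a sketch of the multi-source version of the podinfo Application. It assumes a hypothetical Git repo (your-org/platform-gitops) that holds podinfo/values.yaml. The ref: values entry names the Git source, and the $values prefix in valueFiles points into it. When spec.sources is set, the single spec.source field is not used.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: podinfo
  namespace: argocd
spec:
  project: default
  sources:
    # Source 1: the chart itself, pulled from the podinfo Helm repository
    - repoURL: https://stefanprodan.github.io/podinfo
      chart: podinfo
      targetRevision: 6.7.1
      helm:
        valueFiles:
          # $values resolves to the root of the source tagged "ref: values"
          - $values/podinfo/values.yaml
    # Source 2: hypothetical Git repo that only supplies the values file
    - repoURL: https://github.com/your-org/platform-gitops.git
      targetRevision: HEAD
      ref: values
  destination:
    server: https://kubernetes.default.svc
    namespace: podinfo-demo
  syncPolicy:
    automated:
      prune: true
      selfHeal: true
    syncOptions:
      - CreateNamespace=true
```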

ApplicationSet with List Generator: Multi-Env in One Manifest

Creating three nearly identical Applications for dev, staging, and prod is exactly the kind of copy-paste drudgery ApplicationSets kill. A single ApplicationSet with a list generator expands into N Applications, one per entry, using a template. Open the file:

```bash
vim guestbook-appset.yaml
```

The generators field holds a list of elements, and the template field uses double-curly substitutions like {{env}} to produce one Application per element. The controller watches the ApplicationSet and reconciles the generated Applications on every change.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: ApplicationSet
metadata:
  name: guestbook-multi-env
  namespace: argocd
spec:
  generators:
    - list:
        elements:
          - env: dev
            namespace: guestbook-dev
          - env: staging
            namespace: guestbook-staging
          - env: prod
            namespace: guestbook-prod
  template:
    metadata:
      name: 'guestbook-{{env}}'
    spec:
      project: default
      source:
        repoURL: https://github.com/argoproj/argocd-example-apps.git
        targetRevision: HEAD
        path: guestbook
      destination:
        server: https://kubernetes.default.svc
        namespace: '{{namespace}}'
      syncPolicy:
        automated:
          prune: true
          selfHeal: true
        syncOptions:
          - CreateNamespace=true
```

Apply it and the ApplicationSet controller immediately generates three Applications:

kubectl apply -f guestbook-appset.yaml

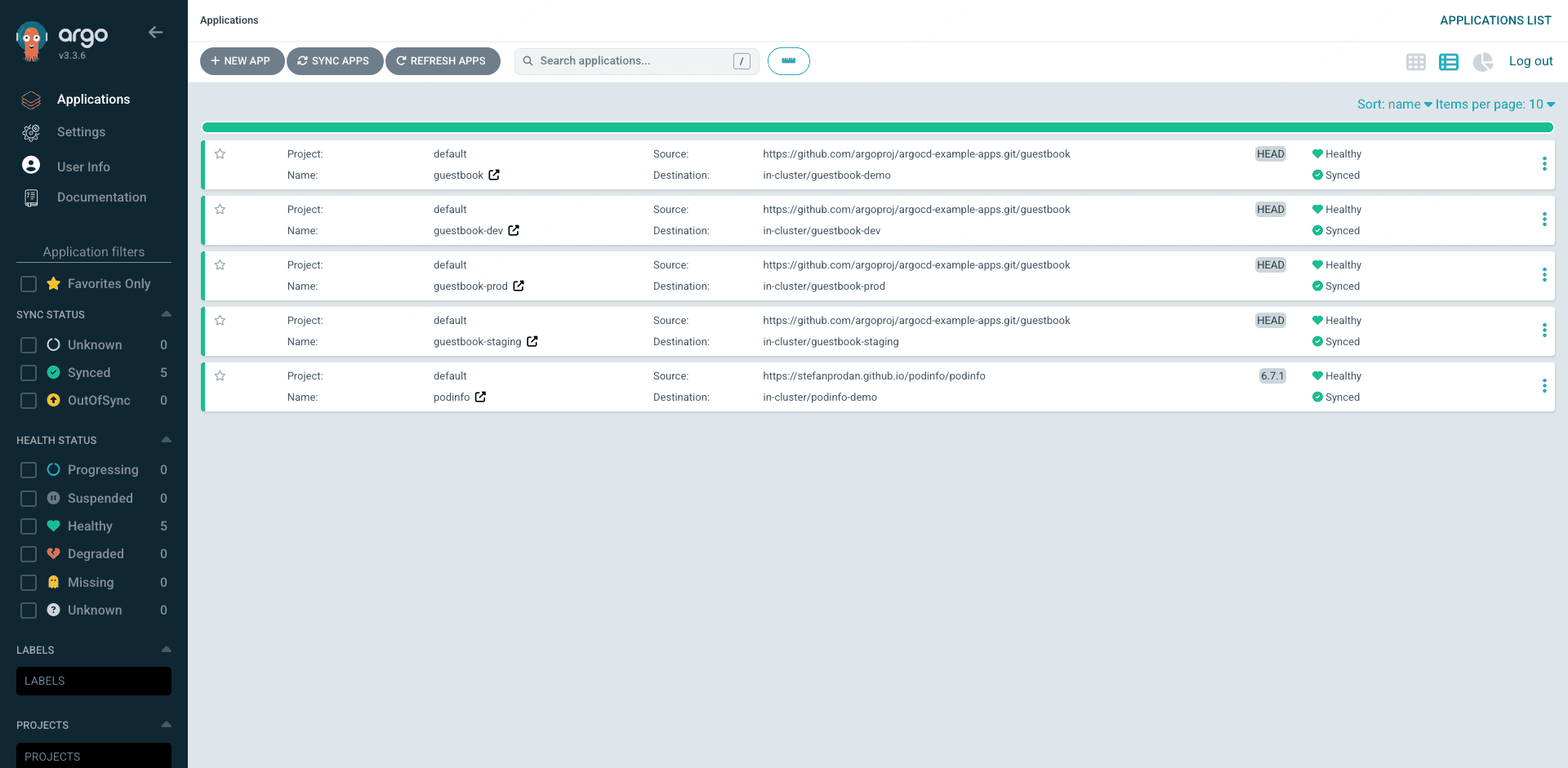

kubectl -n argocd get applicationsYou’ll now see four guestbook Applications (the original plus the three generated ones) and the podinfo Application from the previous section:

```text
NAME                SYNC STATUS   HEALTH STATUS
guestbook           Synced        Healthy
guestbook-dev       Synced        Healthy
guestbook-prod      Synced        Healthy
guestbook-staging   Synced        Healthy
podinfo             Synced        Healthy
```

All three target namespaces were created automatically thanks to CreateNamespace=true in the sync options:

```bash
kubectl get ns | grep -E 'guestbook-(dev|staging|prod)'
```

Three namespaces, all Active:

```text
guestbook-dev       Active
guestbook-prod      Active
guestbook-staging   Active
```

Refresh the UI and the Applications page now shows all four guestbook apps plus the podinfo Helm app. Filter by the guestbook name and you can see the original plus the three ApplicationSet-generated siblings in one view. The search box supports labels, sync status, health status, and project filters, so teams with hundreds of applications can still find what they need in a couple of keystrokes.

For a flatter view of all five apps side by side, switch to the list mode using the view toggle in the top right. The list mode trades the big tiles for a dense table that’s better when you’re scanning many applications for a specific sync status or project owner.

Click into any of the generated siblings and you get the same resource tree as the hand-written guestbook Application. The ApplicationSet controller doesn’t change how Argo CD manages the child apps: it just stamps them out. From the cluster’s point of view, they’re indistinguishable from manually created Applications.

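Need a fourth environment? Add an element to the list and re-apply (or commit, if the ApplicationSet itself is managed from Git). The controller notices, creates the fourth Application, and Argo CD syncs it. Need to remove an environment? Delete the element and the corresponding Application is garbage-collected along with its resources. You may want the deployed resources to survive, which is common in production to avoid accidentally wiping an env. For that, set spec.syncPolicy.preserveResourcesOnDeletion: true on the ApplicationSet. To stop the controller from deleting generated Applications at all, set spec.syncPolicy.applicationsSync: create-update.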
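Here is a sketch of those two settings on the guestbook-multi-env ApplicationSet. Only spec.syncPolicy changes; the generators and template stay as they are. applicationsSync also accepts create-only, create-delete, and sync. One caveat: if the controller runs with a global policy, per-ApplicationSet overrides may be disabled, so check applicationsetcontroller.enable.policy.override in argocd-cmd-params-cm.

```yaml
# Fragment of the guestbook-multi-env ApplicationSet: only spec.syncPolicy is new,
# generators and template stay exactly as shown earlier
spec:
  syncPolicy:
    # Deleting a generated Application leaves its workload resources in place
    preserveResourcesOnDeletion: true
    # Create and update generated Applications, but never delete them
    applicationsSync: create-update
```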

Other ApplicationSet Generators in Brief

The list generator is the simplest but far from the most powerful. Three others cover most production patterns.

The Git generator reads your Git repo and produces one Application per matching directory or file. A common layout is a monorepo with apps/* directories, one per deployable. Point the ApplicationSet at that path and adding a new app is as simple as committing a new directory. No ApplicationSet edit needed. The template uses path variables like {{path.basename}} to name each generated Application after its directory.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: ApplicationSet
metadata:
  name: platform-apps
  namespace: argocd
spec:
  generators:
    - git:
        repoURL: https://github.com/your-org/platform-gitops.git
        revision: HEAD
        directories:
          - path: apps/*
  template:
    metadata:
      name: '{{path.basename}}'
    spec:
      project: platform
      source:
        repoURL: https://github.com/your-org/platform-gitops.git
        targetRevision: HEAD
        path: '{{path}}'
      destination:
        server: https://kubernetes.default.svc
        namespace: '{{path.basename}}'
      syncPolicy:
        automated:
          prune: true
          selfHeal: true
        syncOptions:
          - CreateNamespace=true
```

The Cluster generator iterates over every cluster Argo CD knows about. Combined with a label selector, it can deploy the same Application to, say, every cluster tagged env=prod. This is how platform teams roll out fleetwide changes like cluster-autoscaler or kube-state-metrics without maintaining a list.
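Here is a minimal Cluster generator sketch. It assumes cluster Secrets labelled env=prod and a hypothetical addons/kube-state-metrics path in the platform repo. The generator exposes each cluster’s name and API endpoint as {{name}} and {{server}}. Labels are matched against the cluster Secrets in the argocd namespace. The in-cluster entry has no Secret by default, so a selector won’t match it unless you create one.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: ApplicationSet
metadata:
  name: kube-state-metrics-fleet        # hypothetical name
  namespace: argocd
spec:
  generators:
    - clusters:
        selector:
          matchLabels:
            env: prod                   # matched against cluster Secret labels
  template:
    metadata:
      name: '{{name}}-kube-state-metrics'
    spec:
      project: default
      source:
        repoURL: https://github.com/your-org/platform-gitops.git
        targetRevision: HEAD
        path: addons/kube-state-metrics # hypothetical path
      destination:
        server: '{{server}}'            # API endpoint of each matched cluster
        namespace: kube-system
      syncPolicy:
        automated:
          prune: true
          selfHeal: true
```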

The Matrix generator takes the Cartesian product of two other generators. Combine Git directories with Cluster to get “every app from the platform repo, deployed to every prod cluster.” The Matrix is the most powerful generator but also the one most likely to create Applications you didn’t expect, so test it in a non-prod cluster first.
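Here is the Git-times-Cluster combination described above, sketched with hypothetical names: every directory under apps/ in the platform repo, deployed to every cluster Secret labelled env=prod. Parameters from both child generators are available in the template, so the Application name combines {{path.basename}} and {{name}} to stay unique. Automated sync is deliberately left off so you can inspect the generated Applications before anything deploys.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: ApplicationSet
metadata:
  name: platform-apps-prod              # hypothetical name
  namespace: argocd
spec:
  generators:
    - matrix:
        generators:
          # One parameter set per directory under apps/
          - git:
              repoURL: https://github.com/your-org/platform-gitops.git
              revision: HEAD
              directories:
                - path: apps/*
          # One parameter set per cluster Secret labelled env=prod
          - clusters:
              selector:
                matchLabels:
                  env: prod
  template:
    metadata:
      # Unique per (directory, cluster) pair
      name: '{{path.basename}}-{{name}}'
    spec:
      project: platform
      source:
        repoURL: https://github.com/your-org/platform-gitops.git
        targetRevision: HEAD
        path: '{{path}}'
      destination:
        server: '{{server}}'
        namespace: '{{path.basename}}'
      syncPolicy:
        syncOptions:
          - CreateNamespace=true
```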

Pull Request, SCM Provider, and Plugin generators round out the set for preview environments and custom logic. See the ApplicationSet generator reference for the full list.

Sync Waves and Hooks: Ordered Rollouts

Real deployments don’t apply everything at once. A database migration runs before the backend, the backend comes up before the frontend, and a maintenance page goes up during the rollout and comes down at the end. Argo CD handles this with sync waves (numeric ordering) and sync hooks (resources that run at specific phases).

Three annotations control the behavior:

- argocd.argoproj.io/sync-wave: "N" orders resources within a phase. Lower N applies first, and the default is 0. Use negative values for things that need to happen very early.

- argocd.argoproj.io/hook: PreSync | Sync | PostSync | SyncFail | PostDelete | PreDelete places a resource in a specific phase. PreSync runs before the main sync, PostSync runs after everything is Healthy, SyncFail runs only on failure, and the Delete variants run during app deletion.

- argocd.argoproj.io/hook-delete-policy: BeforeHookCreation | HookSucceeded | HookFailed controls when hook resources (usually Jobs) get cleaned up. HookSucceeded is the common choice for migration Jobs.

Here’s a realistic rollout. A PreSync Job runs a SQL schema upgrade. Wave 1 puts up a maintenance page. Wave 2 deploys the backend. Wave 3 deploys the frontend. A PostSync Job takes the maintenance page down once the frontend is Healthy. Argo CD applied these in exactly that order during testing:

```text
PHASE: PreSync
  job/upgrade-sql-schema     (hook: PreSync, HookSucceeded)
PHASE: Sync (wave 1)
  deployment/maint-page-up   (sync-wave: "1")
PHASE: Sync (wave 2)
  deployment/backend         (sync-wave: "2")
PHASE: Sync (wave 3)
  deployment/frontend        (sync-wave: "3")
PHASE: PostSync
  job/maint-page-down        (hook: PostSync, HookSucceeded)
```

The PreSync Job must complete before the Sync phase starts. If it fails, the sync aborts and nothing else applies. The PostSync Job only runs once every Sync resource is Healthy, which is the signal you want for “the new version is serving traffic.” A concrete PreSync hook looks like this:

```yaml
apiVersion: batch/v1
kind: Job
metadata:
  name: upgrade-sql-schema
  annotations:
    argocd.argoproj.io/hook: PreSync
    argocd.argoproj.io/hook-delete-policy: HookSucceeded
spec:
  template:
    spec:
      restartPolicy: Never
      containers:
        - name: migrate
          image: ghcr.io/example/migrate:v1.4.0
          command: ["/migrate", "up"]
```

A common production pattern assigns waves like this:

- wave -1 for CRDs
- wave 0 for namespaces
- wave 1 for secrets and config
- wave 2 for databases
- wave 3 for application workloads
- wave 4 for Ingress

Within a single wave, Argo CD already orders by kind (Namespaces and CRDs early, Ingress late). Explicit waves add one more guarantee: Argo CD waits for each wave to report Healthy before starting the next. That prevents the classic ordering failures, such as a custom resource applied before its CRD exists or an Ingress pointing at a Service that hasn’t been created yet.
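Here are the wave and PostSync pieces of the rollout above, sketched with hypothetical images and labels. The backend Deployment carries sync-wave "2", and maint-page-down is a PostSync hook Job that Argo CD deletes once it succeeds. The ghcr.io/example/maint-toggle image and its command are placeholders for whatever actually removes your maintenance page.

```yaml
# Wave 2: backend Deployment (image is a hypothetical placeholder)
apiVersion: apps/v1
kind: Deployment
metadata:
  name: backend
  annotations:
    argocd.argoproj.io/sync-wave: "2"
spec:
  selector:
    matchLabels:
      app: backend
  template:
    metadata:
      labels:
        app: backend
    spec:
      containers:
        - name: backend
          image: ghcr.io/example/backend:v1.4.0
          ports:
            - containerPort: 8080
---
# PostSync hook: runs only after every Sync-phase resource is Healthy
apiVersion: batch/v1
kind: Job
metadata:
  name: maint-page-down
  annotations:
    argocd.argoproj.io/hook: PostSync
    argocd.argoproj.io/hook-delete-policy: HookSucceeded
spec:
  backoffLimit: 1
  template:
    spec:
      restartPolicy: Never
      containers:
        - name: maint-page-down
          image: ghcr.io/example/maint-toggle:v1.0.0   # hypothetical image
          command: ["/maint-toggle", "off"]            # hypothetical command
```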

Multi-Cluster GitOps: Add a Second EKS Cluster

One Argo CD instance can manage many clusters. This is a huge operational win over per-cluster deployment tooling because you keep a single source of truth, a single UI, a single RBAC model, and a single place to audit deploys. The fastest way to add a cluster is the CLI, which assumes your local kubeconfig has both cluster contexts.

```bash
argocd cluster add podid-lab-2 --yes
```

The command creates a ServiceAccount named argocd-manager on the target cluster and binds it to a ClusterRole with cluster-admin-equivalent permissions. It then writes the bearer token into a Secret on the Argo CD cluster. Real output from our test run:

```text
ServiceAccount "argocd-manager" created in namespace "kube-system"
ClusterRole "argocd-manager-role" created
ClusterRoleBinding "argocd-manager-role-binding" created
Created bearer token secret "argocd-manager-long-lived-token" for ServiceAccount "argocd-manager"
Cluster 'https://A6347B26C0ECF9245A09CDB8839E9C3D.gr7.eu-west-1.eks.amazonaws.com' added
```

Verify both clusters show up:

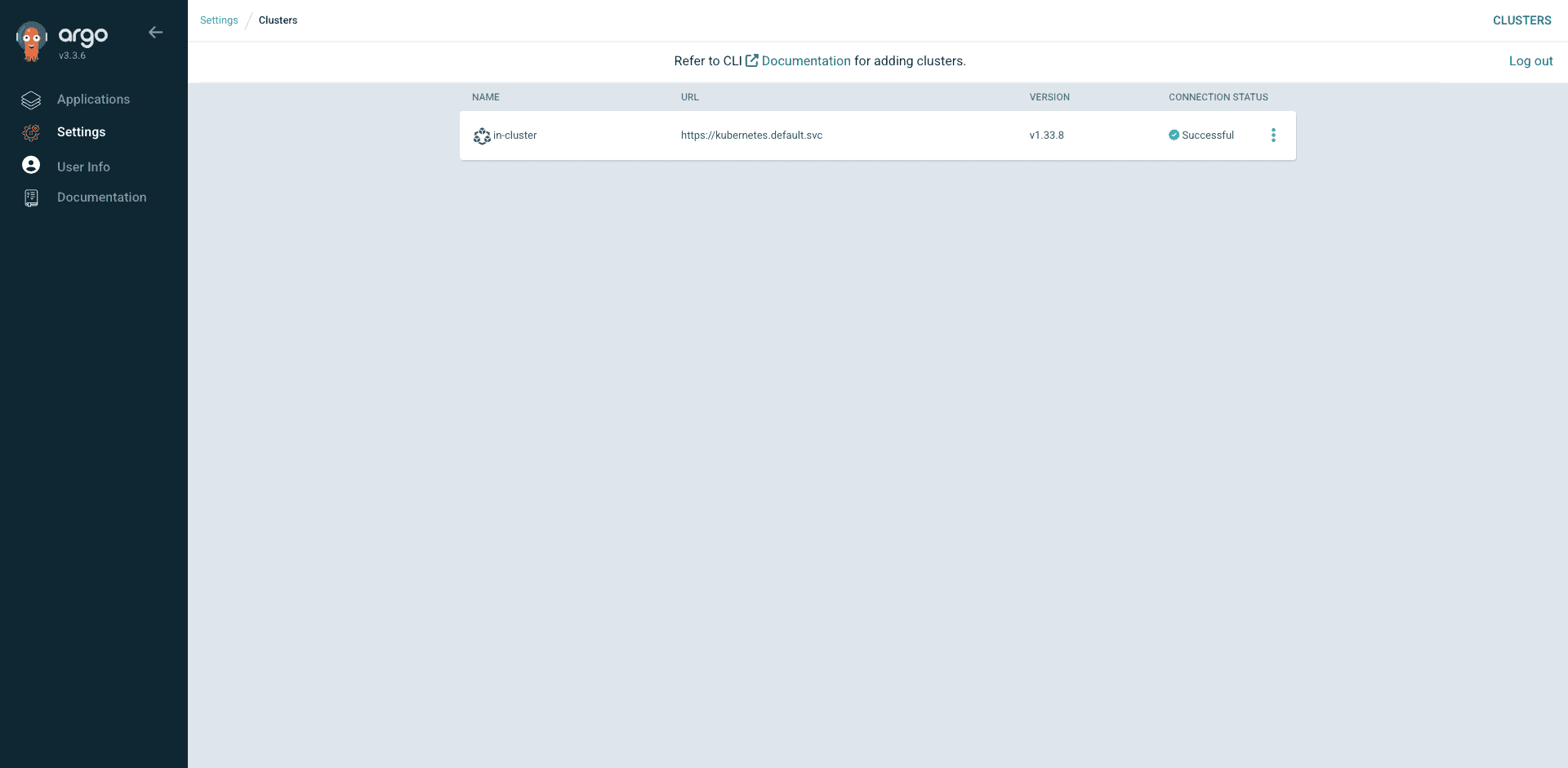

argocd cluster listThe in-cluster entry is always present (that’s the cluster Argo CD itself runs on), and the new cluster appears alongside it:

```text
SERVER                                                                      NAME          VERSION   STATUS       MESSAGE   PROJECT
https://A6347B26C0ECF9245A09CDB8839E9C3D.gr7.eu-west-1.eks.amazonaws.com   podid-lab-2   v1.33.8   Successful
https://kubernetes.default.svc                                              in-cluster    v1.33.8   Successful
```

Now create an Application pointing at the second cluster by setting destination.server to its API endpoint (destination.name: podid-lab-2 works too). Open a new manifest:

```bash
vim guestbook-cluster2.yaml
```

The only difference from the first guestbook Application is the destination server URL. Everything else stays the same, which shows Argo CD’s portability: the same manifest works against any registered cluster.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: guestbook-cluster2
  namespace: argocd
spec:
  project: default
  source:
    repoURL: https://github.com/argoproj/argocd-example-apps.git
    targetRevision: HEAD
    path: guestbook
  destination:
    server: https://A6347B26C0ECF9245A09CDB8839E9C3D.gr7.eu-west-1.eks.amazonaws.com
    namespace: guestbook-multicluster
  syncPolicy:
    automated:
      prune: true
      selfHeal: true
    syncOptions:
      - CreateNamespace=true
```

Apply it on cluster 1 (where Argo CD lives):

```bash
kubectl apply -f guestbook-cluster2.yaml
```

Now query cluster 2 directly with --context to check where the pod actually runs:

```bash
kubectl --context podid-lab-2 -n guestbook-multicluster get pods
```

The guestbook pod is running on cluster 2 even though the Application lives on cluster 1:

```text
NAME                                READY   STATUS    RESTARTS   AGE
pod/guestbook-ui-85db984648-f888s   1/1     Running   0          61s
```

The Settings → Clusters page in the UI shows every registered cluster, its connection status, and its Kubernetes version. The in-cluster entry is always there by default, representing the cluster Argo CD itself runs on. After argocd cluster add, the second cluster shows up alongside it with its own Successful status indicator.

This is the moment multi-cluster GitOps clicks: one control plane, many workload clusters, one Git repo as the source of truth. For EKS-to-EKS, the bearer token approach works, but there’s a cleaner production pattern. Use IRSA on the Argo CD cluster with an IAM role that can call eks:DescribeCluster, and map that role into the target cluster through aws-auth or an EKS access entry. The cluster Secret then uses awsAuthConfig instead of a bearer token, so Argo CD authenticates with short-lived STS-based tokens rather than a long-lived Secret. See the official cluster management docs for the exact Secret format.
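Here is a sketch of such a cluster Secret for the second cluster, following the declarative cluster Secret format in the Argo CD docs. A few values are assumptions:

- The role ARN is hypothetical.
- clusterName assumes the EKS cluster is actually named podid-lab-2.
- caData must be the cluster’s base64-encoded CA bundle, which aws eks describe-cluster returns as certificateAuthority.data.

The env: prod label is optional and only there so a Cluster generator can select this cluster.

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: podid-lab-2-cluster
  namespace: argocd
  labels:
    # Marks this Secret as a cluster registration for Argo CD
    argocd.argoproj.io/secret-type: cluster
    # Optional: lets ApplicationSet Cluster generators select it
    env: prod
type: Opaque
stringData:
  name: podid-lab-2
  server: https://A6347B26C0ECF9245A09CDB8839E9C3D.gr7.eu-west-1.eks.amazonaws.com
  config: |
    {
      "awsAuthConfig": {
        "clusterName": "podid-lab-2",
        "roleARN": "arn:aws:iam::123456789012:role/argocd-deployer"
      },
      "tlsClientConfig": {
        "insecure": false,
        "caData": "<base64-encoded-ca-bundle>"
      }
    }
```

On the IAM side, the argocd-application-controller and argocd-server service accounts need the IRSA role annotation (chart keys controller.serviceAccount.annotations and server.serviceAccount.annotations). The assumed role also needs Kubernetes permissions on podid-lab-2.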

Sync Policies and Self-Heal

The sync policy block controls how aggressive Argo CD is about keeping the cluster in lockstep with Git. Three flags matter most.

- automated tells the controller to sync without a human clicking the Sync button. Without it, every change in Git waits for manual approval, which is useful for prod but painful for dev.

- prune tells Argo CD to delete resources that existed in Git and were removed. Without it, orphans pile up forever. Off by default because accidental deletion of a Deployment can take down production.

- selfHeal tells Argo CD to revert live edits. Someone runs kubectl edit deployment? The controller undoes it within the reconciliation interval. This is the feature that ends drift for good.

The syncOptions list fine-tunes behavior further. CreateNamespace=true saves you from the “namespace not found” error on first sync. ServerSideApply=true uses Kubernetes 1.22+ server-side apply, which handles field ownership correctly when multiple controllers touch the same resource. ApplyOutOfSyncOnly=true only re-applies resources that drifted, saving API server load on large Applications. RespectIgnoreDifferences=true pairs with the ignoreDifferences field to stop self-heal from fighting an HPA that modifies replica counts.
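Here is a sketch of an Application syncPolicy that turns on the options above together. Whether ServerSideApply and ApplyOutOfSyncOnly are worth it depends on the Application, so treat this as a menu, not a recommended default.

```yaml
spec:
  syncPolicy:
    automated:
      prune: true
      selfHeal: true
    syncOptions:
      - CreateNamespace=true      # create the destination namespace on first sync
      - ServerSideApply=true      # let the API server track field ownership
      - ApplyOutOfSyncOnly=true   # skip resources that are already in sync
```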

The HPA conflict is worth calling out because it bites everyone once. If you enable self-heal and use HPA, Argo CD keeps reverting the replica count that HPA set, and HPA keeps setting it back. The fix is to tell Argo CD to ignore the replicas field:

```yaml
spec:
  ignoreDifferences:
    - group: apps
      kind: Deployment
      jsonPointers:
        - /spec/replicas
  syncPolicy:
    automated:
      prune: true
      selfHeal: true
    syncOptions:
      - RespectIgnoreDifferences=true
```

For most environments, automated plus prune plus selfHeal plus the HPA ignore is the sensible baseline. For prod-critical workloads, consider leaving automated off and syncing manually so a human sees the diff before it ships.

RBAC and AppProjects for Team Isolation

One cluster, many teams, one Argo CD. Without RBAC, anyone with admin can deploy anything anywhere, which is fine for day one and terrible by day 30. The answer is AppProjects. Each team gets a project scoped to their allowed repos, destinations, and resource kinds. Inside the project, team-specific roles with JWT tokens power CI automation.

Everything in this section lives under the Settings menu in the UI. The Settings home page groups the admin surfaces: Repositories (connected Git repos), Repository certificates and known hosts, GnuPG keys, Clusters, Projects, Accounts, and Appearance. Most of your day-to-day AppProject work happens under Projects.

Open a file for the AppProject manifest:

```bash
vim team-alpha-project.yaml
```

A realistic project restricts the team to one repo, one namespace prefix, any namespaced resource kind, and Namespace objects at cluster scope. The roles block defines a CI-only role that can sync Applications but can’t create or delete them:

```yaml
apiVersion: argoproj.io/v1alpha1
kind: AppProject
metadata:
  name: team-alpha
  namespace: argocd
spec:
  description: Team Alpha applications
  sourceRepos:
    - https://github.com/example-org/team-alpha-gitops.git
  destinations:
    - server: https://kubernetes.default.svc
      namespace: alpha-*
  clusterResourceWhitelist:
    - group: ""
      kind: Namespace
  namespaceResourceWhitelist:
    - group: "*"
      kind: "*"
  roles:
    - name: ci-sync
      description: CI role allowed to sync but not modify apps
      policies:
        - p, proj:team-alpha:ci-sync, applications, sync, team-alpha/*, allow
        - p, proj:team-alpha:ci-sync, applications, get, team-alpha/*, allow
```

Apply it and mint a JWT token for the CI role:

```bash
kubectl apply -f team-alpha-project.yaml
argocd proj role create-token team-alpha ci-sync --expires-in 8760h
```

Refresh the Projects page in the UI and the new team-alpha project appears next to the default one. Every project shows its name, description, and a quick link into the details page.

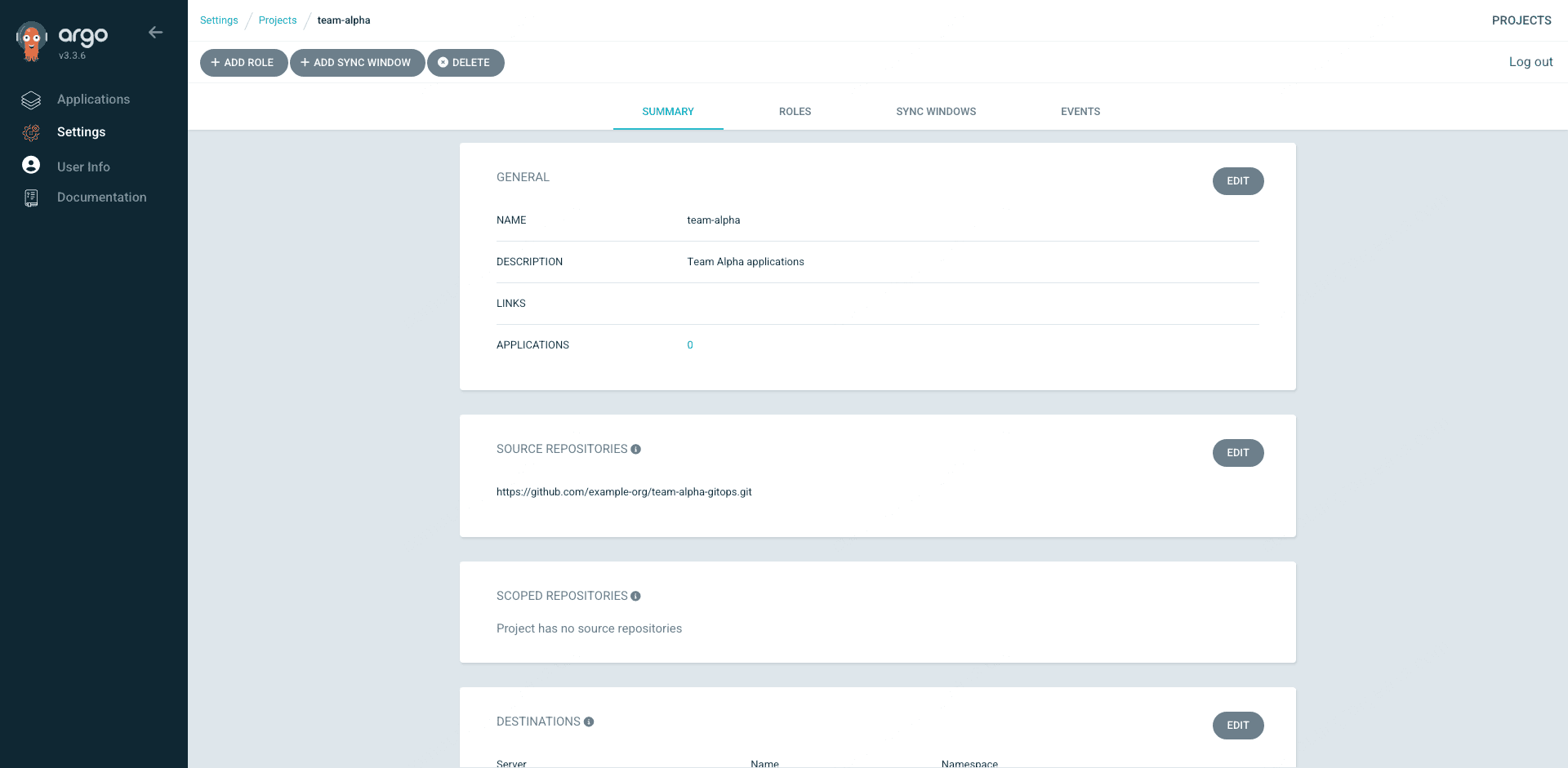

Click team-alpha to open the details view. The Summary tab shows the source repositories, destinations, resource whitelists, and any sync windows. The Roles tab lists the CI-only role we just defined, and the Sync Windows tab is where you’d schedule off-hours-only sync policies for change-frozen environments. Everything in the UI maps one-for-one to the YAML you applied, which is how you want it when you need to audit who can do what.

The printed token goes into your CI system as a secret and authenticates argocd app sync calls. Each team gets their own project, their own tokens, and no path to touch other teams’ apps. For server-wide RBAC (who can log in, who can create projects), edit the argocd-rbac-cm ConfigMap, which uses the same Casbin CSV format documented in the RBAC reference. A quick refresher on the Kubernetes side of this lives in the Kubernetes RBAC guide.
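For the server-wide side, a minimal argocd-rbac-cm might look like the sketch below. The role name team-alpha-dev and the SSO group example-org:team-alpha are hypothetical; use whatever group names your identity provider actually emits. If the Helm chart manages this ConfigMap in your install, put the same keys in the chart’s RBAC values instead of applying it directly.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: argocd-rbac-cm
  namespace: argocd
data:
  # Users who log in but match no rule fall back to read-only access
  policy.default: role:readonly
  # Hypothetical role team-alpha-dev can view and sync apps in the team-alpha project,
  # and the hypothetical SSO group example-org:team-alpha is bound to that role
  policy.csv: |
    p, role:team-alpha-dev, applications, get, team-alpha/*, allow
    p, role:team-alpha-dev, applications, sync, team-alpha/*, allow
    g, example-org:team-alpha, role:team-alpha-dev
```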

Notifications to Slack

Argo CD ships a notifications controller that pushes events to Slack, email, webhooks, Discord, Microsoft Teams, and a dozen other services. The Slack setup tripped a lot of people up in 2025 because token formats changed, so here’s a template that works on v3.3.6 with a Slack incoming webhook (swap the placeholder URL for a webhook from your workspace). Open the config:

```bash
vim argocd-notifications-cm.yaml
```

The ConfigMap has three parts: a service definition (where to send), a template (what the message looks like), and a trigger (when to fire). The template uses Go template syntax to pull fields from the Application object.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: argocd-notifications-cm
  namespace: argocd
data:
  service.webhook.slack: |
    url: https://hooks.slack.com/services/your-webhook-here
    headers:
      - name: Content-Type
        value: application/json
  template.app-sync-succeeded: |
    webhook:
      slack:
        method: POST
        body: |
          {
            "text": "Argo CD: {{.app.metadata.name}} synced successfully to {{.app.spec.destination.namespace}} on revision {{.app.status.sync.revision}}"
          }
  trigger.on-sync-succeeded: |
    - when: app.status.operationState.phase in ['Succeeded']
      send: [app-sync-succeeded]
```

Apply the ConfigMap and subscribe an Application by annotating it. The subscription annotation format is notifications.argoproj.io/subscribe.<trigger>.<service>:

```bash
kubectl apply -f argocd-notifications-cm.yaml
kubectl -n argocd annotate application guestbook \
  notifications.argoproj.io/subscribe.on-sync-succeeded.slack=platform-alerts
```

Trigger a sync and you’ll see the message pop up in Slack within a few seconds. With a webhook service, the destination channel is fixed by the webhook URL itself, so the annotation value does not pick the channel. For the native Slack integration (not webhook) you’d use service.slack with a bot OAuth token stored in argocd-notifications-secret. The webhook pattern shown here is simpler and works without an app installation in Slack. The full list of built-in triggers (on-sync-succeeded, on-sync-failed, on-health-degraded, on-deployed, and others) is in the notifications docs.
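For comparison, a sketch of the native Slack variant. It replaces the webhook version of argocd-notifications-cm rather than sitting next to it, the token is a placeholder, and it assumes the Slack bot has been invited to the target channel. With service.slack, the annotation value (platform-alerts in the command above) is the channel name.

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: argocd-notifications-secret
  namespace: argocd
type: Opaque
stringData:
  slack-token: xoxb-REPLACE-ME          # placeholder bot OAuth token
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: argocd-notifications-cm
  namespace: argocd
data:
  # $slack-token is resolved from argocd-notifications-secret
  service.slack: |
    token: $slack-token
  # Native Slack templates use a message field instead of a webhook body
  template.app-sync-succeeded: |
    message: |
      Argo CD: {{.app.metadata.name}} synced successfully to {{.app.spec.destination.namespace}} on revision {{.app.status.sync.revision}}
  trigger.on-sync-succeeded: |
    - when: app.status.operationState.phase in ['Succeeded']
      send: [app-sync-succeeded]
```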

Image Updater in Brief

Argo CD Image Updater (v1.1.1 at the time of writing) watches container registries and updates Application image tags when new versions appear. Two write-back methods exist. The default argocd method writes parameter overrides directly to the Application object, which is imperative and doesn’t survive an app delete. The git method commits changes back to the source repo, which keeps everything declarative and reviewable, and is the only choice for teams serious about GitOps.

For Helm applications with git write-back, the annotations target specific values file keys:

```yaml
annotations:
  argocd-image-updater.argoproj.io/image-list: myapp=ghcr.io/example/myapp
  argocd-image-updater.argoproj.io/myapp.update-strategy: semver
  argocd-image-updater.argoproj.io/myapp.allow-tags: 'regexp:^v[0-9]+\.[0-9]+\.[0-9]+$'
  argocd-image-updater.argoproj.io/myapp.helm.image-name: image.repository
  argocd-image-updater.argoproj.io/myapp.helm.image-tag: image.tag
  argocd-image-updater.argoproj.io/write-back-method: git:secret:argocd/git-creds
  argocd-image-updater.argoproj.io/write-back-target: helmvalues:/values.yaml
  argocd-image-updater.argoproj.io/git-branch: main
```

Install Image Updater as a Helm chart in the argocd namespace, provide it a Git credential Secret for the commit, and it picks up the annotations automatically. For ECR, Image Updater supports IRSA, so you can give its ServiceAccount an IAM role with ecr:DescribeImages and skip static AWS credentials entirely. A common gotcha: when the Application’s spec.source.repoURL is a Helm repo (not a Git repo), you must set writeBackConfig.gitConfig.repository explicitly or git write-back fails silently. Full reference at the Image Updater docs.
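The write-back-method value git:secret:argocd/git-creds points at a Secret named git-creds in the argocd namespace. A sketch for HTTPS Git access, assuming Image Updater reads username and password keys from that Secret (SSH setups use an SSH private key instead); the account name and token are placeholders.

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: git-creds
  namespace: argocd
type: Opaque
stringData:
  username: image-updater-bot           # hypothetical bot account
  password: ghp_REPLACE_ME              # placeholder token with write access to the repo
```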

Troubleshooting

Exact error strings, captured from real incidents, mapped to their fixes. Search engines love these because people paste errors verbatim into Google.

Error: “Failed to load target state: failed to generate manifest for source 1 of 1”

The repo-server can’t render your source. Common causes: a private Git repo with no credentials configured, a Helm chart version that no longer exists on the upstream repo, a Kustomize overlay that references a missing base, or a syntax error in your YAML. Check the repo-server logs for the specific reason:

```bash
kubectl -n argocd logs deploy/argocd-repo-server --tail=100
```

You’ll usually see a second line with the real failure. For private repos, add credentials via argocd repo add or a declarative repository Secret.

Error: “rpc error: code = Unavailable desc = no healthy upstream”

The argocd CLI is trying to speak gRPC to the server over a connection that doesn’t support HTTP/2 properly. This happens when you point the CLI at a plain-HTTP ALB without HTTP/2 annotations. Fix: either use kubectl port-forward for CLI operations (the pattern this guide recommends) or add alb.ingress.kubernetes.io/backend-protocol-version: HTTP2 and an HTTPS listener with an ACM certificate to the Ingress.

Application Stuck in OutOfSync

The app shows OutOfSync but looks fine. Three common causes. First, a mutating admission webhook is modifying resources after apply (common with Istio sidecar injection or cost-allocation labels), and Argo CD sees the drift on every reconcile. Fix with an ignoreDifferences block targeting the specific fields. Second, an HPA is adjusting replicas. Same fix, ignore /spec/replicas. Third, the live resource has annotations added by kubectl (like kubectl.kubernetes.io/last-applied-configuration) that Argo CD didn’t set. Server-side apply (ServerSideApply=true in syncOptions) usually resolves this cleanly.
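An ignoreDifferences block for webhook-added fields might look like the sketch below. Both targets are hypothetical stand-ins: a cost-center label stamped onto Deployments, and an init container named injected-init added to the pod template. Replace them with the fields your webhook actually mutates.

```yaml
spec:
  ignoreDifferences:
    # Hypothetical label added by a cost-allocation webhook
    - group: apps
      kind: Deployment
      jsonPointers:
        - /metadata/labels/cost-center
    # Hypothetical init container injected into the pod template
    - group: apps
      kind: Deployment
      jqPathExpressions:
        - '.spec.template.spec.initContainers[] | select(.name == "injected-init")'
```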

Error: “server address unspecified”

The argocd CLI can’t find a server context. Your session expired or the context file got wiped. Re-run argocd login against whichever address you’re using. If you run CLI commands from a script, always set ARGOCD_SERVER and ARGOCD_AUTH_TOKEN explicitly instead of relying on the local context file.
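A sketch of a CI step that sets both variables explicitly, written as a GitHub Actions step. It assumes the argocd CLI is already installed on the runner, and the hostname, secret name, and application name alpha-api are hypothetical. The --grpc-web flag tunnels the CLI’s gRPC traffic over HTTP/1.1, which helps when the path to argocd-server goes through an ALB.

```yaml
- name: Sync Argo CD application
  env:
    ARGOCD_SERVER: argocd.example.com                   # placeholder hostname
    ARGOCD_AUTH_TOKEN: ${{ secrets.ARGOCD_CI_TOKEN }}   # token from argocd proj role create-token
  run: |
    argocd app sync alpha-api --grpc-web
    argocd app wait alpha-api --health --timeout 300 --grpc-web
```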

Argo CD UI Blank Page Over Plain HTTP

The undocumented one that cost us real time. You set up the ALB Ingress, the health check passes, but loading the UI gives a blank page or an endless redirect loop. Argo CD’s server expects TLS by default and refuses to serve the UI correctly when it’s hit over plain HTTP. Fix: patch the deployment to add --insecure (shown in the Ingress section), then restart argocd-server so the pods come back with the new args:

```bash
kubectl -n argocd rollout restart deployment argocd-server
```

The UI loads correctly within seconds of the new pod being Ready.
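If Argo CD was installed with the Helm chart, a more durable option than patching the Deployment is to set the flag through chart values so the next helm upgrade doesn’t undo it. A sketch, assuming the argo-cd chart’s configs.params block:

```yaml
# values.yaml for the argo-cd Helm chart
configs:
  params:
    # Written to argocd-cmd-params-cm; argocd-server then serves plain HTTP behind the ALB
    server.insecure: true
```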

Security Hardening Checklist

A default install is not production-ready. Ten items that turn it into something you’d trust.

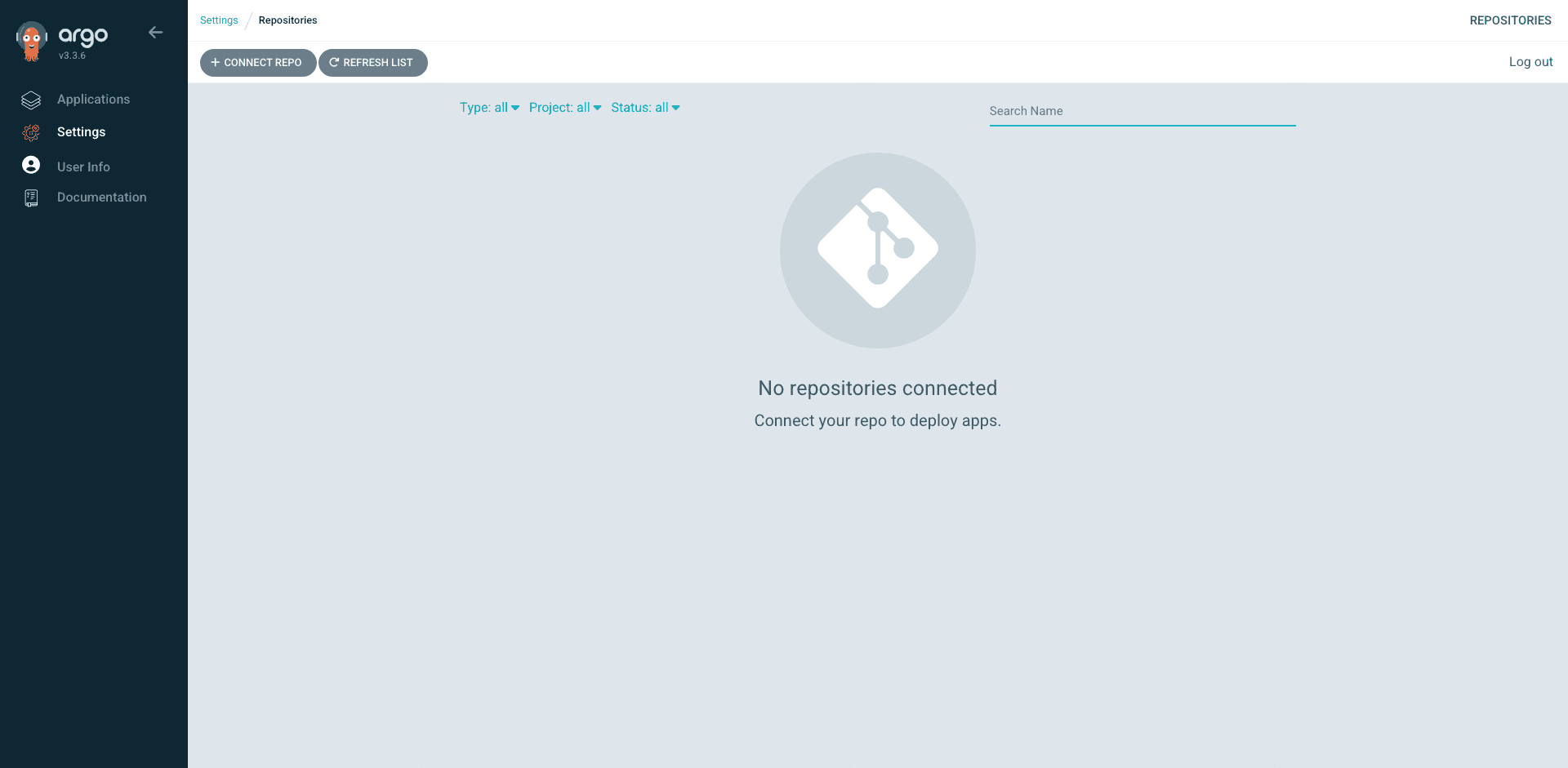

Before adding any repos that carry production code, visit Settings → Repositories. On a fresh install this is an empty page with a big Connect Repo button. For public demo repos (like the argocd-example-apps we used for guestbook) Argo CD doesn’t need credentials, so nothing shows up here even while those Applications work fine. For private repos, this is where you add the URL, credentials (SSH key, HTTPS with token, or GitHub App), and optional project scope.

- Rotate the initial admin password immediately after the first login. The random password in argocd-initial-admin-secret is only meant for bootstrap.

- Delete argocd-initial-admin-secret after the rotation. Argo CD does not remove it when the password changes, and it holds the bootstrap password in clear text.

- Configure SSO via OIDC (Cognito, Okta, Auth0, Keycloak, GitHub OAuth, any IdP that speaks OIDC). Disable the local admin account afterward by setting admin.enabled: "false" in argocd-cm.

- Use AppProjects to scope what each team can deploy, where, and from which repos. Never let teams share the default project in production.

- Exclude sensitive CRDs from management via resource.exclusions in argocd-cm (for example, you probably don’t want Argo CD touching cilium.io CRs managed by the CNI).

- Use ignoreDifferences for operational fields only, never to paper over a real drift problem. An HPA’s replica count is fine. Hiding a security context mismatch is not.

- Store Git credentials via External Secrets Operator instead of long-lived Kubernetes Secrets. ESO with IRSA pulls from AWS Secrets Manager and rotates tokens cleanly.

- Enable audit logging on argocd-server by setting server.log.level: info and server.log.format: json in the chart values. Ship the logs to CloudWatch or your log aggregator.

- Apply NetworkPolicies to the argocd namespace. Only argocd-server needs ingress from outside; the rest talk only to each other and the Kubernetes API.

- Stay on the v3.x release line. Argo CD v2.x reached EOL in 2025 and no longer receives security patches. Use chart v9.x for v3.3.x, and pin the version so upgrades are deliberate.
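Two of those items live in argocd-cm. A sketch of the relevant keys, assuming SSO is already working before you disable admin and that everything under cilium.io should be invisible to Argo CD. With a Helm install, put the same keys under configs.cm in the chart values rather than applying the ConfigMap by hand.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: argocd-cm
  namespace: argocd
data:
  # Turn off the local admin account once OIDC logins work
  admin.enabled: "false"
  # Hide every kind in the cilium.io API group from Argo CD on all clusters
  resource.exclusions: |
    - apiGroups:
        - cilium.io
      kinds:
        - "*"
      clusters:
        - "*"
```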

FAQ

What’s the difference between Argo CD and Flux?

Both are GitOps controllers that pull from Git and reconcile cluster state. Argo CD ships a rich web UI, has built-in multi-cluster management from a single control plane, and uses AppProjects for team isolation. Flux has no UI (Weave GitOps is a separate project), runs as a set of smaller controllers, and leans harder into Kustomize. Argo CD is more common in teams that want a visual dashboard and manage many clusters from one place. Flux is more common in teams that live in the CLI and value a lighter footprint.

Does Argo CD support Helm charts natively?

Yes. The argocd-repo-server renders Helm charts server-side and applies the resulting manifests. You can reference charts from HTTPS Helm repos, OCI registries, or embedded in Git. Values are provided either inline in the Application spec or via values files in a Git repo. Argo CD does not use helm install; it renders and applies, which means Helm’s release history is not maintained in the cluster. If you need helm rollback semantics, use Git revert instead.

How do I expose Argo CD on EKS?

Three options. Port-forward for quick testing. A LoadBalancer service for a dedicated ELB per Argo CD install. An Ingress managed by the AWS Load Balancer Controller for production, ideally sharing an ALB with other services. The production pattern needs an ACM certificate ARN on the Ingress annotations for HTTPS, plus the --insecure flag on argocd-server so it tolerates TLS termination at the ALB.
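A sketch of that production Ingress. The certificate ARN, hostname, and group name are placeholders, group.name lets several Ingresses share one ALB, and it assumes argocd-server already runs with --insecure because the ALB forwards plain HTTP to the Service on port 80.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: argocd-server
  namespace: argocd
  annotations:
    alb.ingress.kubernetes.io/scheme: internet-facing
    alb.ingress.kubernetes.io/target-type: ip
    alb.ingress.kubernetes.io/listen-ports: '[{"HTTP": 80}, {"HTTPS": 443}]'
    alb.ingress.kubernetes.io/ssl-redirect: "443"
    alb.ingress.kubernetes.io/certificate-arn: arn:aws:acm:us-east-1:111122223333:certificate/REPLACE-ME
    alb.ingress.kubernetes.io/group.name: shared-alb     # placeholder ALB group
spec:
  ingressClassName: alb
  rules:
    - host: argocd.example.com                           # placeholder hostname
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: argocd-server
                port:
                  number: 80
```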

Can Argo CD manage multiple Kubernetes clusters?

Yes. A single Argo CD install can manage any number of clusters. Add them with argocd cluster add (which creates a ServiceAccount and bearer token on the target) or declaratively via a cluster Secret. For EKS-to-EKS, the production pattern is to use IRSA with awsAuthConfig in the cluster Secret so tokens rotate automatically via STS rather than using a long-lived bearer token.

What are sync waves and when should I use them?

Sync waves order resources within a sync. Resources with lower wave numbers apply first. Use them whenever one resource depends on another being ready, like applying CRDs before their custom resources, or applying a database migration before the backend that reads the new schema. Combine with sync hooks (PreSync, PostSync) for things that need to run before or after the main sync phase, like maintenance-mode toggles.
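A sketch of the migration case. The Job, Deployment, image, and migrate argument are hypothetical. The Job is a Sync-phase hook in wave 0, so Argo CD waits for it to complete before applying the wave 1 Deployment, and BeforeHookCreation deletes the previous Job so the next sync can recreate it.

```yaml
apiVersion: batch/v1
kind: Job
metadata:
  name: db-migrate                                  # hypothetical migration Job
  annotations:
    argocd.argoproj.io/hook: Sync
    argocd.argoproj.io/hook-delete-policy: BeforeHookCreation
    argocd.argoproj.io/sync-wave: "0"
spec:
  backoffLimit: 1
  template:
    spec:
      restartPolicy: Never
      containers:
        - name: migrate
          image: ghcr.io/example/myapp:v1.2.3       # hypothetical image
          args: ["migrate"]                         # hypothetical migration entrypoint
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: backend                                     # hypothetical backend
  annotations:
    argocd.argoproj.io/sync-wave: "1"
spec:
  replicas: 2
  selector:
    matchLabels:
      app: backend
  template:
    metadata:
      labels:
        app: backend
    spec:
      containers:
        - name: backend
          image: ghcr.io/example/myapp:v1.2.3
```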

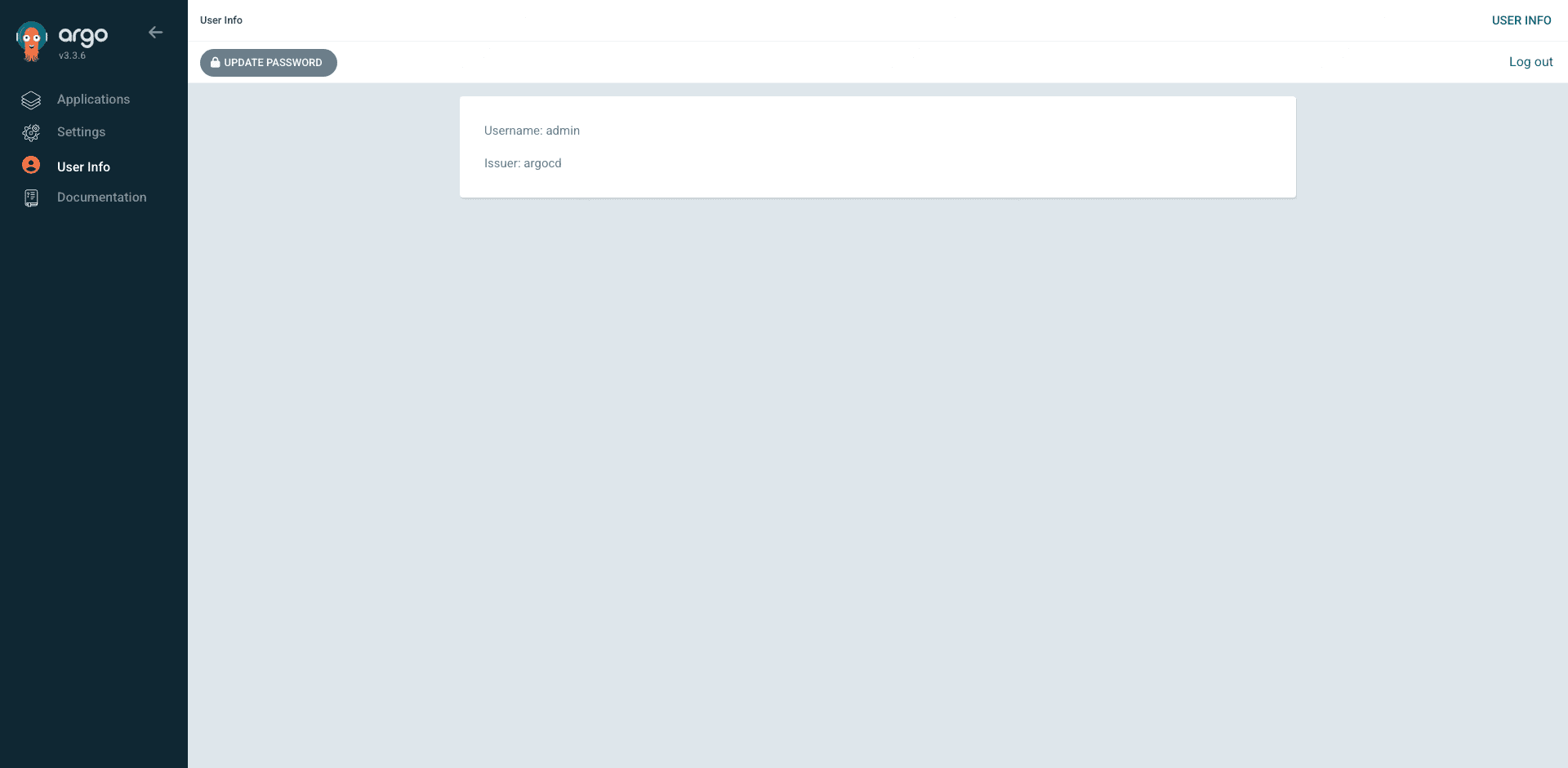

How do I rotate the initial admin password?

Log in with the initial password from argocd-initial-admin-secret, then navigate to User Info in the left sidebar. Click the Update Password button at the top, enter the current password and a new one, and confirm. Argo CD does not delete argocd-initial-admin-secret after the change, so remove it yourself (kubectl -n argocd delete secret argocd-initial-admin-secret); it only holds the bootstrap password in clear text. For SSO-based installs, disable the local admin account entirely by setting admin.enabled: "false" in argocd-cm so only OIDC-authenticated users can log in.

Does Argo CD work with AWS IRSA or Pod Identity?

Yes, both. Annotate the relevant Argo CD ServiceAccounts (argocd-application-controller, argocd-repo-server, argocd-image-updater) with an IAM role ARN and they can assume it transparently to pull ECR images, hit AWS Secrets Manager, or talk to other AWS services. Full setup is in the IRSA on EKS guide. Pod Identity is the newer AWS pattern that skips the OIDC setup and ships tokens via a local agent, and Argo CD works cleanly with both: see our EKS Pod Identity guide for the full setup and a side-by-side comparison with IRSA.
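With the Helm chart, the IRSA annotations can go in the values file instead of being patched onto ServiceAccounts by hand. A sketch, assuming the argo-cd chart’s per-component serviceAccount.annotations keys; the role ARNs are placeholders, and existing pods need a restart to pick up the new role.

```yaml
# values.yaml for the argo-cd Helm chart (role ARNs are placeholders)
controller:
  serviceAccount:
    annotations:
      eks.amazonaws.com/role-arn: arn:aws:iam::111122223333:role/argocd-application-controller
server:
  serviceAccount:
    annotations:
      eks.amazonaws.com/role-arn: arn:aws:iam::111122223333:role/argocd-server
repoServer:
  serviceAccount:
    annotations:
      eks.amazonaws.com/role-arn: arn:aws:iam::111122223333:role/argocd-repo-server
```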

Production Checklist

What to do next, in order, to take this from a working lab into something you can put real workloads on.

- Switch the Helm install to the HA values profile (three argocd-server replicas, sharded application-controller, redis-ha)

- Terminate TLS at the ALB with an ACM certificate and set alb.ingress.kubernetes.io/listen-ports to HTTPS 443, with an HTTP to HTTPS redirect

- Enable OIDC SSO against your identity provider and disable the local admin account

- Create one AppProject per team with tightly scoped source repos and destination namespaces

- Move from inline Helm values to values files in a dedicated Git repo so every value change is reviewable

- Turn on Slack or Teams notifications for on-sync-failed and on-health-degraded so humans find out before users do

- Add a second EKS cluster for staging or DR, register it with awsAuthConfig using IRSA, and deploy a canary Application to prove the multi-cluster plumbing works

- Set up Argo CD Image Updater with git write-back to a dedicated releases branch so tag promotions are visible in PR review

- Write an App-of-Apps root that manages Argo CD itself, so the only thing outside GitOps is the initial helm install that bootstraps the cluster

- Pin every chart version and every container image tag. “latest” is how you end up restarting production at 2am
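For the first checklist item, an HA values file might start like the sketch below. The replica counts are illustrative, redis-ha expects enough nodes to spread its pods across, and controller replicas above one shard work by cluster, so they only help once Argo CD manages several clusters.

```yaml
# values.yaml sketch for an HA argo-cd install (counts are illustrative)
redis-ha:
  enabled: true
controller:
  replicas: 2          # more than one replica shards managed clusters across controllers
server:
  replicas: 3
repoServer:
  replicas: 2
applicationSet:
  replicas: 2
```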

The reference docs worth bookmarking: the Argo CD operator manual, the Argo CD GitHub repo for release notes, and the IRSA guide and kubectl cheat sheet for the EKS pieces that make all of this click together.