With the surge of information in this age of connectivity, many applications need ever more data to describe clients, to feed AI, to power large data projects and so on. Dealing with petabyte-scale information calls for efficient, reliable and stable systems that enable fast processing, storage and retrieval of data. Several systems already handle data at this scale, including Ceph, GlusterFS, HDFS, MinIO and SeaweedFS. Each is brilliant in the way it handles object storage, and in this guide we shall focus on SeaweedFS. We shall look at its features and then get it installed. Before everything else, let us get acquainted with SeaweedFS.

SeaweedFS is a distributed object store and file system to store and serve billions of files fast! Object store has O(1) disk seek, transparent cloud integration. Filer supports cross-cluster active-active replication, Kubernetes, POSIX, S3 API, encryption, Erasure Coding for warm storage, FUSE mount, Hadoop, WebDAV. Source: SeaweedFS GitHub Space

SeaweedFS started as an Object Store to handle small files efficiently. Instead of managing all file metadata in a central master, the central master only manages file volumes, and it lets these volume servers manage files and their metadata. This relieves concurrency pressure from the central master and spreads file metadata into volume servers, allowing faster file access (O(1), usually just one disk read operation). Source: SeaweedFS GitHub Space

Seaweed has two objectives:

- To store billions of files!

- To serve the files fast!

Features of SeaweedFS

SeaweedFS offers the following features:

- SeaweedFS can transparently integrate with the cloud: It can achieve both fast local access time and elastic cloud storage capacity, without any client side changes.

- SeaweedFS is so simple with O(1) disk reads that you are welcome to challenge the performance with your actual use cases. It is only 40 bytes of disk storage overhead for each file’s metadata.

- You can choose no replication or different replication levels, with rack and data center awareness.

- Automatic master servers failover – no single point of failure (SPOF).

- Automatic Gzip compression depending on file mime type.

- Automatic compaction to reclaim disk space after deletion or update.

- Automatic entry TTL expiration.

- Any server with some disk space can add to the total storage space.

- Adding/Removing servers does not cause any data re-balancing unless triggered by admin commands.

- Optional picture resizing.

Installation of SeaweedFS on Ubuntu

Now to the part where we put on our boots and gloves and get into the farm to get SeaweedFS installed, tilled and well watered on our Ubuntu server. Before we shove the shovel into the mud, follow the steps below to first install Go, which is required to build SeaweedFS.

Step 1: Prepare Your Server

This is a very important step since we refresh the package index so that the latest software and patches are available before proceeding to install SeaweedFS and Go. We shall also install the tools we need here.

sudo apt update

sudo apt install vim curl wget zip git -y

sudo apt install build-essential autoconf automake gdb git libffi-dev zlib1g-dev libssl-dev -y

Step 2: Fetch and install Go

You can install Go from the APT repository or manually from the official tarball.

Method 1: Install from APT repository:

Run the commands below to install Golang on Ubuntu from APT repos.

sudo apt install golang

Method 2: Install Manually

Visit Go download page to fetch the latest Go tarball version available as follows:

cd ~

wget -c https://golang.org/dl/go1.22.2.linux-amd64.tar.gz -O - | sudo tar -xz -C /usr/local

After that is done, we need to add the “/usr/local/go/bin” directory to the PATH environment variable so that the server will find the Go executable binaries. Do this by appending the following line either to the /etc/profile file (for a system-wide installation) or to the $HOME/.profile file (for the current user installation):

# For a system-wide installation (single quotes keep $PATH unexpanded until login)

echo 'export PATH=$PATH:/usr/local/go/bin' | sudo tee -a /etc/profile

# For the current user installation

echo 'export PATH=$PATH:/usr/local/go/bin' | tee -a $HOME/.profile

Depending on the file you edited, source it so that the new PATH environment variable is loaded into the current shell session:

$ source ~/.profile

# Or

$ source /etc/profile

Step 3: Checkout SeaweedFS Repository

In order to get SeaweedFS installed, we need to get the necessary files onto our server. All sources are on GitHub, so let us clone the repository and proceed to install.

cd ~

git clone https://github.com/chrislusf/seaweedfs.git

Step 4: Download, compile, and install SeaweedFS

Once all the sources have been cloned, navigate into the new directory and install SeaweedFS by executing the following commands:

$ cd ~/seaweedfs

$ make install

##Progress of the installation

$ go get -d ./weed/

go: downloading golang.org/x/sys v0.19.0

go: downloading github.com/aws/aws-sdk-go v1.51.30

go: downloading github.com/gorilla/mux v1.8.1

go: downloading github.com/hanwen/go-fuse/v2 v2.5.0

go: downloading github.com/hashicorp/raft v1.6.1

....

Once this is done, you will find the executable “weed” in your $GOPATH/bin directory. By default, Go creates $GOPATH under your home directory, so you will find weed at “~/go/bin/weed”, which is not in PATH. To fix this, we shall copy the SeaweedFS binary to /usr/local/bin like this:

sudo cp ~/go/bin/weed /usr/local/bin/

Now the “weed” command is in the PATH and we can continue to configure SeaweedFS comfortably, as illustrated in the following steps.

$ weed version

version 30GB 3.65 linux amd64

Step 5: Example Usage of SeaweedFS

To grasp the simple examples presented in this step, it helps to first wrap our minds around the working mechanism of SeaweedFS. The architecture is fairly simple. The actual data is stored in volumes on storage nodes (which can lie on the same server or on different servers). One volume server can have multiple volumes, and can support both read and write access with basic authentication. Source: SeaweedFS Documentation

All volumes are managed by a master server which contains the volume id to volume server mapping.

Instead of managing chunks as distributed file systems do, SeaweedFS manages data volumes in the master server. Each data volume is 32GB or so in size, and can hold a lot of files. And each storage node can have many data volumes. So the master node only needs to store the metadata about the volumes, which is a fairly small amount of data and is generally stable. Source: SeaweedFS Documentation
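For a rough feel of the numbers, the sketch below multiplies the number of volumes by the per-volume size limit. It assumes the 30000 MB limit that the master prints at startup (visible in the logs further down) and the -max values of 5 and 10 used for the two volume servers later in this guide. The variable names are made up for illustration, and replication or reserved space would lower the usable figure.

```shell
#!/bin/sh
# Rough upper bound on raw capacity: number of volumes x per-volume size limit.
# All values are taken from this guide; the variable names are illustrative.
volume_size_mb=30000   # "Volume Size Limit is 30000 MB" in the master log
max_volumes_a=5        # -max=5 on the first volume server
max_volumes_b=10       # -max=10 on the second volume server

total_volumes=$(( max_volumes_a + max_volumes_b ))
total_mb=$(( total_volumes * volume_size_mb ))

echo "volumes: $total_volumes"
echo "capacity: $total_mb MB"
```

With these values the master only has to track 15 volumes, however many files end up inside them.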

By default, the master node runs on port 9333 and the volume nodes run on port 8080. To visualize this, we shall start one master node and two volume nodes on ports 8080 and 8081 respectively. Ideally they should be started on different machines, but we shall use one server as an example. If you intend to start the volumes on different servers, make sure that the -mserver IP address points to the master. Moreover, the port on the master must be reachable from the volume servers/nodes.

SeaweedFS uses HTTP REST operations to read, write, and delete. The responses are in JSON or JSONP format.
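Since everything is plain HTTP, a volume server host can check that it reaches the master before anything is started on it. The snippet below is a minimal sketch, not part of SeaweedFS: it assumes curl is installed, the MASTER variable and the function name are made up for this example, and /cluster/status is assumed to be a harmless GET endpoint on the master (swap in any URL your master is known to serve).

```shell
#!/bin/sh
# Sketch: check that a master answers over HTTP before starting volume servers.
# Assumptions: curl is installed; /cluster/status is used as a harmless GET
# endpoint on the master (replace it with any URL your master serves).

# Succeeds (returns 0) only when the master replies with HTTP 200.
master_reachable() {
  # $1 = host:port of the master, e.g. localhost:9333
  code=$(curl -s -o /dev/null -m 5 -w '%{http_code}' "http://$1/cluster/status")
  [ "$code" = "200" ]
}

if master_reachable "${MASTER:-localhost:9333}"; then
  echo "master reachable"
else
  echo "master not reachable"
fi
```

Run it on each machine that will host a volume server, setting MASTER to the same address you plan to pass to -mserver.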

Starting Master Server

As mentioned, the master node runs on port 9333 by default. We can start the master server as follows:

Option 1: The manual way

$ weed master &

[1] 31754

root@noble:~/seaweedfs# I0505 22:46:31.312670 file_util.go:27 Folder /tmp Permission: -rwxrwxrwx

I0505 22:46:31.313657 master.go:269 current: 167.235.57.147:9333 peers:

I0505 22:46:31.313950 master_server.go:132 Volume Size Limit is 30000 MB

I0505 22:46:31.314587 master.go:150 Start Seaweed Master 30GB 3.65 at 167.235.57.147:9333

I0505 22:46:31.320478 raft_server.go:118 Starting RaftServer with 167.235.57.147:9333

I0505 22:46:31.323216 raft_server.go:167 current cluster leader:

Option 2: Start master Using Systemd

You can start the master using Systemd by creating its unit file as follows:

sudo tee /etc/systemd/system/seaweedmaster.service<<EOF

[Unit]

Description=SeaweedFS Master

After=network.target

[Service]

Type=simple

User=root

Group=root

ExecStart=/usr/local/bin/weed master

WorkingDirectory=/usr/local/go/bin/

SyslogIdentifier=seaweedfs-master

[Install]

WantedBy=multi-user.target

EOF

After updating the file, you need to reload the daemon and start the master as illustrated:

sudo systemctl daemon-reload

sudo systemctl start seaweedmaster

sudo systemctl enable seaweedmaster

Then check its status:

$ systemctl status seaweedmaster -l

● seaweedmaster.service - SeaweedFS Master

Loaded: loaded (/etc/systemd/system/seaweedmaster.service; enabled; preset: enabled)

Active: active (running) since Sun 2024-05-05 22:48:09 UTC; 6s ago

Main PID: 32423 (weed)

Tasks: 8 (limit: 2255)

Memory: 10.5M (peak: 10.6M)

CPU: 77ms

CGroup: /system.slice/seaweedmaster.service

└─32423 /usr/local/bin/weed master

May 05 22:48:09 noble systemd[1]: Started seaweedmaster.service - SeaweedFS Master.

May 05 22:48:10 noble seaweedfs-master[32423]: I0505 22:48:10.050361 file_util.go:27 Folder /tmp Permission: -rwxrwxrwx

May 05 22:48:10 noble seaweedfs-master[32423]: I0505 22:48:10.053820 master.go:269 current: 167.235.57.147:9333 peers:

May 05 22:48:10 noble seaweedfs-master[32423]: I0505 22:48:10.054035 master_server.go:132 Volume Size Limit is 30000 MB

May 05 22:48:10 noble seaweedfs-master[32423]: I0505 22:48:10.054460 master.go:150 Start Seaweed Master 30GB 3.65 at 167.235.57.147:9333

May 05 22:48:10 noble seaweedfs-master[32423]: I0505 22:48:10.062097 raft_server.go:118 Starting RaftServer with 167.235.57.147:9333

May 05 22:48:10 noble seaweedfs-master[32423]: I0505 22:48:10.065201 raft_server.go:167 current cluster leader:

...

Start Volume Servers

Once the master is ready and waiting for the volumes, we can start the volume servers using the commands below. We shall first create sample directories.

mkdir /tmp/{data1,data2,data3,data4}

Then let us start the first volume server as shown below. (The first line is the command and what follows is the shell output.)

Option 1: The manual way

$ weed volume -dir="/tmp/data1" -max=5 -mserver="localhost:9333" -port=8080 &

I1126 20:37:24 6595 disk_location.go:133] Store started on dir: /tmp/data1 with 0 volumes max 5

I1126 20:37:24 6595 disk_location.go:136] Store started on dir: /tmp/data1 with 0 ec shards

I1126 20:37:24 6595 volume.go:331] Start Seaweed volume server 30GB 2.12 a1021570 at 0.0.0.0:8080

I1126 20:37:24 6595 volume_grpc_client_to_master.go:52] Volume server start with seed master nodes: [localhost:9333]

I1126 20:37:24 6595 volume_grpc_client_to_master.go:114] Heartbeat to: localhost:9333

I1126 20:37:24 6507 node.go:278] topo adds child DefaultDataCenter

I1126 20:37:24 6507 node.go:278] topo:DefaultDataCenter adds child DefaultRack

I1126 20:37:24 6507 node.go:278] topo:DefaultDataCenter:DefaultRack adds child 172.22.3.196:8080

I1126 20:37:24 6507 master_grpc_server.go:73] added volume server 172.22.3.196:8080

I1126 20:37:24 6595 volume_grpc_client_to_master.go:135] Volume Server found a new master newLeader: 172.22.3.196:9333 instead of localhost:9333

W1126 20:37:24 6507 master_grpc_server.go:57] SendHeartbeat.Recv server 172.22.3.196:8080 : rpc error:

code = Canceled desc = context canceled

I1126 20:37:24 6507 node.go:294] topo:DefaultDataCenter:DefaultRack removes 172.22.3.196:8080

I1126 20:37:24 6507 master_grpc_server.go:29] unregister disconnected volume server 172.22.3.196:8080

I1126 20:37:27 6595 volume_grpc_client_to_master.go:114] Heartbeat to: 172.22.3.196:9333

I1126 20:37:27 6507 node.go:278] topo:DefaultDataCenter:DefaultRack adds child 172.22.3.196:8080

I1126 20:37:27 6507 master_grpc_server.go:73] added volume server 172.22.3.196:8080

Then start the second one as follows. (The first line is the command and what follows is the shell output.)

$ weed volume -dir="/tmp/data2" -max=10 -mserver="localhost:9333" -port=8081 &

I1126 20:38:56 6612 disk_location.go:133] Store started on dir: /tmp/data2 with 0 volumes max 10

I1126 20:38:56 6612 disk_location.go:136] Store started on dir: /tmp/data2 with 0 ec shards

I1126 20:38:56 6612 volume_grpc_client_to_master.go:52] Volume server start with seed master nodes: [localhost:9333]

I1126 20:38:56 6612 volume.go:331] Start Seaweed volume server 30GB 2.12 a1021570 at 0.0.0.0:8081

I1126 20:38:56 6612 volume_grpc_client_to_master.go:114] Heartbeat to: localhost:9333

I1126 20:38:56 6507 node.go:278] topo:DefaultDataCenter:DefaultRack adds child 172.22.3.196:8081

I1126 20:38:56 6507 master_grpc_server.go:73] added volume server 172.22.3.196:8081

I1126 20:38:56 6612 volume_grpc_client_to_master.go:135] Volume Server found a new master newLeader: 172.22.3.196:9333 instead of localhost:9333

W1126 20:38:56 6507 master_grpc_server.go:57] SendHeartbeat.Recv server 172.22.3.196:8081 : rpc error:

code = Canceled desc = context canceled

I1126 20:38:56 6507 node.go:294] topo:DefaultDataCenter:DefaultRack removes 172.22.3.196:8081

I1126 20:38:56 6507 master_grpc_server.go:29] unregister disconnected volume server 172.22.3.196:8081

I1126 20:38:59 6612 volume_grpc_client_to_master.go:114] Heartbeat to: 172.22.3.196:9333

I1126 20:38:59 6507 node.go:278] topo:DefaultDataCenter:DefaultRack adds child 172.22.3.196:8081

I1126 20:38:59 6507 master_grpc_server.go:73] added volume server 172.22.3.196:8081

Option 2: Using Systemd

To start the volume servers using Systemd, we need to create one unit file per volume server: two here, and more if you require them. It is as simple as follows:

For Volume1

$ sudo vim /etc/systemd/system/seaweedvolume1.service

[Unit]

Description=SeaweedFS Volume

After=network.target

[Service]

Type=simple

User=root

Group=root

ExecStart=/usr/local/bin/weed volume -dir="/tmp/data2" -max=10 -mserver="172.22.3.196:9333" -port=8081

WorkingDirectory=/usr/local/go/bin/

SyslogIdentifier=seaweedfs-volume

[Install]

WantedBy=multi-user.target

Replace the volume path with your correct value, then start and enable the service.

sudo systemctl daemon-reload

sudo systemctl start seaweedvolume1.service

sudo systemctl enable seaweedvolume1.service

Check status:

$ systemctl status seaweedvolume1

● seaweedvolume1.service - SeaweedFS Volume

Loaded: loaded (/etc/systemd/system/seaweedvolume1.service; disabled; vendor preset: enabled)

Active: active (running) since Mon 2024-11-30 08:24:43 UTC; 3s ago

Main PID: 2063 (weed)

Tasks: 9 (limit: 2204)

Memory: 9.8M

CGroup: /system.slice/seaweedvolume1.service

└─2063 /usr/local/go/bin/weed volume -dir=/tmp/data3 -max=10 -mserver=localhost:9333 -port=8081 -ip=172.22.3.196

For Volume2

$ sudo vim /etc/systemd/system/seaweedvolume2.service

[Unit]

Description=SeaweedFS Volume

After=network.target

[Service]

Type=simple

User=root

Group=root

ExecStart=/usr/local/bin/weed volume -dir="/tmp/data1" -max=5 -mserver="172.22.3.196:9333" -port=8080

WorkingDirectory=/usr/local/go/bin/

SyslogIdentifier=seaweedfs-volume2

[Install]

WantedBy=multi-user.target

After updating the files, we have to reload the daemon, then start and enable the service as illustrated:

sudo systemctl daemon-reload

sudo systemctl start seaweedvolume2

sudo systemctl enable seaweedvolume2

Then check its status:

$ sudo systemctl status seaweedvolume2

● seaweedvolume2.service - SeaweedFS Volume

Loaded: loaded (/etc/systemd/system/seaweedvolume2.service; disabled; vendor preset: enabled)

Active: active (running) since Mon 2020-11-30 08:29:22 UTC; 5s ago

Main PID: 2103 (weed)

Tasks: 10 (limit: 2204)

Memory: 10.3M

CGroup: /system.slice/seaweedvolume2.service

└─2103 /usr/local/go/bin/weed volume -dir=/tmp/data4 -max=5 -mserver=localhost:9333 -port=8080 -ip=172.22.3.196

Write a sample File

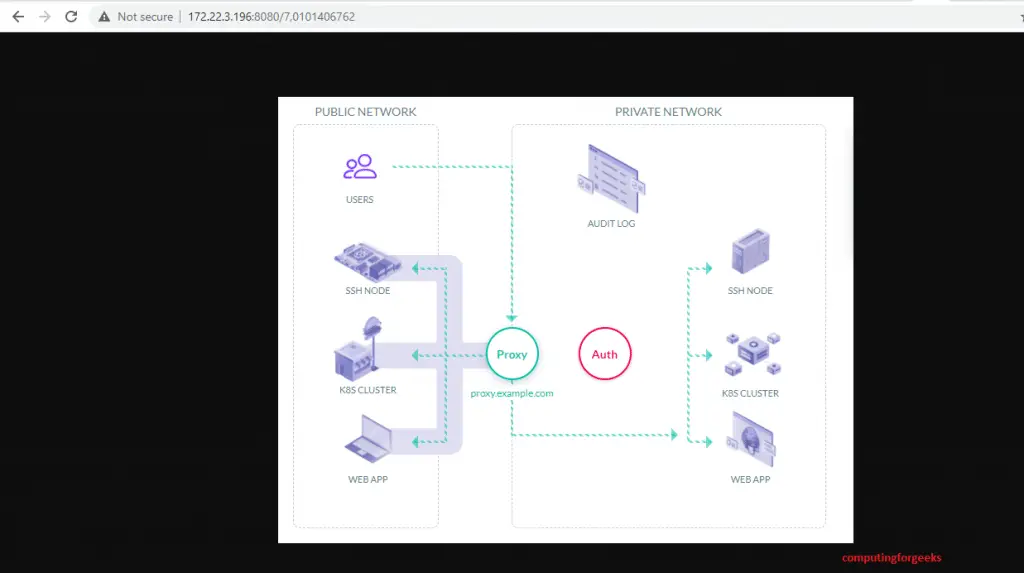

Uploading a file to the SeaweedFS object store is interesting. We first have to send an HTTP POST, PUT, or GET request to /dir/assign to get a File ID (fid) and a volume server URL:

$ curl http://localhost:9333/dir/assign

{"fid":"7,0101406762","url":"172.22.3.196:8080","publicUrl":"172.22.3.196:8080","count":1}

With those details in hand, the next step is to store the file content. To do this, we send an HTTP multipart POST request to url + ‘/’ + File ID (fid) from the response. Our fid is 7,0101406762 and our url is 172.22.3.196:8080. Let us send the request like this. You will get a response as shown below it.

$ curl -F file=@/home/tech/teleport-logo.png http://172.22.3.196:8080/7,0101406762

{"name":"teleport-logo.png","size":70974,"eTag":"ef8deb64899176d3de492f2fa9951e14"}

Update a file already sent to the Object Store

Updating is simpler than you can imagine. You simply need to send the same command as above, but now with the new file you intend to replace the existing one with. The fid and url stay the same.

curl -F file=@/home/tech/teleport-logo-updated.png http://172.22.3.196:8080/7,0101406762

Deleting a file from the Object Store

In order to get rid of a file that you had already stored in SeaweedFS, you simply have to send an HTTP DELETE request to the same url + ‘/’ + File ID (fid) URL:

curl -X DELETE http://172.22.3.196:8080/7,0101406762

Reading a saved File

Once you have your files stored, you can read them quite easily. You first look up the volume server’s URLs by the file’s volumeId, as shown in this example:

$ curl "http://172.22.3.196:9333/dir/lookup?volumeId=7"

{"volumeId":"7","locations":[{"url":"172.22.3.196:8080","publicUrl":"172.22.3.196:8080"}]}

Because volumes do not move often, you can cache the results most of the time to improve the speed and performance of your implementation. Depending on the replication type, one volume can have multiple replica locations. Just randomly pick one location to read.
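The lookup-then-pick step can be sketched in a few lines of shell. This is only an illustration under stated assumptions: the lookup response below is a hypothetical one with two replicas (the second address, on port 8081, is made up to show the random pick), the function names are invented for this example, and the grep/cut parsing only suits flat responses like the one above.

```shell
#!/bin/sh
# Sketch: derive the volume id from a fid, then pick one replica at random.

# The volume id is the part of the fid before the comma.
fid='7,0101406762'
volume_id=${fid%%,*}

# Hypothetical /dir/lookup response with two replicas (second one is made up).
lookup='{"volumeId":"7","locations":[{"url":"172.22.3.196:8080","publicUrl":"172.22.3.196:8080"},{"url":"172.22.3.196:8081","publicUrl":"172.22.3.196:8081"}]}'

# Print every replica "url" value, one per line.
list_locations() {
  printf '%s\n' "$1" | grep -o '"url":"[^"]*"' | cut -d'"' -f4
}

# Read lines on stdin and print one of them at random.
pick_random() {
  awk 'BEGIN { srand() } { lines[NR] = $0 } END { if (NR > 0) print lines[int(rand() * NR) + 1] }'
}

chosen=$(list_locations "$lookup" | pick_random)
echo "volume $volume_id -> http://$chosen/$fid"
```

In a real script the `lookup` variable would hold the output of the curl call to /dir/lookup, and the result could be kept per volume id instead of being fetched on every read.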

Now open your browser, or whichever application you prefer, to view the file you stored in the SeaweedFS object store and point it to the URL we got above. In case you have a firewall running, allow the port you will be accessing:

sudo ufw allow 8080

http://172.22.3.196:8080/7,0101406762

A screenshot of the file is shared below in this example

If you want a nicer URL, you can use one of these alternative URL formats:

http://172.22.3.196:8080/7/0101406762/your_preferred_name.jpg

http://172.22.3.196:8080/7/0101406762.jpg

http://172.22.3.196:8080/7,0101406762.jpg

http://172.22.3.196:8080/7/0101406762

http://172.22.3.196:8080/7,0101406762

If you wish to get a scaled version of an image, you can add some parameters. Examples are shared below:

http://172.22.3.196:8080/7/0101406762.jpg?height=200&width=200

http://172.22.3.196:8080/7/0101406762.jpg?height=200&width=200&mode=fit

http://172.22.3.196:8080/7/0101406762.jpg?height=200&width=200&mode=fill

There is so much more that SeaweedFS is able to do, such as achieving no single point of failure through the use of multiple servers and multiple volumes. Check out the SeaweedFS Documentation on GitHub to find out more about this wonderful Object Storage tool.
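The assign, upload and delete calls used in this step can also be strung together in one script. The sketch below is a minimal illustration under a few assumptions: the function names and the MASTER variable are made up for this example, the sed-based JSON helper only copes with flat one-line responses like the ones shown in this guide, and error handling is kept to a minimum.

```shell
#!/bin/sh
# Sketch of the assign -> upload round trip, with a delete helper.
# MASTER and all function names are assumptions made for illustration.
MASTER=${MASTER:-localhost:9333}

# Pull a string field out of a flat, one-line JSON object.
json_field() {
  # $1 = JSON text, $2 = field name
  printf '%s\n' "$1" | sed -n "s/.*\"$2\":\"\([^\"]*\)\".*/\1/p"
}

# Ask the master for a file id and a volume server; prints "<url>/<fid>".
assign_target() {
  resp=$(curl -s "http://$MASTER/dir/assign") || return 1
  fid=$(json_field "$resp" fid)
  url=$(json_field "$resp" url)
  [ -n "$fid" ] && [ -n "$url" ] || return 1
  printf '%s/%s\n' "$url" "$fid"
}

# Upload (or overwrite) a local file: $1 = path, $2 = "<url>/<fid>".
upload_file() {
  curl -s -F "file=@$1" "http://$2"
}

# Delete a stored file: $1 = "<url>/<fid>".
delete_file() {
  curl -s -X DELETE "http://$1"
}

# Only talk to the cluster when a file path is given on the command line.
if [ "$#" -ge 1 ]; then
  target=$(assign_target) || { echo "assign failed" >&2; exit 1; }
  upload_file "$1" "$target"
  echo
  echo "stored at http://$target"
fi
```

Saved as, say, seaweed-put.sh (a hypothetical name), it would be run as `sh seaweed-put.sh /home/tech/teleport-logo.png`. The delete helper is defined but not called, so nothing is removed by accident. For anything beyond flat responses, a real JSON parser such as jq is a safer choice than sed.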

Closing Remarks

SeaweedFS can turn out to be invaluable in your projects, especially if they involve storage and retrieval of large amounts of data in the form of objects. In case you have applications that require fetching photos and similar data, SeaweedFS is a good place to settle in. Give it a shot! Meanwhile, we continue to appreciate your time on the blog and the relentless support you have shown thus far.