We live in a world where most of the things we use daily are run by computers deployed somewhere around the globe. Be it social media, online payments, mobile money transactions, or even voice communication, computers make all of these possible. Many important transactions happen on these computers, and the interested parties need to visualize them and put them to further use. A good example is visualizing and processing financial transactions to uncover new opportunities or solve problems. So how can we capture such data efficiently and reliably, now and in the future? There are technologies built to handle exactly that, and one of them is Apache Kafka.


Apache Kafka is an open-source distributed event streaming platform used by thousands of companies for high-performance data pipelines, streaming analytics, data integration, and mission-critical applications (source: Apache Kafka). Let us break down what all of that means step by step. An event is a digital record of the fact that “something took place” in the world or in your business.

Event streaming is the practice of capturing data in real-time from event sources (producers) like databases, sensors, mobile devices, cloud services, and software applications in the form of streams of events; storing these event streams durably for later retrieval; manipulating, processing, and reacting to the event streams in real-time as well as retrospectively; and routing the event streams to different destination technologies (consumers) as needed. Source: Apache Kafka.

The producer is the program/application or entity that sends data to the Kafka cluster. The consumer sits on the other side and receives data from the Kafka cluster. The Kafka cluster can consist of one or more Kafka brokers which sit on different servers.

“Life is really simple, but we insist on making it complicated.”

—Confucius(孔子)

Definition of other terms

- Topic: A topic is a common name used to store and publish a particular stream of data. For example, if you wish to store all the data about a page being clicked, you can give the topic a name such as “Clicked Page”.

- Partition: Every topic is split up into partitions (“buckets”). When a topic is created, the number of partitions needs to be specified, but it can be increased later as the need arises. Each message is stored in a partition with an incremental id known as its offset.

- Kafka Broker: Every server with Kafka installed on it is known as a broker. It is a container holding several topics along with their partitions.
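To make partitions and offsets concrete, here is a toy model in plain shell. It is not how Kafka stores data on disk; it only mimics the idea that each partition is an append-only log and that every message receives the next offset within its own partition. The function name `append_message` and the one-file-per-partition layout are made up for illustration.

```shell
# Toy model only: one text file per partition, one message per line.
# The offset of a message is its zero-based line index in that file.
append_message() {
    topic_dir=$1    # directory standing in for a topic
    partition=$2    # partition number, e.g. 0
    message=$3      # single-line message body
    log_file="$topic_dir/partition-$partition.log"

    mkdir -p "$topic_dir"
    touch "$log_file"
    # Lines already in the file = offset the new message will receive.
    offset=$(wc -l < "$log_file" | tr -d ' ')
    printf '%s\n' "$message" >> "$log_file"
    echo "$offset"
}
```

Calling `append_message /tmp/clicked-page 0 home` twice and then `append_message /tmp/clicked-page 1 about` once prints 0, 1 and 0: offsets grow per partition, not per topic.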

Apache Kafka Use Cases

The following are some of the ways you can take advantage of Apache Kafka:

- Message Broking: Compared with most messaging systems, Kafka has better throughput, built-in partitioning, replication, and fault tolerance, which makes it a good solution for large-scale message processing applications.

- Website Activity Tracking

- Log Aggregation: Kafka abstracts away the details of files and gives a cleaner abstraction of log or event data as a stream of messages.

- Stream Processing: capturing data in real-time from event sources; storing these event streams durably for later retrieval; and routing the event streams to different destination technologies as needed

- Event Sourcing: This is a style of application design where state changes are logged as a time-ordered sequence of records.

- Commit Log: Kafka can serve as a kind of external commit-log for a distributed system. The log helps replicate data between nodes and acts as a re-syncing mechanism for failed nodes to restore their data.

- Metrics: This involves aggregating statistics from distributed applications to produce centralized feeds of operational data.

Installing Kafka on CentOS 8/Rocky Linux 8

Apache Kafka requires Java for it to run.

Step 1: Preparing our Server

We shall begin by updating our CentOS / Rocky Linux server and getting Java installed. Luckily, the default OS repositories include the Java 8 and Java 11 LTS versions. Run the commands below:

sudo dnf update

sudo dnf install java-11-openjdk-devel vim wget git unzip -y

Step 2: Fetch Kafka on CentOS 8/Rocky Linux 8

With Java installed, let us now fetch Kafka. Head over to the Downloads page, look for the latest release, and pick an archive under Binary downloads. Click on the one recommended by Kafka and you will be redirected to a page with a link you can use to fetch it.

cd ~

wget https://downloads.apache.org/kafka/3.1.0/kafka_2.13-3.1.0.tgz

sudo mkdir /usr/local/kafka-server && cd /usr/local/kafka-server

sudo tar -xvzf ~/kafka_2.13-3.1.0.tgz --strip 1

The archive’s contents are extracted directly into /usr/local/kafka-server/ because the --strip 1 flag drops the top-level directory from the archive paths.
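Before relying on the extracted files, it can be worth checking the downloaded archive against its published checksum. The sketch below assumes you have also fetched a `.sha512` file for the archive from the same download page, and that it uses the `name: HEX HEX ...` layout, possibly wrapped over several lines. The function name `verify_sha512` is hypothetical.

```shell
# Compare a file's SHA-512 digest with the value in a published .sha512 file.
# Assumes the checksum file uses the "name: HEX HEX ..." layout (possibly
# wrapped over several lines); other layouts would need different parsing.
verify_sha512() {
    archive=$1
    checksum_file=$2

    # Drop an optional "filename:" prefix, remove whitespace, lowercase the hex.
    expected=$(sed 's/^.*://' "$checksum_file" | tr -d ' \t\r\n' | tr 'A-F' 'a-f')
    actual=$(sha512sum "$archive" | cut -d ' ' -f 1)

    if [ "$expected" = "$actual" ]; then
        echo "checksum OK"
    else
        echo "checksum MISMATCH" >&2
        return 1
    fi
}

# Example call (the .sha512 file is assumed to sit next to the archive):
# verify_sha512 ~/kafka_2.13-3.1.0.tgz ~/kafka_2.13-3.1.0.tgz.sha512
```

A mismatch usually means a truncated or corrupted download, in which case fetching the archive again is the simplest fix.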

Step 3: Create Kafka and Zookeeper Systemd Unit Files

Systemd unit files for Kafka and Zookeeper make common service actions such as starting, stopping, and restarting straightforward, and they keep Kafka consistent with how other services on the system are managed.

Let us begin with the Zookeeper service. Zookeeper manages Kafka’s cluster state and configurations.

sudo tee /etc/systemd/system/zookeeper.service<<EOF

[Unit]

Description=Apache Zookeeper Server

Requires=network.target remote-fs.target

After=network.target remote-fs.target

[Service]

Type=simple

ExecStart=/usr/local/kafka-server/bin/zookeeper-server-start.sh /usr/local/kafka-server/config/zookeeper.properties

ExecStop=/usr/local/kafka-server/bin/zookeeper-server-stop.sh

Restart=on-abnormal

[Install]

WantedBy=multi-user.target

EOF

Then for the Kafka service:

sudo tee /etc/systemd/system/kafka.service<<EOF

[Unit]

Description=Apache Kafka Server

Documentation=http://kafka.apache.org/documentation.html

Requires=zookeeper.service

After=zookeeper.service

[Service]

Type=simple

Environment="JAVA_HOME=/usr/lib/jvm/jre-11-openjdk"

ExecStart=/usr/local/kafka-server/bin/kafka-server-start.sh /usr/local/kafka-server/config/server.properties

ExecStop=/usr/local/kafka-server/bin/kafka-server-stop.sh

Restart=on-abnormal

[Install]

WantedBy=multi-user.target

EOF

After you are done adding the configurations, reload the systemd daemon to apply the changes and then start the services.

sudo systemctl daemon-reload

sudo systemctl enable --now zookeeper

sudo systemctl enable --now kafka

Confirm the status of the services you added:

systemctl status zookeeper kafka

Step 4: Install Cluster Manager for Apache Kafka (CMAK) | Kafka Manager

CMAK (previously known as Kafka Manager) is an open-source tool, developed by Yahoo, for managing Apache Kafka clusters.

cd ~

git clone https://github.com/yahoo/CMAK.git

Step 5: Configure CMAK on CentOS 8 / Rocky Linux 8

The minimum configuration is the Zookeeper hosts to be used for CMAK (previously known as Kafka Manager) state. This setting is found in the application.conf file in the conf directory. Change cmak.zkhosts="my.zookeeper.host.com:2181"; you can also specify multiple Zookeeper hosts by comma-delimiting them, like so: cmak.zkhosts="my.zookeeper.host.com:2181,other.zookeeper.host.com:2181"
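If you would rather script this change than open an editor, a small `sed` wrapper can do it. This is a sketch that assumes application.conf contains a line of the form `cmak.zkhosts="..."`; the function name `set_cmak_zkhosts` is hypothetical, and the host list must not contain `|` or `&`, which are special in the `sed` expression.

```shell
# Point cmak.zkhosts at the given ZooKeeper host list in a CMAK config file.
# Assumes the file contains a line of the form: cmak.zkhosts="..."
set_cmak_zkhosts() {
    conf_file=$1
    zk_hosts=$2    # e.g. localhost:2181 or host1:2181,host2:2181

    # Keep a .bak copy, then rewrite only the quoted cmak.zkhosts line.
    sed -i.bak "s|^cmak\.zkhosts=\".*\"|cmak.zkhosts=\"$zk_hosts\"|" "$conf_file"
    # Print the resulting line; fails if no such line exists in the file.
    grep '^cmak\.zkhosts="' "$conf_file"
}

# Example call:
# set_cmak_zkhosts ~/CMAK/conf/application.conf localhost:2181
```

The same edit can of course be made by hand, as shown next.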

$ vim ~/CMAK/conf/application.conf

cmak.zkhosts="localhost:2181"

After you are done adding your Zookeeper hosts, the command below creates a zip file that can be used to deploy the application. You should see a lot of output on your terminal as files are downloaded. Give it time to finish and compile.

cd ~/CMAK/

./sbt clean dist

When all is done, you should see a message like the one below:

[info] Your package is ready in /home/tech/CMAK/target/universal/cmak-3.0.0.6.zip

Change into the directory where the zip file is located and unzip it:

cd ~/CMAK/target/universal

unzip cmak-*.zip

cd cmak-*/

Step 5: Starting the service and Accessing it

After extracting the produced zip file and changing the working directory to it, as done in the previous step, you can run the service like this:

cd ~/CMAK/target/universal/cmak-*/

bin/cmak

By default, it listens on port 9000, so open your favorite browser and point it to http://ip-or-domain-name-of-server:9000. If your firewall is running, allow access to the port:

sudo firewall-cmd --permanent --add-port=9000/tcp

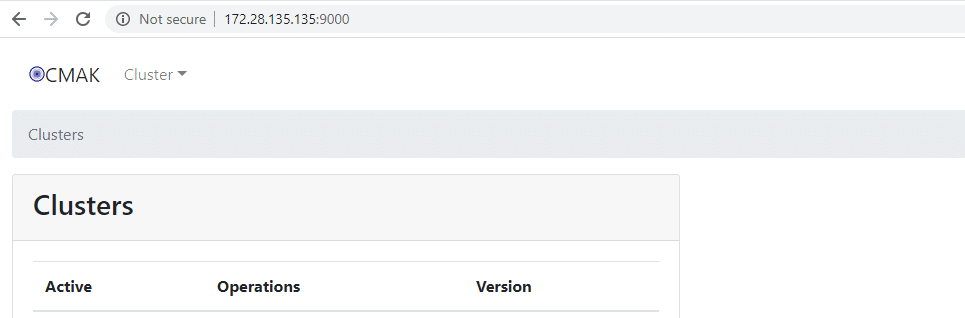

sudo firewall-cmd --reload

You should see an interface as shown below once everything is okay:

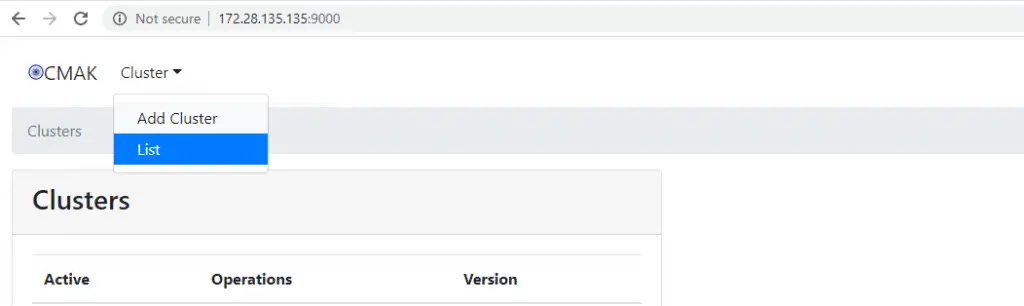

You will notice that no cluster is available when you first open the interface, as shown above. Therefore, we shall proceed to create a new cluster. Click on the “Cluster” drop-down list and then hit “Add Cluster”.

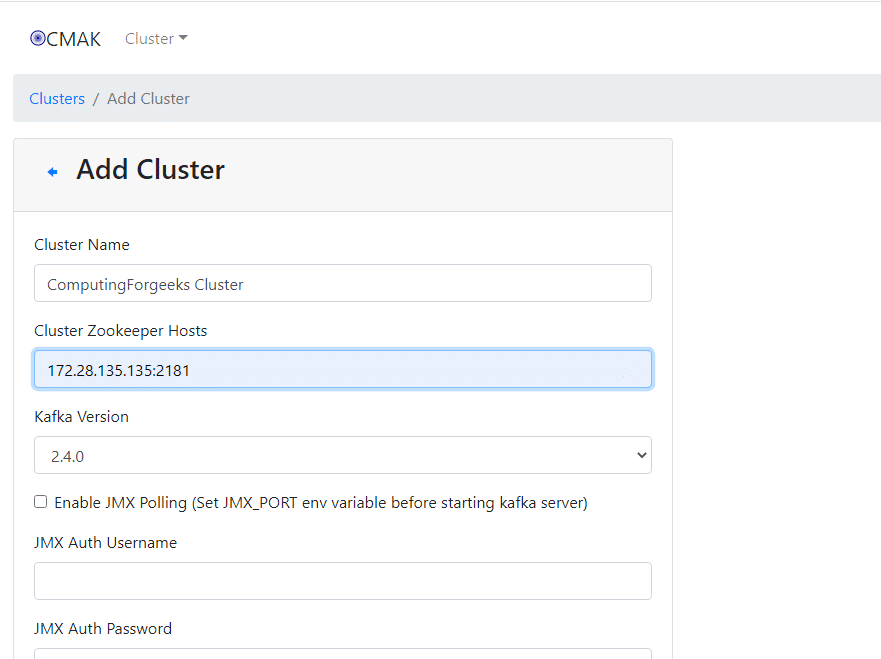

A form will be presented for you to fill in, as shown below. Give your cluster a name and add the Zookeeper host(s); if you have several, separate them with commas. You can fill in the other details depending on your needs.

Once everything is filled in to your satisfaction, scroll down and hit “Save”.

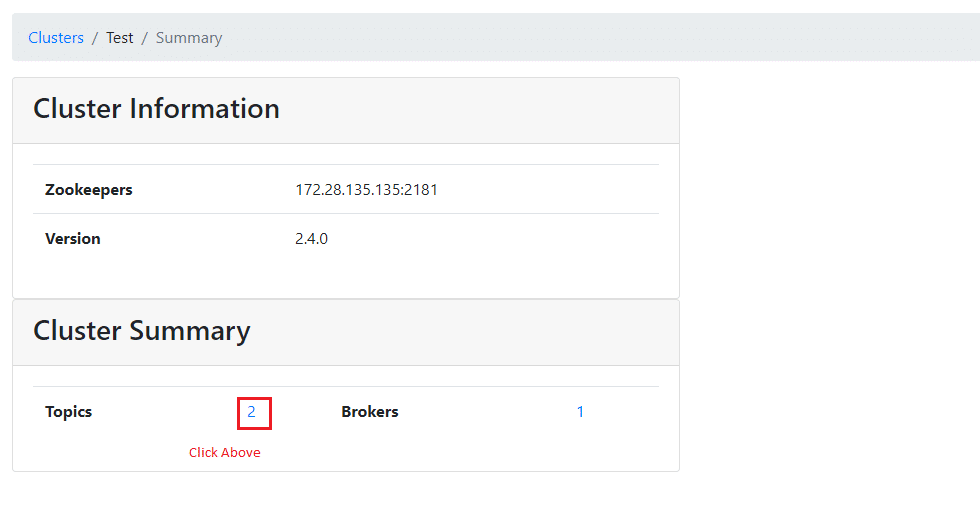

Your cluster is now added to the CMAK interface.

Step 6: Adding Sample Topic

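Apache Kafka provides multiple shell scripts to work with. Let us first create a sample topic called “ComputingForGeeksTopic” with a single partition and a single replica. Since Kafka 3.0, kafka-topics.sh no longer accepts the --zookeeper option, so the command talks to the broker through --bootstrap-server instead (localhost:9092 is the default listener). Open a new terminal, leaving CMAK running, and issue the commands below:

cd /usr/local/kafka-server

bin/kafka-topics.sh --create --bootstrap-server localhost:9092 --replication-factor 1 --partitions 1 --topic ComputingForGeeksTopic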

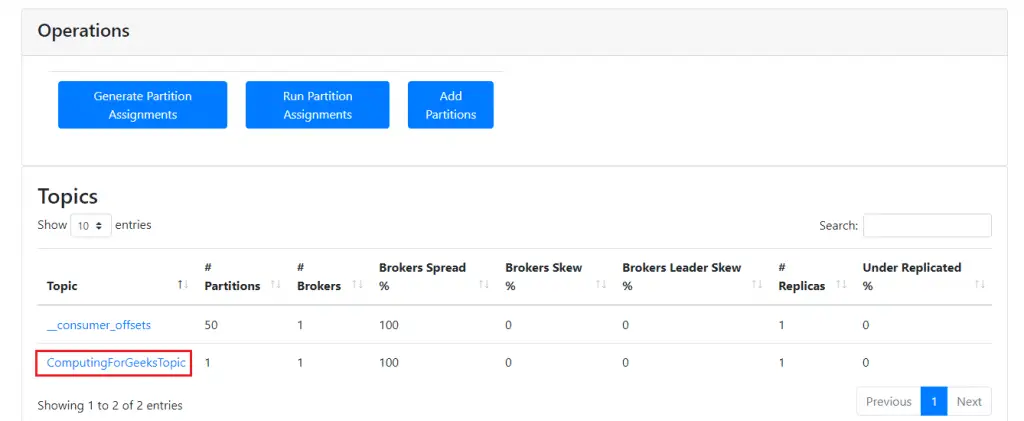

Created topic ComputingForGeeksTopic.

Confirm whether the topic shows up in the CMAK interface: under Topics, click on the number.

You should be able to see the new Topic we added as below:

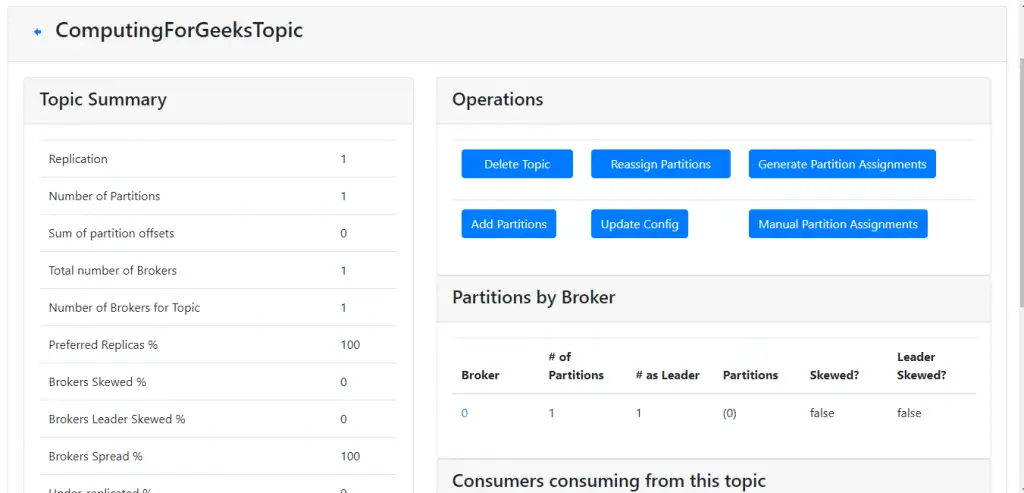

Click on it to view its other details, such as its partitions.
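The same details are available from the terminal, and the bundled console tools give a quick way to push a few test messages through the new topic. The wrappers below are a minimal sketch: the function names are hypothetical, and both defaults (the install path from this guide and a broker listening on localhost:9092) are assumptions you may need to adjust.

```shell
# Thin wrappers around Kafka's bundled CLI scripts.
# Both defaults are assumptions: the install path used in this guide and
# the broker's default listener address.
KAFKA_HOME="${KAFKA_HOME:-/usr/local/kafka-server}"
BOOTSTRAP="${BOOTSTRAP:-localhost:9092}"

# Show partitions, leaders, and replicas for one topic.
describe_topic() {
    "$KAFKA_HOME/bin/kafka-topics.sh" --describe \
        --bootstrap-server "$BOOTSTRAP" --topic "$1"
}

# Send each line read from stdin to the topic as one message.
produce_lines() {
    "$KAFKA_HOME/bin/kafka-console-producer.sh" \
        --bootstrap-server "$BOOTSTRAP" --topic "$1"
}

# Read the topic from its first offset and stop after N messages.
consume_first() {
    "$KAFKA_HOME/bin/kafka-console-consumer.sh" \
        --bootstrap-server "$BOOTSTRAP" --topic "$1" \
        --from-beginning --max-messages "$2"
}

# Example session, run against a live broker:
# describe_topic ComputingForGeeksTopic
# printf 'first\nsecond\n' | produce_lines ComputingForGeeksTopic
# consume_first ComputingForGeeksTopic 2
```

Here `produce_lines` plays the producer role and `consume_first` the consumer role described at the start of this guide.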

Step 7: Create Topic in CMAK interface

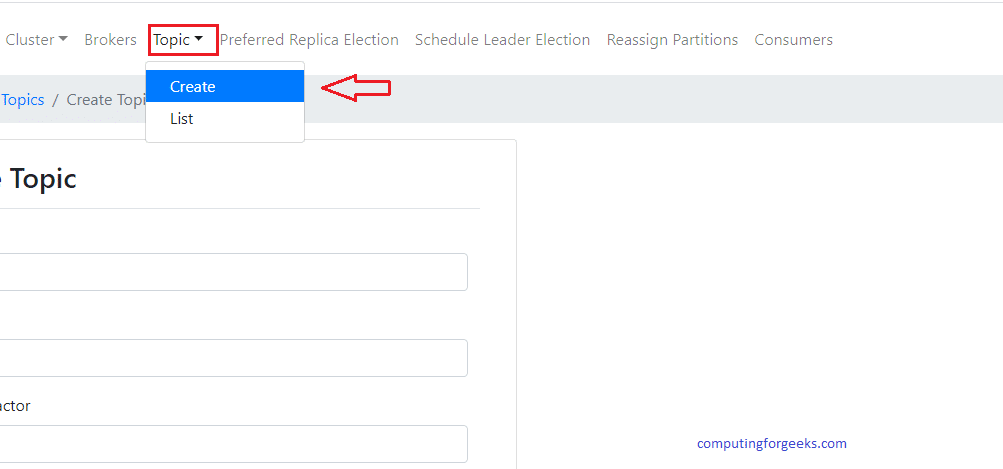

Another, simpler way of creating a topic is via the CMAK web interface. Simply click on the “Topic” drop-down list and then click “Create”. This is illustrated below.

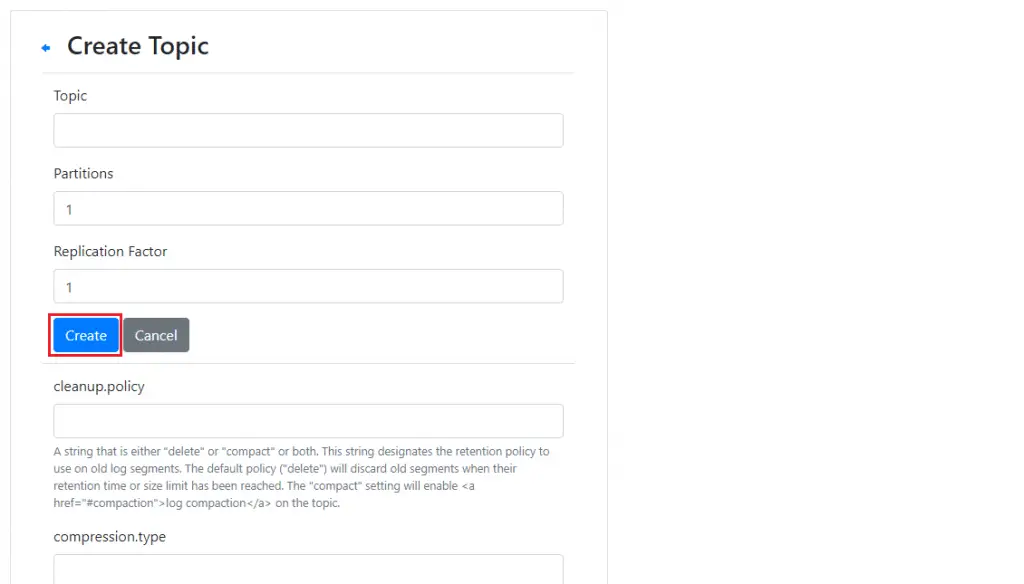

You will be required to enter the details of the new topic (replication factor, partitions, and others). Fill in the form, then click “Create” below it.

Conclusion

Apache Kafka is now installed on your CentOS 8/Rocky Linux 8 server. Note that it is also possible to install Kafka on multiple servers to create a cluster. Thank you for visiting and staying with us to the end. We appreciate the support that you continue to give us.

Find out more about Apache Kafka

Find out more about Cluster Manager for Apache Kafka
