KVM (Kernel-based Virtual Machine) is the hypervisor for OpenNebula’s Open Cloud Architecture. KVM is a complete virtualization system for Linux. It offers full virtualization, where each Virtual Machine interacts with its own virtualized hardware.

OpenNebula KVM Node Installation on CentOS – Requirements

The Hosts need a CPU with Intel VT or AMD’s AMD-V features in order to support virtualization. If you are unsure whether your hardware supports KVM, KVM’s "Preparing to use KVM" guide covers how to check.
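On a Linux host, these CPU features show up as the `vmx` (Intel VT) or `svm` (AMD-V) flag in `/proc/cpuinfo`. The sketch below wraps that check in a small helper. The function name `count_virt_cpus` is made up for illustration and is not part of OpenNebula or KVM.

```shell
#!/usr/bin/env bash
# Hypothetical helper: count the logical CPUs whose "flags" line lists
# vmx (Intel VT) or svm (AMD-V) in a cpuinfo-style file.
count_virt_cpus() {
    local cpuinfo="${1:-/proc/cpuinfo}"
    # Only lines that start with "flags" are considered, so other lines
    # that merely mention vmx (such as "vmx flags") are not counted.
    # grep -c prints the number of matching lines; it exits non-zero when
    # that number is 0, so "|| true" keeps the helper safe under "set -e".
    grep -Ec '^flags[[:space:]]*:.*[[:space:]](vmx|svm)([[:space:]]|$)' "$cpuinfo" || true
}
```

Calling `count_virt_cpus` with no argument reads `/proc/cpuinfo`. A result of 0 suggests the CPU does not expose these extensions to the operating system.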

Step 1: OpenNebula KVM Node Installation – Preparation

A few things need to be in place before you install the packages and start the required services.

Disable SELinux:

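OpenNebula doesn’t work well with SELinux in enforcing mode. Let’s switch it to permissive mode, both for the running system and in the configuration file so the change survives a reboot.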

sudo setenforce 0

sudo sed -i 's/^SELINUX=.*/SELINUX=permissive/g' /etc/selinux/config
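To see what the `sed` substitution above does before it touches the real file, the same expression can be run without `-i`, which only prints the result. This is a sketch; the helper name `preview_selinux_permissive` is an assumption for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: show the SELINUX= line as it would look after the
# substitution, without modifying the config file.
preview_selinux_permissive() {
    local config="${1:-/etc/selinux/config}"
    # Without -i, sed writes the edited text to stdout and leaves the
    # file untouched; grep then keeps only the SELINUX= setting.
    sed 's/^SELINUX=.*/SELINUX=permissive/' "$config" | grep '^SELINUX='
}
```

The pattern is anchored on `SELINUX=`, so neither commented lines nor the `SELINUXTYPE=` setting are affected.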

cat /etc/selinux/config

Add OpenNebula and epel repositories

Run the following commands to add epel and OpenNebula repositories on CentOS 7 / CentOS 8:

sudo yum -y install epel-release

Please check the recent version of OpenNebula as you install it.

Update the system:

sudo yum makecache

sudo yum -y update

sudo systemctl reboot

Step 2: Installing OpenNebula KVM Node on CentOS 7 / CentOS 8

Run the following command to install the node package. libvirt then has to be restarted so that it uses the OpenNebula-provided configuration file:

sudo yum install opennebula-node-kvm

Check more details about the package:

rpm -qi opennebula-node-kvm

For further configuration, check the specific guide: KVM.

You should have these lines in /etc/libvirt/libvirtd.conf for oneadmin to work well with KVM.

unix_sock_group = "oneadmin"

unix_sock_rw_perms = "0777"

Always restart libvirtd when you make a change.

sudo systemctl restart libvirtd

Step 3: Configure Passwordless SSH

The OpenNebula Front-end connects to the hypervisor Hosts using SSH. You must distribute the public key of the user oneadmin from all machines to the file /var/lib/one/.ssh/authorized_keys on all the machines. When the package was installed on the Front-end, an SSH key was generated and authorized_keys was populated. We also need to create a known_hosts file and sync it to the nodes. To create it, run this command as user oneadmin on the Front-end, with all the node names and the Front-end name as parameters:

sudo su - oneadmin

ssh-keyscan <frontend> <node1> <node2> <nodex> >> /var/lib/one/.ssh/known_hosts

Now we need to copy the directory /var/lib/one/.ssh to all the nodes. Key-based login is not in place on the nodes yet, so the copy will ask for the oneadmin password. You can reset that password on all nodes first:

sudo passwd oneadmin

Then, on the Front-end, run:

$ scp -rp /var/lib/one/.ssh <node1>:/var/lib/one/

$ scp -rp /var/lib/one/.ssh <node2>:/var/lib/one/

Test SSH from the Front-end. You should not be prompted for a password:

ssh <node1>

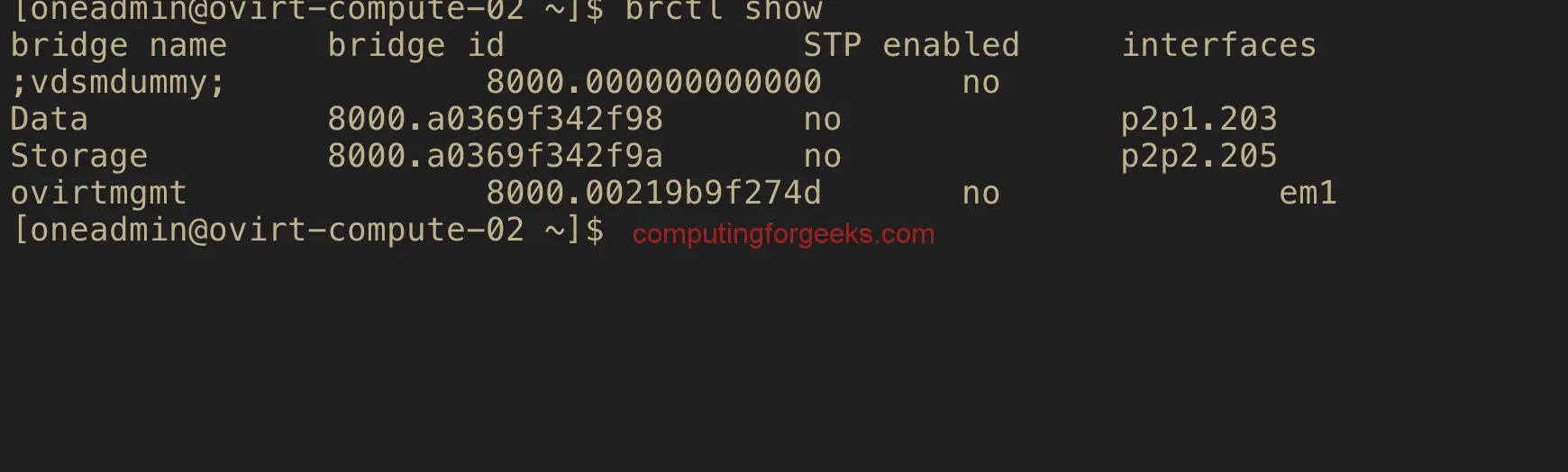

ssh <frontend>

Step 4: Configure Host Networking

The OpenNebula Front-end daemons need a network connection to the Hosts in order to manage and monitor them and to transfer Image files. It is highly recommended to use a dedicated network for management purposes.

OpenNebula supports four different networking modes:

- Bridged. The Virtual Machine is directly attached to an existing bridge in the hypervisor. This mode can be configured to use security groups and network isolation.

- VLAN. Virtual Networks are implemented through 802.1Q VLAN tagging.

- VXLAN. Virtual Networks implement VLANs using the VXLAN protocol, which relies on UDP encapsulation and IP multicast.

- Open vSwitch. Similar to the VLAN mode, but using an Open vSwitch bridge instead of a Linux bridge.

Documentation for each is provided on the links. My setup uses Bridged networking. I have three bridges on compute hosts, for storage, a private network, and public data.
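On Linux, a bridge device exposes a `bridge` directory under `/sys/class/net/<name>/`. That gives a simple way to confirm that the bridges a Bridged setup relies on exist on a compute host. The sketch below assumes that sysfs layout; the helper name `check_bridges`, the `SYSFS_NET` override, and the bridge names in the usage note are all hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report whether each named interface is a Linux bridge.
# SYSFS_NET may be overridden (for example when testing); by default the
# real sysfs tree is inspected.
check_bridges() {
    local sysfs="${SYSFS_NET:-/sys/class/net}"
    local name missing=0
    for name in "$@"; do
        # Linux bridge devices have a "bridge" subdirectory in sysfs.
        if [ -d "$sysfs/$name/bridge" ]; then
            echo "bridge  $name"
        else
            echo "missing $name"
            missing=1
        fi
    done
    # Non-zero exit status if at least one name is not a bridge.
    return "$missing"
}
```

With three bridges as in the setup described above, a call could look like `check_bridges br-storage br-private br-public`, where the names are placeholders for whatever the bridges are called on your hosts.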

For storage configurations, visit Open Cloud Storage

Step 5: Adding a Host to OpenNebula

The final step is adding a Host to OpenNebula. The node is registered on the OpenNebula Front-end so that OpenNebula can launch VMs on it. This step can be done in the CLI or in Sunstone, the graphical user interface. Follow just one method, not both, as they accomplish the same thing.

Adding a Host through Sunstone

In Sunstone, open Infrastructure > Hosts. Click on the + button and select KVM in the Type field.

Then fill in the FQDN or IP address of the node in the Hostname field.

Go back to the Hosts section and confirm that the node is in the ON state.

If the host goes into the err state instead of on, check /var/log/one/oned.log. Chances are it’s a problem with SSH!
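Since SSH is the most likely culprit, a quick non-interactive check from the Front-end (run as oneadmin) can narrow things down. The sketch below relies on the standard OpenSSH options `BatchMode` and `ConnectTimeout`; the helper name `check_passwordless_ssh` is made up for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report which hosts accept key-based SSH logins.
check_passwordless_ssh() {
    local host failed=0
    for host in "$@"; do
        # BatchMode=yes makes ssh fail instead of prompting for a password,
        # so a host that still needs a password shows up as FAIL.
        if ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" true </dev/null 2>/dev/null; then
            echo "OK   $host"
        else
            echo "FAIL $host"
            failed=1
        fi
    done
    # Non-zero exit status if any host could not be reached without a prompt.
    return "$failed"
}
```

It would be called with the same names used for `ssh-keyscan`, for example `check_passwordless_ssh <frontend> <node1> <node2>`. Any host reported as FAIL needs its /var/lib/one/.ssh directory or known_hosts entry revisited.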

Adding a Host through the CLI

To add a node to the cloud, run this command as oneadmin in the Front-end:

$ onehost create <node01> -i kvm -v kvm

$ onehost list

ID NAME CLUSTER RVM ALLOCATED_CPU ALLOCATED_MEM STAT

1 localhost default 0 - - init

# After some time - around 2 minutes

$ onehost list

ID NAME CLUSTER RVM ALLOCATED_CPU ALLOCATED_MEM STAT

0 192.168.70.82 default 0 0 / 1600 (0%) 0K / 94.2G (0%) on

This is the end of OpenNebula KVM Node Installation on CentOS 7 / CentOS 8. In the next guide, we cover Virtual configurations and Storage.