Network engineers preparing for CCNA, CCNP, or JNCIP labs need somewhere to break things safely. Physical hardware is expensive, Packet Tracer has limits, and spinning up a dozen real routers on a desk isn’t practical. GNS3 has been the answer for over a decade because it runs real IOS, IOS-XE, IOS-XR, JunOS, and Arista images inside your Linux host and wires them together with virtual cables.

This guide walks through the GNS3 server install on Ubuntu 24.04 LTS via the official PPA, adds Dynamips, ubridge, and QEMU/KVM backends, then connects to the bundled Web-UI so you can drive the lab from a browser. The same commands work on Ubuntu 26.04 LTS and Debian 13 with minor repository differences noted inline.

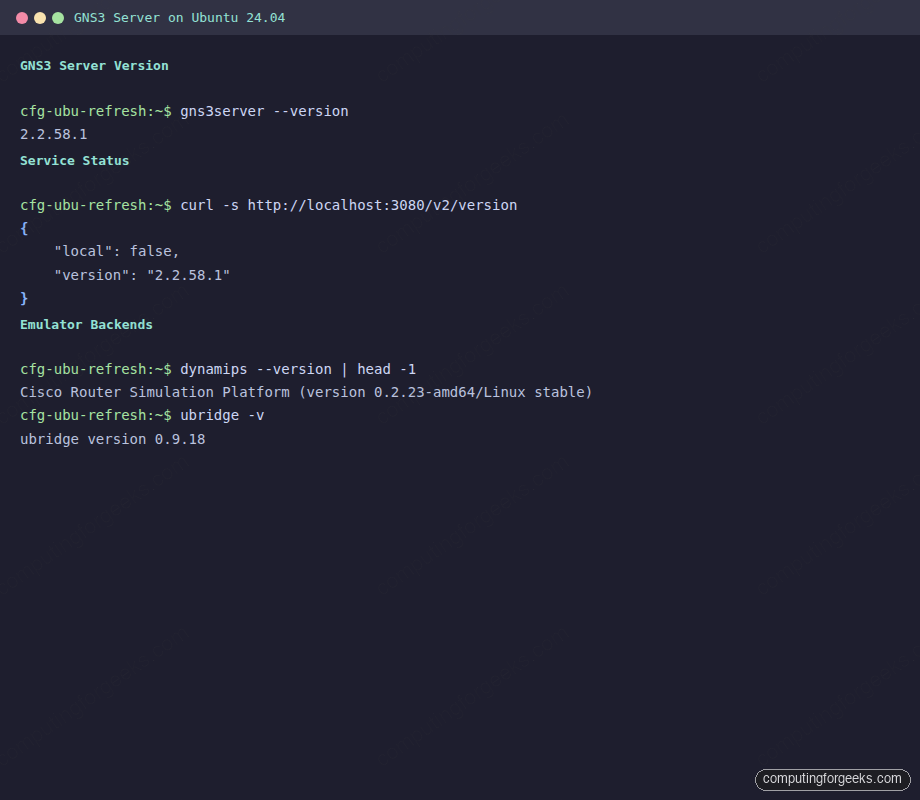

Tested April 2026 on Ubuntu 24.04.4 LTS with GNS3 server 2.2.58.1, Dynamips 0.2.23, ubridge 0.9.18, Python 3.12.3

Why GNS3 on Ubuntu

GNS3 on Ubuntu runs Cisco, Juniper, and Arista images at near line rate because Dynamips, QEMU/KVM, and Docker containers run natively on the Linux kernel. Windows and macOS users end up running GNS3 through a VM anyway, which adds a hypervisor layer between the lab and the host. Installing directly on Ubuntu skips that layer and lets you allocate every bit of RAM and every CPU core to the emulated routers.

The GNS3 Web-UI also matters here. Versions from 2.2.58 onwards bundle a browser client at /static/web-ui/, so you can manage projects, add nodes, and wire topologies without installing the Qt desktop client. That makes a headless Ubuntu 24.04 server running in a rack or cloud VM a first-class GNS3 host, not just a compute node for a remote GUI.

Prerequisites

- Ubuntu 24.04 LTS (or Ubuntu 26.04 LTS / Debian 13)

- At least 4 GB RAM and 20 GB free disk for Cisco and Juniper images

- CPU with VT-x or AMD-V for QEMU/KVM acceleration

- A user with sudo privileges

- Network access to Launchpad PPAs and the Ubuntu archive

For cloud-based labs, a 4 vCPU / 8 GB VPS comfortably runs a 6-router topology with VPCS endpoints. DigitalOcean and Hetzner Cloud both have images that boot straight into a usable Ubuntu 24.04 server in under a minute.

Verify KVM acceleration is available before installing QEMU:

grep -c -E 'vmx|svm' /proc/cpuinfo

A number greater than zero means the CPU supports hardware virtualization. On bare-metal Ubuntu 24.04 or a nested-virt-enabled VM, the count is at least the number of logical CPUs, since every CPU entry in /proc/cpuinfo carries the flag.
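For provisioning scripts, the same check can be wrapped in a small function. This is a minimal sketch: the names `virt_flag_count` and `check_kvm_ready` and the optional file argument are illustrative, not part of GNS3 or Ubuntu tooling. It only counts the per-CPU `flags` lines, so extra lines such as `vmx flags` on recent kernels do not inflate the number.

```bash
#!/usr/bin/env bash
# Sketch: report whether the CPU advertises VT-x (vmx) or AMD-V (svm).
# Function names and the optional path argument are illustrative assumptions.

# Print how many "flags" lines in cpuinfo carry a vmx or svm flag.
virt_flag_count() {
    local cpuinfo="${1:-/proc/cpuinfo}"
    # grep -c prints 0 when nothing matches but exits non-zero,
    # so the exit status is deliberately ignored.
    grep -E '^flags' "$cpuinfo" 2>/dev/null | grep -c -w -E 'vmx|svm' || true
}

# Print a one-line verdict; return non-zero when no flag is present.
check_kvm_ready() {
    local count
    count="$(virt_flag_count "${1:-/proc/cpuinfo}")"
    count="${count:-0}"
    if [ "$count" -gt 0 ]; then
        echo "hardware virtualization available on $count logical CPUs"
    else
        echo "no vmx/svm flag found; QEMU would fall back to software emulation"
        return 1
    fi
}
```

Calling `check_kvm_ready` with no argument reads /proc/cpuinfo; passing a path is only useful for testing against a saved copy.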

Step 1: Update the system and enable universe

GNS3’s dependencies come from Ubuntu’s universe repository. It is enabled by default on 24.04, but double-check before continuing. Update the system first, then install software-properties-common, which provides add-apt-repository:

sudo apt update && sudo apt upgrade -y

sudo apt install -y software-properties-common curl

Then make sure universe is enabled:

sudo add-apt-repository -y universe

With add-apt-repository available, the GNS3 PPA can be added in one command.

Step 2: Add the official GNS3 PPA

The GNS3 team publishes ppa:gns3/ppa with builds for every supported Ubuntu release. Add it and refresh the package index:

sudo add-apt-repository -y ppa:gns3/ppa

sudo apt update

The confirmation lists the PPA and the packages it makes available for your release:

Repository: 'Types: deb

URIs: https://ppa.launchpadcontent.net/gns3/ppa/ubuntu/

Suites: noble

Components: main

'

Description:

PPA for GNS3 and Supporting Packages.

On Debian 13, the PPA doesn’t ship a trixie suite, so you would have to add the GNS3 APT source manually, pointing it at noble and accepting that you’re mixing repositories. Most engineers on Debian instead install GNS3 from source or through pip inside a virtualenv, which the project documents at docs.gns3.com.

Step 3: Install the GNS3 server

For a headless install (no desktop, no Qt client on the same box), pull only the server package:

sudo apt install -y --no-install-recommends gns3-server

Confirm the version, which tells you which Web-UI bundle the server ships:

gns3server --version

Expected output on current Ubuntu 24.04 with the official PPA:

2.2.58.1

If you want the Qt desktop client as well (useful on an Ubuntu workstation, not a server), install the bundle:

sudo apt install -y gns3-gui gns3-server

The desktop client starts from the Activities menu or from a terminal with gns3. On a headless server, skip gns3-gui entirely and reach the server from a browser or from the GNS3 Qt client running on your laptop.

Step 4: Install emulator backends

The GNS3 server is a thin orchestrator. The actual emulation happens in separate binaries: Dynamips for Cisco IOS, ubridge for virtual Ethernet bridging, QEMU for full VMs, and Docker for container-based appliances like VyOS or FRRouting.

Install Dynamips and ubridge first:

sudo apt install -y dynamips ubridge

Verify Dynamips is on the expected stable branch:

dynamips --version | head -1

The output confirms the version and architecture:

Cisco Router Simulation Platform (version 0.2.23-amd64/Linux stable)

Add QEMU and libvirt for full VMs (IOS-XRv, Juniper vMX, Arista vEOS, and custom images):

sudo apt install -y qemu-system qemu-kvm libvirt-daemon-system

If you already run KVM for other virtualization work on this host, the full walkthrough for bridging, storage pools, and libvirt networking is in the dedicated Install KVM on Ubuntu 24.04 guide. For a pure GNS3 host that won’t serve non-GNS3 VMs, the default libvirt install is enough.

For Docker-based appliances (FRRouting, VyOS, Open vSwitch images), install Docker CE from the upstream repository. The standalone walkthrough is here: Install Docker CE on Ubuntu. The short version:

curl -fsSL https://get.docker.com | sudo sh

sudo systemctl enable --now docker

Docker Engine plus the GNS3 server is enough to run container-based appliances, but the user account driving GNS3 still needs the right group memberships to actually launch emulators with full privileges.

Step 5: Fix user permissions for KVM, libvirt, and ubridge

GNS3 runs emulators as whatever user starts gns3server. That user needs membership in three groups to launch QEMU with KVM acceleration and to let ubridge touch raw sockets:

sudo usermod -aG kvm,libvirt,ubridge $USER

Log out and back in so the new group membership takes effect, then verify:

id | tr ',' '\n' | grep -E 'kvm|libvirt|ubridge'

All three group names should appear. Without kvm, QEMU falls back to software emulation and throughput drops by an order of magnitude. Without ubridge, ARP between nodes silently fails because ubridge can’t open raw packet sockets.
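The grep above also matches related names such as libvirt-qemu, so a stricter check can help in scripts. The following is a minimal sketch that compares exact group names; `missing_groups` is a hypothetical helper name, and it takes the group lists as arguments so it can be fed from `id -nG`.

```bash
#!/usr/bin/env bash
# Sketch: list which required groups the current user still lacks.
# missing_groups is an illustrative helper, not a GNS3 or Ubuntu command.

# Usage: missing_groups "<required groups>" "<current groups>"
# Both arguments are space-separated lists of group names.
missing_groups() {
    local required="$1"
    local current=" $2 "          # pad so every name is space-delimited
    local group
    for group in $required; do
        case "$current" in
            *" $group "*) ;;      # exact name present, nothing to report
            *) echo "$group" ;;   # not a member yet
        esac
    done
}
```

A typical call would be `missing_groups "kvm libvirt ubridge" "$(id -nG)"`, which prints nothing once all three memberships are active in the current session.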

Step 6: Start the GNS3 server

Start the server in the foreground first to confirm it binds cleanly to port 3080:

gns3server --host 0.0.0.0

The startup log prints the version, Python runtime, listen address, and the initial controller bootstrap:

INFO run.py:218 GNS3 server version 2.2.58.1

INFO run.py:220 Copyright (c) 2007-2026 GNS3 Technologies Inc.

INFO run.py:242 Running with Python 3.12.3 and has PID 48692

INFO run.py:248 Using system certificate store for SSL connections

INFO web_server.py:349 Starting server on 0.0.0.0:3080

INFO __init__.py:70 Load controller configuration file /root/.config/GNS3/2.2/gns3_controller.conf

INFO __init__.py:329 Installing base configs in '/root/GNS3/configs'

Binding to 0.0.0.0 accepts connections from any interface, which is what you want for a remote GUI client. If you only intend to drive the server from localhost, drop the flag.

In a second terminal, hit the version endpoint:

curl -s http://localhost:3080/v2/version

A healthy server returns JSON:

{

"local": false,

"version": "2.2.58.1"

}

Stop the foreground process with Ctrl+C and continue to the systemd unit.
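For scripted health checks, the version string can be pulled out of that JSON response. This is a minimal sketch using sed so it works without extra packages; `gns3_version_from_json` is a hypothetical helper name, and the sed pattern assumes the response keeps the simple shape shown above.

```bash
#!/usr/bin/env bash
# Sketch: extract the "version" value from the /v2/version response.
# gns3_version_from_json is an illustrative helper that reads JSON on stdin.

gns3_version_from_json() {
    # Capture the quoted value that follows the "version" key.
    sed -n 's/.*"version"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p'
}
```

It would be used as `curl -s http://localhost:3080/v2/version | gns3_version_from_json`. If jq is installed, `jq -r .version` is the sturdier choice because it parses the JSON properly.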

Step 7: Run GNS3 server as a systemd service

Running gns3server in a terminal works for quick tests, but every reboot kills the lab. A systemd unit fixes that. Create the unit file:

sudo vi /etc/systemd/system/gns3server.service

Paste the following, substituting your own username for ubuntu:

[Unit]

Description=GNS3 server

After=network-online.target

Wants=network-online.target

[Service]

Type=simple

User=ubuntu

Group=ubuntu

ExecStart=/usr/bin/gns3server --host 0.0.0.0

Restart=on-failure

RestartSec=5

LimitNOFILE=16384

[Install]

WantedBy=multi-user.target

Reload systemd and start the unit:

sudo systemctl daemon-reload

sudo systemctl enable --now gns3server

Confirm the service is active and listening:

sudo systemctl status gns3server --no-pager

ss -tlnp | grep 3080

With the service active and a listener on 3080, the bundled Web-UI is reachable from any browser on the network.
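If you provision several lab hosts, the unit file from this step can be generated instead of pasted. The sketch below reproduces the same unit with the username filled in; `render_gns3_unit` is a hypothetical helper, and the second argument (group name, defaulting to the username) is an assumption that matches Ubuntu's usual per-user primary group.

```bash
#!/usr/bin/env bash
# Sketch: print the gns3server systemd unit for a given user.
# render_gns3_unit is an illustrative helper; it only writes to stdout.

# Usage: render_gns3_unit <user> [group]
render_gns3_unit() {
    local user="$1"
    local group="${2:-$1}"   # assumption: primary group shares the username
    cat <<EOF
[Unit]
Description=GNS3 server
After=network-online.target
Wants=network-online.target

[Service]
Type=simple
User=${user}
Group=${group}
ExecStart=/usr/bin/gns3server --host 0.0.0.0
Restart=on-failure
RestartSec=5
LimitNOFILE=16384

[Install]
WantedBy=multi-user.target
EOF
}
```

One way to install the result is `render_gns3_unit "$USER" | sudo tee /etc/systemd/system/gns3server.service >/dev/null`, followed by the daemon-reload and enable commands shown in this step.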

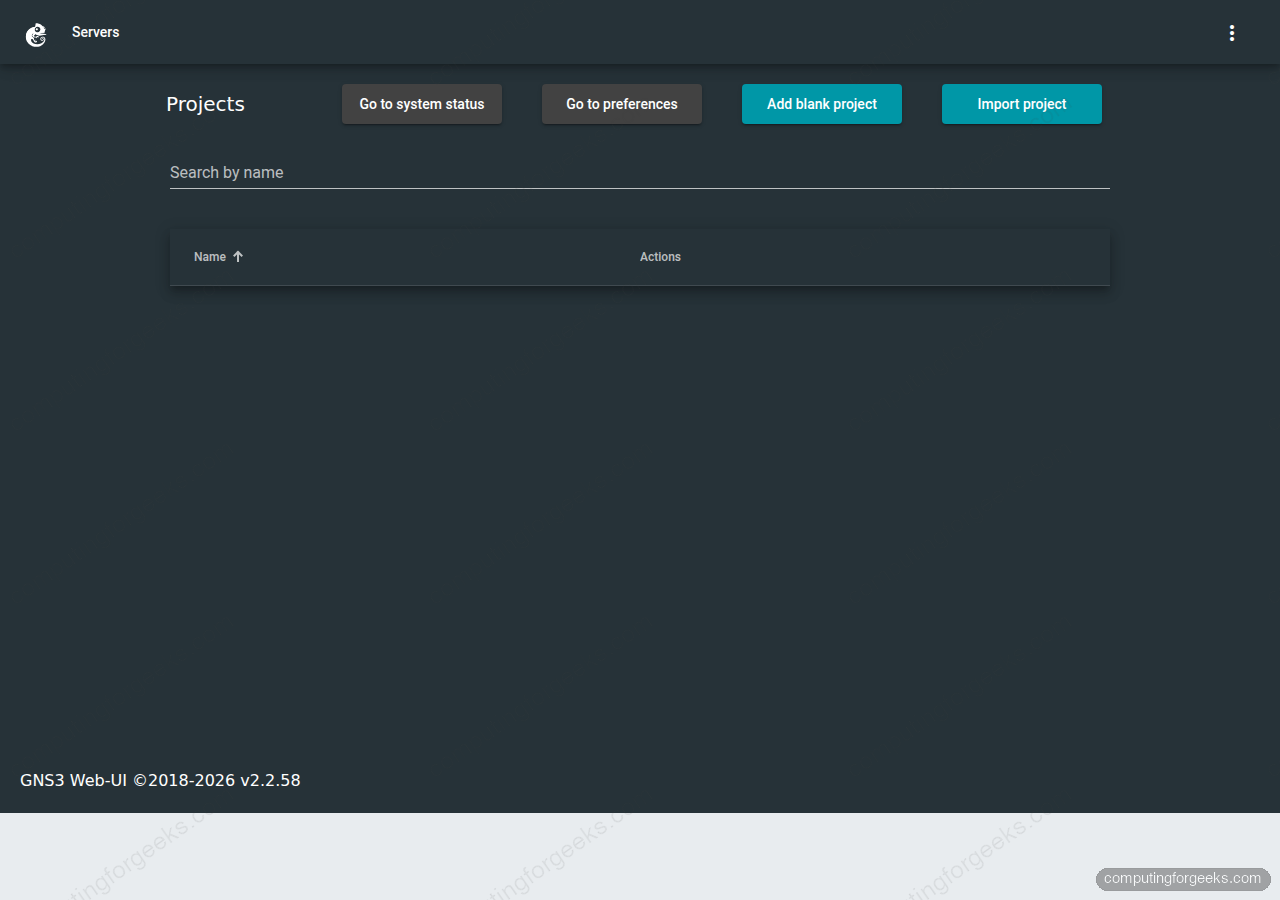

Step 8: Access the GNS3 Web-UI

From any machine on the same network, point a browser at the server’s IP on port 3080. The bundled client loads at /static/web-ui/bundled, and the server root redirects there automatically:

http://10.0.1.50:3080/

The Projects page appears first with an empty list, a search field, and buttons for system status, preferences, blank projects, and imports. The footer shows which Web-UI bundle shipped with this server version.

Click Add blank project, give it a name, and open it to reach the topology canvas. From there you can drag in node templates (VPCS, Ethernet switch, Cloud, or any uploaded appliance), draw links between them, and start the topology with the green play button.
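A blank project can also be created without the browser. The sketch below assumes the server's v2 REST API accepts a POST to /v2/projects with a JSON body containing a name, and that the server is reachable without authentication; `project_payload` and `create_project` are hypothetical helper names.

```bash
#!/usr/bin/env bash
# Sketch: create a blank GNS3 project over the REST API.
# Helper names are illustrative; the endpoint is assumed to be POST /v2/projects.

# Build the JSON request body for a project name.
project_payload() {
    # Names containing double quotes or backslashes would need JSON
    # escaping, which this sketch does not attempt.
    printf '{"name": "%s"}' "$1"
}

# Usage: create_project <server-ip-or-hostname> <project-name>
create_project() {
    local server="$1" name="$2"
    curl -s -X POST "http://${server}:3080/v2/projects" \
        -H 'Content-Type: application/json' \
        -d "$(project_payload "$name")"
}
```

For example, `create_project 10.0.1.50 ospf-lab` should make the new project appear on the Projects page after a refresh.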

Verifying from the terminal is a useful second check when the browser can’t reach the server:

curl -s http://10.0.1.50:3080/v2/version

The browser Web-UI works for most day-to-day topology work, but some operations (appliance uploads, complex labs with many nodes) remain faster in the Qt desktop client pointed at the same remote server.

Step 9: Connect the GNS3 Qt desktop client to the server

The Qt client running on your Ubuntu, macOS, or Windows laptop can drive the remote server instead of running its own local compute. This is the common pattern for team labs: one beefy Ubuntu VPS hosts the emulators, and every engineer runs the GUI locally.

On the laptop, install gns3-gui (same PPA on Ubuntu) and launch it. Under Edit → Preferences → Server → Main server, set:

- Host binding: the public or LAN IP of your Ubuntu server

- Port: 3080

- Protocol: HTTP (or HTTPS if you wired up certificates in /etc/gns3/gns3_server.conf)

- Uncheck Enable local server so all compute is remote

Click Apply. The GUI reconnects and the server version appears in the status bar.

Step 10: Firewall and security notes

Port 3080 on a publicly reachable Ubuntu server is a bad default because the 2.2.x server does not require authentication unless you enable it in gns3_server.conf, and traffic travels over plain HTTP. Two realistic options:

LAN-only: open port 3080 to the subnet that holds your engineers and nothing else. On Ubuntu, ufw handles this:

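sudo ufw allow OpenSSH

sudo ufw allow from 10.0.1.0/24 to any port 3080 proto tcp

sudo ufw enable

Substitute your own CIDR. The OpenSSH rule keeps you from locking yourself out when ufw is enabled over an SSH session. Verify with sudo ufw status numbered.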

Reverse proxy with auth: put Nginx in front of GNS3 with HTTP basic auth or a proper OIDC plugin. The Nginx block listens on 443, terminates TLS, challenges the user, then proxies to 127.0.0.1:3080. Keep GNS3 bound to localhost in that setup.
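The reverse-proxy option can be sketched as a generated Nginx server block. Everything site-specific here is an assumption: the certificate paths, the htpasswd location, and the helper name `render_gns3_nginx` are placeholders, and the Upgrade headers are included in case the client uses WebSockets through the proxy.

```bash
#!/usr/bin/env bash
# Sketch: print an Nginx server block that fronts GNS3 with TLS and basic auth.
# render_gns3_nginx and all file paths below are illustrative placeholders.

# Usage: render_gns3_nginx <server-name>
render_gns3_nginx() {
    local server_name="$1"
    cat <<EOF
server {
    listen 443 ssl;
    server_name ${server_name};

    # Hypothetical certificate paths; point these at your real files.
    ssl_certificate     /etc/ssl/certs/gns3.crt;
    ssl_certificate_key /etc/ssl/private/gns3.key;

    location / {
        auth_basic           "GNS3 lab";
        auth_basic_user_file /etc/nginx/gns3.htpasswd;

        proxy_pass http://127.0.0.1:3080;
        proxy_http_version 1.1;
        # Pass WebSocket upgrade requests through to the GNS3 server.
        proxy_set_header Upgrade \$http_upgrade;
        proxy_set_header Connection "upgrade";
        proxy_set_header Host \$host;
    }
}
EOF
}
```

The output would typically be written to a file under /etc/nginx/sites-available/ and checked with `sudo nginx -t` before reloading. In this setup the GNS3 unit should start the server with `--host 127.0.0.1` so that only Nginx can reach port 3080.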

Credentials for the basic-auth file belong in a password manager, not hand-typed into an SSH session. For team labs, a shared secrets vault like 1Password stores the GNS3 admin password, the router enable secret, and the VPN pre-shared keys without the copy-paste churn.

Troubleshooting

Cannot connect to compute ‘local’: Connect call failed 127.0.0.1:3080

This line appears in the startup log before the controller finishes bootstrap. It’s cosmetic on the first run because the controller tries to self-register before the HTTP server has fully bound. The subsequent INFO compute.py:369 Connecting to compute 'local' line should succeed a second later. If the error persists, something else is holding port 3080: check with ss -tlnp | grep 3080.

gns3server: command not found after apt install

The GNS3 PPA installs the binary under /usr/bin/gns3server, which is in every login shell’s PATH. If you used pip install gns3-server in the past, Python’s user site-packages bin might shadow the package binary. Run which gns3server and remove the stale pip install with pip uninstall gns3-server, then re-install from the PPA.

KVM acceleration not enabled in nested VMs

On a Proxmox or libvirt host, the guest VM needs CPU type host and nested virtualization enabled on the hypervisor. Verify inside the Ubuntu guest with kvm-ok from the cpu-checker package. If it reports KVM acceleration is missing, QEMU still runs but every emulated router slows to a crawl. For Proxmox specifically, set CPU → Type → host on the VM and reboot.
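On a Linux/KVM hypervisor, including Proxmox, the nested setting is exposed as a kernel module parameter, so it can be checked on the hypervisor itself before touching the guest. A minimal sketch, assuming the standard sysfs locations for kvm_intel and kvm_amd; `nested_enabled` is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# Sketch: check whether nested virtualization is enabled on a KVM hypervisor.
# Run on the hypervisor, not inside the guest. nested_enabled is illustrative.

# Usage: nested_enabled <path to a "nested" module parameter file>
nested_enabled() {
    local param_file="$1" value
    value="$(cat "$param_file" 2>/dev/null)" || return 1
    case "$value" in
        Y|y|1) return 0 ;;   # kernels report either Y or 1 when enabled
        *) return 1 ;;
    esac
}

# Report on whichever KVM module is loaded (Intel or AMD).
report_nested() {
    local f
    for f in /sys/module/kvm_intel/parameters/nested \
             /sys/module/kvm_amd/parameters/nested; do
        [ -e "$f" ] || continue
        if nested_enabled "$f"; then
            echo "$f: enabled"
        else
            echo "$f: disabled"
        fi
    done
}
```

Running `report_nested` prints nothing if neither KVM module is loaded, which is itself a sign that the host is not a KVM hypervisor.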

Routers and devices fail to start: ubridge permission error

Adding your user to the ubridge group isn’t always enough. The ubridge binary itself needs CAP_NET_ADMIN and CAP_NET_RAW to open raw packet sockets. The PPA package sets these in its postinst, but a pip/source install, or an upgrade that replaced the binary, leaves it without the caps and every device fails to boot. Check what’s currently set:

getcap /usr/bin/ubridge

If the output is empty, apply the capabilities manually and restart the server:

sudo setcap cap_net_admin,cap_net_raw=ep /usr/bin/ubridge

sudo systemctl restart gns3server

Re-run getcap to confirm the line reads /usr/bin/ubridge cap_net_admin,cap_net_raw=ep, then start a device from the Web-UI. Thanks to André in the comments for flagging this one.
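The capability check lends itself to a small guard that can run after every upgrade. This is a sketch only: `has_ubridge_caps` is a hypothetical helper that inspects the text printed by getcap, and it accepts both the newer output format shown above and the older `= cap_net_admin,cap_net_raw+ep` style. It checks that both capability names are present, not the exact flag set.

```bash
#!/usr/bin/env bash
# Sketch: decide from getcap output whether ubridge has both capabilities.
# has_ubridge_caps is an illustrative helper, not part of GNS3.

# Usage: has_ubridge_caps "<output of getcap /usr/bin/ubridge>"
has_ubridge_caps() {
    case "$1" in
        *cap_net_admin*cap_net_raw*|*cap_net_raw*cap_net_admin*) return 0 ;;
        *) return 1 ;;   # empty output or only one capability present
    esac
}
```

A guard built on it could read `has_ubridge_caps "$(getcap /usr/bin/ubridge)" || sudo setcap cap_net_admin,cap_net_raw=ep /usr/bin/ubridge`.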

Going further

The server is running, the Web-UI is reachable, and the backends are wired. Natural next steps depending on what you’re building:

- For bridge-networked labs that need layer-2 connectivity into your host LAN, set up a Linux bridge (see KVM bridged networking with Netplan) and point the GNS3 Cloud node at that bridge

- For packet-level traffic analysis on links between GNS3 nodes, right-click a link and capture directly into Wireshark: the Wireshark install guide covers the client side

- For mixed VM + GNS3 labs where KVM guests talk to emulated routers, the KVM hypervisor install on Ubuntu covers bridge and NAT networking that plugs into GNS3 Cloud nodes

- For AI-assisted lab automation (asking an LLM to generate IOS configs or parse show-command output), Ollama on Ubuntu runs local models next to the lab without shipping commands to a cloud API

If the lab is headed toward a CCNP or JNCIP study plan, budget disk for IOS-XRv (~2 GB), Junos vMX (~600 MB), and Arista vEOS (~500 MB) on top of the base GNS3 install. A 20 GB root volume fills up fast once you’ve pulled three or four appliance images.


did not work

Hi. What’s the issue? Any errors?

I am having issues with the network devices

I have installed vJunos Switch and vJunos Router, and neither stays up when I turn them on.

vJunos devices require significant memory to boot fully. Make sure you have allocated at least 4 GB RAM per vJunos instance in the GNS3 template settings. Also check that KVM/QEMU is properly configured (not running in TCG mode) by verifying kvm-ok output. If the device powers on but drops after a few seconds, the console log (right-click > Console) will show the specific failure, usually a memory or CPU check error.

Thank you. It works fine for me.

I have to set some permissions:

setcap cap_net_admin,cap_net_raw=ep /usr/bin/ubridge

Then the router and other devices start to boot.

Good catch. The gns3-server PPA package normally sets those caps via its postinst, so this fix is usually needed after a pip/source install or an upgrade that replaced the binary without re-applying caps. Verify with getcap /usr/bin/ubridge.