Anthropic shipped Claude Opus 4.7 on April 16, 2026, and it lands where coding work is getting done: Claude Code, Claude apps, GitHub Copilot, Amazon Bedrock, Google Vertex AI, and Microsoft Foundry. The headline is the benchmark jump. Opus 4.7 posts 87.6% on SWE-bench Verified, 64.3% on SWE-bench Pro, 70% on the Cursor coding benchmark, and 90.9% on Harvey’s BigLaw Bench at high effort, pulling ahead of GPT-5.4 and Gemini 3.1 Pro on agentic software engineering and knowledge work.

The model keeps the same pricing as Opus 4.6 ($5 per million input tokens, $25 per million output) and the full 1M token context at standard rates. This guide covers what’s new in Claude Opus 4.7, the benchmark numbers you should actually care about, how to switch to claude-opus-4-7 inside Claude Code, the new xhigh effort level, the /ultrareview command, and when Opus 4.7 is worth its price tag versus Sonnet or Haiku.

Published April 16, 2026 on release day. Tested with Claude Code 2.1.x on macOS Sequoia with claude-opus-4-7 at xhigh and max effort levels.

What’s new in Claude Opus 4.7

Opus 4.7 is a coding and agent-reasoning upgrade first, a general knowledge bump second. Anthropic’s own framing is that the model handles long-horizon, multi-step work with more rigor, pays sharper attention to instructions, and actively verifies its own outputs before reporting back. In practice that means fewer “I’ve implemented the change” replies that turn out to be wrong at review time.

- SWE-bench Verified: 87.6% (Opus 4.6: 80.8%). Real issue resolution on real open-source repos.

- SWE-bench Pro: 64.3% (Opus 4.6: 53.4%). Harder, less contaminated subset.

- Cursor coding benchmark: 70% (Opus 4.6: 58%). Autonomous multi-file edits inside an IDE.

- Rakuten-SWE-Bench: 3x more production tasks resolved versus Opus 4.6, with double-digit gains in code and test quality.

- BigLaw Bench (Harvey): 90.9% at high effort on legal-reasoning workflows.

- OfficeQA Pro (Databricks): 21% fewer errors than Opus 4.6 on enterprise data questions.

- GDPval-AA: state-of-the-art on Anthropic’s benchmark for economically valuable knowledge work.

- New `xhigh` effort level between `high` and `max`. Claude Code raised its default to `xhigh` for all plans on release day.

- Vision: 2,576 px on the long edge (~3.75 MP). Roughly 3x the pixels of previous Claude vision models, with an optional downsampling mode for workloads that don’t need the extra detail.

- New tokenizer. The same text maps to up to 1.35x more tokens than Opus 4.6, so rate limits were adjusted upward to compensate.

- `/ultrareview` command in Claude Code for parallel multi-agent PR reviews (3 free trials on Pro and Max).

- Auto mode on Opus 4.7 rolled out to Max, Teams, and Enterprise subscribers.

- Task budgets (public beta) cap how many tokens a long run can spend before checking in.

- File system memory carries notes across multi-session work, reducing the context you have to paste into each new session.

Opus 4.7 also takes instructions more literally than 4.6. If your system prompt or CLAUDE.md was tuned for Opus 4.6’s looser behavior, expect to retune. This is the most common complaint on X this week, but it is a deliberate change toward stricter adherence, not a regression.

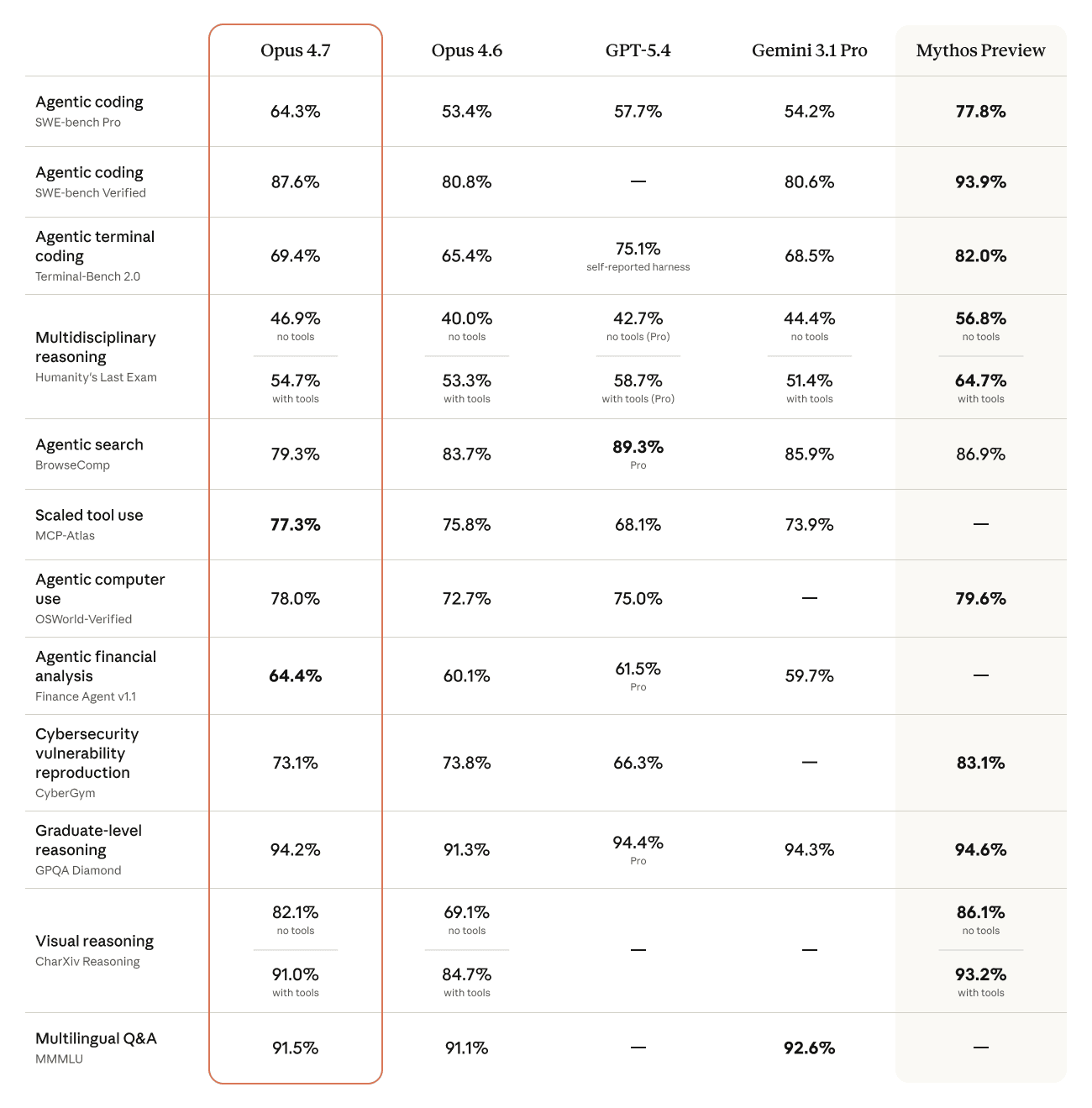

Opus 4.7 benchmarks vs Opus 4.6, GPT-5.4, and Gemini 3.1 Pro

Anthropic’s own comparison chart shows Opus 4.7 leading on agentic coding (SWE-bench Pro and Verified), scaled tool use (MCP-Atlas), agentic computer use (OSWorld-Verified), and agentic financial analysis (Finance Agent v1.1). GPT-5.4 still wins on BrowseComp (agentic search), and Gemini 3.1 Pro edges out Opus 4.7 on multilingual Q&A.

Here’s the same data as a table you can cite without the image:

| Benchmark | Opus 4.7 | Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |
|---|---|---|---|---|
| SWE-bench Verified (coding) | 87.6% | 80.8% | n/a | 80.6% |
| SWE-bench Pro (coding) | 64.3% | 53.4% | 57.7% | 54.2% |
| Terminal-Bench 2.0 | 69.4% | 65.4% | 75.1% | 68.5% |
| Humanity’s Last Exam (with tools) | 54.7% | 53.3% | 58.7% | 51.4% |
| MCP-Atlas (scaled tool use) | 77.3% | 75.8% | 68.1% | 73.9% |
| OSWorld-Verified (computer use) | 78.0% | 72.7% | 75.0% | n/a |
| Finance Agent v1.1 | 64.4% | 60.1% | 61.5% | 59.7% |
| GPQA Diamond (graduate reasoning) | 94.2% | 91.3% | 94.4% | 94.3% |
| CharXiv Reasoning (vision, with tools) | 91.0% | 84.7% | n/a | n/a |

The agentic coding numbers are where daily workflow improvements actually show up. A +6.8-point jump on SWE-bench Verified and +12 points on Cursor means fewer wrong-turn commits, fewer retries, and fewer “undo that” moments across a long session. On production-grade tasks (Rakuten-SWE) Anthropic reports triple the resolution rate versus 4.6, which is the number experienced Claude Code users are most likely to feel day-to-day.
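To make those deltas easy to recheck, here is a minimal Python sketch that recomputes the point gains from the figures quoted in this article. The `SCORES` dictionary and both helper names are illustrative, not anything Anthropic publishes; the failure-rate reduction is simply one extra way to read the same numbers.

```python
# Scores copied from this article: (Opus 4.7, Opus 4.6), in percent.
SCORES = {
    "SWE-bench Verified": (87.6, 80.8),
    "SWE-bench Pro": (64.3, 53.4),
    "Cursor coding benchmark": (70.0, 58.0),
    "OSWorld-Verified": (78.0, 72.7),
}


def point_gain(benchmark: str) -> float:
    """Absolute gain in percentage points, rounded to one decimal."""
    new, old = SCORES[benchmark]
    return round(new - old, 1)


def failure_rate_reduction(benchmark: str) -> float:
    """Relative drop in the failure rate (100 - score), in percent."""
    new, old = SCORES[benchmark]
    return round((new - old) / (100.0 - old) * 100.0, 1)


if __name__ == "__main__":
    for name in SCORES:
        print(f"{name}: +{point_gain(name)} pts, "
              f"{failure_rate_reduction(name)}% lower failure rate")
```

Read this way, the 12-point Cursor gain corresponds to roughly a 29% drop in failed tasks, which is closer to what a long session feels like than the raw score.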

Pricing, context window, and availability

Pricing is unchanged from Opus 4.6. That’s unusual for a frontier upgrade and the single biggest reason to adopt Opus 4.7 immediately:

| Item | Claude Opus 4.7 |
|---|---|
| API model ID | claude-opus-4-7 |
| Input pricing | $5 / million tokens |
| Output pricing | $25 / million tokens |
| Context window | 1,000,000 tokens (standard pricing across the full window) |
| Vision max resolution | 2,576 px on long edge (~3.75 MP) |
| Effort levels | low, medium, high, xhigh, max |
| Platforms | Claude.ai, Anthropic API, AWS Bedrock, Google Vertex AI, Microsoft Foundry, GitHub Copilot |

Because the new tokenizer can produce up to 1.35x more tokens for the same text, effective cost per real-world task can creep up even at identical per-token rates. Anthropic preemptively raised subscription rate limits and reset the 5-hour and weekly windows on launch day to compensate. If you’re still hitting the old limits, upgrade to the latest Claude Code; subscription plans read the new limits from the client.
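A quick worked example of that cost creep, as a hedged Python sketch. The prices come from the table above and 1.35 is the article's stated upper bound, not a measured average. Applying the same factor to both input and output tokens is an assumption, and the function name is illustrative.

```python
INPUT_USD_PER_MTOK = 5.0        # from the pricing table
OUTPUT_USD_PER_MTOK = 25.0      # from the pricing table
MAX_TOKENIZER_INFLATION = 1.35  # "up to 1.35x": treat as a worst case


def task_cost_usd(input_tokens: int, output_tokens: int,
                  inflation: float = 1.0) -> float:
    """Cost of one task in USD; `inflation` scales both token counts.

    Assumption: the tokenizer change affects input and output text equally.
    """
    scaled_in = input_tokens * inflation
    scaled_out = output_tokens * inflation
    cost = (scaled_in * INPUT_USD_PER_MTOK
            + scaled_out * OUTPUT_USD_PER_MTOK) / 1_000_000
    return round(cost, 4)


if __name__ == "__main__":
    # Token counts as measured under the Opus 4.6 tokenizer (made-up sizes).
    baseline = task_cost_usd(200_000, 20_000)
    worst_case = task_cost_usd(200_000, 20_000, MAX_TOKENIZER_INFLATION)
    print(f"baseline ${baseline}, worst case ${worst_case}")
```

For a task that used 200K input and 20K output tokens on Opus 4.6, the same text costs $1.50 at unchanged token counts and at most about $2.03 if the full 1.35x inflation applies.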

Upgrade Claude Code and switch to Opus 4.7

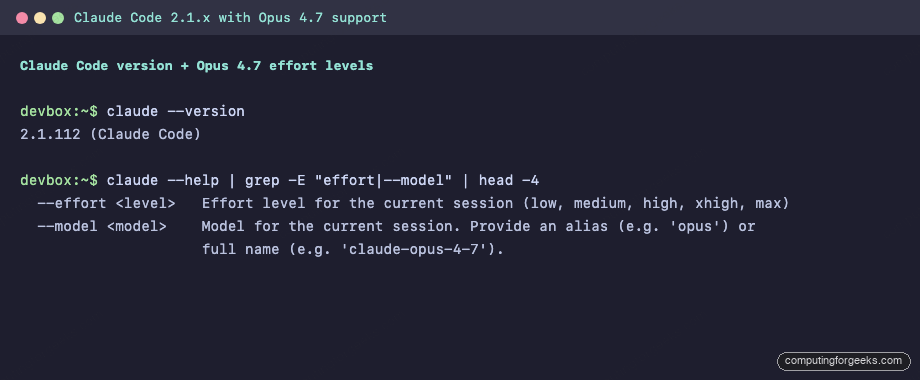

Opus 4.7 needs Claude Code on the 2.1.x line. The first release that added xhigh and the claude-opus-4-7 model was followed by hotfixes within hours of launch, so pin nothing: just run the upgrade.

claude update

claude --versionThe output should be on the 2.1.x line or newer. Anything older will not see Opus 4.7 in the model picker:

Confirm the new effort levels are exposed. If xhigh appears in the help output, Opus 4.7 is wired up:

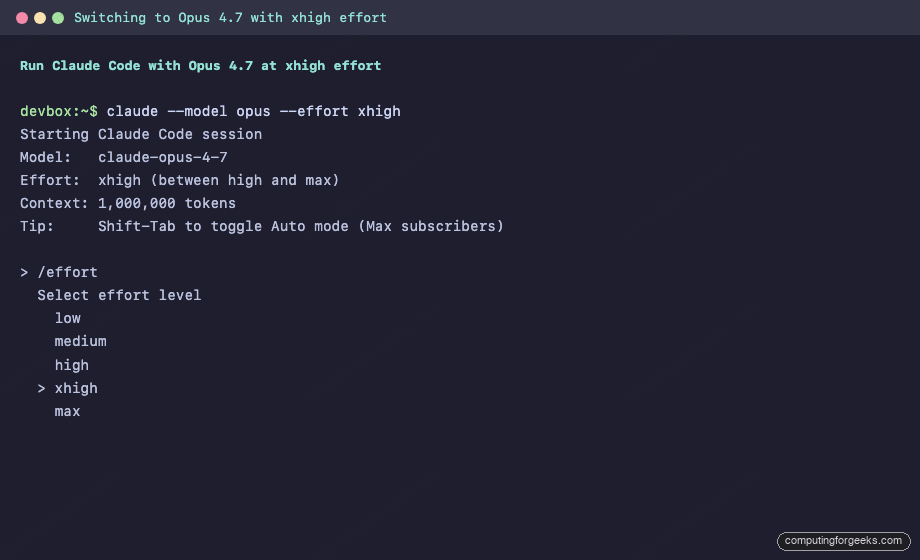

claude --help | grep -E "effort|--model"To start a session on Opus 4.7 from the command line, pass both flags:

claude --model claude-opus-4-7 --effort xhighInside an existing session, use the slash commands instead. Both are sticky across restarts:

/model opus

/effort xhighThe session header redraws with the active model, the chosen effort level, and the context window Opus 4.7 is running under. It also prints a hint for Shift-Tab, which toggles Auto mode for Max subscribers:

Calling /effort with no argument opens an interactive slider you can navigate with arrow keys. For API users, the model alias opus routes to claude-opus-4-7; pass thinking: { type: "enabled", budget_tokens: <n> } or leave it on adaptive mode depending on your SDK.
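If you launch Claude Code from scripts, it can help to validate the effort level before shelling out. The sketch below only builds the argument list for the `claude --model ... --effort ...` invocation used in this section and does not run it. The helper name `claude_command` is hypothetical; the flags and effort names are the ones quoted in this article.

```python
import shlex

# Effort levels as listed in this article.
EFFORT_LEVELS = ("low", "medium", "high", "xhigh", "max")


def claude_command(model: str = "claude-opus-4-7",
                   effort: str = "xhigh") -> list[str]:
    """Build the argv for a Claude Code session; raise on unknown effort."""
    if effort not in EFFORT_LEVELS:
        raise ValueError(
            f"unknown effort {effort!r}; expected one of {EFFORT_LEVELS}"
        )
    return ["claude", "--model", model, "--effort", effort]


if __name__ == "__main__":
    # Print the command instead of executing it.
    print(shlex.join(claude_command()))
```

Returning a list rather than a string keeps the result safe to hand to `subprocess.run` without extra quoting.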

The new xhigh effort level

Opus 4.6 had four public effort levels: low, medium, high, and max. Opus 4.7 slots xhigh between high and max. The intent is simple: max was often overkill (expensive, slow) for tasks that still needed more thinking than high provided. xhigh is that middle gear.

On launch day Anthropic raised Claude Code’s default effort to xhigh for every plan, so if you ran claude update you’re already on the new default whether you set /effort yourself or not. To go back to the previous behavior, set /effort high explicitly; it sticks across sessions.

| Effort | Use for |
|---|---|
| low | Tight follow-ups, chat, one-line edits |
| medium | Routine refactors, single-file changes, test generation |
| high | Multi-file edits, debugging, writing a non-trivial function |
| xhigh | Design decisions, tricky refactors across modules, gnarly bugs |
| max | The hardest problem of the week. Reserved because it’s slow and expensive |

Unlike older “thinking budgets” which let you cap token spend, Opus 4.7 uses adaptive thinking. The model decides how many reasoning tokens to spend, and the effort knob just biases that decision. low and medium stay fast and cheap; xhigh and max let the model go deeper when the task is actually hard.
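The effort table can be encoded as a small lookup if you drive Claude Code from tooling. This is a sketch only: the task-kind keys are made-up labels for the rows in the table, and falling back to xhigh simply mirrors the Claude Code default described above.

```python
# Hypothetical task labels mapped to the effort levels from the table.
EFFORT_BY_TASK = {
    "chat": "low",
    "one_line_edit": "low",
    "routine_refactor": "medium",
    "test_generation": "medium",
    "multi_file_edit": "high",
    "debugging": "high",
    "design_decision": "xhigh",
    "cross_module_refactor": "xhigh",
    "hardest_problem": "max",
}


def pick_effort(task_kind: str, default: str = "xhigh") -> str:
    """Return the suggested effort level, or the default for unknown kinds."""
    return EFFORT_BY_TASK.get(task_kind, default)


if __name__ == "__main__":
    for kind in ("chat", "debugging", "something_else"):
        print(kind, "->", pick_effort(kind))
```

Because thinking is adaptive, the value returned here only biases how much reasoning the model spends; it is not a hard token cap.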

Auto mode: stop babysitting Claude Code

Auto mode routes permission prompts to a classifier model that decides whether a proposed command is safe to auto-approve. Safe commands run without asking; risky ones still hit the prompt. It is the middle ground between cautious approve-every-step mode and --dangerously-skip-permissions.

Availability at launch: Max, Teams, and Enterprise subscribers on Opus 4.7. Pro users keep standard permission prompts. Enable it with Shift-Tab inside the CLI or from the dropdown in Claude Desktop and the VS Code extension. The big behavioral change: you can start a long Opus 4.7 task, switch to another session, and the first one keeps cooking without paging you. If you already use our Claude Code Routines for scheduled work, Auto mode complements them: Routines run without a session open; Auto mode unblocks the sessions you do run interactively.

If you prefer tuning permissions by hand, the new /less-permission-prompts skill scans your session history, identifies common read-only bash and MCP calls, and proposes additions to .claude/settings.json. It works without Auto mode and is the recommended path for Pro users or for anyone who wants deterministic rules over a classifier.
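For a feel of what accepting such a proposal amounts to, here is a hedged Python sketch that merges proposed allow rules into a settings dictionary without duplicating entries. The `permissions.allow` layout and rule strings like `Bash(git diff:*)` are assumptions based on Claude Code's settings format; this article does not show the skill's actual output, and `merge_allow_rules` is a hypothetical helper.

```python
import json


def merge_allow_rules(settings: dict, proposed: list[str]) -> dict:
    """Return a copy of `settings` with `proposed` rules added to
    permissions.allow, keeping existing order and skipping duplicates."""
    merged = json.loads(json.dumps(settings))  # deep copy of JSON-shaped data
    allow = merged.setdefault("permissions", {}).setdefault("allow", [])
    for rule in proposed:
        if rule not in allow:
            allow.append(rule)
    return merged


if __name__ == "__main__":
    # Assumed shape of .claude/settings.json; the rule strings are examples.
    current = {"permissions": {"allow": ["Bash(git status)"]}}
    proposed = ["Bash(git status)", "Bash(git diff:*)"]
    print(json.dumps(merge_allow_rules(current, proposed), indent=2))
```

Working on a copy means you can diff the result against the file on disk before writing anything.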

The /ultrareview command

Opus 4.7 shipped alongside /ultrareview, a cloud-run code review that fans out across multiple parallel agents and reports back a consolidated critique. It replaces the ad-hoc “review my branch” prompt that everyone has been copy-pasting for a year.

    /ultrareview
    /ultrareview 4212

Without arguments it reviews the current branch against the base. With a PR number it pulls that PR from GitHub and reviews it end to end. Claude Pro and Max subscribers get three free /ultrareview runs; beyond that it is a paid add-on. The review runs in Anthropic’s cloud so your terminal stays free for other work.

Other Claude Code 2.1.x changes worth knowing

The same Claude Code line that unlocked Opus 4.7 also brought a handful of quality-of-life changes that deserve to not be buried in the release notes:

- Recaps. Short summaries of what the agent did and what’s next, shown when you return to a long session. Specifically built for the kind of multi-hour runs Opus 4.7 is good at.

- Focus mode. Toggled with `/focus`. Hides intermediate tool calls and only shows final results. Only useful if you trust the model, which is an easier call with 4.7 than it was with 4.6.

- The “avoid creating new files” rule became a preference. Opus 4.7 is now allowed to create new files when the task justifies it, instead of always trying to jam everything into existing ones.

- Stricter skill invocation. Skills only activate on exact listed names or an explicit user-typed `/name`, which prevents accidental auto-activation from vague prompt wording.

- Auto theme. `/theme` has a new “match terminal” option that follows your terminal’s light/dark mode.

- Read-only bash with globs no longer prompts. `ls *.ts` and friends, plus `cd <project> && ...`, stop asking for permission.

- Task budgets (public beta). Guide how many tokens Opus 4.7 is allowed to spend on a long run before stopping to check in.

- File system memory. Better retention across multi-session work, the kind of thing that matters when an Opus 4.7 agent runs for hours.

If you want the full picture of what changed across this release train, the GitHub releases page is the source of truth.

Opus 4.7 in Claude apps, Copilot, and cloud providers

Opus 4.7 is not a Claude Code exclusive. The model ID claude-opus-4-7 is live on:

- Claude.ai web app and Claude Desktop. The model picker now shows Opus 4.7 as the default for Pro and Max users. On Claude Desktop (Mac and Windows), the multi-session sidebar redesign from April 14 pairs naturally with Opus 4.7: one session can run a long Opus 4.7 refactor while you chat with Haiku in a side pane.

- GitHub Copilot. Opus 4.7 rolled out as a selectable model in Copilot Chat and inline agentic workflows on launch day.

- Amazon Bedrock. Model ID `anthropic.claude-opus-4-7-v1:0`, available in multiple regions.

- Google Vertex AI. Model ID `claude-opus-4-7@20260416` on the Anthropic partner publisher.

- Microsoft Foundry. Available via the Azure AI Foundry catalog.

Base per-token pricing is consistent across the direct API and managed cloud providers. Cloud providers may add a margin on top for enterprise guarantees (region pinning, cross-region failover, contractual SLAs), so the direct API remains the cheapest way to call Opus 4.7.
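Since the model ID differs per platform, a small lookup avoids hard-coding the wrong string. This sketch covers only the three IDs quoted in this article; the platform keys and the function name are illustrative, and Microsoft Foundry and GitHub Copilot are left out because no ID is given for them here.

```python
# Model IDs exactly as quoted in this article; keys are hypothetical labels.
OPUS_4_7_MODEL_IDS = {
    "anthropic": "claude-opus-4-7",
    "bedrock": "anthropic.claude-opus-4-7-v1:0",
    "vertex": "claude-opus-4-7@20260416",
}


def model_id_for(platform: str) -> str:
    """Look up the Opus 4.7 model ID for a platform label."""
    try:
        return OPUS_4_7_MODEL_IDS[platform.lower()]
    except KeyError:
        known = ", ".join(sorted(OPUS_4_7_MODEL_IDS))
        raise KeyError(
            f"no model ID recorded for {platform!r}; known: {known}"
        ) from None


if __name__ == "__main__":
    print(model_id_for("Bedrock"))
```

Check each provider's own model catalog before relying on these strings in production, since regional or versioned variants may exist.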

Safety, migration, and the Cyber Verification Program

Anthropic positions Opus 4.7 as similar in overall safety profile to Opus 4.6: low rates of deception and sycophancy, and better honesty and resistance to prompt-injection attacks, with one weak spot: it is slightly more prone to over-detailed harm-reduction answers about controlled substances. The unreleased Mythos Preview remains Anthropic’s best-aligned model, but Opus 4.7 is the frontier model you can actually run in production.

Cyber capability was the single biggest deliberate tradeoff in this release. Opus 4.7 auto-detects and blocks high-risk cyber requests, and Anthropic kept its offensive-security capability below what Mythos Preview can do. To keep legitimate security research unblocked, Anthropic launched a Cyber Verification Program that vets individual researchers and companies and lifts the extra guardrails for them. If you run pen-tests or red-team exercises with Claude and you’re hitting refusals you didn’t use to hit, the verification program is the intended path, not prompt gymnastics.

For migration, Anthropic published a short guide covering the instruction-literalness change and the tokenizer bump. The two things that actually trip up existing systems are: (1) prompts that relied on Opus 4.6 inferring intent from loose phrasing now need explicit instructions, and (2) output budgets set in tokens need to be recalculated because the same text generates more tokens. The Claude platform docs have the full migration details and the current rate-limit table; for the full release announcement and benchmark chart, Anthropic’s Introducing Claude Opus 4.7 post is the source of record.
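For point (2), a conservative way to recalculate an existing output budget is to scale it by the tokenizer's worst-case factor and round up. This is a rough sketch, not Anthropic's migration procedure: 1.35 is the "up to" figure quoted in this article, and `rescale_token_budget` is a hypothetical helper.

```python
import math


def rescale_token_budget(old_budget: int, inflation: float = 1.35) -> int:
    """Scale a token budget tuned for Opus 4.6 so the same amount of text
    still fits under the new tokenizer (worst case), rounding up."""
    if old_budget < 0:
        raise ValueError("token budget must be non-negative")
    return math.ceil(old_budget * inflation)


if __name__ == "__main__":
    for budget in (4096, 8192):
        print(budget, "->", rescale_token_budget(budget))
```

A 4,096-token cap becomes 5,530 under this rule. If you measure your own prompts and see a lower ratio, pass that value as `inflation` instead.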

When to use Opus 4.7 vs Sonnet 4.6 vs Haiku 4.5

Opus is not always the right answer. Pricing for the current Anthropic lineup, as of this writing:

| Model | Input $/MTok | Output $/MTok | Context | Best for |

|---|---|---|---|---|

| Opus 4.7 | $5 | $25 | 1M | Long agentic runs, hard refactors, design decisions, deep code review |

| Sonnet 4.6 | $3 | $15 | 1M | Most daily coding, PR drafts, routine refactors, documentation |

| Haiku 4.5 | $1 | $5 | 200K | Chat, classification, fast one-liners, high-volume background jobs |

Rough rule of thumb that has held up in our own use: if a task takes a human engineer under 10 minutes of real thought, Haiku 4.5 or Sonnet 4.6 does it faster and cheaper. If it takes 30+ minutes of sustained reasoning, Opus 4.7 with xhigh pays for itself in retries avoided. For teams running parallel Claude Code sessions under Auto mode, the math tips further in Opus 4.7’s favor because the human bottleneck is “pick the winner,” not “babysit each run.”
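That rule of thumb can be written down as a tiny router, sketched below in Python. The thresholds and prices are the ones in this article; the 10 to 30 minute middle band is not covered by the rule, so returning both Sonnet 4.6 and Opus 4.7 there is an assumption. The function names are illustrative.

```python
# (input, output) USD per million tokens, from the table above.
PRICES_USD_PER_MTOK = {
    "Opus 4.7": (5.0, 25.0),
    "Sonnet 4.6": (3.0, 15.0),
    "Haiku 4.5": (1.0, 5.0),
}


def candidate_models(minutes_of_thought: float) -> list[str]:
    """Models worth trying, given how long a human would need to think."""
    if minutes_of_thought < 10:
        return ["Haiku 4.5", "Sonnet 4.6"]
    if minutes_of_thought >= 30:
        return ["Opus 4.7"]
    # Assumption: the article gives no rule for 10 to 30 minutes.
    return ["Sonnet 4.6", "Opus 4.7"]


def run_cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost of a single run at the listed per-token prices."""
    in_price, out_price = PRICES_USD_PER_MTOK[model]
    cost = (input_tokens * in_price + output_tokens * out_price) / 1_000_000
    return round(cost, 4)


if __name__ == "__main__":
    for model in PRICES_USD_PER_MTOK:
        print(model, run_cost_usd(model, 100_000, 10_000))
```

At 100K input and 10K output tokens, a run costs $0.75 on Opus 4.7 versus $0.45 on Sonnet 4.6, so a single avoided retry on a hard task roughly covers the gap.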

If you’re still picking between Claude Code, Cursor, OpenCode, or Windsurf as a daily driver, see our OpenCode vs Claude Code vs Cursor comparison and the Cursor vs Windsurf vs Kiro breakdown. For getting real infra work out of Claude Code specifically, the Claude Code for DevOps engineers guide and the Claude Code cheat sheet both assume you’re on the current release train. The .claude directory conventions that Opus 4.7 reads on startup are covered in the .claude directory guide, and if you want Opus 4.7 fired from GitHub webhooks, the GitHub Actions integration walkthrough still applies.

FAQ

What is the API model ID for Claude Opus 4.7?

The Anthropic API model ID is claude-opus-4-7. On Claude Code you can also use the alias opus. On Amazon Bedrock the ID is anthropic.claude-opus-4-7-v1:0. On Google Vertex AI it’s claude-opus-4-7@20260416.

Is Claude Opus 4.7 more expensive than Opus 4.6?

Per-token pricing is identical: $5 per million input tokens and $25 per million output tokens. Real-world cost per task can be slightly higher because Opus 4.7’s new tokenizer produces up to 1.35x more tokens for the same text, and because xhigh and max effort levels spend more reasoning tokens. Anthropic raised subscription rate limits on launch day to offset this.

How do I switch to Opus 4.7 in Claude Code?

Run claude update first, then inside the CLI type /model opus and optionally /effort xhigh. For a one-shot launch, run claude --model claude-opus-4-7 --effort xhigh. Both the model and effort settings are sticky, so subsequent sessions keep the same configuration.

What is the xhigh effort level in Claude Opus 4.7?

xhigh is a new adaptive thinking level in Opus 4.7 that sits between high and max. It instructs the model to spend more reasoning tokens than high without reaching for the expensive ceiling of max. It’s the recommended default for multi-file refactors, gnarly debugging, and design decisions.

Does Opus 4.7 support the 1M token context window?

Yes. Opus 4.7 ships with the full 1,000,000 token context window at standard pricing. A 900K-token request is billed at the same per-token rate as a 9K-token request, which was not always the case for frontier models on other providers.

Is Auto mode available to all Claude Code users?

At launch, Auto mode on Opus 4.7 is rolling out to Max, Teams, and Enterprise subscribers. Pro users keep standard permission prompts and can use the new /less-permission-prompts skill to reduce prompt noise without a classifier. Toggle Auto mode with Shift-Tab in the CLI or via the dropdown in Claude Desktop and the VS Code extension.

Does Opus 4.7 replace Opus 4.6 automatically?

No. Opus 4.6 stays available under its own model ID so existing prompts and applications continue to work unchanged. Claude.ai, Claude Desktop, and the Claude Code model picker now default to Opus 4.7, but API callers must explicitly switch to claude-opus-4-7 to use the new model.

Should you switch today?

Yes, for agentic coding work. The SWE-bench gains are real, the price didn’t move, and the rate-limit bump cancels most of the tokenizer’s overhead. The one caveat is stricter instruction-following. Prompts and CLAUDE.md files tuned for Opus 4.6’s more forgiving behavior may need a retune, especially if you relied on the old model inferring intent from vague phrasing. Budget an afternoon for that, then keep xhigh as your default effort and let Opus 4.7 chew through the backlog Sonnet could never close out.