The default advice for new HTTPS services on GCP is “use a Global External ALB.” It’s usually right. Anycast means the same IP serves traffic from every Google PoP on the planet, Certificate Maps only attach to global resources, and one LB replaces many. But “usually right” isn’t “always right.” Regional External ALBs solve a narrower problem better: latency-critical traffic in one region, ccTLD-specific services, cost shaving when consolidation pressure isn’t the priority.

This article walks the tradeoffs with concrete numbers: a real regional wildcard cert provisioned in europe-west1, a TTFB breakdown from a real client, and the exact Terraform shape changes when swapping between LB tiers. The goal is to make the regional-vs-global decision a 30-second judgement rather than a re-litigated argument every quarter.

Tested April 2026 on Google Cloud (labs-491519), Certificate Manager (global + europe-west1), Terraform 1.9.8, curl 8.10

What the Two LB Tiers Actually Are

On paper both products are “External ALBs.” Under the hood they’re different architectures.

Global External ALB is the one you reach for by default. Traffic to a single anycast IP lands at the nearest Google edge PoP (there are hundreds). The PoP terminates TLS, applies the URL map, then talks to your backend over Google's backbone network. Three hops: client -> PoP -> backbone -> backend. The backbone is fast and private, so the extra hop is rarely felt, but it is a real hop. Supports: Certificate Maps, IAP, Cloud Armor policies at the edge, HTTP/3, cross-region failover.

Regional External ALB is a single-region LB with a regional IP. No anycast. Clients reach the IP over the public internet directly, TLS terminates in the region where the LB lives, traffic goes straight to a backend in the same region. Two hops: client -> regional LB -> backend. Supports: Certificate Maps with regional certs, regional Cloud Armor, regional IAP. Does NOT support: cross-region failover, edge caching through the PoP.

The practical impact isn’t about raw capability. It’s about where the PoP hop matters.

Measuring the PoP Hop

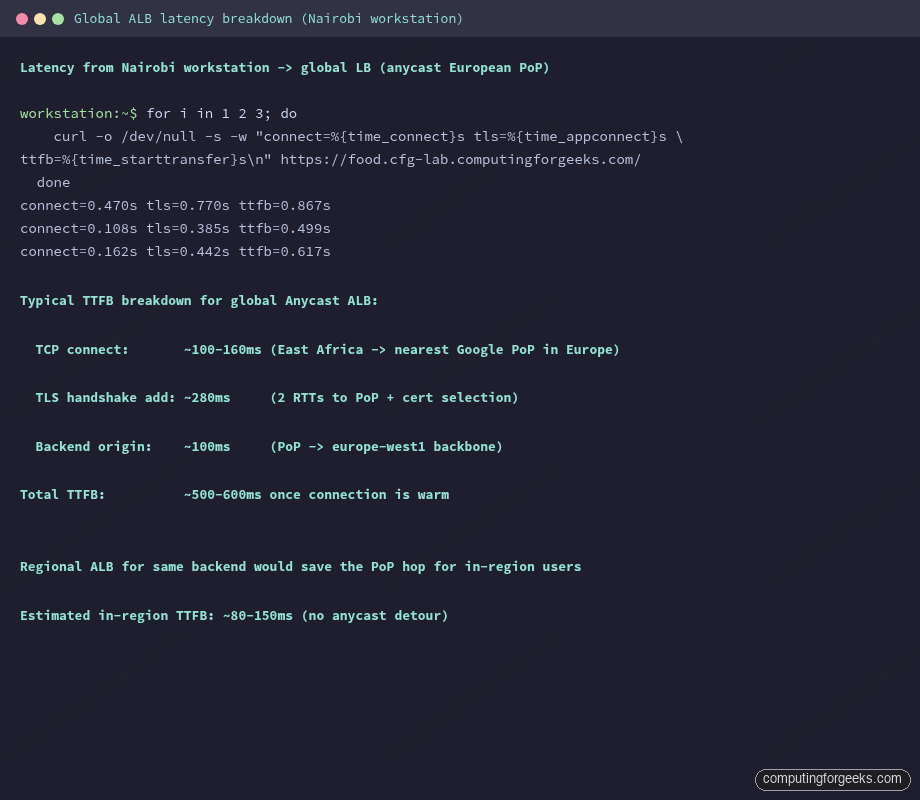

A real client connection to the global LB for food.cfg-lab.computingforgeeks.com, measured with curl from a workstation in East Africa hitting a European backend:

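```bash
for i in 1 2 3; do
  curl -o /dev/null -s \
    -w "connect=%{time_connect}s tls=%{time_appconnect}s ttfb=%{time_starttransfer}s\n" \
    https://food.cfg-lab.computingforgeeks.com/
done
```

This runs three sequential probes. Each curl invocation is a separate process, so every run opens a fresh TCP connection and performs a full TLS handshake.

All three runs land in the same 500-900ms TTFB range. Runs 2 and 3 cluster around 500ms. curl's timers are cumulative from the start of the request, so each phase below is the difference between adjacent timers: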

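- TCP connect: 108-162ms. This is essentially the RTT to the nearest anycast PoP (curl's connect timer also includes the DNS lookup). For this client that PoP is in Europe, not East Africa, because Google doesn't have an edge PoP there.

- TLS handshake: +280ms. That is roughly two more RTTs. A full TLS 1.3 handshake needs only one, so the remainder is either an older protocol version or PoP-side work such as cert selection from the cert map. curl -v reports which protocol version was negotiated.

- Request to first byte: ~100ms. The request travels to the PoP, crosses Google's private backbone to the europe-west1 backend, and the first response byte comes back.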
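curl's -w timers are cumulative, so the breakdown above is just subtraction. Below is a minimal sketch of that subtraction as a shell function. The function name and output format are illustrative, not part of curl or any other tool:

```bash
#!/usr/bin/env bash
# Split curl's cumulative -w timers into per-phase durations in milliseconds.
# time_connect includes the DNS lookup; time_appconnect marks TLS completion;
# time_starttransfer marks the first response byte.
# usage: phase_breakdown TIME_CONNECT TIME_APPCONNECT TIME_STARTTRANSFER
#
# Example against a live endpoint:
#   read -r c t f < <(curl -o /dev/null -s \
#     -w '%{time_connect} %{time_appconnect} %{time_starttransfer}\n' \
#     https://food.cfg-lab.computingforgeeks.com/)
#   phase_breakdown "$c" "$t" "$f"
phase_breakdown() {
  awk -v c="$1" -v t="$2" -v f="$3" 'BEGIN {
    # Adding 0.5 before %d rounds each positive value to the nearest ms.
    printf "connect=%dms tls=%dms request=%dms\n",
      c * 1000 + 0.5, (t - c) * 1000 + 0.5, (f - t) * 1000 + 0.5
  }'
}
```

Printing whole milliseconds is deliberate. Sub-millisecond precision is noise at these RTTs.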

For a client that happens to be in the same region as the backend (a user in Brussels, the backend in europe-west1), the path is Europe edge to Europe backbone to Europe backend, and the PoP detour shrinks to under 50ms. A regional LB would shave maybe 30-50ms for that client. Not dramatic.

For a client in a region without a Google edge PoP, anycast still routes to the nearest PoP, often one or two continents away. For these clients, the global LB's advantage over the public internet is the backbone hop. The disadvantage is that the client still pays the full RTT to that distant PoP. A regional LB won't fix that, and nothing will short of a PoP near the client.

When to Pick Regional

Three signals, any one sufficient:

- Latency-critical real-time traffic, with users concentrated in one region. Examples: WebSockets for live ordering, order-book streaming, multiplayer game matchmaking. Saving the PoP hop matters when the latency budget is measured in milliseconds.

- ccTLD-specific services. Take a .fr domain serving only French users. Anycast still works, but the ops story is simpler if the cert, the LB, and the backend all live in europe-west9 together. Rotation, failover, and incident response all stay regional.

- Cost shaving at low traffic volumes. Regional forwarding rules cost less than global ones ($0.018/hr vs $0.025/hr at the time of writing). For a service doing 100 requests per day, the LB overhead dominates. For a service doing 10K RPS, the traffic cost dominates and the LB type is noise. Cost-sensitive and low-traffic: regional. Cost-insensitive or high-traffic: it doesn't matter, so pick for architecture.
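Where the fixed charge stops mattering depends on response size as well as request rate. Below is a rough sketch that puts the two costs side by side. It assumes a 730-hour month, decimal gigabytes, and an average response size you supply. The rates are the approximate figures quoted in this article (the per-GB rate comes from the cost table further down), not live prices:

```bash
#!/usr/bin/env bash
# Rough monthly cost split for one LB: the fixed forwarding-rule charge vs
# per-GB data processing. All rates are inputs; nothing here is a price quote.
HOURS_PER_MONTH=730

# usage: lb_monthly_costs RPS AVG_RESPONSE_KB HOURLY_RULE_RATE PER_GB_RATE
lb_monthly_costs() {
  awk -v rps="$1" -v kb="$2" -v hourly="$3" -v per_gb="$4" -v h="$HOURS_PER_MONTH" 'BEGIN {
    gb = rps * kb * h * 3600 / 1e6   # KB per month -> GB (decimal units)
    printf "fixed=%.2f processing=%.2f\n", hourly * h, gb * per_gb
  }'
}
```

Assume 20 KB responses. A 10K RPS service then processes about 525,600 GB a month, roughly $4,200 at $0.008/GB against an $18.25 forwarding-rule charge, which is why the LB type is noise at that scale. At 1 RPS the same inputs give about $0.42 of processing, and the fixed charge is the whole bill.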

When to Pick Global

Also three signals, any one sufficient:

- Multi-region user base. Anycast means every user reaches an edge PoP close to them. The alternative (one regional LB per region with DNS-based steering) is much more work.

- Wildcard consolidation priority. Certificate Maps on global LBs scale to thousands of entries. One cert map per region for the regional case starts duplicating infrastructure.

- Edge controls. Cloud Armor edge security policies, IAP at the edge, HTTP/3: all of these run on the PoP. A regional LB has no PoP, so these features attach later in the traffic path, or not at all.

The pragmatic consolidation pattern this series advocates: Global for the main cert-map-backed shared LB, Regional for a small number of latency-critical or ccTLD-specific services. Not one-or-the-other.

A Regional Cert: Location Scoping Matters

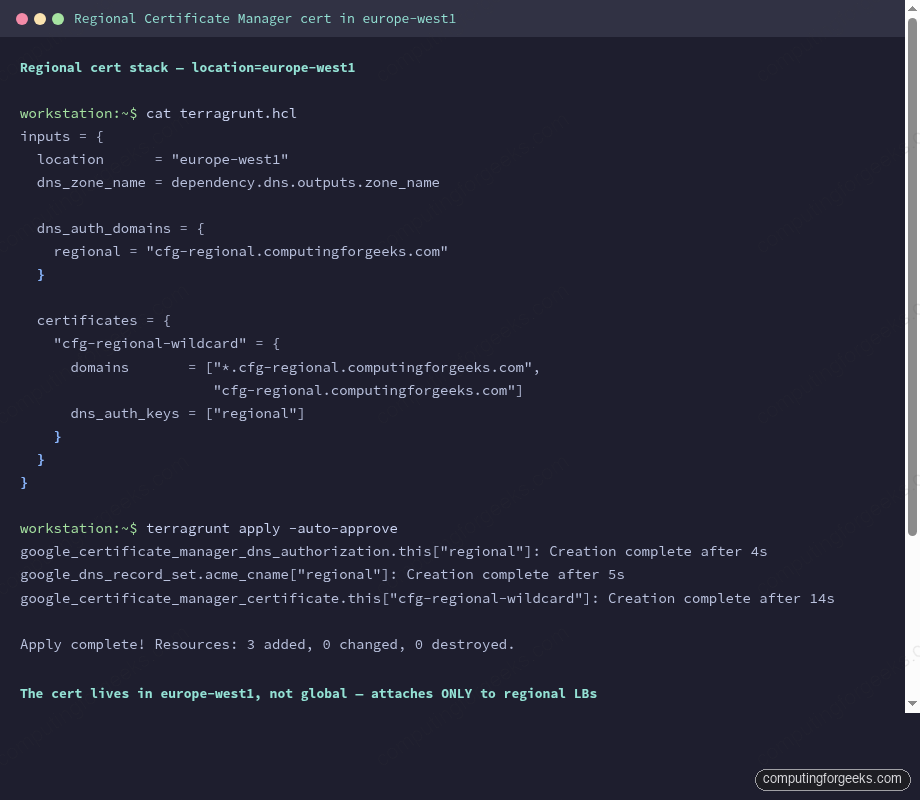

Certificate Manager certs have a location. A global cert attaches only to global LBs. A regional cert attaches only to regional LBs in the same region. The Terraform module from earlier in the series accepts location as an input; the only change from the global cert stack is the region token:

```hcl
inputs = {
  location      = "europe-west1"
  dns_zone_name = dependency.dns.outputs.zone_name

  dns_auth_domains = {
    regional = "cfg-regional.computingforgeeks.com"
  }

  certificates = {
    "cfg-regional-wildcard" = {
      domains = ["*.cfg-regional.computingforgeeks.com",
                 "cfg-regional.computingforgeeks.com"]
      dns_auth_keys = ["regional"]
    }
  }
}
```

Apply it:

```bash
cd live/article-lab/europe-west1/certs-cfg-regional
terragrunt apply -auto-approve
```

Three resources, 23 seconds of create time, then a few minutes of background DNS-01 validation by Google Trust Services. Same mechanics as the global cert, with two differences worth noting.

First, the ACME CNAME target has a region token embedded: <uuid>.3.europe-west1.authorize.certificatemanager.goog. For a global cert the target is just <uuid>.11.authorize.certificatemanager.goog. The region token means Google’s validation infrastructure picks a regional validator, which makes sense: regional certs are validated by the region they live in.

Second, the CNAME name has a random token appended: _acme-challenge_hg22ewmkimt5e7fy.cfg-regional.computingforgeeks.com. Global authorizations use the plain _acme-challenge name. The token is per-authorization salt to prevent conflicts when multiple regional certs exist for the same domain (one authorization per region).
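In a zone that mixes global and regional authorizations, the region token in the CNAME target is the quickest tell. Below is a small sketch that classifies a target. It assumes the two shapes shown above are the only ones in play, and that region names follow the usual name-direction-number pattern (for example europe-west1). Feed it the output of dig +short CNAME for an _acme-challenge record:

```bash
#!/usr/bin/env bash
# Classify a Certificate Manager ACME CNAME target as global or regional.
# Assumption: regional targets carry the region as the label just before
# "authorize.certificatemanager.goog"; global targets carry a number there.
# usage: acme_target_scope CNAME_TARGET   -> prints region, "global" or "unknown"
acme_target_scope() {
  local target=${1%.}   # drop the trailing dot that dig prints
  local prefix=${target%.authorize.certificatemanager.goog}
  if [[ "$prefix" == "$target" ]]; then
    echo "unknown"      # not a Certificate Manager validation target
    return 1
  fi
  local last_label=${prefix##*.}
  if [[ "$last_label" =~ ^[a-z]+-[a-z]+[0-9]+$ ]]; then
    echo "$last_label"
  else
    echo "global"
  fi
}
```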

DNS Authorization Reuse

One DNS authorization resource can back many certs for the same domain. This matters for the hybrid pattern: a global cert for the main shared LB plus a regional cert for a latency-critical ccTLD service, both covering the same domain name. The pattern:

```hcl
certificates = {
  "domain-global" = {
    domains       = ["*.example.com"]
    dns_auth_keys = ["example"]
  }

  "domain-regional-eu" = {
    domains       = ["*.example.com"]
    dns_auth_keys = ["example"]
  }
}
```

Both certs share one authorization, one CNAME in DNS, and one rotation lifecycle for the authorization itself. The certs rotate independently on their own schedules. Authorization reuse cuts the DNS surface in half.

Gateway API Makes the LB Swap a Two-Line Change

On GKE, switching a Gateway between global and regional comes down to two fields: the gatewayClassName and the cert map annotation. Everything else stays the same:

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: cfg-regional-gw
  namespace: cfg-demo
  annotations:
    networking.gke.io/certmap: cfg-regional-cert-map
spec:
  gatewayClassName: gke-l7-regional-external-managed
  listeners:
  - name: https
    protocol: HTTPS
    port: 443
    tls:
      mode: Terminate
      options:
        networking.gke.io/pre-shared-certs: ""
```

There are two swaps relative to the global Gateway from the previous article. gatewayClassName flips to gke-l7-regional-external-managed, and certmap points at a regional cert map instead of the global one. HTTPRoutes don't change. Backend services don't change. The cluster doesn't change.
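If the global Gateway manifest stays the source of truth, the two swaps can be scripted. The sketch below assumes the global manifest uses the gke-l7-global-external-managed class and carries a single networking.gke.io/certmap annotation. The regional cert map name is passed in, and cfg-global-cert-map in the test input is a hypothetical name:

```bash
#!/usr/bin/env bash
# Derive a regional Gateway manifest from a global one by rewriting the two
# fields that differ. Reads YAML on stdin, writes YAML on stdout.
# Assumption: the global manifest uses gke-l7-global-external-managed.
# usage: to_regional_gateway REGIONAL_CERT_MAP < global-gateway.yaml
to_regional_gateway() {
  local cert_map=$1
  sed \
    -e 's/gatewayClassName: gke-l7-global-external-managed/gatewayClassName: gke-l7-regional-external-managed/' \
    -e "s|networking.gke.io/certmap: .*|networking.gke.io/certmap: ${cert_map}|"
}
```

A plain text rewrite keeps the diff reviewable. Anything beyond these two fields showing up in the diff is a sign the manifests have drifted.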

The cert map referenced by the regional Gateway must contain regional-scoped entries only. Mixing global and regional certs in one map is not supported by the API, which is how you discover the mistake: the Gateway stays stuck in PROGRAMMED=False with a “cert map has incompatible cert scope” error.
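The scope mismatch only surfaces after the Gateway is applied, so a pre-flight check on cert locations can catch it earlier. The sketch below encodes the location rule from the cert section: a global cert attaches only to a global LB, and a regional cert attaches only to a regional LB in the same region. It assumes you already have the locations of the cert map's certificates as plain strings, for example from Terraform outputs:

```bash
#!/usr/bin/env bash
# Pre-flight check: every cert location must match the LB's location.
# LB_LOCATION is "global" for a Global External ALB, or the region name
# (e.g. europe-west1) for a Regional External ALB.
# usage: check_cert_scope LB_LOCATION CERT_LOCATION...
check_cert_scope() {
  local lb_location=$1
  shift
  local cert_location bad=0
  for cert_location in "$@"; do
    if [[ "$cert_location" != "$lb_location" ]]; then
      echo "incompatible: cert in $cert_location, LB in $lb_location" >&2
      bad=1
    fi
  done
  return "$bad"
}
```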

Backend Compatibility

Regional ALB does not support global backend resources. If the global LB was fronting a google_compute_backend_bucket with a globally-scoped bucket, a regional ALB needs a regional equivalent: a regional NEG pointing at Cloud Run or GKE Services, or a regional backend service wrapping an internet NEG for external origins. Backend bucket works for the global case only.

GKE Gateway sidesteps this because the controller generates the right backend shape from the HTTPRoute’s service references. For a Terraform-managed LB with a bucket backend, expect to replace google_compute_backend_bucket with google_compute_region_backend_service + a regional NEG.

Cost Comparison (Rough, for Calibration)

This is not a pricing spec: pricing changes, and only the official pricing page is authoritative. The numbers below are orders of magnitude, meant to inform a decision rather than drive procurement.

| Item | Global External ALB | Regional External ALB |
|---|---|---|
| Forwarding rule (per rule, per hour) | ~$0.025 | ~$0.018 |
| Data processing (per GB) | ~$0.008-0.012 | ~$0.008 |
| Cross-region backend egress | Charged per GB | N/A (in-region only) |
| IP address | Anycast IP, included | Regional IP, included |
| Minimum monthly LB charge (one rule, ~730 h) | ~$18/mo | ~$13/mo |

At 10 RPS, the LB overhead dominates regardless. At 10K RPS, traffic dominates both. The interesting zone is 100-1K RPS: a ~$5/mo delta per LB × 30 services × 4 environments comes to ~$600/mo. That is material for a startup and a rounding error for a mid-size org.
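Here is the arithmetic behind that estimate, using a 730-hour month. The hourly rates are the approximate figures from the table, and the service and environment counts are inputs:

```bash
#!/usr/bin/env bash
# Monthly forwarding-rule savings from choosing regional over global LBs.
# usage: fleet_monthly_delta GLOBAL_HOURLY REGIONAL_HOURLY SERVICES ENVIRONMENTS
fleet_monthly_delta() {
  awk -v g="$1" -v r="$2" -v s="$3" -v e="$4" 'BEGIN {
    per_lb = (g - r) * 730   # hourly delta x hours in a month
    printf "per_lb=%.2f fleet=%.2f\n", per_lb, per_lb * s * e
  }'
}
```

With the table's rates, the per-LB delta is $5.11/mo. Thirty services across four environments (120 LBs) come to about $613/mo, consistent with the ~$600 figure above.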

Decision Tree

- Does the service need cross-region failover or edge PoP features (IAP, Cloud Armor edge, HTTP/3)? Global.

- Is the service real-time and latency-bound with users concentrated in one region? Regional.

- Is the service ccTLD-specific with all users in the TLD’s region? Regional.

- Is the priority wildcard-cert consolidation on a shared LB? Global.

- None of the above? Global. (The default is the default for good reason.)
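The tree is ordered, and the first matching question wins. Below it is encoded as a shell function, for example for a service-onboarding checklist script. The flag names are hypothetical labels for the four questions above, not options of any existing tool:

```bash
#!/usr/bin/env bash
# Encode the LB decision tree. Flags (hypothetical names, one per question):
#   failover-or-edge        needs cross-region failover or edge PoP features
#   realtime-one-region     real-time, latency-bound, users in one region
#   cctld-one-region        ccTLD-specific, all users in the TLD's region
#   wildcard-consolidation  priority is wildcard-cert consolidation on a shared LB
# usage: pick_lb [FLAG...]   -> prints "global" or "regional"
pick_lb() {
  local rule flag
  # Walk the questions in the article's order; the first match decides.
  for rule in failover-or-edge realtime-one-region cctld-one-region wildcard-consolidation; do
    for flag in "$@"; do
      [[ "$flag" == "$rule" ]] || continue
      case "$rule" in
        failover-or-edge | wildcard-consolidation) echo global ;;
        *) echo regional ;;
      esac
      return 0
    done
  done
  echo global   # none of the above: the default
}
```

Ordering is the point of the design. A latency-bound service that also needs cross-region failover still lands on global, because the failover question comes first.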

This maps cleanly onto the consolidation outcome matrix in the series opener: “regional LB for latency-critical apps” is one outcome; “certificate maps on shared LBs” is another. Both can be true in the same organization, for different services.

What This Article Doesn’t Do (Yet)

A full regional ALB deployment with a real latency comparison from multiple geographic probes would require clients in several regions running timed loops, which is beyond the scope of a single article. The reliable alternative is to cross-reference Google’s own published ALB latency docs and accept that a regional LB saves a PoP hop for in-region users and adds no value for out-of-region users. That’s the whole story.

Later articles in this series re-use the regional cert: the capstone demo in Article 10 exposes food.cfg-regional.computingforgeeks.com as a regional-LB variant of the main food service, specifically to demonstrate the zero-incident rotation on both LB tiers in the same session.

Cleanup

Regional cert resources cost nothing on their own; no forwarding rule yet, no cert map, no attachment. The cert rotates silently every 60-90 days for free. Leave it in place between articles.

When you do destroy, the same module handles it: terragrunt destroy in the cert stack removes the cert first, then the ACME CNAME, then the DNS authorization. Regional-specific resources clean up the same way as their global counterparts. The only difference is the scope tag on each resource, which is invisible to Terraform's dependency graph.

What’s Next

Regional and global ALBs cover the non-sensitive traffic plane. The next article is where the financial-services outcome starts: Private CA with CA Service, a dedicated LB separate from the shared one, and a distinct cert chain that can eventually back SPKI pinning. That’s the hard trust boundary the consolidation plan deliberately preserves.