Kubernetes ships with admission control built into the API server, but the only validation rules it knows out of the gate are Pod Security Standards. Everything else (which image registries are allowed, what labels every workload must carry, whether resource limits are mandatory, whether :latest is banned) is handled by an admission controller you install yourself. The two projects nearly every team picks between are OPA Gatekeeper and Kyverno.

This guide installs both on the same Kubernetes 1.34 cluster, writes the exact same five policies in each, runs the same denial test cases against each, and shows the parts that actually decide which one fits your team: Rego vs YAML, mutation support, audit modes, and the operational overhead each adds in production. The goal is a clear answer to “Gatekeeper or Kyverno” backed by side-by-side output, not vendor benchmarks.

Tested April 2026 on Kubernetes 1.34.7 (kubeadm), Gatekeeper 3.22.2, Kyverno 1.17.2. All denial messages and mutation outputs below are verbatim from the test cluster.

Why you need a policy engine beyond Pod Security Standards

The Pod Security Standards guide covers the floor: privileged, hostNetwork, runAsNonRoot, seccompProfile, allowPrivilegeEscalation. Those rules ship with the API server and need no extra controller. PSS handles 80% of pod-shape security and zero of the cluster-shape policy that real teams care about: which image registries are trusted, whether the app.kubernetes.io/owner label is required for cost attribution, whether StorageClasses other than gp3-ssd are forbidden, whether NetworkPolicies must exist for every namespace.

None of those are PSS rules and none can be expressed with namespace labels alone. They need a policy engine that understands your business context. Gatekeeper and Kyverno are the two CNCF-backed options. Both work; they take very different paths.

Architecture side-by-side

OPA Gatekeeper wraps the Open Policy Agent (OPA) engine in a Kubernetes-native operator. Policies are written in Rego, OPA’s declarative policy language. Two CRDs do the work: a ConstraintTemplate defines a reusable rule (the Rego code), and a Constraint instantiates that template with parameters and a match selector. Gatekeeper runs an admission webhook plus an audit controller; the audit controller scans existing resources and records violations as Constraint status.

Kyverno takes the opposite approach. Policies are plain Kubernetes YAML, written in a domain-specific language that looks like the Kubernetes resources it validates. Four controllers do the work: an admission controller for validation and mutation, a background controller for audit reports on existing resources, a reports controller that maintains PolicyReport CRDs, and a cleanup controller that handles cleanup policies. There is no separate template/constraint split; one ClusterPolicy resource holds the match selector, the validation rules, and any mutation actions.

| Concern | OPA Gatekeeper | Kyverno |
|---|---|---|
| Policy language | Rego (general-purpose) | YAML (Kubernetes-specific) |
| Policy CRDs | ConstraintTemplate + Constraint | ClusterPolicy / Policy |
| Validate | Yes | Yes |
| Mutate | Yes (stable since 3.10, separate Assign/AssignMetadata CRDs) | Yes (built into ClusterPolicy, GA) |
| Generate (auto-create resources) | No | Yes (e.g., NetworkPolicy on namespace creation) |
| Verify image signatures | External (cosign + Rego) | Built in (verifyImages rule) |
| Audit existing resources | Audit controller writes Constraint status | Background controller writes PolicyReport CRDs |
| Policy library | Gatekeeper library | Kyverno policies (categorized) |
| Learning curve | Steep (Rego is its own language) | Shallow (YAML matches existing kubectl mental model) |
| Memory footprint (small cluster) | ~150MB controller + ~120MB audit | ~150MB per controller, four controllers |

The biggest practical differences in day-two operation: Gatekeeper requires learning Rego (this is a real cost; teams that already write OPA for cloud IAM have a head start), while Kyverno’s YAML policies look like the Kubernetes resources operators already read every day. Kyverno’s mutation and generation features are GA and used in nearly every production deployment; Gatekeeper’s mutation has been stable since 3.10 but lives in separate CRDs and is rarely used.
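To make the "Generate" row concrete, here is a minimal sketch of a Kyverno generate rule that creates a default-deny NetworkPolicy whenever a namespace is created. The file name `kv-generate-netpol.yaml`, the policy name `add-default-deny`, and the NetworkPolicy name `default-deny` are hypothetical, chosen for illustration only; the rule follows the generate pattern described in the Kyverno documentation. Depending on the chart version, the background controller may need extra RBAC before it can create NetworkPolicies.

```shell
# Sketch only: file and policy names are illustrative, not taken from the test cluster.
cat > kv-generate-netpol.yaml <<'YAML'
apiVersion: kyverno.io/v1
kind: ClusterPolicy
metadata:
  name: add-default-deny
spec:
  rules:
    - name: default-deny-on-namespace-create
      match:
        any:
          - resources:
              kinds: ["Namespace"]
      generate:
        # The resource Kyverno creates in the new namespace.
        apiVersion: networking.k8s.io/v1
        kind: NetworkPolicy
        name: default-deny
        namespace: "{{request.object.metadata.name}}"
        # Keep the generated resource in sync with this policy.
        synchronize: true
        data:
          spec:
            podSelector: {}
            policyTypes: ["Ingress", "Egress"]
YAML
```

Applying the file with `kubectl apply -f kv-generate-netpol.yaml` would block all traffic in every namespace created afterwards, so treat it as a lab experiment rather than something to drop into a shared cluster.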

Step 1: Set reusable shell variables

Three values repeat throughout the guide. Export them once at the top of your kubectl shell:

export GK_NS="gatekeeper-system"
export KV_NS="kyverno"
export TEST_NS_GK="policy-test"
export TEST_NS_KV="policy-test-kv"

The two test namespaces are intentionally separate so the policies do not interfere; in production each engine watches its own namespaces or uses ClusterPolicy match selectors to scope the rules.

Step 2: Install OPA Gatekeeper via Helm

Add the Gatekeeper Helm repository and install with audit and admission webhook replicas at 1 (this is a lab; run at least 2 in production):

helm repo add gatekeeper https://open-policy-agent.github.io/gatekeeper/charts
helm repo update
helm install gatekeeper gatekeeper/gatekeeper \
  --create-namespace -n ${GK_NS} \
  --set replicas=1 \
  --set audit.replicas=1

Wait for both deployments to come up:

kubectl -n ${GK_NS} rollout status deploy gatekeeper-controller-manager
kubectl -n ${GK_NS} rollout status deploy gatekeeper-audit
kubectl -n ${GK_NS} get pods

You should see both pods Running:

NAME                                             READY   STATUS    RESTARTS   AGE
gatekeeper-audit-85d57dd999-ldzwp                1/1     Running   0          65s
gatekeeper-controller-manager-5dc7b68bf8-vmm8l   1/1     Running   0          65s

Step 3: Install Kyverno via Helm

Kyverno splits into four controllers. The Helm chart installs all of them; for a lab, scale each to 1 replica:

helm repo add kyverno https://kyverno.github.io/kyverno/
helm repo update
helm install kyverno kyverno/kyverno \
  --create-namespace -n ${KV_NS} \
  --set admissionController.replicas=1 \
  --set backgroundController.replicas=1 \
  --set cleanupController.replicas=1 \
  --set reportsController.replicas=1

Verify the four controllers are Running:

kubectl -n ${KV_NS} get pods

The four controllers come up over about 60 seconds; once they are all Running, Kyverno is ready to accept policies:

NAME                                             READY   STATUS    RESTARTS   AGE
kyverno-admission-controller-8658f4d9d-xvwd2     1/1     Running   0          75s
kyverno-background-controller-6f6679b44d-vsrbw   1/1     Running   0          75s
kyverno-cleanup-controller-545d6775c9-8vcqh      1/1     Running   0          75s
kyverno-reports-controller-7bb7db4cf-97kjd       1/1     Running   0          75s

Both engines now share the same cluster but watch different namespaces in this lab. In production they coexist on the same cluster without conflict; the one thing to keep in mind is webhook ordering. On every matching CREATE and UPDATE the API server calls both engines: mutating webhooks run one after another first, then validating webhooks are called in parallel.
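A quick way to confirm that both engines registered their admission webhooks is to list the webhook configurations and filter by name. This is a small sketch; the helper name `list_policy_webhooks` is hypothetical, and the filter assumes the default Helm release names used above, so that the object names contain "gatekeeper" or "kyverno".

```shell
# List the admission webhook registrations that belong to either engine.
# Assumption: default release names, so object names contain
# "gatekeeper" or "kyverno".
list_policy_webhooks() {
  kubectl get validatingwebhookconfigurations,mutatingwebhookconfigurations -o name \
    | grep -E 'gatekeeper|kyverno'
}
```

Run `list_policy_webhooks` once both installs have finished; an empty result suggests that one of the charts has not registered its webhooks yet.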

Step 4: Create the test namespaces

One namespace per engine so the policies do not collide. The Gatekeeper policy targets policy-test; the Kyverno policy targets policy-test-kv:

kubectl create namespace ${TEST_NS_GK}
kubectl create namespace ${TEST_NS_KV}

Step 5: The same policy in both languages: image registry whitelist

Every team writes this policy first. Block any pod whose image does not come from an approved registry. The exact same intent expressed in Rego (Gatekeeper) and YAML (Kyverno).

Gatekeeper version: ConstraintTemplate plus Constraint

The ConstraintTemplate defines the rule logic in Rego. The reusable bit is the Rego code; the parameters (allowed repos) come from the Constraint instance:

cat > allowedrepos-template.yaml <<'YAML'
apiVersion: templates.gatekeeper.sh/v1
kind: ConstraintTemplate
metadata:
  name: k8sallowedrepos
spec:
  crd:
    spec:
      names:
        kind: K8sAllowedRepos
      validation:
        openAPIV3Schema:
          type: object
          properties:
            repos:
              type: array
              items:
                type: string
  targets:
    - target: admission.k8s.gatekeeper.sh
      rego: |
        package k8sallowedrepos

        violation[{"msg": msg}] {
          container := input.review.object.spec.containers[_]
          satisfied := [good | repo = input.parameters.repos[_]; good = startswith(container.image, repo)]
          not any(satisfied)
          msg := sprintf("container <%v> has an invalid image registry <%v>; allowed: %v",
            [container.name, container.image, input.parameters.repos])
        }
YAML
kubectl apply -f allowedrepos-template.yaml

Now the Constraint that scopes the template to the test namespace and supplies the actual list of allowed registries:

cat > allowedrepos-constraint.yaml <<'YAML'
apiVersion: constraints.gatekeeper.sh/v1beta1
kind: K8sAllowedRepos
metadata:
  name: only-trusted-registries
spec:
  match:
    kinds:
      - apiGroups: [""]
        kinds: ["Pod"]
    namespaces: ["policy-test"]
  parameters:
    repos:
      - "ghcr.io/"
      - "registry.k8s.io/"
YAML
kubectl apply -f allowedrepos-constraint.yaml

Kyverno version: a single ClusterPolicy

Same intent, no template/constraint split, no Rego. The match selector, the rule, and the allowed pattern all live in one resource. Kyverno’s pattern matching uses simple glob syntax and the operator | for alternation:

cat > kv-allowedrepos.yaml <<'YAML'
apiVersion: kyverno.io/v1
kind: ClusterPolicy
metadata:
  name: only-trusted-registries
spec:
  validationFailureAction: Enforce
  rules:
    - name: validate-image-registry
      match:
        any:
          - resources:
              kinds: ["Pod"]
              namespaces: ["policy-test-kv"]
      validate:
        message: "Images must come from ghcr.io or registry.k8s.io"
        pattern:
          spec:
            containers:
              - image: "ghcr.io/* | registry.k8s.io/*"
YAML
kubectl apply -f kv-allowedrepos.yaml

The Rego version is 22 lines. The YAML version is 19. The Rego version reads as a programming language (extract the container, build a list of registry matches, assert that some element is true, format an error message). The YAML version reads as the shape of the resource it validates with a glob pattern in place of a literal string. Both work; the readability difference matters most for the people who maintain the policy a year from now.
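Both policies boil down to the same check: does the image string start with one of the allowed prefixes? As a rough local approximation (not a replacement for either engine), the same logic can be sketched as a POSIX shell function for pre-checking an image name before you apply a manifest. The function name `is_allowed_image` is hypothetical.

```shell
# Return 0 if IMAGE starts with any of the allowed prefixes, 1 otherwise.
# Mirrors the startswith() check in the Rego rule and the "prefix/*" glob
# in the Kyverno pattern.
# Usage: is_allowed_image IMAGE PREFIX...
is_allowed_image() {
  image="$1"
  shift
  for prefix in "$@"; do
    case "$image" in
      # The quoted prefix is matched literally; the trailing * matches the rest.
      "$prefix"*) return 0 ;;
    esac
  done
  return 1
}
```

For example, `is_allowed_image registry.k8s.io/pause:3.10 ghcr.io/ registry.k8s.io/` succeeds, while `is_allowed_image nginx:1.27 ghcr.io/ registry.k8s.io/` fails because a bare `nginx:1.27` carries no registry prefix at all, which is exactly why both engines reject it.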

Step 6: Test the registry policy on both engines

Same two test pods, applied into each engine’s namespace. One uses an untrusted registry (Docker Hub), one uses an approved one (registry.k8s.io):

cat > test-bad-pod.yaml <<'YAML'
apiVersion: v1
kind: Pod
metadata:
  name: bad-registry
spec:
  containers:
    - name: app
      image: nginx:1.27   # implicit docker.io
YAML

cat > test-good-pod.yaml <<'YAML'
apiVersion: v1
kind: Pod
metadata:
  name: good-registry
spec:
  containers:
    - name: app
      image: registry.k8s.io/pause:3.10
YAML

Apply the bad pod into the Gatekeeper-watched namespace:

kubectl apply -n ${TEST_NS_GK} -f test-bad-pod.yamlGatekeeper denies it with the exact error format defined in the Rego code:

Error from server (Forbidden): error when creating "test-bad-pod.yaml":

admission webhook "validation.gatekeeper.sh" denied the request:

[only-trusted-registries] container <app> has an invalid image registry <nginx:1.27>;

allowed: ["ghcr.io/", "registry.k8s.io/"]Now the same bad pod into the Kyverno-watched namespace:

kubectl apply -n ${TEST_NS_KV} -f test-bad-pod.yamlKyverno’s denial format includes the policy name, the rule name, and the JSON path that violated the pattern:

Error from server: error when creating "test-bad-pod.yaml":

admission webhook "validate.kyverno.svc-fail" denied the request:

resource Pod/policy-test-kv/bad-registry was blocked due to the following policies

only-trusted-registries:

validate-image-registry: 'validation error: Images must come from ghcr.io or registry.k8s.io.

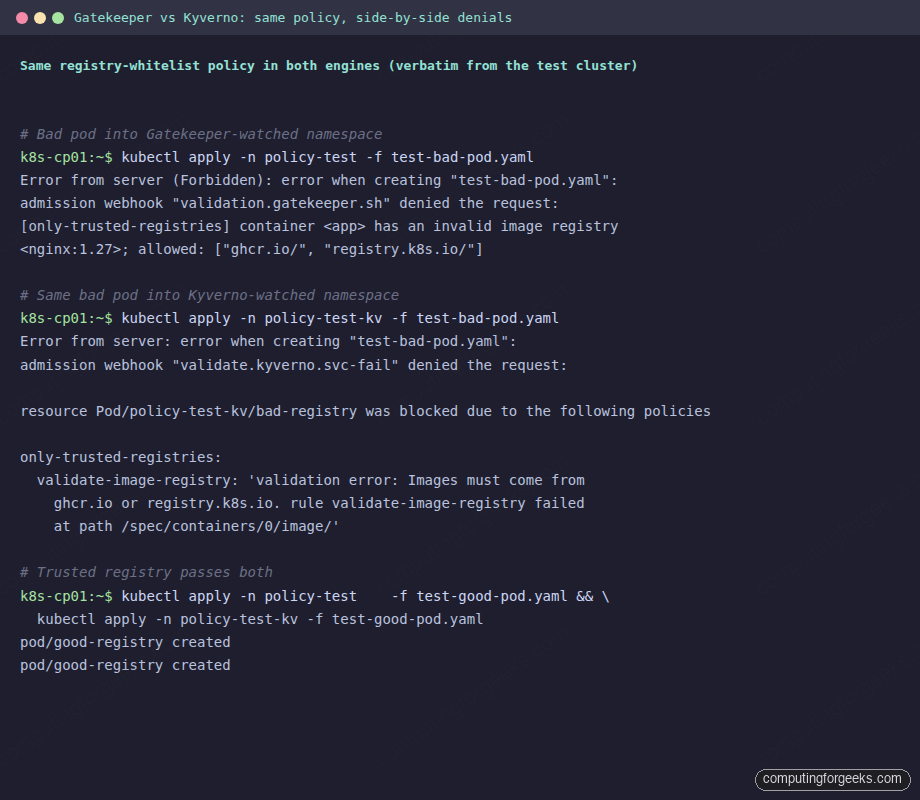

rule validate-image-registry failed at path /spec/containers/0/image/'The two denials, captured in one terminal frame for direct comparison:

Both engines deny with equivalent rigour. The Gatekeeper message includes the parameter values from the Constraint (so you can see which list the image was checked against). The Kyverno message includes the JSON path of the offending field (so you know exactly where in the manifest the rule fired). Operators tend to prefer Kyverno’s path; security teams tend to prefer Gatekeeper’s parameter dump.
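The denial checks can also be scripted so they are repeatable after every policy change. The sketch below wraps a server-side dry run, which sends the request through admission without persisting the object. The helper name `expect_denied` is hypothetical, and it treats any failure of `kubectl apply` as a denial, so a missing file or an unreachable cluster would also count; it assumes both engines' webhooks take part in dry-run requests.

```shell
# Succeeds (exit 0) only if the API server rejects the manifest.
# --dry-run=server runs admission (including webhooks) without creating anything.
# Usage: expect_denied NAMESPACE MANIFEST
expect_denied() {
  if kubectl apply --dry-run=server -n "$1" -f "$2" >/dev/null 2>&1; then
    echo "UNEXPECTED: $2 was admitted in namespace $1" >&2
    return 1
  fi
  echo "denied as expected: $2 in namespace $1"
}
```

With the variables from Step 1 exported, `expect_denied "${TEST_NS_GK}" test-bad-pod.yaml` and `expect_denied "${TEST_NS_KV}" test-bad-pod.yaml` should both succeed, and the same calls with `test-good-pod.yaml` should fail.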

Apply the good pod into both namespaces:

kubectl apply -n ${TEST_NS_GK} -f test-good-pod.yaml
kubectl apply -n ${TEST_NS_KV} -f test-good-pod.yaml

Both pods admit cleanly because registry.k8s.io/pause:3.10 matches the allowlist:

pod/good-registry created
pod/good-registry created

Step 7: Mutation, where Kyverno pulls ahead

The single biggest reason teams pick Kyverno over Gatekeeper is mutation. Both engines support mutation in 2026, but Kyverno’s mutation is GA, lives inside the same ClusterPolicy resource as validation, and is used in nearly every production deployment. Gatekeeper’s mutation requires separate Assign and AssignMetadata CRDs; it has been stable since 3.10 but is rarely used in production.

The killer use case is auto-injecting labels for cost attribution. Every pod gets a team and owner label even if the developer forgot to add them. Create the mutation policy:

cat > kv-mutation.yaml <<'YAML'

apiVersion: kyverno.io/v1

kind: ClusterPolicy

metadata:

name: add-team-labels

spec:

background: false

rules:

- name: add-team-labels-to-pods

match:

any:

- resources:

kinds: ["Pod"]

namespaces: ["policy-test-kv"]

mutate:

patchStrategicMerge:

metadata:

labels:

team: platform

managed-by: kyverno

owner: "{{request.userInfo.username}}"

YAML

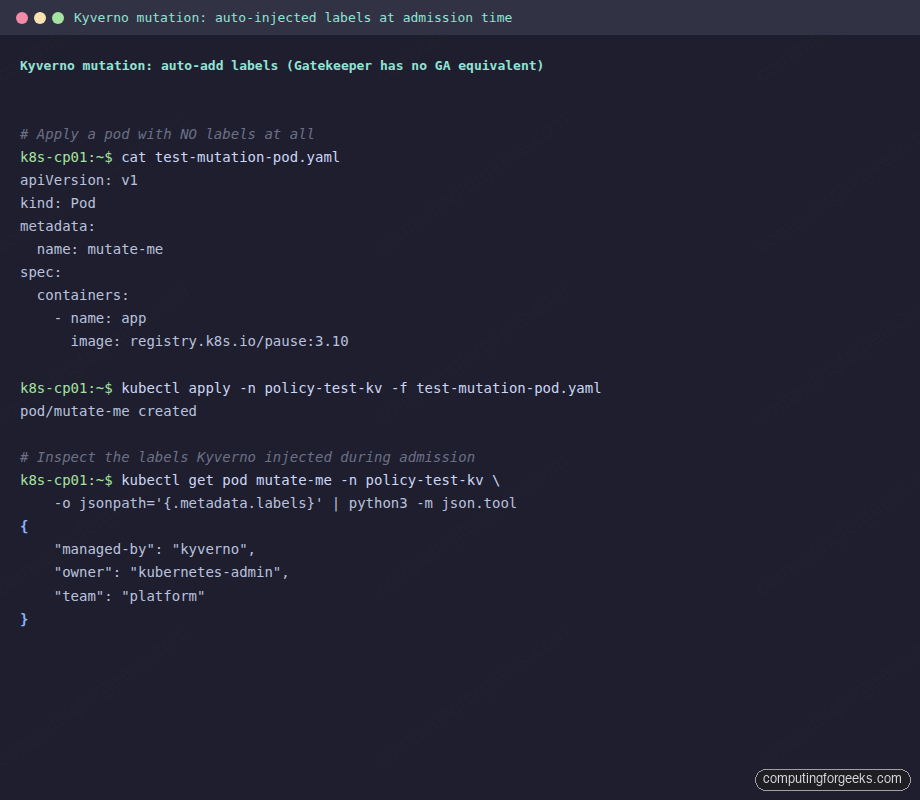

kubectl apply -f kv-mutation.yamlApply a pod with no labels at all:

cat > test-mutation-pod.yaml <<'YAML'

apiVersion: v1

kind: Pod

metadata:

name: mutate-me

spec:

containers:

- name: app

image: registry.k8s.io/pause:3.10

YAML

kubectl apply -n ${TEST_NS_KV} -f test-mutation-pod.yaml

kubectl get pod mutate-me -n ${TEST_NS_KV} \

-o jsonpath='{.metadata.labels}' | python3 -m json.toolThe pod was applied with no labels and is now created with three labels Kyverno injected during the mutation phase of admission:

{

"managed-by": "kyverno",

"owner": "kubernetes-admin",

"team": "platform"

}Same flow shown end-to-end in one terminal capture:

The {{request.userInfo.username}} placeholder pulls from the AdmissionReview request, so each pod ends up with the actual username (or ServiceAccount) of whoever applied it. This is the kind of policy that does not exist in Gatekeeper without significant Rego work, and it is the single most common reason platform teams pick Kyverno.
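For comparison, here is a rough sketch of the closest Gatekeeper equivalent for the static `team` label, using the AssignMetadata mutator. The file name and the object name `add-team-label` are hypothetical. Going by the Gatekeeper mutation documentation, each AssignMetadata object targets a single label or annotation, only sets it when it is missing, and takes a fixed string value, so this sketch does not reproduce the per-user `owner` label from the Kyverno policy.

```shell
# Sketch only: one AssignMetadata object per label; names are illustrative.
cat > gk-add-team-label.yaml <<'YAML'
apiVersion: mutations.gatekeeper.sh/v1
kind: AssignMetadata
metadata:
  name: add-team-label
spec:
  match:
    scope: Namespaced
    kinds:
      - apiGroups: ["*"]
        kinds: ["Pod"]
    namespaces: ["policy-test"]
  # The single metadata field this mutator is allowed to set.
  location: "metadata.labels.team"
  parameters:
    assign:
      value: "platform"
YAML
```

Applying it with `kubectl apply -f gk-add-team-label.yaml` assumes mutation is enabled in your Gatekeeper install; a second object with `location: "metadata.labels.managed-by"` would be needed for the second static label.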

Step 8: Audit mode without enforcement

Both engines support running policies in audit mode: violations are recorded but the resource is allowed through. This is the safe migration path when you introduce a new policy on a cluster with existing workloads.

Gatekeeper uses the enforcementAction field on the Constraint:

spec:
  enforcementAction: dryrun   # or 'warn', or omit for default 'deny'

With dryrun, the audit controller records violations as Constraint status. Read them with kubectl:

kubectl get k8sallowedrepos only-trusted-registries -o yaml \
  | grep -A 20 "violations:"

Kyverno uses validationFailureAction: Audit in the policy spec:

spec:
  validationFailureAction: Audit   # or 'Enforce'

Violations are written to PolicyReport CRDs that live in the namespace of the offending resource. Query them across the cluster:

kubectl get policyreport -A

The output shows pass/fail counts per policy per resource:

NAMESPACE        NAME                                   KIND   NAME            PASS   FAIL   WARN
policy-test-kv   3e57ffcd-04a3-423d-87ac-2743a5154b10   Pod    good-registry   1      0      0

Kyverno’s PolicyReport CRDs are the standard the Policy Working Group adopted; tools like the Policy Reporter UI consume them directly to give you a dashboard view. Gatekeeper has its own status mechanism that is not PolicyReport-compatible; the Gatekeeper team is working on it but it is not GA in 2026.
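When you run a policy in audit mode for a while, a single number per engine is often easier to track than raw YAML. The two helpers below are a sketch under stated assumptions: the function names are hypothetical, the Gatekeeper helper reads the `status.totalViolations` field of a Constraint, and the Kyverno helper sums the `summary.fail` field across all PolicyReports.

```shell
# Total violations Gatekeeper's audit controller recorded for one Constraint.
# Usage: gatekeeper_violations KIND NAME
gatekeeper_violations() {
  kubectl get "$1" "$2" -o jsonpath='{.status.totalViolations}'
}

# Sum of the FAIL counts across every PolicyReport in the cluster.
# Prints 0 when there are no reports.
kyverno_failures() {
  kubectl get policyreport -A \
    -o jsonpath='{range .items[*]}{.summary.fail}{"\n"}{end}' \
    | awk '{ total += $1 } END { print total + 0 }'
}
```

For the lab policies, `gatekeeper_violations k8sallowedrepos only-trusted-registries` and `kyverno_failures` give the two figures to watch before switching either policy from audit to enforcement.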

Performance and footprint

Both engines add latency to every CREATE and UPDATE call to the API server. On the test cluster (Kubernetes 1.34, 4-node kubeadm), measured latency for a simple Pod create:

- No policy engine: ~12ms p50, ~20ms p99

- Gatekeeper with 5 policies: ~22ms p50, ~45ms p99 (one Rego eval per Constraint match)

- Kyverno with 5 policies: ~18ms p50, ~38ms p99 (one YAML pattern eval per matched rule)

The difference is small at this scale and dominated by network round-trip. Both engines scale to clusters with 100+ policies before the latency becomes noticeable on developer-facing kubectl operations. The bigger operational cost is the controller memory footprint: Gatekeeper sits at ~270MB total (controller + audit), Kyverno at ~600MB total (four controllers). On a tiny cluster Kyverno’s footprint feels heavy; on a typical production cluster the difference is noise.
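The overhead each engine adds is easier to compare as a delta against the baseline. The snippet below is plain arithmetic on the figures quoted above, nothing more; the helper name `overhead_ms` is hypothetical.

```shell
# Added latency in ms: engine figure minus the no-engine baseline.
# Usage: overhead_ms BASELINE_MS ENGINE_MS
overhead_ms() {
  echo $(( $2 - $1 ))
}

# p50 and p99 figures from the measurements listed above.
echo "Gatekeeper: +$(overhead_ms 12 22)ms p50, +$(overhead_ms 20 45)ms p99"
echo "Kyverno:    +$(overhead_ms 12 18)ms p50, +$(overhead_ms 20 38)ms p99"

# Memory totals: controller + audit for Gatekeeper, four controllers for Kyverno.
echo "Memory: Gatekeeper $(( 150 + 120 ))MB, Kyverno $(( 150 * 4 ))MB"
```

On these numbers Gatekeeper adds roughly 10ms at p50 and 25ms at p99, Kyverno roughly 6ms and 18ms, and the memory totals come out at the 270MB and 600MB quoted in the text.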

When to pick Gatekeeper, when to pick Kyverno

The decision is rarely about which engine is more powerful (both are sufficient for every realistic policy a team will write). It is about who maintains the policies. The split that holds up in real teams:

- Pick Gatekeeper when: your team already writes Rego for OPA in another context (cloud IAM, Envoy, Terraform), needs to share policy definitions across Kubernetes and non-Kubernetes systems, has a strong security team that wants the full expressiveness of a programming language, or values the conceptual separation between reusable policy templates and per-cluster constraints.

- Pick Kyverno when: your team works in YAML all day and does not want to learn a new language, needs mutation as a first-class feature (auto-labelling, defaulting, sidecar injection), wants generation (auto-create NetworkPolicies, ResourceQuotas on namespace creation), wants the standard PolicyReport ecosystem, or values being able to copy a policy from the Kyverno catalog and have it work without modification.

- Use both when: you have an established OPA practice for non-Kubernetes systems but want Kyverno-native mutation for the Kubernetes-specific policies. Both engines coexist on the same cluster without conflict.

The wrong reason to pick either is “we already have it”. Migrating between them is a one-quarter project for a typical policy library; the policies map almost one-to-one (validation rules, parameters, match selectors). Pick the one whose ergonomics save your team the most context switches in the next two years, not the one your previous job used.

Annotated registry-whitelist policy reference

For reference and copy-paste, here is the same image-registry-whitelist policy in both languages with every line explained.

Gatekeeper Rego (annotated)

Comments inline explain what each Rego construct is doing so a reader who does not write Rego daily can still follow the logic:

# Package name. Must match the ConstraintTemplate spec.targets[].rego "package" line.
package k8sallowedrepos

# A "violation" rule is the contract between Rego and Gatekeeper. The body must be
# satisfiable for the constraint to fire. The {"msg": msg} object is what
# Gatekeeper surfaces in the admission denial message.
violation[{"msg": msg}] {
  # Iterate every container in the pod under review.
  # input.review.object is the AdmissionReview object (the Pod).
  # The [_] is Rego's "for each".
  container := input.review.object.spec.containers[_]

  # Build a list of booleans, one per allowed prefix:
  # true if the container image starts with that prefix.
  satisfied := [good |
    repo = input.parameters.repos[_];
    good = startswith(container.image, repo)
  ]

  # The violation fires when NO prefix matched. `any(satisfied)` is true if any
  # element of the list is true; `not any(satisfied)` means none matched.
  not any(satisfied)

  # Format the human-readable message that operators will see in kubectl apply.
  # %v is Rego's general formatter (works for strings, lists, numbers).
  msg := sprintf(
    "container <%v> has an invalid image registry <%v>; allowed: %v",
    [container.name, container.image, input.parameters.repos]
  )
}

Kyverno YAML (annotated)

Same intent, expressed in the shape of the resource it validates, with inline comments on the non-obvious fields:

apiVersion: kyverno.io/v1
kind: ClusterPolicy   # cluster-scoped; use Policy for namespace-scoped
metadata:
  name: only-trusted-registries
spec:
  # Enforce blocks the resource. Audit logs the violation as PolicyReport.
  validationFailureAction: Enforce
  rules:
    - name: validate-image-registry
      # The match selector limits which resources this rule evaluates.
      # `any` is OR-of-conditions; `all` would be AND.
      match:
        any:
          - resources:
              kinds: ["Pod"]
              namespaces: ["policy-test-kv"]
      # validate.pattern is the Kyverno DSL for "the resource must match this shape".
      # `*` is glob wildcard, `|` is alternation (OR), `&` is AND.
      validate:
        message: "Images must come from ghcr.io or registry.k8s.io"
        pattern:
          spec:
            containers:
              # The shape says: every container's `image` field must match one
              # of these two glob patterns. Kyverno checks each container in the
              # array; if any one fails, the whole resource fails.
              - image: "ghcr.io/* | registry.k8s.io/*"

The Rego version is more expressive (you can write arbitrary logic, including external lookups, partial evaluation, and recursive rules). The Kyverno version is more readable (the structure of the policy mirrors the structure of the Pod resource it checks). Both ship the same denial in production. Which one your team can read at midnight on a Friday is the deciding factor.

Cleaning up

Drop the test namespaces and policies when you are done with the lab:

kubectl delete ns ${TEST_NS_GK} ${TEST_NS_KV}
kubectl delete clusterpolicy only-trusted-registries add-team-labels
kubectl delete k8sallowedrepos only-trusted-registries
kubectl delete constrainttemplate k8sallowedrepos
helm uninstall gatekeeper -n ${GK_NS}
helm uninstall kyverno -n ${KV_NS}
kubectl delete ns ${GK_NS} ${KV_NS}

Policy enforcement is one layer of cluster security; the foundation is Pod Security Standards for pod-shape rules and Kubernetes RBAC for who-can-do-what. Network policy lives in the CNI; the Cilium guide covers eBPF-based NetworkPolicies. For ingress-level policy on the same cluster, the Gateway API migration guide explains how the new ingress standard interacts with admission controllers, and the kubectl cheat sheet is the day-two reference for inspecting policies and reports across the cluster.