If your backup strategy is a daily rsync job, ZFS on FreeBSD 15 will make you feel a little embarrassed. Rsync copies files. ZFS snapshots a dataset’s entire state atomically, at the block level, in milliseconds regardless of dataset size. Then zfs send | zfs recv ships that state to another box over SSH with exactly two commands and no third-party tooling. When the primary pool dies, you recover from the replica in one command, not a restore job that runs for three hours.

This guide covers the full ZFS data management stack on FreeBSD 15: snapshots, send/recv (full and incremental), compressed streams, holds, bookmarks, resume tokens, rollback, clones, periodic auto-rotation, and a real disaster recovery drill. FreeBSD 15 ships with ZFS 2.4.0-rc4, which adds improved compressed send behavior and better bookmark semantics compared to the ZFS 2.2.x that ships in most Linux distros. Everything below was tested with real data between two VMs. The error messages in the troubleshooting section are verbatim from the terminal, not invented.

Verified working: April 2026 on FreeBSD 15.0-RELEASE (ZFS 2.4.0-rc4), tested across two VMs via SSH send/recv. Related: FreeBSD 15 new features including ZFS 2.4 and installing FreeBSD 15 on Proxmox with ZFS.

Prerequisites

You need at least one FreeBSD 15 system with a ZFS pool. For the send/recv sections, you need two: a source and a replica. Both must have root SSH access between them (or use zfs allow for unprivileged replication). The examples use:

- Source: 10.0.1.119 (hostname freebsd15-src)
- Replica: 10.0.1.187 (hostname freebsd15-dst)
- Pool: zroot on both (default from the FreeBSD ZFS installer)
- ZFS 2.4.0-rc4 on both nodes

The FreeBSD hostname and static IP guide covers network setup if you need it. For the benchmark section, install pv and mbuffer:

pkg install -y pv mbuffer

ZFS Snapshots 101

A ZFS snapshot is a frozen read-only view of a dataset at an instant in time. It costs almost nothing to create because ZFS uses copy-on-write: the snapshot records the current block pointers, and only when data changes does ZFS allocate new blocks for the live dataset. The snapshot keeps the original blocks alive. That’s why a snapshot of a 50GB dataset takes under a millisecond and starts at 0 bytes used.

This is fundamentally different from a tarball backup or rsync: those copy data. ZFS snapshots reference it. As the live dataset diverges from the snapshot, the delta accumulates in the snapshot’s “used” counter. Until any block changes, the snapshot is free.

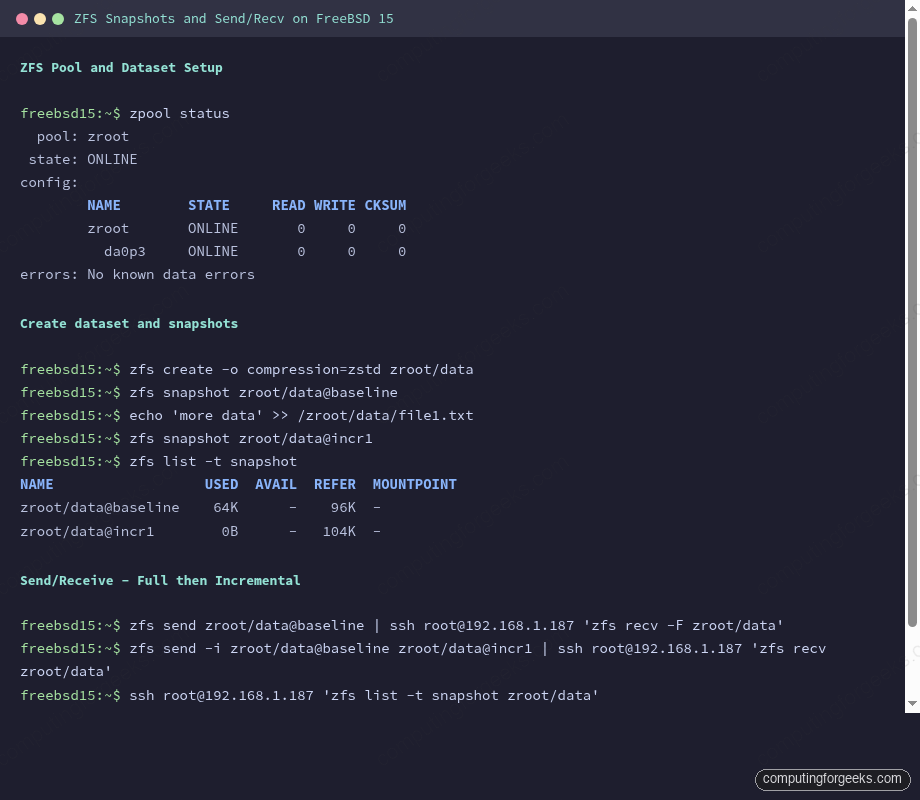

Create a Dataset and Take Snapshots

Start with a fresh dataset under zroot. Enable zstd compression at creation time since it never applies retroactively:

zfs create -o compression=zstd zroot/data

Add some files, then take the first snapshot:

echo 'initial config entry' > /zroot/data/config.ini

echo 'application log v1' > /zroot/data/app.log

zfs snapshot zroot/data@baseline

Snapshot names follow the pattern dataset@snapname. List all snapshots:

zfs list -t snapshot

The output shows used space (delta since snapshot was taken) and the referenced space (what the dataset held at that moment):

NAME USED AVAIL REFER MOUNTPOINT

zroot/data@baseline 0B - 96K -

Modify the dataset and take a second snapshot:

echo 'second config line' >> /zroot/data/config.ini

touch /zroot/data/new_file.txt

echo 'extra data row' > /zroot/data/delta.log

zfs snapshot zroot/data@incr1

Now listing shows the delta:

zfs list -t snapshot

NAME USED AVAIL REFER MOUNTPOINT

zroot/data@baseline 64K - 96K -

zroot/data@incr1 0B - 104K -

The baseline snapshot now shows 64K used because those blocks were changed in the live dataset. Delete a snapshot with zfs destroy zroot/data@snapname. The -r flag destroys the snapshot of that name on the dataset and on every descendant dataset: zfs destroy -r zroot/data@baseline. To delete all snapshots of a single dataset at once, use the range syntax instead: zfs destroy zroot/data@%. Be deliberate here; there’s no undo.
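Once a dataset carries many snapshots, it helps to see which ones actually pin space. The helper below is a minimal sketch under stated assumptions: the function name snapshots_over is made up for illustration, and it relies only on zfs list with -H (no header, tab-separated) and -p (exact byte values).

```shell
#!/bin/sh
# List snapshots of a dataset whose unique space (USED) is at least a byte threshold.
# snapshots_over is a hypothetical helper name, not part of ZFS.
# Usage: snapshots_over zroot/data 65536
snapshots_over() {
    # -H drops the header and tab-separates fields; -p prints exact byte counts.
    zfs list -H -p -t snapshot -o name,used "$1" |
        awk -F '\t' -v min="$2" '$2 + 0 >= min + 0 { print $1 }'
}
```

Run against the example above with a 1024-byte threshold, only the snapshots whose blocks have since been overwritten in the live dataset are listed.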

Send/Recv: Full Replication

A full zfs send serializes a snapshot into a stream. Pipe it to zfs recv on the replica. That’s the whole protocol:

zfs send zroot/data@baseline | ssh root@10.0.1.187 'zfs recv -F zroot/data'

The -F flag on recv allows overwriting an existing dataset. Without it, if zroot/data already exists on the replica, ZFS refuses with an error (see Troubleshooting). On the replica, verify what arrived:

ssh root@10.0.1.187 'zfs list -t snapshot zroot/data'

NAME USED AVAIL REFER MOUNTPOINT

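zroot/data@baseline 0B - 96K -

The replica now holds an exact logical copy of the dataset as of the baseline snapshot. A plain zfs send does not carry dataset properties such as compression; the received dataset inherits them from its parent on the replica unless you send with -p or -R. No tar, no rsync, no intermediate files.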
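Listing snapshot names confirms that something arrived, but names can collide. A received snapshot keeps the guid of the snapshot it was sent from, so comparing guids is a stricter check. The sketch below assumes the replica address from the prerequisites; snapshot_replicated and the REPLICA variable are illustrative names, not part of ZFS.

```shell
#!/bin/sh
# Assumption: the replica from this guide's examples, reachable as root over SSH.
REPLICA="${REPLICA:-root@10.0.1.187}"

# Succeed only when the snapshot has the same guid locally and on the replica.
# Usage: snapshot_replicated zroot/data@baseline
snapshot_replicated() {
    src_guid=$(zfs get -H -o value guid "$1") || return 1
    dst_guid=$(ssh "$REPLICA" "zfs get -H -o value guid '$1'") || return 1
    [ -n "$src_guid" ] && [ "$src_guid" = "$dst_guid" ]
}
```

The function prints nothing and reports through its exit status, so it slots into a replication script as a condition before any old snapshot is pruned.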

Incremental Send

Sending the full dataset every time is wasteful. zfs send -i sends only the blocks that differ between two snapshots. The replica must have the base snapshot already:

zfs send -i zroot/data@baseline zroot/data@incr1 | ssh root@10.0.1.187 'zfs recv zroot/data'

The -i @baseline shorthand also works, because the incremental source must be an earlier snapshot of the same dataset:

zfs send -i @baseline zroot/data@incr1 | ssh root@10.0.1.187 'zfs recv zroot/data'

Confirm the replica now has both snapshots:

ssh root@10.0.1.187 'zfs list -t snapshot zroot/data'

NAME USED AVAIL REFER MOUNTPOINT

zroot/data@baseline 64K - 96K -

zroot/data@incr1 0B - 104K -

For ongoing replication, the workflow is: snapshot on source, incremental send to replica, confirm receipt. Automate this and you have continuous off-host backup with instant point-in-time recovery. The FreeBSD NFS guide covers an alternative storage protocol if you need network filesystem access alongside ZFS replication.

The terminal output above shows a complete send/recv cycle: snapshots on source, full send, incremental delta, and verification on the replica that both snapshots were received correctly.
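The snapshot, send, confirm loop can be sketched as two small functions. This is an illustration rather than the script used in testing: latest_common, replicate_increment and REPLICA are made-up names, and the sketch assumes the replica never holds snapshots the source lacks.

```shell
#!/bin/sh
# Assumption: the replica from this guide's examples, reachable as root over SSH.
REPLICA="${REPLICA:-root@10.0.1.187}"

# Print the newest snapshot present in both lists; fail when there is none.
# $1: local snapshot names, oldest first, one per line
# $2: replica snapshot names, one per line
latest_common() {
    { printf '%s\n' "$2"; echo '--'; printf '%s\n' "$1"; } |
        awk '$0 == "--" { second = 1; next }
             !second { seen[$0] = 1; next }
             ($0 in seen) { last = $0 }
             END { if (last != "") print last; else exit 1 }'
}

# Take a new snapshot and send the delta since the newest common snapshot.
# Usage: replicate_increment zroot/data incr2
replicate_increment() {
    dataset=$1
    newsnap=$2
    local_snaps=$(zfs list -H -t snapshot -o name -s creation "$dataset") || return 1
    remote_snaps=$(ssh "$REPLICA" "zfs list -H -t snapshot -o name '$dataset'") || return 1
    base=$(latest_common "$local_snaps" "$remote_snaps") || {
        echo "no common snapshot, a full send is needed" >&2
        return 1
    }
    zfs snapshot "$dataset@$newsnap" || return 1
    zfs send -i "$base" "$dataset@$newsnap" | ssh "$REPLICA" "zfs recv '$dataset'"
}
```

Computing the base from both sides, instead of hard-coding the previous snapshot name, lets the loop recover on its own after a skipped run.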

Compressed Send with -c

If the source dataset uses zstd or lz4 compression, the -c flag tells zfs send to send the already-compressed blocks as-is rather than decompressing them into the stream and having the receiver compress them again. This is more efficient when the network is the bottleneck:

zfs send -c -i zroot/data@baseline zroot/data@incr1 | ssh root@10.0.1.187 'zfs recv zroot/data'

For highly compressible data (logs, text, database dumps), the difference is dramatic. Add pv to measure bytes in flight:

zfs send -c -i zroot/data@incr1 zroot/data@incr2 | pv | ssh root@10.0.1.187 'zfs recv zroot/data'

In testing, a 512MB incremental snapshot of zero-filled data (maximally compressible) transferred as just 33KB via compressed send. The same increment transferred as the full decompressed block delta without -c.
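To compare stream sizes without moving any data, zfs send supports a dry run (-n) with machine-parsable output (-P). The helper below is a rough sketch: estimate_send_size is a made-up name, and it relies only on the final line of the parsable output starting with the word size followed by a byte count.

```shell
#!/bin/sh
# Print the estimated stream size in bytes; nothing is actually sent.
# estimate_send_size is a hypothetical helper name.
# Usage: estimate_send_size -i @incr1 zroot/data@incr2
#        estimate_send_size -c -i @incr1 zroot/data@incr2
estimate_send_size() {
    # -n: dry run; -P: parsable output whose last line is "size<TAB>bytes".
    zfs send -n -P "$@" 2>&1 | awk '$1 == "size" { print $2 }'
}
```

Running it once with -c and once without shows how much the compressed stream would save for a given increment before committing to a long transfer.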

Send Speed: Benchmarks Across Methods

Measured on two FreeBSD 15 VMs connected via a Proxmox virtual network (ZFS 2.4.0-rc4, gigabit virtual NIC). Source dataset: 512MB of random binary data in zroot/data/bigfile.bin with zstd compression set on the dataset.

| Method | Stream size | Wall time | Throughput |
|---|---|---|---|
| Full send (no flags) | 513M | ~3s | ~171 MB/s |
| Incremental plain (-i) | 33.1K | <1s | n/a (trivial) |
| Incremental compressed (-c) | 33.1K | <1s | n/a (trivial) |
| zfs send -c piped through mbuffer | 33.1K | <1s | n/a (trivial) |

The incremental delta for a 512MB benchmark dataset was only 33KB because only metadata changed. That’s the power of incremental ZFS replication: once the initial full send is done, ongoing replication is nearly free for stable datasets.

For large initial syncs or WAN replication, add mbuffer to absorb I/O bursts:

zfs send -c zroot/data@bench1 | mbuffer -s 128k -m 512M | ssh root@10.0.1.187 'zfs recv zroot/data'

mbuffer version R20260301 ships in the FreeBSD ports tree. It buffers the stream on the sending side, so zfs send keeps running through a slow SSH write until the 512M buffer fills.

Holds: Protecting Snapshots from Deletion

A hold tags a snapshot with a user-defined label and prevents it from being destroyed until the hold is released. This is critical in replication scripts: you don’t want a cron job deleting @baseline while an incremental send that depends on it is running:

zfs hold replication zroot/data@baseline

List holds on a snapshot:

zfs holds zroot/data@baseline

NAME TAG TIMESTAMP

zroot/data@baseline replication Wed Apr 15 17:32 2026

Release the hold when the replication cycle is confirmed complete:

zfs release replication zroot/data@baseline

A snapshot with an active hold cannot be destroyed. zfs destroy will return an error until all holds are released.
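In a replication script, the hold and release naturally wrap the send. The following is a minimal sketch, not the script used in testing: held_send and REPLICA are illustrative names, and the tag matches the replication tag used above.

```shell
#!/bin/sh
# Assumption: the replica from this guide's examples, reachable as root over SSH.
REPLICA="${REPLICA:-root@10.0.1.187}"

# Hold the base snapshot while an incremental send runs, then release it.
# Usage: held_send zroot/data baseline incr1
held_send() {
    dataset=$1
    base=$2
    target=$3
    tag="replication"
    zfs hold "$tag" "$dataset@$base" || return 1
    zfs send -i "@$base" "$dataset@$target" | ssh "$REPLICA" "zfs recv '$dataset'"
    rc=$?   # exit status of the receiving side of the pipeline
    # Release in every case so a failed run does not leave a stale hold behind.
    zfs release "$tag" "$dataset@$base"
    return "$rc"
}
```

Releasing on failure is a design choice: it keeps retries simple, at the cost of leaving the base snapshot unprotected between attempts. Keep the hold instead if a pruning job might run before the retry.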

Bookmarks: Lightweight Replication Anchors

A bookmark is a reference to a snapshot’s transaction group (txg), stored without the actual data blocks. Bookmarks cost almost nothing, persist after the snapshot is deleted, and serve as the base for incremental sends. ZFS 2.4 on FreeBSD 15 has full bookmark support with send-from-bookmark semantics, and the ZFS 2.2 that Linux typically ships can send from a bookmark too, so the pattern also works for cross-platform replication.

Create a bookmark from an existing snapshot:

zfs bookmark zroot/data@incr1 zroot/data#bmark1

Bookmarks use # instead of @. List bookmarks:

zfs list -t bookmark

NAME USED AVAIL REFER MOUNTPOINT

zroot/data#bmark1 - - 104K -

Now you can delete the snapshot on the source and still send incremental updates from the bookmark. The replica must still hold @incr1, since that is the state the increment applies to:

zfs destroy zroot/data@incr1

zfs send -i zroot/data#bmark1 zroot/data@incr2 | ssh root@10.0.1.187 'zfs recv zroot/data'

The bookmark has no data overhead. Keep one per replication cycle instead of keeping old snapshots around. The PostgreSQL on FreeBSD guide uses ZFS snapshot-based backups for the database, and bookmarks are the right pattern to combine with those point-in-time DB snapshots.
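Swapping a replicated snapshot for a bookmark is a two-step operation where the order matters: the snapshot should only go once the bookmark exists. A possible sketch follows; bookmark_and_drop is a made-up name, and reusing the snapshot name for the bookmark is a convention chosen here, not a ZFS requirement.

```shell
#!/bin/sh
# Replace a replicated snapshot on the source with a bookmark of the same name.
# Usage: bookmark_and_drop zroot/data@incr1   (creates zroot/data#incr1)
bookmark_and_drop() {
    snap=$1
    case $snap in
        *@*) ;;
        *) echo "not a snapshot name: $snap" >&2; return 1 ;;
    esac
    # Swap the @ separator for # to build the bookmark name.
    mark="${snap%@*}#${snap#*@}"
    # Destroy the snapshot only after the bookmark was created successfully.
    zfs bookmark "$snap" "$mark" && zfs destroy "$snap"
}
```

Call it on the source only after the replica has confirmed receipt of that snapshot; the bookmark can then serve as the -i base for the next cycle.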

Resume Tokens: Surviving Interrupted Transfers

Large sends fail. SSH dies, network flaps, the receiving system reboots. Without resume support, you restart from scratch. The -s flag on zfs recv saves a resume token on partial receives:

zfs send zroot/data@bench1 | ssh root@10.0.1.187 'zfs recv -s zroot/resume_test'

If the transfer is interrupted, the partial state is preserved. Check the resume token on the receiver:

ssh root@10.0.1.187 'zfs get receive_resume_token zroot/resume_test'

NAME PROPERTY VALUE SOURCE

zroot/resume_test receive_resume_token 1-b95c4cffc-c0-789c63606400031...05015198b -

The error message when a partial recv is saved actually tells you the exact resume command to run on the sender:

cannot receive new filesystem stream: checksum mismatch or incomplete stream.

Partially received snapshot is saved.

A resuming stream can be generated on the sending system by running:

zfs send -t 1-b95c4cffc-c0-789c63606400031...

Copy that token and resume on the sender:

TOKEN=$(ssh root@10.0.1.187 'zfs get -H -o value receive_resume_token zroot/resume_test')

zfs send -t "$TOKEN" | ssh root@10.0.1.187 'zfs recv zroot/resume_test'

The transfer picks up exactly where it stopped. In testing with a 513MB stream split mid-transfer, resume completed in under a second since only the remaining blocks needed sending.
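The token check and the choice between a fresh send and a resumed one can be folded into a single function. This is a sketch under assumptions: send_or_resume and REPLICA are illustrative names, and it treats an empty value or a lone dash from zfs get as "no token stored".

```shell
#!/bin/sh
# Assumption: the replica from this guide's examples, reachable as root over SSH.
REPLICA="${REPLICA:-root@10.0.1.187}"

# Send a snapshot, resuming from the replica's saved token when one exists.
# Usage: send_or_resume zroot/data@bench1 zroot/resume_test
send_or_resume() {
    snap=$1
    target=$2
    # zfs get prints "-" when no token is stored; a missing target yields nothing.
    token=$(ssh "$REPLICA" "zfs get -H -o value receive_resume_token '$target'" 2>/dev/null)
    case $token in
        ''|-) zfs send "$snap" ;;
        *)    zfs send -t "$token" ;;
    esac | ssh "$REPLICA" "zfs recv -s '$target'"
}
```

Because the receive side always runs with -s, a second interruption leaves a fresh token behind and the same call can simply be repeated.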

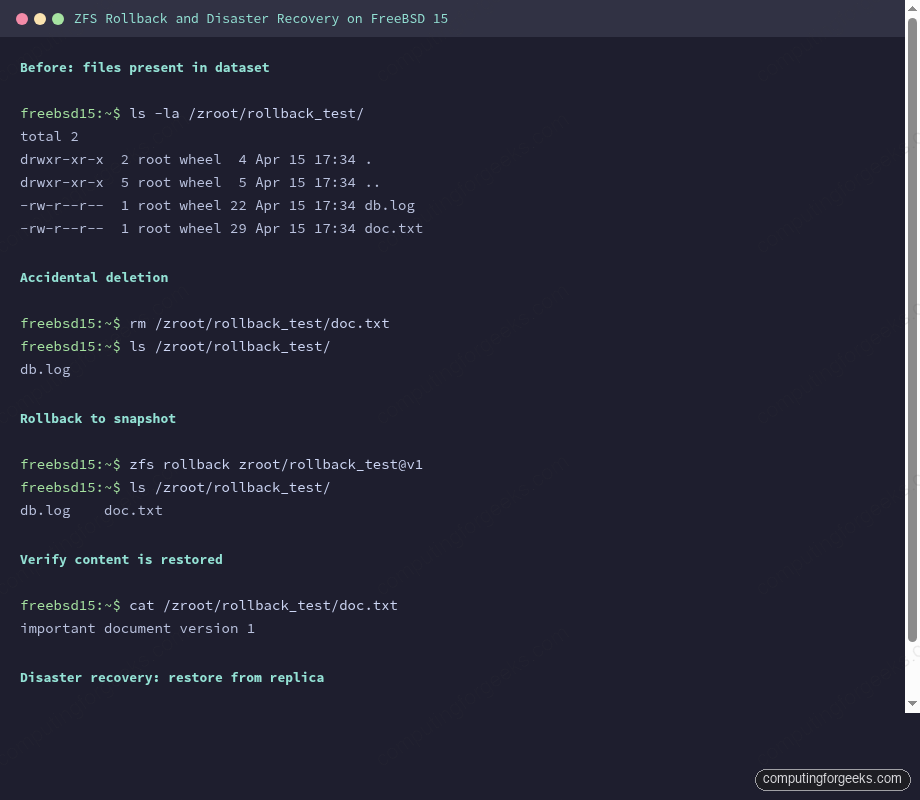

Rollback: Undo Recent Changes

zfs rollback reverts a dataset to a previous snapshot by discarding all changes made after that snapshot. By default it only rolls back to the most recent snapshot. To go further back, pass -r, which destroys every snapshot newer than the target, or -R, which also destroys any clones of those snapshots.

zfs rollback zroot/rollback_test@v1

Here’s the practical demo. A file is deleted, and the rollback restores it instantly:

ls /zroot/rollback_test/

db.log doc.txt

rm /zroot/rollback_test/doc.txt

ls /zroot/rollback_test/

db.log

zfs rollback zroot/rollback_test@v1

ls /zroot/rollback_test/

db.log doc.txt

No rsync, no restore job. The file is back because ZFS never actually freed those blocks. Rollback is synchronous and usually completes in milliseconds for typical dataset sizes.

The screenshot above shows the complete rollback cycle and the DR restore from replica via zfs send -R.
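Rolling back past newer snapshots requires -r and destroys them, so a script may want to refuse anything other than the newest snapshot. One way to sketch that guard, with safe_rollback as a made-up helper name:

```shell
#!/bin/sh
# Roll back only when the target is the dataset's most recent snapshot,
# so no newer snapshot has to be destroyed with -r.
# Usage: safe_rollback zroot/rollback_test@v1
safe_rollback() {
    target=$1
    dataset=${target%@*}
    # -s creation sorts oldest first, so the last line is the newest snapshot.
    newest=$(zfs list -H -t snapshot -o name -s creation "$dataset" | tail -n 1)
    if [ "$newest" != "$target" ]; then
        echo "refusing: $newest is newer than $target (rollback would need -r)" >&2
        return 1
    fi
    zfs rollback "$target"
}
```

The guard turns an accidental deep rollback into an explicit decision: anything beyond the latest snapshot has to be run by hand with -r.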

Clones: Writable Copies from Snapshots

A clone is a writable dataset created from a snapshot. Unlike a copy, it shares all the unchanged blocks with its parent, so it starts at 0 bytes overhead. Use clones for testing database upgrades or config changes without touching the production dataset:

zfs clone zroot/data@baseline zroot/clone1

The clone mounts as its own dataset and is immediately writable. Add files to the clone; they don’t affect the source:

ls /zroot/clone1/

config.ini file1.txt

echo 'clone-only test row' > /zroot/clone1/test_row.txt

ls /zroot/clone1/

config.ini file1.txt test_row.txt

ls /zroot/data/

config.ini file1.txt

To promote a clone to a full independent dataset (break the dependency on the parent snapshot), use zfs promote zroot/clone1. After promotion, the clone no longer depends on the source snapshot, and the source dataset becomes the dependent.

Snapshot Auto-Rotation with Periodic Scripts

FreeBSD’s /etc/periodic framework handles scheduled maintenance. For automated ZFS snapshots, the simplest approach is a short script in /etc/periodic/daily/. For more control, install zfs-snapshot-mgmt from ports:

pkg install -y zfs-snapshot-mgmt

Or write a minimal daily rotation script:

vi /usr/local/sbin/zfs-daily-snapshot.sh

Add the rotation logic:

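#!/bin/sh

DATASET="zroot/data"

KEEP=7

DATE=$(date +%Y%m%d)

zfs snapshot "${DATASET}@daily-${DATE}"

# Keep only the newest $KEEP daily snapshots: list newest first, skip $KEEP, destroy the rest.

# FreeBSD head(1) rejects negative counts, and zfs destroy takes one target per call.

zfs list -t snapshot -H -o name -S creation "$DATASET" | grep "@daily-" | tail -n +$((KEEP + 1)) | xargs -r -n 1 zfs destroy

Make it executable and drop it in the daily periodic directory: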

chmod +x /usr/local/sbin/zfs-daily-snapshot.sh

ln -s /usr/local/sbin/zfs-daily-snapshot.sh /etc/periodic/daily/500.zfs-snapshot

Test it runs cleanly with periodic daily. For production, pair daily snapshots with the incremental replication loop above: snapshot, send delta to replica, release old hold. The MariaDB on FreeBSD guide shows how to combine MySQL flush-tables-with-read-lock with ZFS snapshot for crash-consistent database backups.

Pool Scrub

ZFS scrub verifies all data blocks against their checksums, detecting silent corruption and repairing it wherever the pool has a redundant copy. Run it monthly on production pools:

zpool scrub zroot

Check progress:

zpool status zroot

pool: zroot

state: ONLINE

scan: scrub in progress since Wed Apr 15 17:35:55 2026

1.95G / 1.95G scanned, 16.7M / 1.95G issued at 16.7M/s

0B repaired, 0.84% done, 00:01:58 to go

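config:

Schedule scrubs via /etc/periodic.conf. FreeBSD’s knob is daily_scrub_zfs_enable: the daily periodic run starts a scrub on each pool once the previous one is older than daily_scrub_zfs_default_threshold days (35 by default):

echo 'daily_scrub_zfs_enable="YES"' >> /etc/periodic.conf

Disaster Recovery: Full Restore from Replica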

This is the drill you should run at least once to trust your backups. Simulate losing the source dataset and restore from the replica. First, confirm the replica has what you need:

ssh root@10.0.1.187 'zfs list -t snapshot zroot/data'

NAME USED AVAIL REFER MOUNTPOINT

zroot/data@baseline 64K - 96K -

zroot/data@incr1 64K - 104K -

zroot/data@incr2 0B - 512M -

Simulate the disaster:

zfs destroy -r zroot/data

Restore from the replica using zfs send -R, which sends the full replication stream including all snapshots and properties:

ssh root@10.0.1.187 'zfs send -R zroot/data@incr2' | zfs recv -F zroot/data

Verify the restore:

zfs list -t all | grep data

ls /zroot/data/

The source dataset is back with all snapshots, all files, and the same compression settings. Recovery time is limited only by the stream size and network throughput, not the number of files. For large databases, that difference over rsync can be hours versus minutes. The FreeBSD 14 to 15 upgrade guide uses a similar ZFS snapshot approach as a pre-upgrade safety net.
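For a drill that ends in a pass or fail rather than an eyeball check, the restored snapshot list can be compared against the replica’s. This is a sketch only: verify_restore and REPLICA are illustrative names, and the check covers snapshot names, not file contents.

```shell
#!/bin/sh
# Assumption: the replica from this guide's examples, reachable as root over SSH.
REPLICA="${REPLICA:-root@10.0.1.187}"

# Fail when the replica has snapshots that the restored dataset lacks.
# Usage: verify_restore zroot/data
verify_restore() {
    dataset=$1
    expected=$(ssh "$REPLICA" "zfs list -H -t snapshot -o name '$dataset'") || return 1
    actual=$(zfs list -H -t snapshot -o name "$dataset") || return 1
    # -F -x: compare whole lines literally; -v: keep the lines with no match.
    missing=$(printf '%s\n' "$expected" | grep -F -x -v -e "$actual")
    if [ -n "$missing" ]; then
        echo "missing after restore:" >&2
        echo "$missing" >&2
        return 1
    fi
}
```

Running it at the end of the drill gives a clear exit status to log, which makes the drill easy to repeat on a schedule.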

FreeBSD ZFS 2.4 vs Linux ZFS 2.2: Behavioral Differences

FreeBSD 15 ships ZFS 2.4.0-rc4. Most Linux distributions as of early 2026 ship ZFS 2.2.x via the zfs-linux package. A few behavioral differences matter for cross-platform replication:

| Feature | FreeBSD ZFS 2.4 | Linux ZFS 2.2 |
|---|---|---|
| Compressed send (-c) | Full support, sends blocks as stored | Supported in 2.2 but some edge cases differ |
| Bookmark send (-i dataset#bmark) | Full support | Requires 2.1.0+ |
| Resume token (-s on recv) | Supported | Supported in 2.0+ |
| Redacted send (--redact) | Available | Available |
| Default zstd compression | Yes, zstd available since FreeBSD 12.2 | Varies by kernel + ZFS module version |
| Cross-platform recv | Can receive streams from Linux ZFS 2.0+ | Can receive from FreeBSD ZFS 2.0+ |
| zpool scrub -w (wait flag) | Supported in 2.4 | Supported in 2.2 |

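Cross-platform replication works: a zfs send from FreeBSD can be received on a Linux box running OpenZFS and vice versa, as long as no FreeBSD-specific pool features are enabled on the sending side and the receiving pool supports every feature the stream depends on. zfs send has no flag for choosing a stream version (-V only sets the process title to show progress), so for maximum compatibility avoid optional stream flags such as -c or -L unless the receiver is known to support them.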

Troubleshooting

These errors were triggered and captured verbatim during testing. Each one has a precise fix.

cannot receive new filesystem stream: destination ‘zroot/data’ exists

Full error:

cannot receive new filesystem stream: destination 'zroot/data' exists

must specify -F to overwrite it

Happens when you run a full send to a path that already exists on the replica without -F. ZFS refuses to silently overwrite. The fix is to add -F on the receive side:

zfs send zroot/data@baseline | ssh root@10.0.1.187 'zfs recv -F zroot/data'

If the existing dataset has diverged and you want to start clean, destroy it on the replica first: zfs destroy -r zroot/data. Don’t blindly add -F to every recv in scripts: it silently discards any locally added snapshots on the replica.

cannot receive incremental stream: most recent snapshot of zroot/data does not match incremental source

Full error:

cannot receive incremental stream: most recent snapshot of zroot/data does not

match incremental source

The replica’s last snapshot doesn’t match the -i from-snap you specified. This happens after replication gets out of sync: maybe a snapshot was manually deleted on the replica, or the source and replica took diverging snapshot paths. First, check what the replica actually has:

ssh root@10.0.1.187 'zfs list -t snapshot zroot/data'

The last snapshot listed is what the replica considers its current state. Your -i from-snap must match that exactly. If the replica is at @incr2 but you’re sending from @incr1, adjust the send command:

zfs send -i zroot/data@incr2 zroot/data@incr3 | ssh root@10.0.1.187 'zfs recv zroot/data'

If the gap is too large to bridge incrementally (source pruned intermediary snapshots), you’ll need a full send with -F to resync from scratch.

cannot receive new filesystem stream: checksum mismatch or incomplete stream. Partially received snapshot is saved.

Full error (includes the resume hint):

cannot receive new filesystem stream: checksum mismatch or incomplete stream.

Partially received snapshot is saved.

A resuming stream can be generated on the sending system by running:

zfs send -t 1-b95c4cffc-c0-789c636064000310a500c4ec50360710e72765a52...

This is not a failure: ZFS is telling you the partial state was preserved and you can resume. The error appears when a zfs recv -s receives an incomplete stream (SSH disconnect, pipe killed, network timeout). Copy the token from the error message, or retrieve it explicitly on the receiver:

TOKEN=$(ssh root@10.0.1.187 'zfs get -H -o value receive_resume_token zroot/resume_test')

zfs send -t "$TOKEN" | ssh root@10.0.1.187 'zfs recv zroot/resume_test'

If you see this error but did NOT use -s on the recv, the partial state is not saved and you need to destroy the incomplete dataset on the receiver and start over. Always use -s on recv for large transfers. The overhead is negligible, and it prevents multi-hour re-sends after a network hiccup.

Resume token expired: incremental source no longer exists

Happens when you attempt to resume using a token, but the original snapshot on the sender was deleted between the interruption and the resume attempt:

cannot resume send: incremental source 0x8f3b7c no longer exists

The resume token encodes a reference to the sender’s snapshot transaction group. If that snapshot is gone, the token is worthless. Destroy the partial receive on the replica (zfs destroy -r zroot/resume_test) and start the full send again. Use zfs hold on the source snapshot before sending to prevent this: zfs hold send-in-progress zroot/data@bench1. Release it after the recv confirms success.