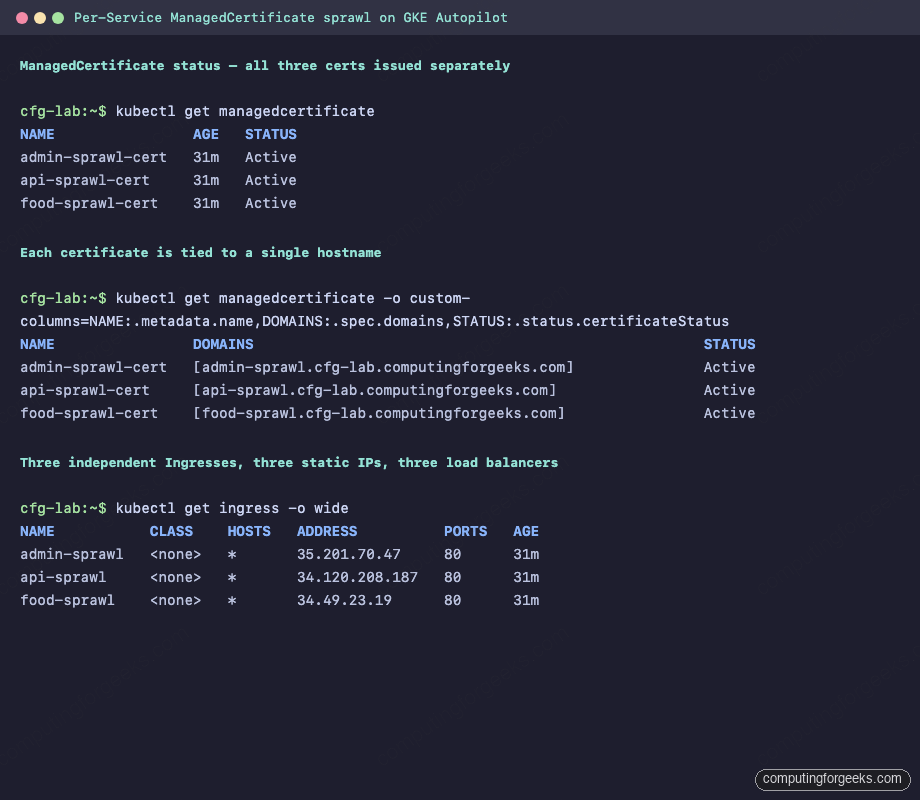

Run kubectl get managedcertificate -A on a mature GKE estate and the output should worry you. Every service that faced the public internet came with its own ManagedCertificate, its own Ingress, its own reserved IP, and its own Google Cloud load balancer. Multiply that by four environments and thirty services and you are paying for 120 forwarding rules, tracking 120 renewal lifecycles, and hoping none of them silently fails to re-issue.

This guide reproduces the sprawl pattern on a fresh GKE Autopilot cluster, attaches real cost figures, and sets up the problem the rest of the series solves. By the end of the demo you will have three services live at food-sprawl.cfg-lab.computingforgeeks.com, admin-sprawl.cfg-lab.computingforgeeks.com, and api-sprawl.cfg-lab.computingforgeeks.com, each with its own Google-managed certificate and its own load balancer. The later articles in the GCP + GitOps series replace this mess with a single shared load balancer, one wildcard certificate, and a cert map that scales to thousands of hostnames.

Tested April 2026 on GKE Autopilot (regular channel, Kubernetes 1.32), OpenTofu 1.11.5, kubectl 1.32, Google Cloud SDK 510.0.0 against project labs-491519 in europe-west1.

Why per-service ManagedCertificate wins the race to sprawl

GKE documentation leans toward the path of least resistance. The networking.gke.io/v1 ManagedCertificate CRD is three lines of YAML. Paired with a kubernetes.io/ingress.class: gce Ingress, it asks Google Cloud to provision a Global External HTTPS load balancer, issue a certificate once the hostname resolves to that load balancer (load balancer authorization, not DNS authorization), and attach it to the target proxy. No platform team review, no central IaC module, no shared infra ticket. Engineers ship HTTPS in an afternoon.

The problem shows up six months later, when the same pattern has been copied into every namespace. A single service with its own load balancer is fine. Thirty of them in four environments is a billing line that reads $2,191 per month before a single request is served. It is also a rotation risk: every cert renews on its own schedule, and the only way to notice a stuck renewal is to wait for TLS errors in production.

Prerequisites

- An empty GCP project with billing enabled. The demo uses labs-491519.

- A public domain with at least one subzone delegated to Google Cloud DNS. The demo uses cfg-lab.computingforgeeks.com. If you need a delegation walkthrough, the next article in this series covers it end-to-end.

- Tools installed locally: OpenTofu 1.11+ (or Terraform 1.5+), Terragrunt 0.97+, kubectl 1.32+, gcloud SDK, dig, openssl.

- A service account with roles/container.admin, roles/compute.networkAdmin, and roles/dns.admin on the project.

Create the VPC and GKE Autopilot cluster

The Terragrunt stack that drives this demo lives at github.com/cfg-labs/gcp-shared-traffic-demo, tag article-01. Clone it and bring up the base cluster. Autopilot provisions nodes on demand, so the only infrastructure cost at rest is the control plane and whatever load balancers you attach.

git clone https://github.com/cfg-labs/gcp-shared-traffic-demo.git
cd gcp-shared-traffic-demo
git checkout article-01

The VPC module carves a /20 subnet in europe-west1 with secondary ranges for GKE pods and services, plus Cloud NAT so private nodes reach the internet for container pulls. Bring it up first.

export TG_TF_PATH=tofu TERRAGRUNT_TFPATH=tofu
cd infra/live/article-lab/europe-west1/vpc
terragrunt apply -auto-approve

Now the GKE Autopilot cluster. Expect the apply to sit for 8 to 12 minutes while Google provisions the control plane.

cd ../gke
terragrunt apply -auto-approve

Once the cluster reports RUNNING, pull credentials into the local kubeconfig and confirm API access.

gcloud container clusters get-credentials cfg-lab-gke \
  --region=europe-west1 --project=labs-491519
kubectl cluster-info

Reserve three global static IPs

Each sprawl service needs a stable IP so the DNS A record does not chase an ephemeral LB address. This is also the pattern that drives the cost problem: three reserved IPs means three load balancers, because GKE Ingress provisions one LB per Ingress resource.

for svc in food-sprawl admin-sprawl api-sprawl; do
  gcloud compute addresses create "${svc}-ip" --global \
    --project=labs-491519 --ip-version=IPV4
done
gcloud compute addresses list --global --project=labs-491519

Point DNS A records at those IPs in the delegated Cloud DNS zone. Without this, Google cannot validate the domain, and the ManagedCertificate never leaves Provisioning.

gcloud dns record-sets create food-sprawl.cfg-lab.computingforgeeks.com. \
  --type=A --ttl=300 --rrdatas="10.0.1.50" \
  --zone=cfg-lab --project=labs-491519
gcloud dns record-sets create admin-sprawl.cfg-lab.computingforgeeks.com. \
  --type=A --ttl=300 --rrdatas="10.0.1.51" \
  --zone=cfg-lab --project=labs-491519
gcloud dns record-sets create api-sprawl.cfg-lab.computingforgeeks.com. \
  --type=A --ttl=300 --rrdatas="10.0.1.52" \
  --zone=cfg-lab --project=labs-491519

Replace the example RFC 1918 addresses in the commands above with the actual reserved IPs from the previous step. The three A records should resolve via any public recursive resolver within a couple of minutes.
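One way to avoid hand-copying addresses is to read each reservation back from Compute Engine and refuse to publish a private address. The sketch below assumes the reservation names, zone, and project used in this demo; is_rfc1918 and create_sprawl_records are hypothetical helper names, not part of the companion repo.

```shell
# Sketch only: helper names are hypothetical; project, zone and domain are the demo's values.

# Return 0 when the IPv4 address falls in an RFC 1918 private range.
is_rfc1918() {
  case "$1" in
    10.*|192.168.*) return 0 ;;
    172.1[6-9].*|172.2[0-9].*|172.3[01].*) return 0 ;;
    *) return 1 ;;
  esac
}

# Read each reserved global IP and create the matching A record.
create_sprawl_records() {
  local svc ip
  for svc in food-sprawl admin-sprawl api-sprawl; do
    ip=$(gcloud compute addresses describe "${svc}-ip" --global \
      --project=labs-491519 --format="value(address)")
    # Stop if the lookup returned nothing or a private placeholder address.
    if [ -z "$ip" ] || is_rfc1918 "$ip"; then
      echo "refusing to publish '${ip}' for ${svc}" >&2
      return 1
    fi
    gcloud dns record-sets create "${svc}.cfg-lab.computingforgeeks.com." \
      --type=A --ttl=300 --rrdatas="$ip" \
      --zone=cfg-lab --project=labs-491519
  done
}
```

Calling create_sprawl_records after the reservation loop creates all three records. The guard exists because a private address in a public A record would leave the certificates stuck in Provisioning.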

Deploy the three services, each with its own cert and LB

The manifest for food-sprawl bundles everything the pattern needs: a two-replica Deployment running Google's hello-app:2.0 sample image, a NodePort Service with the NEG annotation, a ManagedCertificate tied to a single hostname, and an Ingress wired to the reserved static IP. The other two services are identical with the name substituted.

apiVersion: apps/v1
kind: Deployment
metadata:
  name: food-sprawl
spec:
  replicas: 2
  selector:
    matchLabels:
      app: food-sprawl
  template:
    metadata:
      labels:
        app: food-sprawl
    spec:
      containers:
        - name: hello
          image: us-docker.pkg.dev/google-samples/containers/gke/hello-app:2.0
          ports:
            - containerPort: 8080
          resources:
            requests:
              cpu: 100m
              memory: 128Mi
---
apiVersion: v1
kind: Service
metadata:
  name: food-sprawl
  annotations:
    cloud.google.com/neg: '{"ingress": true}'
spec:
  type: NodePort
  selector:
    app: food-sprawl
  ports:
    - port: 80
      targetPort: 8080
---
apiVersion: networking.gke.io/v1
kind: ManagedCertificate
metadata:
  name: food-sprawl-cert
spec:
  domains:
    - food-sprawl.cfg-lab.computingforgeeks.com
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: food-sprawl
  annotations:
    kubernetes.io/ingress.class: "gce"
    kubernetes.io/ingress.global-static-ip-name: "food-sprawl-ip"
    networking.gke.io/managed-certificates: "food-sprawl-cert"
    kubernetes.io/ingress.allow-http: "false"
spec:
  defaultBackend:
    service:
      name: food-sprawl
      port:
        number: 80

Apply all three manifests. The deployments come up in seconds, but the ManagedCertificates typically need 15 to 30 minutes to reach Active while Google validates each domain through its load balancer and issues the cert through Google Trust Services.

kubectl apply -f apps/article-01/food-sprawl.yaml
kubectl apply -f apps/article-01/admin-sprawl.yaml
kubectl apply -f apps/article-01/api-sprawl.yaml
kubectl get managedcertificate

While the certs provision, grab a coffee. When they flip to Active, the output looks like this.

Inspect the evidence of sprawl

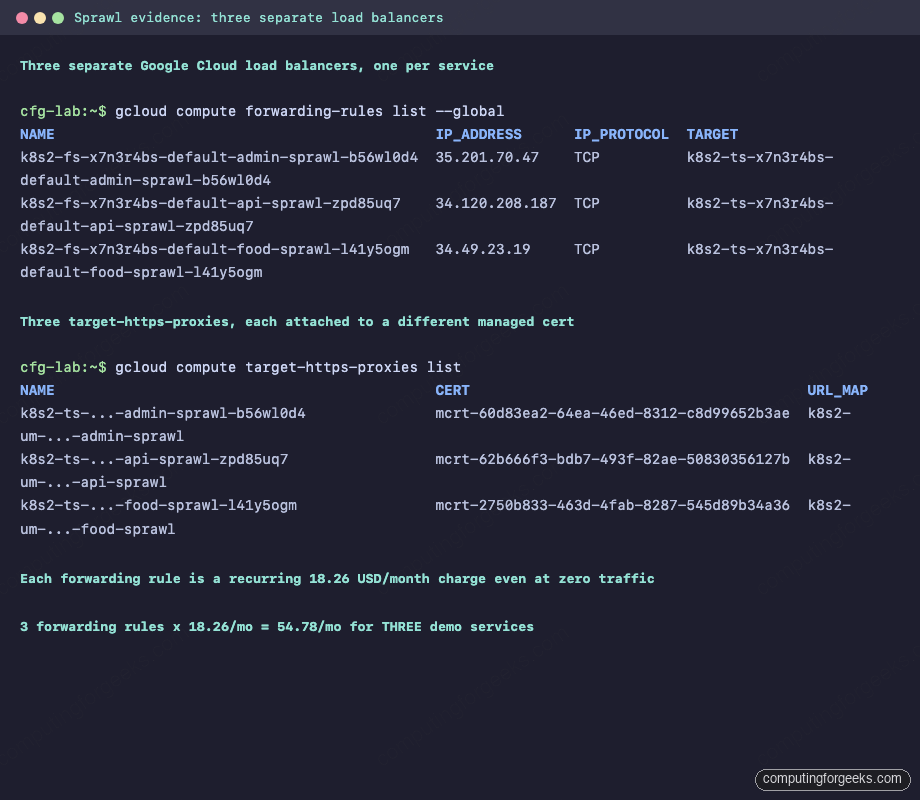

GKE Ingress quietly provisioned a full load balancer per service. Ask Google Cloud what it actually built.

gcloud compute forwarding-rules list --global
gcloud compute target-https-proxies list
gcloud compute url-maps list

Three forwarding rules. Three target HTTPS proxies. Three URL maps. Three backend services. Three certificates. Everything that should be shared is not shared.

What the sprawl actually costs

Google Cloud pricing (October 2025 sheet) charges $0.025 per hour per forwarding rule, which rounds to $18.26 per month per load balancer, plus $0.008 per GB of processed data and per-rule data-processing charges above the first 5 rules. The three demo services alone cost $54.78 per month just to exist. Now do the math on a realistic estate.

| Scale | Services × Envs | Forwarding rules | Monthly LB cost |

|---|---|---|---|

| Hobby project | 3 × 1 = 3 | 3 | $54.78 |

| Small team | 10 × 2 = 20 | 20 | $365.20 |

| Growth stage | 30 × 4 = 120 | 120 | $2,191.20 |

| Platform team | 100 × 4 = 400 | 400 | $7,304.00 |

That is pure LB-existence cost. It does not include data processing, egress, Cloud Armor, or cert-issuance overhead. The GCP cost traps deep-dive breaks this out further. Consolidating onto a single shared Global External HTTPS LB with a Certificate Map brings the LB line to one forwarding rule for the entire fleet, which is the shape of the architecture covered later in this series.
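The table's arithmetic can be reproduced for any estate size. This is a minimal sketch that takes the article's rounded $18.26 per load balancer per month as a constant rather than reading it from a pricing API; the function names are made up for illustration.

```shell
# Sketch: reproduces the table's arithmetic. The 18.26 figure is the article's
# rounded monthly cost per load balancer, not a value fetched from Google.
LB_MONTHLY_USD="18.26"

# lb_monthly_cost SERVICES ENVIRONMENTS -> monthly forwarding-rule cost in USD
lb_monthly_cost() {
  awk -v s="$1" -v e="$2" -v unit="$LB_MONTHLY_USD" \
    'BEGIN { printf "%.2f\n", s * e * unit }'
}

# sprawl_savings SERVICES ENVIRONMENTS -> cost avoided by keeping one shared LB
sprawl_savings() {
  awk -v s="$1" -v e="$2" -v unit="$LB_MONTHLY_USD" \
    'BEGIN { printf "%.2f\n", (s * e - 1) * unit }'
}
```

For the growth-stage row, lb_monthly_cost 30 4 prints 2191.20 and sprawl_savings 30 4 prints 2172.94, the forwarding-rule spend that disappears when 120 rules collapse to one.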

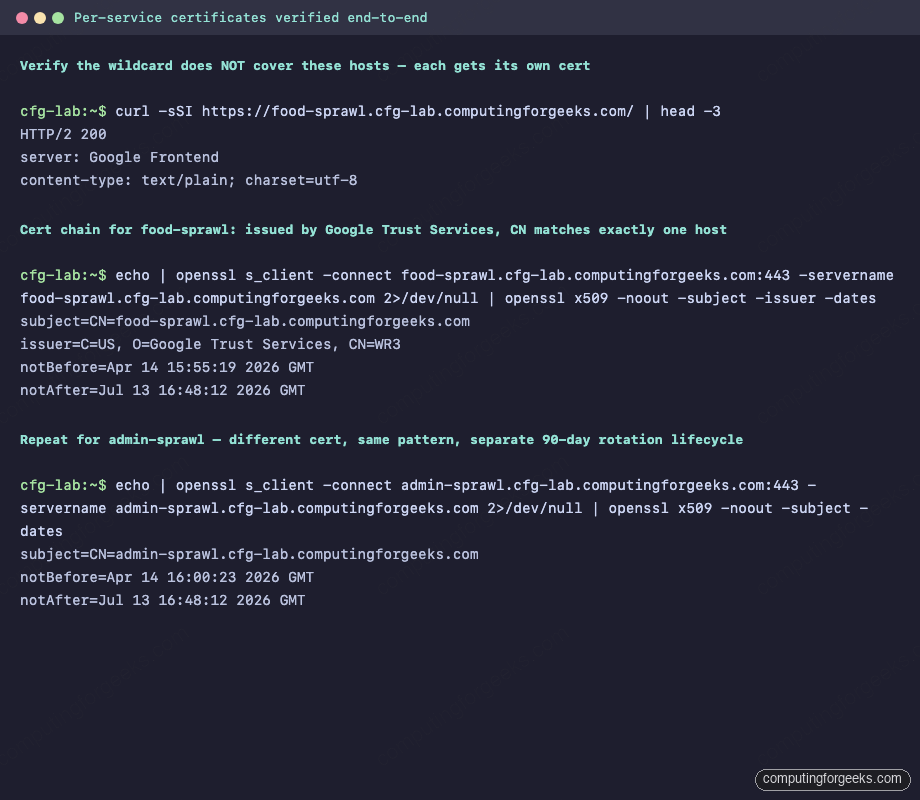

Verify real HTTPS, real cert chain, real sprawl

All three hostnames resolve and serve HTTPS with certificates issued by Google Trust Services. That is the proof the per-service pattern works, and also the proof that every 90-day rotation runs as three independent lifecycles.

for host in food-sprawl admin-sprawl api-sprawl; do
  echo "--- ${host} ---"
  curl -sSI "https://${host}.cfg-lab.computingforgeeks.com/" | head -3
  echo "" | openssl s_client \
    -connect "${host}.cfg-lab.computingforgeeks.com:443" \
    -servername "${host}.cfg-lab.computingforgeeks.com" 2>/dev/null \
    | openssl x509 -noout -subject -issuer -dates
done

Each cert names exactly one host. Subject CN for food-sprawl is food-sprawl.cfg-lab.computingforgeeks.com and nothing else. If a fourth service gets added tomorrow, the pattern forces a fourth cert, a fourth LB, another $18.26 per month, and another 90-day rotation to track.
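Since each certificate runs on its own clock, it can help to turn the notAfter date into a number you can alert on. The following is a sketch under the assumption that GNU date is available (Linux); days_left and cert_not_after are illustrative names, not tools from the companion repo.

```shell
# Sketch: assumes GNU date (Linux). BSD/macOS date needs different flags.

# days_left "NOT_AFTER" [NOW_EPOCH] -> whole days until the notAfter timestamp
days_left() {
  local end_epoch now_epoch
  end_epoch=$(date -u -d "$1" +%s) || return 1
  now_epoch=${2:-$(date -u +%s)}
  echo $(( (end_epoch - now_epoch) / 86400 ))
}

# cert_not_after HOST -> notAfter value of the certificate served on port 443
cert_not_after() {
  echo "" | openssl s_client -connect "$1:443" -servername "$1" 2>/dev/null \
    | openssl x509 -noout -enddate | cut -d= -f2
}
```

A typical call would be days_left "$(cert_not_after food-sprawl.cfg-lab.computingforgeeks.com)", repeated per hostname, which is exactly the per-service bookkeeping the sprawl pattern forces on you.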

How this goes wrong at scale

- Inventory blindness. Without a central cert registry, nobody knows how many ManagedCertificates exist until they run gcloud compute ssl-certificates list across every project. On a 250-project estate that is a 20-minute query that produces a 4,000-line CSV.

- Rotation storms. When a CA revokes a root (Let’s Encrypt did this in 2024, Google Trust Services has announced the WE1/WR1 to WR3 migration), every cert must reissue. 120 separate issuances means 120 separate chances for a single one to fail silently.

- Compliance drift. PCI-DSS wants financial cert chains isolated from general public traffic. Per-service sprawl makes that isolation impossible without a costly second migration later.

- Pinning is impossible. Mobile apps that want to pin the certificate of a financial API cannot pin against a cert that renews independently and opaquely. The OWASP pinning guidance assumes the server operator controls cert rotation. GKE ManagedCertificate does not give you that control.

When per-service is actually fine

Small deployments with fewer than ten public services, all in one project, with no compliance boundaries, do not need consolidation. The ManagedCertificate pattern is genuinely simple and it works. The trouble starts when the team grows, the environment count grows, or a regulated workload lands in the same cluster as the general public services. At that point the cost and risk of sprawl become visible in the billing dashboard and the audit findings.

How to prove you have the problem

Run this audit script against your own org. It counts the Compute Engine SSL certificates, Google-managed ones included, in every project you have access to.

for project in $(gcloud projects list --format="value(projectId)"); do
  # Projects without the Compute API enabled return an error; treat them as zero.
  count=$(gcloud compute ssl-certificates list --project="$project" --format="value(name)" 2>/dev/null | wc -l)
  if [ "$count" -gt 0 ]; then
    printf "%-40s %s\n" "$project" "$count SSL certificates"
  fi
done

The published audit-certs.sh script in the companion repo does the same plus a kubectl get managedcertificate -A against every GKE cluster it finds. Anything over 20 certs in a single project is a strong consolidation candidate.
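The 20-certificate rule of thumb can be applied mechanically to the audit output. This is a small sketch that assumes the two-column "project count ..." line format printed by the loop above; flag_candidates is a hypothetical name.

```shell
# flag_candidates [THRESHOLD]
# Reads audit lines ("<project> <count> SSL certificates") on stdin and prints
# the projects whose count exceeds the threshold (default 20).
flag_candidates() {
  awk -v limit="${1:-20}" '$2 + 0 > limit + 0 { print $1 }'
}
```

Pipe the audit loop into flag_candidates, or pass a different threshold such as flag_candidates 50 for a larger estate.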

What you should understand after this

- Each GKE Ingress with a GCE class provisions its own Global External HTTPS load balancer. There is no implicit sharing.

- Each ManagedCertificate tied to a single hostname renews on its own 90-day clock with no central visibility.

- Three sprawl services cost $54.78 per month in forwarding-rule charges alone. Scale linearly from there.

- The pattern blocks compliance isolation for regulated workloads and makes cert pinning impossible.

- The fix is Certificate Manager with DNS-authorized wildcard certs, attached to a shared Global External HTTPS LB via Certificate Maps. That is the subject of the next four articles in this series.

Leave the three sprawl services running. The next article delegates a Cloud DNS subzone with DNSSEC and CAA, which is the foundation for the wildcard cert issued in article three and the shared load balancer built in article four. Everything in this demo gets replaced one article at a time until the final state in article ten runs a real multi-service app through a single consolidated stack with zero-incident rotation.

FAQ

Can I just use one ManagedCertificate with multiple domains instead?

Yes, a ManagedCertificate accepts up to 100 non-wildcard domains in a single resource. That solves the cert-count problem but not the load-balancer-count problem, because each Ingress still provisions its own LB. The real consolidation needs a Certificate Map attached to a shared Global External HTTPS LB, which is what the later articles build.
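As an illustration of the multi-domain option, the sketch below renders a single ManagedCertificate manifest from a list of hostnames and enforces the two limits mentioned above (at most 100 domains, no wildcards). render_managed_cert and the certificate name sprawl-cert are hypothetical.

```shell
# render_managed_cert NAME HOST... -> ManagedCertificate manifest on stdout
render_managed_cert() {
  local name=$1 host
  shift
  if [ "$#" -eq 0 ] || [ "$#" -gt 100 ]; then
    echo "need between 1 and 100 hostnames" >&2
    return 1
  fi
  for host in "$@"; do
    case "$host" in
      *\**) echo "wildcards are not supported: $host" >&2; return 1 ;;
    esac
  done
  printf 'apiVersion: networking.gke.io/v1\n'
  printf 'kind: ManagedCertificate\n'
  printf 'metadata:\n  name: %s\n' "$name"
  printf 'spec:\n  domains:\n'
  # printf repeats the format once per remaining argument.
  printf '    - %s\n' "$@"
}
```

The output can be piped to kubectl apply -f - once the hostnames resolve to the Ingress IP. It reduces the certificate count only; every Ingress still gets its own load balancer.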

Why does the ManagedCertificate stay in Provisioning status for so long?

Domain validation waits for the A record to resolve publicly to the load balancer’s IP and for the internal propagation delay in Google’s validation infrastructure. Fifteen to thirty minutes is normal. Longer than that usually means the A record does not resolve (check with dig +short A your-host @8.8.8.8), the Ingress has not been reconciled yet, or the kubernetes.io/ingress.global-static-ip-name annotation points at a non-existent reservation.
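Rather than re-running kubectl by hand, the wait can be scripted. The sketch below assumes the certificate state is exposed at .status.certificateStatus, which is how the networking.gke.io/v1 CRD reports it as far as I know; confirm against kubectl get managedcertificate -o yaml on your cluster. The function names and the 45-minute cap are arbitrary choices.

```shell
# all_active: reads one certificateStatus per line on stdin and succeeds only
# when at least one line is present and every line is "Active".
all_active() {
  awk 'NF { seen = 1; if ($1 != "Active") bad = 1 }
       END { if (seen && !bad) exit 0; exit 1 }'
}

# wait_for_certs [NAMESPACE]: polls once a minute, for up to 45 minutes.
wait_for_certs() {
  local ns=${1:-default} tries=0
  while [ "$tries" -lt 45 ]; do
    if kubectl get managedcertificate -n "$ns" \
        -o jsonpath='{range .items[*]}{.status.certificateStatus}{"\n"}{end}' \
        | all_active; then
      echo "all certificates Active"
      return 0
    fi
    tries=$((tries + 1))
    sleep 60
  done
  echo "timed out; inspect with: kubectl describe managedcertificate -n $ns" >&2
  return 1
}
```

If wait_for_certs times out, work through the three causes listed above before deleting and recreating anything.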

Does ManagedCertificate support wildcard domains?

No. The Kubernetes-native networking.gke.io/v1 ManagedCertificate CRD does not support wildcards. Wildcards require Google Cloud Certificate Manager with a DNS authorization, which is a separate product attached at the Ingress or Gateway layer. Article 3 of this series covers that end to end.

How do I clean up the three demo services when I am done?

Delete the manifests, then release the reserved IPs and DNS records. The companion repo lists the exact cleanup commands in apps/article-01/README.md. The GKE Autopilot cluster can stay running for the rest of the series since later articles reuse it.